Pinned Tweet

Ben Anderson

242 posts

@anchorstack_dev

Senior engineer. Building things that actually work, fixing things that don't. I fix vibe-coded apps so they hold up when your users need them.

San Antonio, TX, USA · Joined February 2026

238 Following · 75 Followers

@plainionist One pattern I find myself following is:

1) automated unit tests

2) automated integration tests

3) manual smoke tests to confirm the changed code still works as intended.

@GergelyOrosz I think one of the joys of writing is producing something in your own voice. To me, writing with AI feels like cheating way more than coding with AI does.

In theory, AI should make writing easier, so expressing ideas should be a lot easier.

And yet, I don't see many more things worth reading. Sure, there's a lot more junk. But I don't see more interesting eng blogs, personal tech-related blogs, etc.

What is going on?

(Is this a discoverability issue? Or are people and teams really not writing or sharing much more?)

@KaiXCreator Can you call yourself a founder if your whole product was built by employees?

@zuess05 It's scary how accurate this is. I think standards that make this easier will emerge over time.

@karpathy I have a hard time believing HTML beats markdown, but I will give it a try.

The tradeoff is being able to write and comment on specific lines in the markdown. I don't think we are there yet with HTML, but I could be swayed. (This is basically Claude Design.)

This works really well, btw: at the end of your query, ask your LLM to "structure your response as HTML", then view the generated file in your browser. I've also had some success asking the LLM to present its output as slideshows, etc.

More generally, imo audio is the human-preferred input to AIs but vision (images/animations/video) is the preferred output from them. Around a third of our brain is a massively parallel processor dedicated to vision; it is the 10-lane superhighway of information into the brain. As AI improves, I think we'll see a progression that takes advantage:

1) raw text (hard/effortful to read)

2) markdown (bold, italic, headings, tables, a bit easier on the eyes) <-- current default

3) HTML (still procedural with underlying code, but a lot more flexibility on the graphics, layout, even interactivity) <-- early but forming new good default

...4,5,6,...

n) interactive neural videos/simulations

Imo the extrapolation (though the technology doesn't exist just yet) ends in some kind of interactive videos generated directly by a diffusion neural net. Many open questions as to how exact/procedural "Software 1.0" artifacts (e.g. interactive simulations) may be woven together with neural artifacts (diffusion grids), but generally something in the direction of the recently viral x.com/zan2434/status…

There are also improvements necessary and pending at the input. Neither audio nor text nor video alone is enough; e.g. I feel a need to point or gesture at things on the screen, the way you would with a person physically next to you and your computer screen.

TLDR: The input/output mind meld between humans and AIs is ongoing, and there is a lot of work to do and significant progress to be made, way before jumping all the way into Neuralink-esque BCIs and all that. As for what's worth exploring at the current stage, hot tip: try asking for HTML.
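A minimal sketch of the "view it in your browser" step, assuming you already have the model's reply as a string (how you call the model is left out). The `save_html` name and the fence-unwrapping are illustrative choices, not part of the tip itself; `re`, `tempfile`, and `webbrowser` are Python standard library.

```python
import re
import tempfile
import webbrowser
from pathlib import Path

# Matches a fenced code block, optionally tagged "html"; `{3} means three backticks.
FENCE = re.compile(r"`{3}(?:html)?[ \t]*\n(.*?)`{3}", re.DOTALL)


def save_html(llm_output: str, open_in_browser: bool = True) -> Path:
    """Write an LLM's HTML answer to a .html file and optionally open it.

    Models often wrap the page in a fenced html code block, so unwrap the
    first such block if present; otherwise keep the output as-is.
    """
    match = FENCE.search(llm_output)
    html = match.group(1) if match else llm_output
    with tempfile.NamedTemporaryFile(
        "w", suffix=".html", delete=False, encoding="utf-8"
    ) as f:
        f.write(html)
        path = Path(f.name)
    if open_in_browser:
        webbrowser.open(path.as_uri())  # opens in the system's default browser
    return path
```

Passing `open_in_browser=False` just returns the file path, which is handy if you want to keep the generated pages around instead of viewing them immediately.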

Thariq @trq212

@SimonHoiberg How do you ensure quality in the code produced by your agents?

In the past 3 weeks, I've pushed:

- +25 commits

- +4300 lines of code

On average, every day.

And I haven't opened VS Code (or any other code editor) one single time.

All I do is talk to my agents through voice on Telegram. And I'm more productive than ever when I don't need to actually code.

I had no idea we'd get here this fast.

5 production gaps I find in almost every vibe-coded app:

1) No idempotency on payment webhooks

2) Secrets in git history

3) Schema lives in the dashboard, not the repo

4) No structured logging so failures are invisible

5) Auth checked on the client, not the server

Fix these before launch. Everything else can wait.
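For gap 1, a minimal sketch of an idempotent webhook handler, assuming the provider sends a unique event id in the payload's `id` field (Stripe's events do, and Stripe notes an endpoint can receive the same event more than once). SQLite stands in for your real database, `fulfill` is a hypothetical callback, and signature verification is left out for brevity even though a production handler needs it.

```python
import json
import sqlite3


def make_store() -> sqlite3.Connection:
    """In-memory store for illustration; use your real database in production."""
    conn = sqlite3.connect(":memory:")
    # The PRIMARY KEY makes "have we already seen this event?" an atomic check.
    conn.execute("CREATE TABLE processed_events (event_id TEXT PRIMARY KEY)")
    return conn


def handle_webhook(conn: sqlite3.Connection, raw_body: str, fulfill) -> str:
    """Process a payment webhook at most once per event id.

    `fulfill` is a hypothetical callback (e.g. grant access, mark order paid).
    """
    event = json.loads(raw_body)
    event_id = event["id"]
    # One transaction: commit on success, roll back if fulfill raises, so a
    # retried delivery is not mistaken for a duplicate. (Side effects outside
    # this database are not rolled back.)
    with conn:
        cur = conn.execute(
            "INSERT OR IGNORE INTO processed_events (event_id) VALUES (?)",
            (event_id,),
        )
        if cur.rowcount == 0:
            return "duplicate"  # already handled: acknowledge and do nothing
        fulfill(event)
    return "processed"
```

The key design choice is recording the event id and doing the work in the same transaction, so a failed attempt can be retried while a successful one can't run twice.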

@asaio87 I agree to a certain extent. With proper context separation and architecture, they are still useful.

However, if you are at the point where you are just prompting Copilot, that is bad. We need to get to the point where developers define clear specs and implement from there.

I was looking at an interesting architecture the other day where they were running a couple of fine tunes for quick easy inference and using the frontier models for handling conversation turns.

I think the truth is that this is more sophisticated than 99% of the setups out there. Most people have a single API call to OpenAI or Anthropic.

You've inspired me to start thinking about self-hosting a small model at home. 🤔
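A minimal sketch of that split, assuming hypothetical `small` and `frontier` callables stand in for a self-hosted fine-tune and a hosted frontier model. The task names in `CHEAP_TASKS` are illustrative, not taken from the architecture described above.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical model clients: each takes a prompt and returns text.
ModelFn = Callable[[str], str]

# Narrow tasks assumed cheap enough for a small fine-tune; tune against your evals.
CHEAP_TASKS = frozenset({"classify", "route", "summarize", "extract"})


@dataclass
class Router:
    small: ModelFn     # e.g. a self-hosted fine-tune for quick inference
    frontier: ModelFn  # e.g. a hosted frontier model

    def pick(self, task: str) -> ModelFn:
        """Send narrow, well-defined tasks to the small model; everything
        else, including open-ended conversation turns, to the frontier model."""
        return self.small if task in CHEAP_TASKS else self.frontier

    def run(self, task: str, prompt: str) -> str:
        return self.pick(task)(prompt)
```

Keeping the routing rule in one place means you can move a task from the frontier model to the small one (or back) without touching the callers.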

English

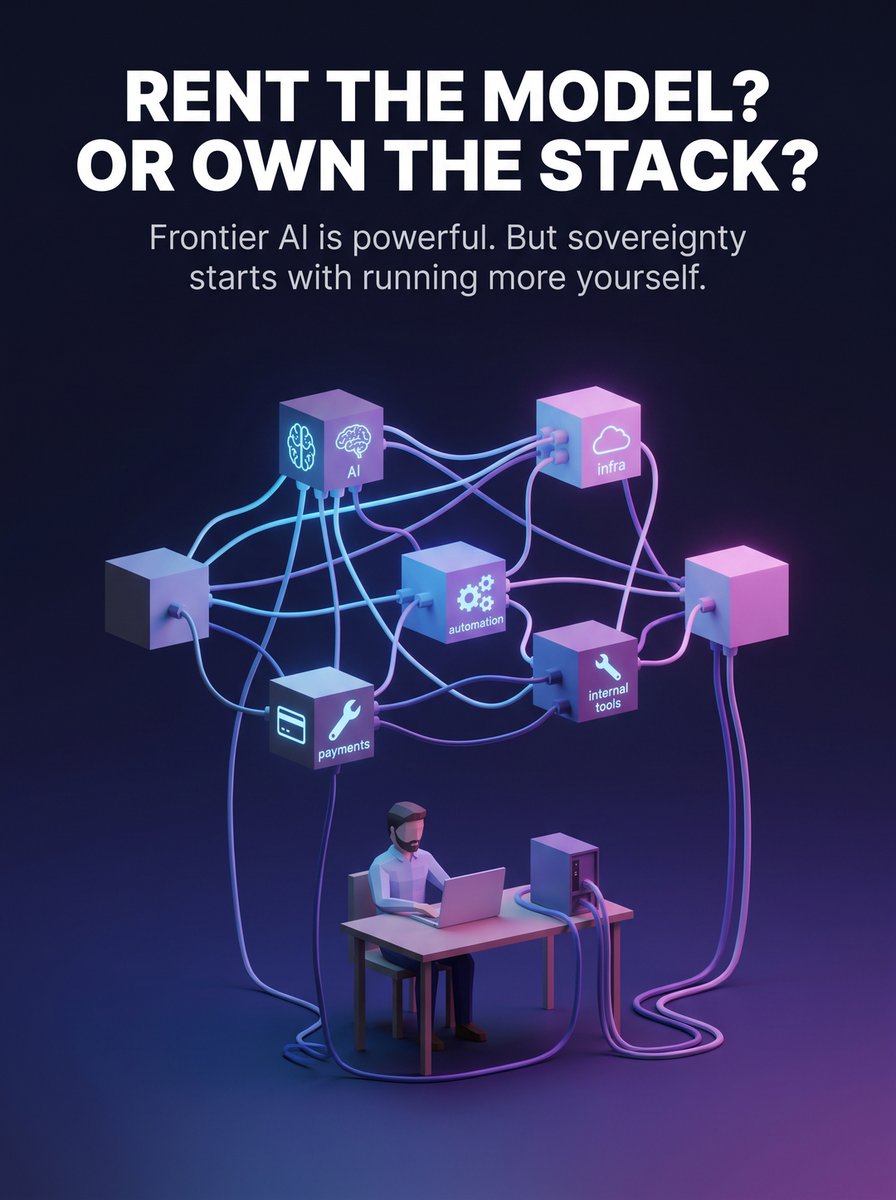

Open-weight models are still so far behind OpenAI and Anthropic for any serious work.

If you want the full agent experience, use the frontier models. I do. On the other hand, we need to get a foot in the door with models we can run ourselves now.

GPT-5.5 and Opus 4.7 are VC handouts. The models are SO expensive to run, and OpenAI/Anthropic are burning BILLIONS to subsidize the cost for you. But sooner or later, the money will run out and the prices will skyrocket.

And one thing is the price, but that's only half the problem.

A few tech companies are about to own the operating layer of 95% of all businesses (...again).

Right now is the chance to prepare for sovereignty.

Models like Qwen, Kimi, and DeepSeek feel very far behind, and they can't do everything frontier models do. But they're a great place to start.

Get used to the process.

Run on hardware you buy (like DGX Spark or Mac Mini) - or rent GPU instances on platforms like Vast AI.

Then start with boring tasks.

Classification.

Routing.

Summaries.

Internal search.

You're gonna be doing this soon. Or a few US tech companies are gonna own you once again.
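To make "start with boring tasks" concrete, a minimal classification sketch, assuming a self-hosted model behind an OpenAI-compatible chat-completions endpoint (vLLM and Ollama both expose one). `ENDPOINT`, `MODEL`, and `LABELS` are placeholders, not anything from the post; only the standard library is used, so no SDK is needed.

```python
import json
import urllib.request

# Placeholder values: point these at your own server and model.
ENDPOINT = "http://localhost:8000/v1/chat/completions"
MODEL = "local-small-model"
LABELS = ("billing", "bug", "feature_request", "other")


def build_request(ticket: str) -> urllib.request.Request:
    """Build a chat-completions request asking for exactly one label."""
    payload = {
        "model": MODEL,
        "temperature": 0,  # keep output as stable as possible for classification
        "messages": [
            {"role": "system",
             "content": "Classify the ticket. Reply with exactly one of: "
                        + ", ".join(LABELS)},
            {"role": "user", "content": ticket},
        ],
    }
    return urllib.request.Request(
        ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def parse_label(response_body: str) -> str:
    """Clamp the model's reply to a known label; anything else becomes "other"."""
    reply = json.loads(response_body)["choices"][0]["message"]["content"]
    label = reply.strip().lower()
    return label if label in LABELS else "other"


def classify(ticket: str) -> str:
    """Call the local server (it must be running) and return a label."""
    with urllib.request.urlopen(build_request(ticket), timeout=30) as resp:
        return parse_label(resp.read().decode("utf-8"))
```

`classify("I was charged twice")` needs the server up, but `build_request` and `parse_label` can be exercised offline. Clamping to a fixed label set matters because a small model's reply may not match the labels exactly.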

Skills week 5/7

This one is super important and simple.

as-secret-scan

Scans for accidentally committed API keys, tokens, and credentials across staged changes, working tree, or full git history.

The reality is that AI coding tools write fast. They might also commit your Stripe key.

as-secret-scan catches what vibe coding leaves behind before it becomes an incident.
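This is not as-secret-scan's implementation (its rules aren't shown here); it's a minimal regex-based sketch of the same idea covering staged changes and full git history. The rule names and patterns are illustrative assumptions, far fewer than a real scanner would ship.

```python
import re
import subprocess

# Illustrative rules only. "sk_live_" is Stripe's live secret-key prefix;
# "AKIA" prefixes AWS access key IDs.
PATTERNS = {
    "stripe_secret_key": re.compile(r"\bsk_live_[0-9A-Za-z]{16,}"),
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "generic_assignment": re.compile(
        r"(?i)(api[_-]?key|secret|token|password)\s*[:=]\s*['\"]?[^\s'\"]{12,}"
    ),
}


def scan_text(text: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) for each line that matches a rule."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, name))
    return hits


def _git(repo: str, *args: str) -> str:
    return subprocess.run(
        ["git", "-C", repo, *args], capture_output=True, text=True, check=True
    ).stdout


def scan_staged(repo: str = ".") -> list[tuple[int, str]]:
    """Scan what is about to be committed (line numbers refer to the diff)."""
    return scan_text(_git(repo, "diff", "--cached", "--no-color"))


def scan_history(repo: str = ".") -> list[tuple[int, str]]:
    """Scan every patch on every ref, including files deleted later
    (line numbers refer to the `git log -p` output)."""
    return scan_text(_git(repo, "log", "-p", "--all", "--no-color"))
```

If anything turns up in history, rotate the key first: deleting the file doesn't help against anyone who already cloned the repo.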

Founder sent me their repo. Said "it's working great, just needs a polish pass."

Found their .env committed in git history. Supabase service role key. Stripe secret. OpenAI key. All of it.

The app was live. With real users.

We're not talking about a sophisticated attack. Anyone who cloned the repo had every credential in plain text.