Sam Cooper

2.7K posts

@camsooper

Asc. Prof. at the Dyson School of Design Engineering, Imperial College London. Energy materials design. Online learning enthusiast. @tldr_group leader

This is the best paper written so far about the impact of AI on scientific discovery

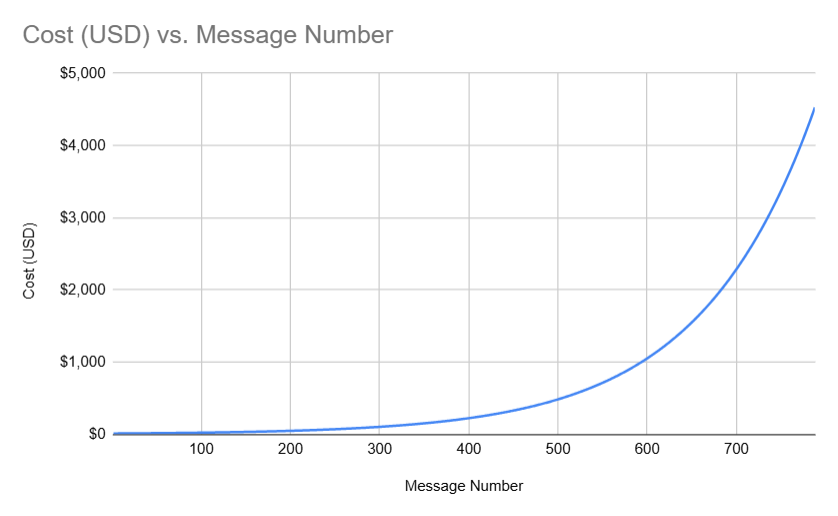

DeepSeek (Chinese AI co) making it look easy today with an open weights release of a frontier-grade LLM trained on a joke of a budget (2048 GPUs for 2 months, $6M). For reference, this level of capability is supposed to require clusters of closer to 16K GPUs, the ones being brought up today are more around 100K GPUs. E.g. Llama 3 405B used 30.8M GPU-hours, while DeepSeek-V3 looks to be a stronger model at only 2.8M GPU-hours (~11X less compute). If the model also passes vibe checks (e.g. LLM arena rankings are ongoing, my few quick tests went well so far) it will be a highly impressive display of research and engineering under resource constraints. Does this mean you don't need large GPU clusters for frontier LLMs? No, but you have to ensure that you're not wasteful with what you have, and this looks like a nice demonstration that there's still a lot to get through with both data and algorithms. Very nice & detailed tech report too, reading through.
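
As a quick sanity check on the figures quoted in the post above, the sketch below recomputes the compute ratio and the implied wall-clock time. It uses only the numbers stated in the post (30.8M and 2.8M GPU-hours, 2048 GPUs); the variable names are hypothetical, and it assumes all GPUs run continuously, which a real training run would not exactly do.

```python
# Figures as quoted in the post (not independently verified here).
llama3_405b_gpu_hours = 30.8e6   # Llama 3 405B
deepseek_v3_gpu_hours = 2.8e6    # DeepSeek-V3
deepseek_gpus = 2048             # cluster size quoted for DeepSeek-V3

# Ratio of total compute, measured in GPU-hours.
compute_ratio = llama3_405b_gpu_hours / deepseek_v3_gpu_hours

# Implied wall-clock duration, assuming every GPU is busy 24 hours a day
# (an idealisation made only for this estimate).
implied_days = deepseek_v3_gpu_hours / (deepseek_gpus * 24)

print(f"Compute ratio: {compute_ratio:.1f}x")
print(f"Implied duration: {implied_days:.0f} days")
```

Under these assumptions the ratio comes out at about 11 and the duration at just under two months, which is consistent with the "~11X" and "2 months" figures in the post.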

🌟 Join us for a special lecture with Professor @CarlosMorenoFr , the visionary behind the 15-minute city concept, as we celebrate our 10th anniversary!🌍 Discover his innovative approach to creating sustainable urban environments. 📅 Wed, 4 Sep 2024 eventbrite.co.uk/e/special-lect…

A student at a prestigious Beijing university has accused her Ph.D. supervisor of trying to coerce her into a sexual relationship. ow.ly/a4m650SI8FZ