Pinned Tweet

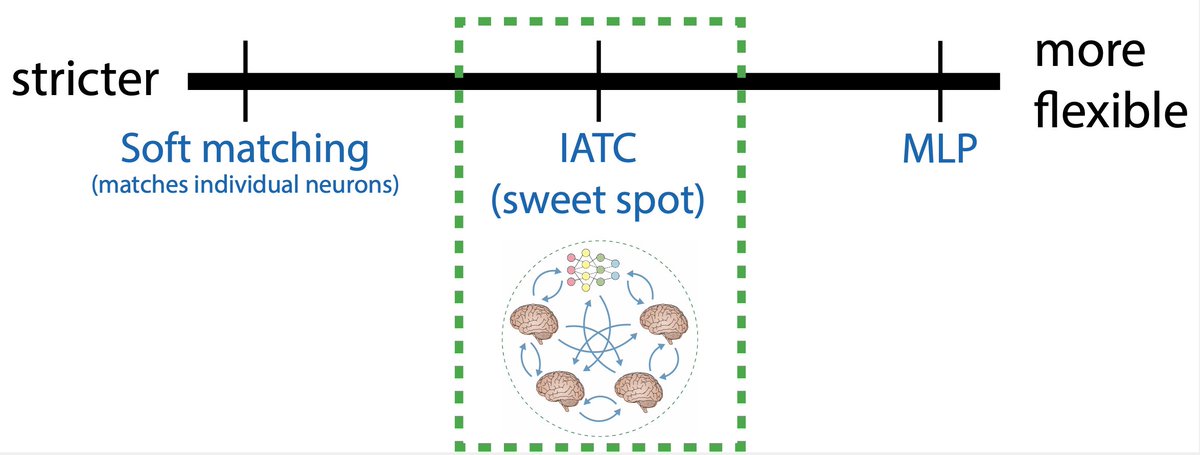

1/x Our new method, the Inter-Animal Transform Class (IATC), is a principled way to compare neural network models to the brain. It is the first method to ensure both accurate predictions of brain activity and specific identification of neural mechanisms.

Preprint: arxiv.org/abs/2510.02523
