Pinned Tweet

Yves crypto

3.5K posts


@cryptoyves1

professional MOD/project manager / check #Brothersmarketing for good services

Joined November 2015

1.3K Following 716 Followers

Because you always active and I see you

“Sorry I don’t have my wallets on me”

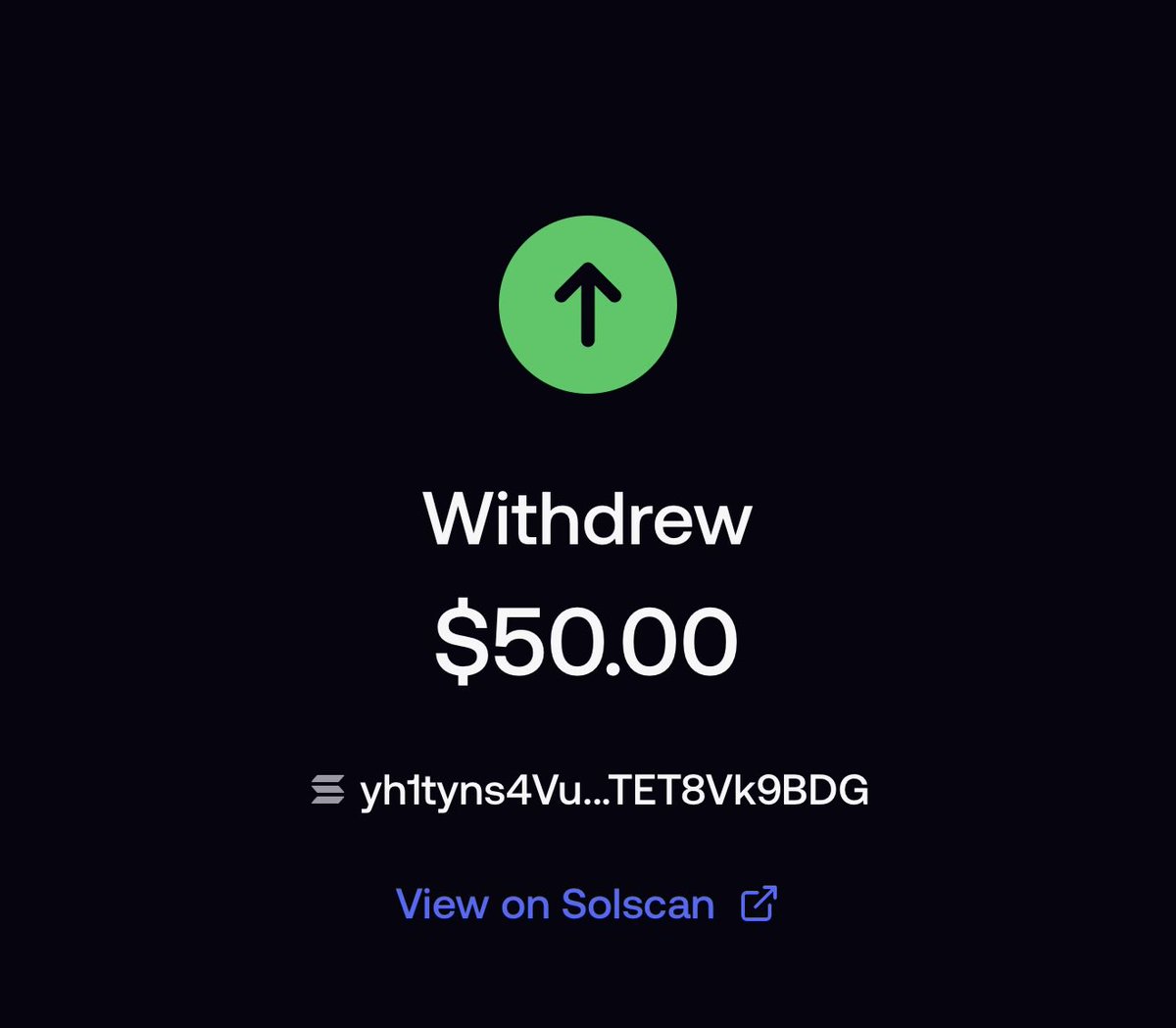

Kelly of web3 @kellyofweb3_

@AceAgain_ Ggs Ace Kindly send me a sol so I can go again into the trenches FUuzRJjQRVxnLvW6Dfp9k3MVpZZfx9jnTbwQ4NaRMZDr


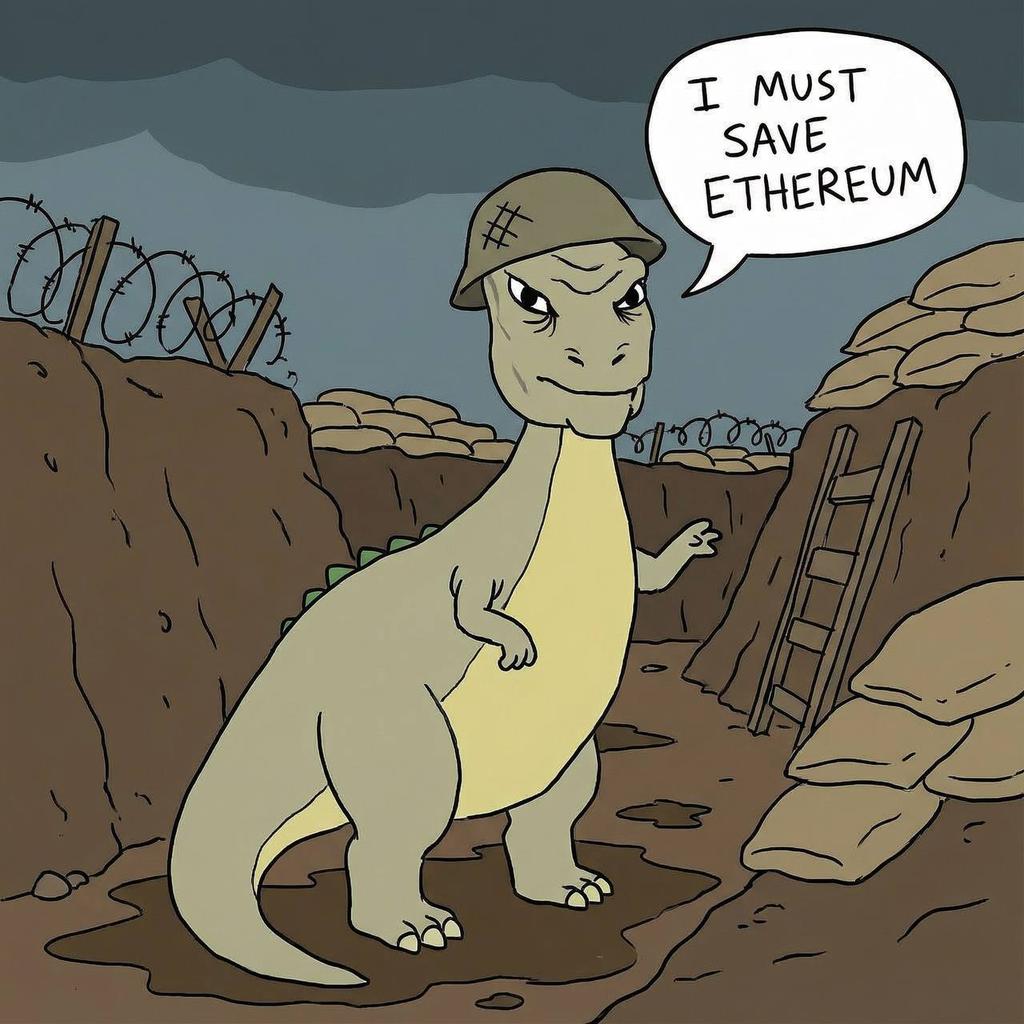

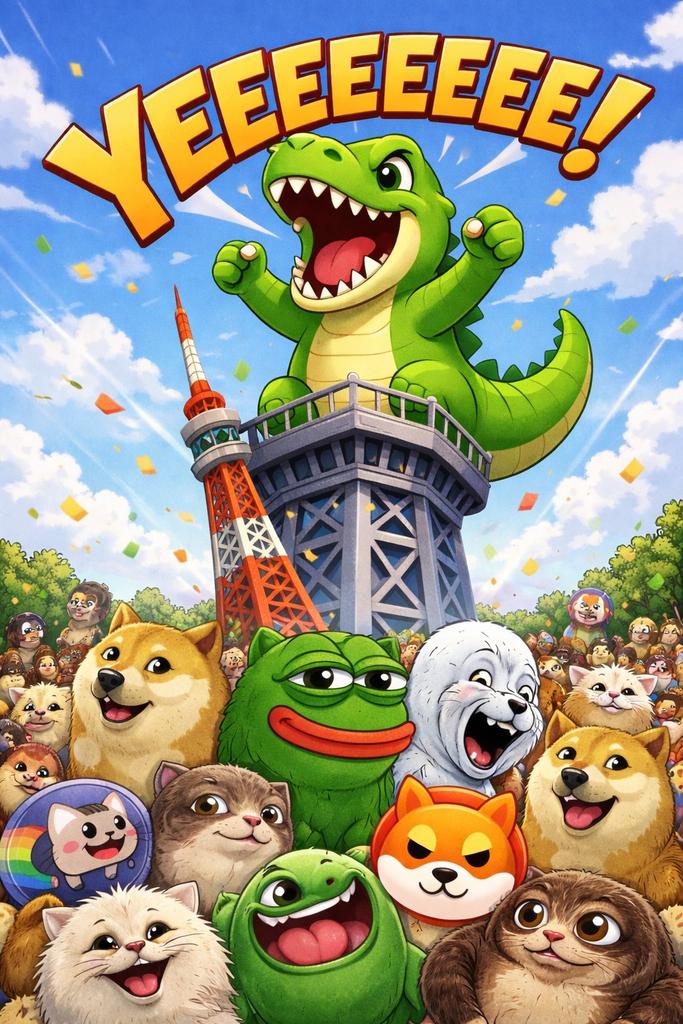

@BitcoinChina_ Take a look at @YeeErc20

Haven’t seen a better narrative since $Pepe

Study $Yee

The return of the dino is coded for billions. 🦖🫶

STUDY THE TRILOGY

🐶🐸🦖


Ok, what up y'all. I'm back in action. Took some time off. About a week and a half. First real break in a while. Spent the entire thing building my own AI inference cluster because apparently that's what I do for fun now.

Picked up a pair of used NVIDIA DGX Stations. Each one has 4x Tesla V100 GPUs, 64GB VRAM, NVLink, 256GB RAM. Originally $69,000 each. Got both used for less than $10k total. Connected the first one to my main workstation (Threadripper 3970X, RTX 3090) over a direct 10GbE ethernet link with jumbo frames. 8 V100s, one RTX 3090, 152GB of combined GPU memory (128GB on the V100s plus 24GB on the 3090) across three machines sitting on my desk. Two of them are former enterprise servers that a Fortune 500 company probably depreciated off their books years ago.

The model lineup running right now:

- Qwen3 30B (MoE, 3B active params): 21 tokens/sec

- GPT-OSS 20B (MoE, 3.6B active): 13 tokens/sec

- Qwen2.5 Coder 32B: 3 tok/s, best open-source coding model at this size

- DeepSeek R1 32B: 3 tok/s, reasoning model that benchmarks above o1-mini

- Llama 3.3 70B: 1.5 tok/s, strongest overall open model

MoE architectures are the move for this hardware. Only 3B parameters active per forward pass means you get 21 tok/s out of a 30B model. Dense 32B models crawl at 3 tok/s on V100s. The 70B is usable for batch work but you're not having a conversation at 1.5 tok/s.
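A quick sanity check on that, as a rough sketch rather than a benchmark: multiply each model's active parameter count by its tokens/sec from the lineup above. The helper names here are made up for illustration, and the dense models are treated as having all of their parameters active.

```python
# (active parameters in billions, tokens/sec) as quoted in the lineup above.
# Dense models are assumed to use every parameter on each forward pass.
LINEUP = {
    "Qwen3 30B (MoE)": (3.0, 21.0),
    "GPT-OSS 20B (MoE)": (3.6, 13.0),
    "Qwen2.5 Coder 32B": (32.0, 3.0),
    "DeepSeek R1 32B": (32.0, 3.0),
    "Llama 3.3 70B": (70.0, 1.5),
}


def active_params_per_second(active_b: float, tok_per_s: float) -> float:
    """Billions of active parameters touched per second of decoding."""
    return active_b * tok_per_s


def speedup_vs(model: str, baseline: str) -> float:
    """Ratio of tokens/sec between two models in LINEUP."""
    return LINEUP[model][1] / LINEUP[baseline][1]


if __name__ == "__main__":
    for name, (active_b, tps) in LINEUP.items():
        work = active_params_per_second(active_b, tps)
        print(f"{name:20s} {tps:5.1f} tok/s  ~{work:6.1f}B active params/s")
    print("Qwen3 30B MoE vs dense 32B:",
          speedup_vs("Qwen3 30B (MoE)", "Qwen2.5 Coder 32B"), "x")
```

The per-second figures land in the same rough band for every model, which fits the idea that decode speed tracks active parameters, not total size.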

Biggest lesson I learned the hard way is that vLLM (the standard high-performance inference engine) straight up does not work on V100 GPUs. AWQ quantization requires compute capability 7.5+. V100 is 7.0. GPTQ kernel is documented as "buggy" and hung indefinitely. Marlin needs Ampere or newer. Three quantization backends, three failures. Spent days debugging before pivoting entirely to Ollama with GGUF models. llama.cpp just works on everything.
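The three failures above boil down to a compute capability check. Here is a minimal sketch of it, using only the floors stated in this post (they are not read from vLLM itself, and the table and function names are hypothetical). GPTQ has no floor listed because the problem there was a hanging kernel, not a capability cutoff.

```python
from typing import Dict, Optional, Tuple

Capability = Tuple[int, int]

# Minimum CUDA compute capability per quantization backend, as reported in
# the post. None means no floor was given (GPTQ failed for other reasons).
MIN_CAPABILITY: Dict[str, Optional[Capability]] = {
    "awq": (7, 5),
    "marlin": (8, 0),  # "Ampere or newer"; Ampere starts at 8.0
    "gptq": None,
}

V100: Capability = (7, 0)


def meets_floor(backend: str, capability: Capability) -> Optional[bool]:
    """True/False if the GPU clears the backend's floor, None if unknown."""
    floor = MIN_CAPABILITY[backend]
    if floor is None:
        return None
    # Tuples compare element by element, so (7, 0) < (7, 5) < (8, 0).
    return capability >= floor


if __name__ == "__main__":
    for backend in MIN_CAPABILITY:
        print(backend, "on V100:", meets_floor(backend, V100))
```

Running the same check before downloading a quantized model would have saved most of those debugging days.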

NVIDIA also dropped V100 driver support starting at version 550. Locked to the legacy R535 branch forever. Enterprise hardware depreciates like a brick and the software ecosystem moves on without it.

For comparison, a Mac Mini M4 Pro with 48GB unified memory ($2,000) runs 30B models at 12-18 tok/s and MoE models at up to 83 tok/s via MLX. Faster than a single DGX for single-model inference and uses 30 watts instead of 1,200. The Mac wins on efficiency, but it can't practically run 70B models, which is what I need for the research I'm doing. A 70B Q4 needs 42GB, leaving almost nothing for context in 48GB. One DGX handles it with room to spare. Two of them with 128GB of combined VRAM open up models the Mac can't even attempt.
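The memory math behind that, as a back-of-the-envelope sketch. The 4.8 bits per weight is an assumption picked so a 70B model lands on the 42GB figure above; real Q4 variants differ, and KV cache and runtime overhead are not modeled.

```python
def weight_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight footprint in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def headroom_gb(total_gb: float, params_billion: float,
                bits_per_weight: float) -> float:
    """Memory left for context and overhead after loading the weights."""
    return total_gb - weight_gb(params_billion, bits_per_weight)


if __name__ == "__main__":
    Q4_BITS = 4.8  # assumed effective bits per weight for a Q4 quant
    for label, total in [("Mac, 48GB unified", 48), ("One DGX, 64GB", 64),
                         ("Two DGX, 128GB", 128)]:
        left = headroom_gb(total, 70, Q4_BITS)
        print(f"{label:18s} headroom after 70B Q4: {left:.0f} GB")
```

On the Mac the leftover also has to cover the OS, since the memory is unified.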

Cost math:

- Claude Max: $100-200/mo

- ChatGPT Pro: $200/mo

- Heavy API usage (Opus): Can be thousands/mo

- Two DGX Stations electricity at full load: $180-300/mo for unlimited local inference across both

Unlimited requests, complete data privacy, zero dependence on third-party APIs. The hardware pays for itself within a year and then it's just electricity forever.
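How fast the hardware pays for itself depends on what it replaces. A small sketch of the break-even arithmetic, assuming $10k for the hardware (the post's upper bound), $240/mo for electricity (midpoint of the range above), and a hypothetical cloud bill passed in as a parameter:

```python
from typing import Optional


def breakeven_months(hardware_usd: float, electricity_usd_per_month: float,
                     cloud_usd_per_month: float) -> Optional[float]:
    """Months until hardware cost is recovered, or None if it never is."""
    monthly_savings = cloud_usd_per_month - electricity_usd_per_month
    if monthly_savings <= 0:
        return None
    return hardware_usd / monthly_savings


def required_cloud_spend(hardware_usd: float, electricity_usd_per_month: float,
                         months: float) -> float:
    """Cloud spend per month that makes the hardware pay off in `months`."""
    return electricity_usd_per_month + hardware_usd / months


if __name__ == "__main__":
    for cloud in (200, 1_000, 2_000):
        print(f"cloud ${cloud}/mo ->",
              breakeven_months(10_000, 240, cloud), "months")
    print("spend needed for 12-month payback:",
          round(required_cloud_spend(10_000, 240, 12), 2))
```

Under these assumptions the one-year payback holds when the replaced cloud spend is roughly $1,100/mo or more, which is heavy API territory, not a single flat-rate subscription.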

Local models do not replace Claude or GPT-4 (yet) for complex multi-file agentic coding. That gap is still massive and I'll be real about it. I use local for code completion, quick questions, reasoning tasks, RAG pipelines, and anything I don't want touching a third-party server. Cloud for the heavy agentic loops.

Optimization stack, for anyone building something similar:

- CPU governor locked to performance mode

- Transparent hugepages enabled, swap disabled, vm.swappiness at 1

- NCCL for NVLink GPU communication

- Models pinned in VRAM for 24 hours (no cold-start penalty)

- 10GbE jumbo frames (MTU 9000) between machines

- UFW firewall locked to only accept requests from my workstation

Every knob I could find, turned.
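For anyone who wants to verify a few of those knobs after a reboot, here is a minimal read-only sketch for Linux. The sysfs and procfs paths are the standard kernel ones; the interface name `eth0`, the expected THP mode `always`, and the helper names are assumptions for illustration. It does not cover NCCL, model pinning, or UFW.

```python
from pathlib import Path
from typing import Dict, Optional, Tuple


def read_text(path: str) -> Optional[str]:
    """Read a small kernel file; return None if it is missing or unreadable."""
    try:
        return Path(path).read_text().strip()
    except OSError:
        return None


def active_bracketed(value: str) -> str:
    """Return the bracketed option, e.g. 'always [madvise] never' -> 'madvise'."""
    start = value.index("[") + 1
    return value[start:value.index("]", start)]


def check_host(iface: str = "eth0") -> Dict[str, Optional[str]]:
    """Collect current values for governor, THP, swappiness, and MTU."""
    thp = read_text("/sys/kernel/mm/transparent_hugepage/enabled")
    return {
        "governor": read_text(
            "/sys/devices/system/cpu/cpu0/cpufreq/scaling_governor"),
        "thp": active_bracketed(thp) if thp and "[" in thp else thp,
        "swappiness": read_text("/proc/sys/vm/swappiness"),
        "mtu": read_text(f"/sys/class/net/{iface}/mtu"),
    }


# Targets taken from the list above; "always" for THP is an assumption.
EXPECTED = {"governor": "performance", "thp": "always",
            "swappiness": "1", "mtu": "9000"}


def mismatches(actual: Dict[str, Optional[str]]
               ) -> Dict[str, Tuple[Optional[str], str]]:
    """Map each off-target setting to (actual, expected)."""
    return {key: (actual.get(key), want)
            for key, want in EXPECTED.items() if actual.get(key) != want}


if __name__ == "__main__":
    print(mismatches(check_host()))
```

An empty dict means those four settings match; anything else shows the current value next to the target.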

Next step is clustering the two DGX Stations together. 128GB VRAM across 8 GPUs. 10GbE is too slow for tensor parallelism across machines (you need InfiniBand) but pipeline parallelism and model routing work fine over ethernet. Run different models on different boxes, route requests to whichever one has capacity.
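The routing half of that plan can be sketched in a few lines. This is an illustration of "send the request to whichever box has capacity", not the setup actually running here: the `Backend` class, the box names, the model tags, and the in-flight limits are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional, Set


@dataclass
class Backend:
    name: str
    models: Set[str]      # models loaded on this box
    max_inflight: int     # concurrent requests the box should accept
    inflight: int = 0     # requests currently being served

    def has_capacity(self) -> bool:
        return self.inflight < self.max_inflight


def pick_backend(backends: List[Backend], model: str) -> Optional[Backend]:
    """Pick the least-loaded box that hosts `model`, or None if all are full."""
    candidates = [b for b in backends
                  if model in b.models and b.has_capacity()]
    if not candidates:
        return None
    # Compare by load fraction so boxes with different limits are treated fairly.
    return min(candidates, key=lambda b: b.inflight / b.max_inflight)


if __name__ == "__main__":
    boxes = [
        Backend("dgx-1", {"llama-70b"}, max_inflight=2),
        Backend("dgx-2", {"llama-70b", "qwen3-30b"}, max_inflight=2),
    ]
    chosen = pick_backend(boxes, "qwen3-30b")
    print(chosen.name if chosen else "no capacity")
```

A real router would also bump `inflight` when a request starts and drop it when the request finishes; that bookkeeping is left out to keep the sketch short.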

This is me getting back to the grind. I'm diving back into agentic research, specifically the intersection of decentralized AI and Ethereum. If individuals can assemble competitive inference clusters from depreciated enterprise hardware, the economics of AI access change permanently. You don't need a data center. You need a couple used servers, some ethernet cable, and about a week of fighting NVIDIA drivers.

The models are open, the hardware is cheap, and the software exists. Sovereign compute is the play. Decentralized AI starts in your garage. We just have to build it.

Will be sharing some cool new stuff here in the coming days, so stay tuned!


April 7, market bottom.

"This is what it means for extinct species"

April 10

@BBCEarth "The world of dinosaurs brought to life like never before"

April 11🦖

$YEE the extinct dinosaur was reborn.

TIME @TIME

TIME's new cover: The dire wolf is back after over 10,000 years. Here's what that means for other extinct species ti.me/4jlJB54


TASSHUB PITCH PIT HOSTED BY: @Mystik_Crypto 🧙‍♂️

Tomorrow, Wednesday Mar. 4th, 2:00PM (EST) / 7:00PM (UTC)

x.com/i/spaces/1jGXg…

Don't miss our next Pitch Pit episode, featuring:

@YeeErc20

@MeanPippin

@Whaleminersol

Join your favorite hosts and tune in live with TASSHUB for our 3x weekly Spaces, where we showcase promising projects and talk some Tass! Dive deeper into Tasshub: tasshub.com
