
Practical AGI is achievable already, but requires 3 changes to the current LLM tool-calling approach:

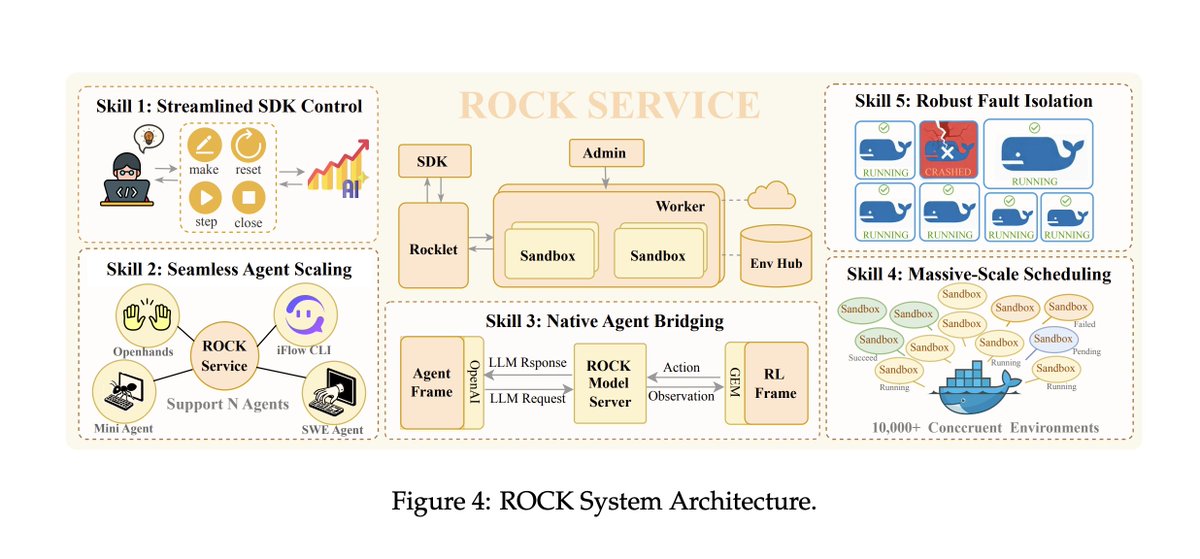

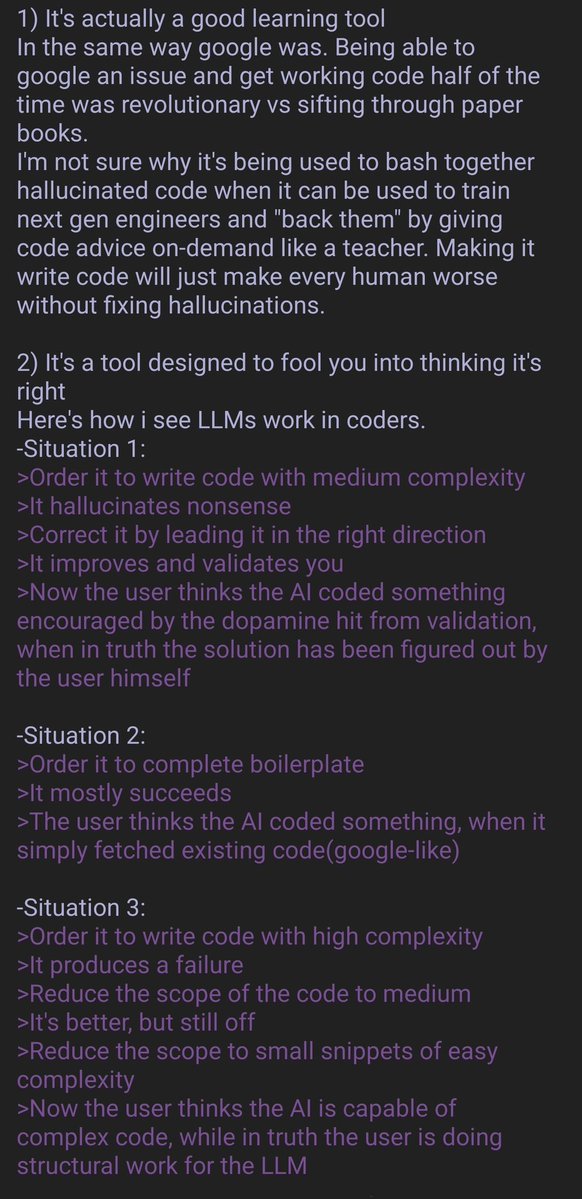

1. Tools assume that all information is already available in the prompt, but users in the real world are rarely so forthcoming. Consequently, we should build each tool to assume that details are missing by default and to resolve the gaps through continuous slot-filling, rather than placing the onus on the user to provide everything upfront.

2. Moreover, each 'tool' should actually be its own specially trained module, which is able to provide outputs in addition to taking action (such as reporting partially successful actions, rather than just returning a final result). Each module must be built to intrinsically handle ambiguity by establishing its own expectations about reasonable inputs and outputs. This (Bayesian) prior is baked in by humans, which allows us to control it.

3. Lastly, each module is a single node within a graph, operating as a federated system. There is no single monolithic entity controlling all the tools, but simply an orchestration node which operates just like any other module in the network. This allows exponential scaling in intelligence as you add additional modules. We already have something similar with MoE, but the key difference is that these expert modules are programmable and interpretable, rather than black boxes.
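
To make the three points concrete, here is a minimal Python sketch, not the actual system. Every name in it (`Slot`, `Module`, `make_orchestrator`, the `book_table` example) is hypothetical, and the "prior" is reduced to a human-written default value per slot, which is a big simplification of a trained module. It shows slot-filling with missing details assumed by default, a partial-status reply instead of only a final result, and an orchestrator that is just another module.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class Slot:
    name: str
    question: str                  # asked when the slot is still missing
    default: Optional[str] = None  # human-set prior; None means "must ask"


@dataclass
class Module:
    """One node in the graph: fills its own slots, then acts."""
    name: str
    slots: List[Slot]
    action: Callable[[Dict[str, str]], str]
    values: Dict[str, str] = field(default_factory=dict)

    def missing(self) -> List[Slot]:
        return [s for s in self.slots if s.name not in self.values]

    def step(self, user_input: Dict[str, str]) -> Dict[str, object]:
        # Accept only the slots this module knows about.
        known = {s.name for s in self.slots}
        self.values.update({k: v for k, v in user_input.items() if k in known})
        # Apply human-authored defaults (the stand-in for a prior).
        for s in self.missing():
            if s.default is not None:
                self.values[s.name] = s.default
        todo = self.missing()
        if todo:
            # Report partial progress and ask for the next missing detail.
            return {"status": "partial", "filled": dict(self.values),
                    "ask": todo[0].question}
        return {"status": "done", "result": self.action(self.values)}


def make_orchestrator(registry: Dict[str, Module]) -> Module:
    # The orchestrator is just another Module: its only slot is the intent,
    # and its action returns the name of the peer module to run next.
    def route(values: Dict[str, str]) -> str:
        return values["intent"] if values["intent"] in registry else "unknown"
    return Module("orchestrator",
                  [Slot("intent", "What would you like to do?")], route)


# Hypothetical example module: booking a table.
book = Module(
    "book_table",
    [Slot("restaurant", "Which restaurant?"),
     Slot("time", "What time?"),
     Slot("party_size", "How many people?", default="2")],
    lambda v: f"Booked {v['restaurant']} at {v['time']} for {v['party_size']}",
)
registry = {"book_table": book}
orch = make_orchestrator(registry)

r0 = orch.step({})                            # user says nothing useful yet
r1 = orch.step({"intent": "book_table"})      # orchestrator resolves intent
target = registry[r1["result"]]
r2 = target.step({"restaurant": "Luigi's"})   # time still missing
r3 = target.step({"time": "19:00"})           # all slots filled, action runs
```

The user never has to provide everything upfront: each `step` call either asks for the next missing detail or acts, and adding a capability means registering one more `Module` rather than changing a central controller.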

When we recognize that most users in reality are unwilling to learn proper prompting techniques, we can then embrace the chaos by building a system that is robust to failure and capable of continuous learning.

Luckily, no further research breakthroughs are needed to start moving in this direction. More details to be revealed soon, please comment below to poke holes or provide feedback!
