Yutong (Kelly) He

191 posts

@electronickale

PhD student @mldcmu, I’m so delusional that doing generative modeling is my job

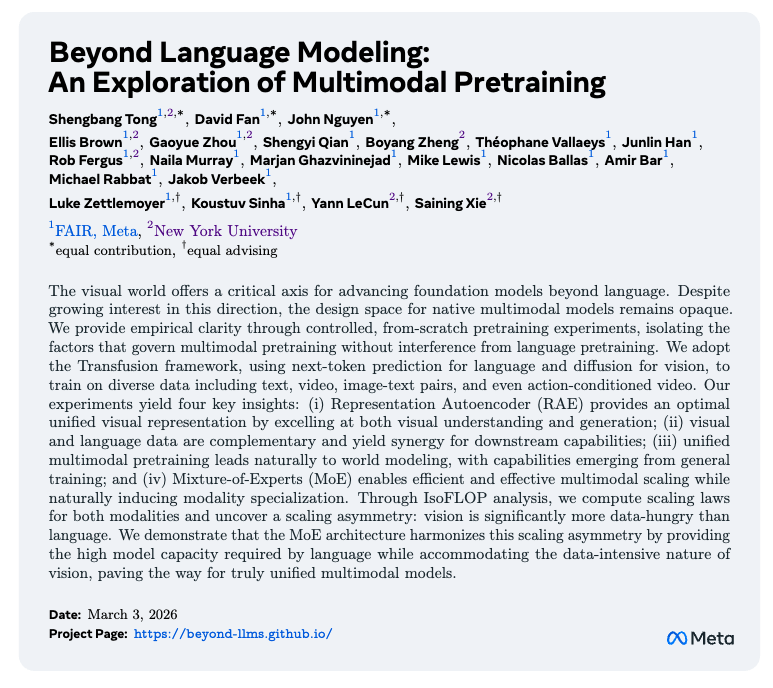

The newest model in the Mamba series is finally here 🐍 Hybrid models have become increasingly popular, raising the importance of designing the next generation of linear models. We've introduced several SSM-centric ideas that significantly increase Mamba-2's modeling capabilities without compromising on speed. The resulting Mamba-3 model shows noticeable performance gains over the most popular previous linear models (such as Mamba-2 and Gated DeltaNet) at all sizes. This is the first student-led Mamba: all credit to @aakash_lahoti @kevinyli_ @_berlinchen @caitWW9, and of course @tri_dao!

Excited to announce our workshop on flow-based generative models at CMU: Frontiers of Flows for Generative AI, March 26-27, Pittsburgh, PA. cmu-l3.github.io/flows2026/ We have an amazing lineup of featured talks, panel discussions, and lightning talks. Registration is now open!

We just brought flow maps to language modeling for one-step sequence generation 💥 Discrete diffusion is not necessary: continuous flows over one-hot encodings achieve SoTA performance and ≥8.3× faster generation 🔥 We believe this is a major step forward for discrete generative modeling and language modeling alike. 🚀 Full thread from first author @chandavidlee: x.com/chandavidlee/s…

You like discrete diffusion, but it's too slow? 🥀 You like test-time inference, but it's for continuous methods? 😩 We fixed it. Introducing Categorical Flow Maps: continuously sample discrete data in a single step 🚀💫 How? 🧵⬇️ 💪 Co-led with @FEijkelboom, @daan_roos_

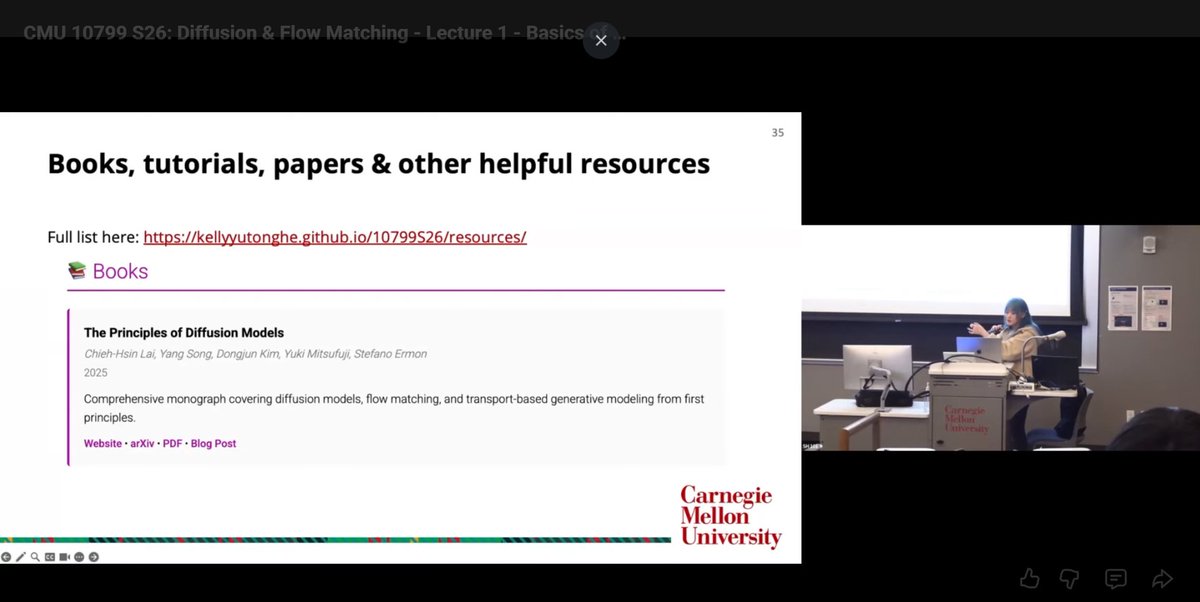

Tired of going back to the original papers again and again? Our monograph: a systematic and fundamental recipe you can rely on! 📘 We’re excited to release 《The Principles of Diffusion Models》, with @DrYangSong, @gimdong58085414, @mittu1204, and @StefanoErmon. It traces the core ideas that shaped diffusion modeling and explains how today’s models work, why they work, and where they’re heading. 🧵 You’ll find the link and a few highlights in the thread. We’d love to hear your thoughts and have you join the discussion! ⚡ Stay tuned for our markdown version, where you can leave your comments!

🎓 Happy to share: CMU is incorporating our book 《The Principles of Diffusion Models》 as a core resource for its diffusion & flow-matching course materials. If you’re teaching or learning diffusion models, or want a systematic, principled handbook, feel free to use it too. Feedback welcome 🙂 Huge thanks to Kelly (@electronickale) for the endorsement! See their course post w/ @zicokolter @rsalakhu 👇 x.com/electronickale… 🖇️ Links to our book + webpage in the thread.

I'm teaching a diffusion & flow matching class at CMU in Spring 2026 where students can use ChatGPT, Cursor, or any AI tool they want. No exams. Just build, with the open internet. 139 students signed up for 20 spots. Here's what's happening: 🧵 kellyyutonghe.github.io/10799S26/

ARC Prize 2025 Winners Interviews: Paper Award, 3rd Place. @LiaoIsaac91893 shares the story behind CompressARC, an MDL-based, single-puzzle-trained neural code golf system that achieves ~20–34% on ARC-AGI-1 and ~4% on ARC-AGI-2 without any pretraining or external data.