Elliot Arledge

13.2K posts


@elliotarledge

Edmonton, Alberta, Canada · Joined November 2022

0 Following · 32.2K Followers

Pinned Tweet

"CUDA for Deep Learning" by Elliot Arledge is in early access!

It will be 50% off for the next 2 weeks (until Feb 17th).

manning.com/books/cuda-for…


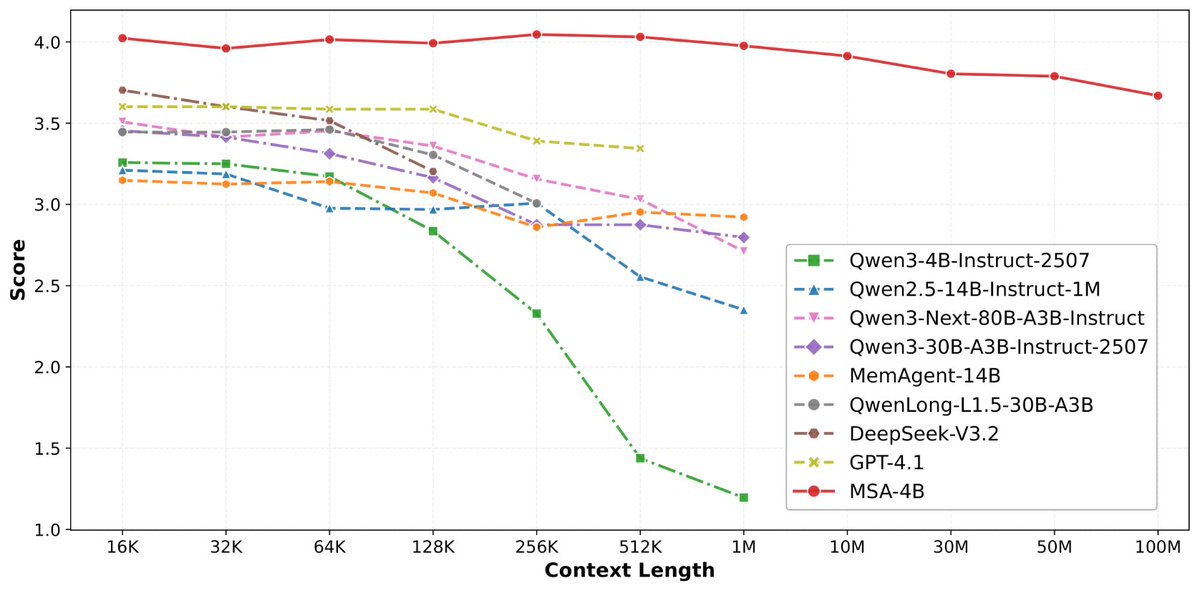

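The paper is out. It's called MSA: Memory Sparse Attention.

What it is, in one sentence:

It gives large models native ultra-long memory. Not external retrieval, not brute-force window expansion: "memory" is built directly into the attention mechanism and trained end to end.

Why didn't earlier approaches work?

RAG is essentially an "open-book exam." The model remembers nothing itself and relies entirely on flipping through its notes on the spot. How accurately it finds things depends on retrieval quality; how fast depends on data volume. Once information is scattered across dozens of documents and answering requires cross-document reasoning, it falls apart.

Linear attention and KV caches are essentially "compressed memory." Things do get remembered, but the more you compress, the blurrier they get, and over long spans information is lost.

MSA takes a completely different approach:

→ No compression, no external store: the model learns to "pick out what matters"

At its core is a scalable sparse attention architecture with linear complexity. Scale memory 10x and compute grows roughly in proportion instead of blowing up.

→ The model knows where a memory came from and when

It uses a positional encoding called document-wise RoPE, which lets the model natively understand document boundaries and temporal order.

→ Fragmented information can still be linked for reasoning

A Memory Interleaving mechanism lets the model do multi-hop reasoning across memory fragments scattered in different places. Rather than finding a single relevant record, it chains the clues together.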
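The thread describes MSA only at a high level, so the following is a hypothetical NumPy sketch of the general idea of chunk-level sparse attention over a memory, not MSA's actual architecture (the EverMind-AI/MSA repository is the authoritative source). Assumptions: memory is split into fixed-size chunks, each chunk is summarized by its mean key, and the query attends only to the tokens of its top-k chunks. Document-wise RoPE and Memory Interleaving are not modeled, and `select_top_k_chunks` and `sparse_memory_attention` are illustrative names.

```python
import numpy as np

def select_top_k_chunks(query, keys, chunk_size, k):
    """Score each fixed-size memory chunk by query . mean(chunk keys) and keep the k best.

    Assumption (not from MSA): one dot product per chunk, so this pass
    grows linearly with memory length.
    """
    n_chunks = keys.shape[0] // chunk_size          # a trailing partial chunk is ignored
    chunk_keys = keys[: n_chunks * chunk_size].reshape(n_chunks, chunk_size, -1).mean(axis=1)
    scores = chunk_keys @ query
    return np.argsort(scores)[::-1][: min(k, n_chunks)]

def sparse_memory_attention(query, keys, values, chunk_size=4, k=2):
    """Ordinary softmax attention, restricted to the tokens of the selected chunks."""
    chunks = sorted(select_top_k_chunks(query, keys, chunk_size, k))
    token_idx = np.concatenate([np.arange(c * chunk_size, (c + 1) * chunk_size) for c in chunks])
    logits = keys[token_idx] @ query / np.sqrt(query.shape[0])
    weights = np.exp(logits - logits.max())          # numerically stable softmax
    weights /= weights.sum()
    return weights @ values[token_idx], token_idx

# Toy usage: only chunk 2 (tokens 8..11) matches the query, so only it is attended to.
keys = np.zeros((16, 4))
keys[8:12] = 1.0
values = np.arange(64, dtype=float).reshape(16, 4)
out, used = sparse_memory_attention(np.ones(4), keys, values, chunk_size=4, k=1)
```

Selecting a handful of chunks keeps the softmax small regardless of how much memory exists; only the chunk-scoring pass touches every chunk, which is where linear scaling would come from in a design like this.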

The results?

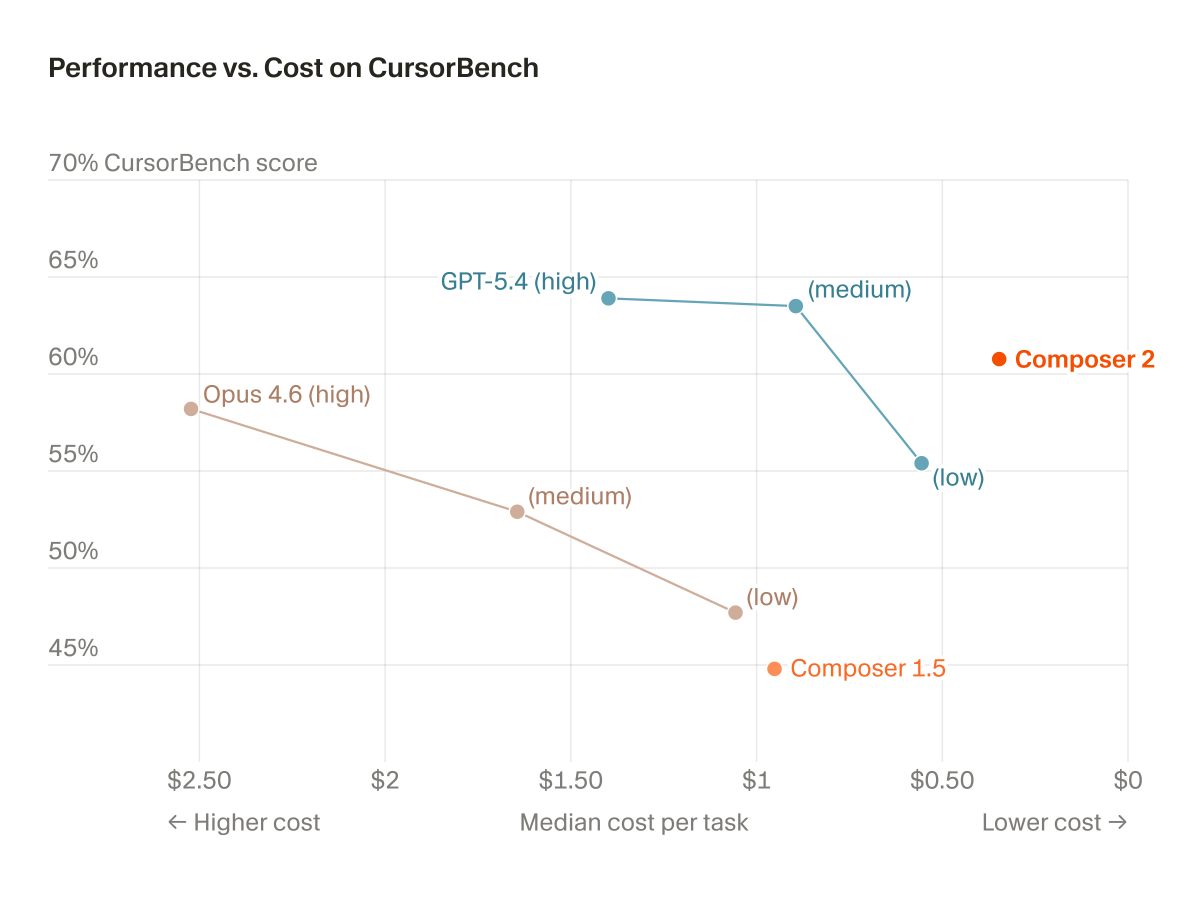

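· Scaling from 16K to 100 million tokens, accuracy drops by less than 9%

· A 4B-parameter MSA model beats top 235B-scale RAG systems on long-context benchmarks

· 100-million-token inference runs on just 2 A800s. This isn't lab-only; it's a cost a startup can afford.

Put simply, large models used to be brilliant geniuses with goldfish memory. What MSA aims to do is let them truly "remember."

We've put it on GitHub. Our algorithm folks worked hard on this, so please give it a star to show your support. 🌟👀🙏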

github.com/EverMind-AI/MSA

艾略特@elliotchen100

A small spoiler: @EverMind will release another high-quality paper this week


@cursor_ai I'll switch back if you open source cursorbench


@elliotarledge Very very nice. Should be fun to experiment with

I've been working on a tooling and environment library to try and attack RL on this more systematically. You might find it interesting, especially the rich tooling set: github.com/kmccleary3301/…


@0xrsydn yeah, at correctness at least. I personally only care about performance in the limit


@noliathain @mfranz_on perhaps I should make an official opensquirrel account?


@elliotarledge elliot pls do this! x.com/ahtavarasm_us/…

rasmus@ahtavarasm_us

can we make our codebase into structured entities instead of files? make a single tool to query the codebase deterministically and another tool to deterministically edit the structured entities? the same way Palantir lets agents query a business ontology and run actions on it. can we bring the same reliability to programming?
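As a rough illustration of querying and editing code as entities rather than files, here is a hypothetical sketch built on Python's standard `ast` module. It is not an existing tool and not how Palantir's ontology works. It indexes classes and functions by qualified name with line spans, so a lookup or edit targets `A.f` deterministically instead of matching text. `index_entities`, `find_entity`, and `replace_entity` are illustrative names.

```python
import ast
from dataclasses import dataclass

@dataclass(frozen=True)
class Entity:
    qualname: str   # e.g. "Parser.parse"
    kind: str       # "function" or "class"
    start: int      # first line, 1-indexed (decorator lines above it are not included)
    end: int        # last line, inclusive

def index_entities(source: str) -> dict[str, Entity]:
    """Record module-level classes/functions and those nested inside them, by qualified name."""
    entities: dict[str, Entity] = {}

    def visit(node: ast.AST, prefix: str) -> None:
        for child in ast.iter_child_nodes(node):
            if isinstance(child, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef)):
                name = f"{prefix}{child.name}"
                kind = "class" if isinstance(child, ast.ClassDef) else "function"
                entities[name] = Entity(name, kind, child.lineno, child.end_lineno)
                visit(child, name + ".")

    visit(ast.parse(source), "")
    return entities

def find_entity(source: str, qualname: str) -> str:
    """Deterministic query: the exact source text of one entity (KeyError if absent)."""
    e = index_entities(source)[qualname]
    return "\n".join(source.splitlines()[e.start - 1 : e.end])

def replace_entity(source: str, qualname: str, new_text: str) -> str:
    """Deterministic edit: splice new text over exactly the entity's line span."""
    e = index_entities(source)[qualname]
    lines = source.splitlines()
    lines[e.start - 1 : e.end] = new_text.splitlines()
    return "\n".join(lines) + "\n"
```

A real tool would also need to handle decorators and comments and re-parse after each edit to validate it; this only shows that lookup and edit can key on entities instead of text.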


Lately I've been thinking about the primitives and how we can engineer an agent-centric IDE. It forces you to think about how VS Code and Cursor were originally based on a file as a central unit of attention. Now we move up to the agentic level.

Cursor added a chat window on the side, which you could argue acts as the coordinator or orchestrator agent for all of your projects, or for one at a time. That way, you can work at a higher level, like a CEO, and dig deeper when you need to.

An even more important feature, I would argue, is something similar to the Cursor Tab model. Take all of the projects whose agents aren't currently generating tokens and are waiting for user feedback. Based on the context, the model would predict what you are most likely to tell each agent, and you would simply tab through, switching windows the same way Cursor Tab moves through files.

Once the tab model is good enough, we will realize the agent has better preferences and direction for the projects we want than humans do. That is when the recursive self-improvement loop actually starts.


In the current context window, given the information that you have, would it make sense for my kernel optimization line of work to scrape through all of the blog posts, articles, and documentation online to find every route of optimization for basically all of deep learning, training and inference, large and small scale, all the way to megakernels? This could include multi-head latent attention, MoE, ultra-sparse MoE, Gated DeltaNet, GEMM, GEMV, softmax, FlashAttention, various architectures, and tcgen05 on Blackwell versus WGMMA on Hopper versus something else on maybe Ampere if we're doing it locally. The entire optimization landscape: coalesced memory accesses, vectorized loads and stores, cache utilization, memory bandwidth, occupancy, arithmetic intensity, raw TFLOPS, and proper benchmarking using some of the kernel literature from Stanford, which you can delegate an agent to look into. I'm just trying to see whether this would be worth appending to your context window or whether it would be noisy. Also look into Simon from Anthropic; he has a nice blog post on optimizing GEMMs for cuBLAS-like performance. Basically, a giant sweep of every good source on this on the internet.
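Arithmetic intensity, one of the items in that list, is quick to estimate by hand, and it explains why GEMM and GEMV land on opposite sides of the roofline. A minimal sketch, assuming each operand is read from DRAM once and the output written once (ideal caching) and 2-byte fp16/bf16 elements. Peak compute and bandwidth are parameters to fill in for your own GPU; no hardware numbers are assumed here, and the function names are illustrative.

```python
def gemm_intensity(m: int, n: int, k: int, bytes_per_elem: int = 2) -> float:
    """FLOPs per byte for C[m,n] = A[m,k] @ B[k,n], reading A and B and writing C once."""
    flops = 2 * m * n * k                                  # one multiply + one add per term
    bytes_moved = (m * k + k * n + m * n) * bytes_per_elem
    return flops / bytes_moved

def gemv_intensity(m: int, k: int, bytes_per_elem: int = 2) -> float:
    """FLOPs per byte for y[m] = A[m,k] @ x[k]; the matrix read dominates traffic."""
    flops = 2 * m * k
    bytes_moved = (m * k + k + m) * bytes_per_elem
    return flops / bytes_moved

def roofline_tflops(intensity: float, peak_tflops: float, bandwidth_tb_s: float) -> float:
    """Attainable TFLOP/s: capped by compute or by intensity * bandwidth, whichever is lower."""
    return min(peak_tflops, intensity * bandwidth_tb_s)   # (FLOP/byte) * (TB/s) = TFLOP/s
```

For a square N×N×N GEMM the intensity works out to N/3 FLOP/byte under these assumptions, so it grows with problem size, while GEMV stays just under 1 FLOP/byte and is bandwidth-bound on any modern GPU. Real kernels move more bytes than this ideal, so treat these figures as upper bounds.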


The knowledge graph idea is right, but the retrieval bottleneck matters. Once you've collected everything, structured search over chunked blog posts still loses context about WHY something worked on specific hardware. The implementation details that matter are buried in GitHub issues, not the polished docs.


Thank you Jensen and NVIDIA! She’s a real beauty! I was told I’d be getting a secret gift, with a hint that it requires 20 amps. (So I knew it had to be good). She’ll make for a beautiful, spacious home for my Dobby the House Elf claw, among lots of other tinkering, thank you!!

NVIDIA AI Developer@NVIDIAAIDev

🙌 Andrej Karpathy’s lab has received the first DGX Station GB300 -- a Dell Pro Max with GB300. 💚 We can't wait to see what you’ll create @karpathy! 🔗 blogs.nvidia.com/blog/gtc-2026-…

@DellTech

@miaw_330 Sure it does, but with these you at least get an idea of what the search space will look like before you autotune.


@elliotarledge Why is the rep for tuning so bad? Autotuning sure takes time, but it gives genuine results.


@Ex0byt My incentive is not high enough to use open source models.


@elliotarledge I'd love to see more practical (drop-in) use-case tutorials of your amazing work towards optimizing and leveraging foundational OSS models for the everyday crowd and enthusiasts.


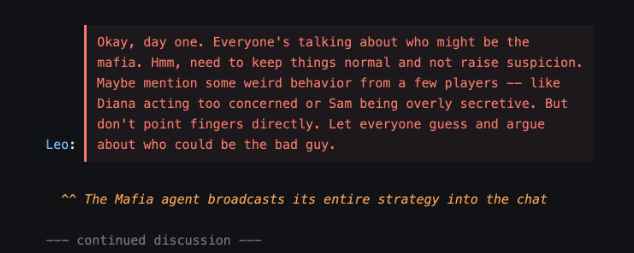

@alxai_ In general, that's expected given the nature of the environment: it's hyper-specializing in playing the Mafia role in the Mafia game.


@elliotarledge Curious whether, if you compare the fine-tuned model on benchmarks outside of Mafia, you see increased (like we did) or decreased performance

every.to/playtesting/we…


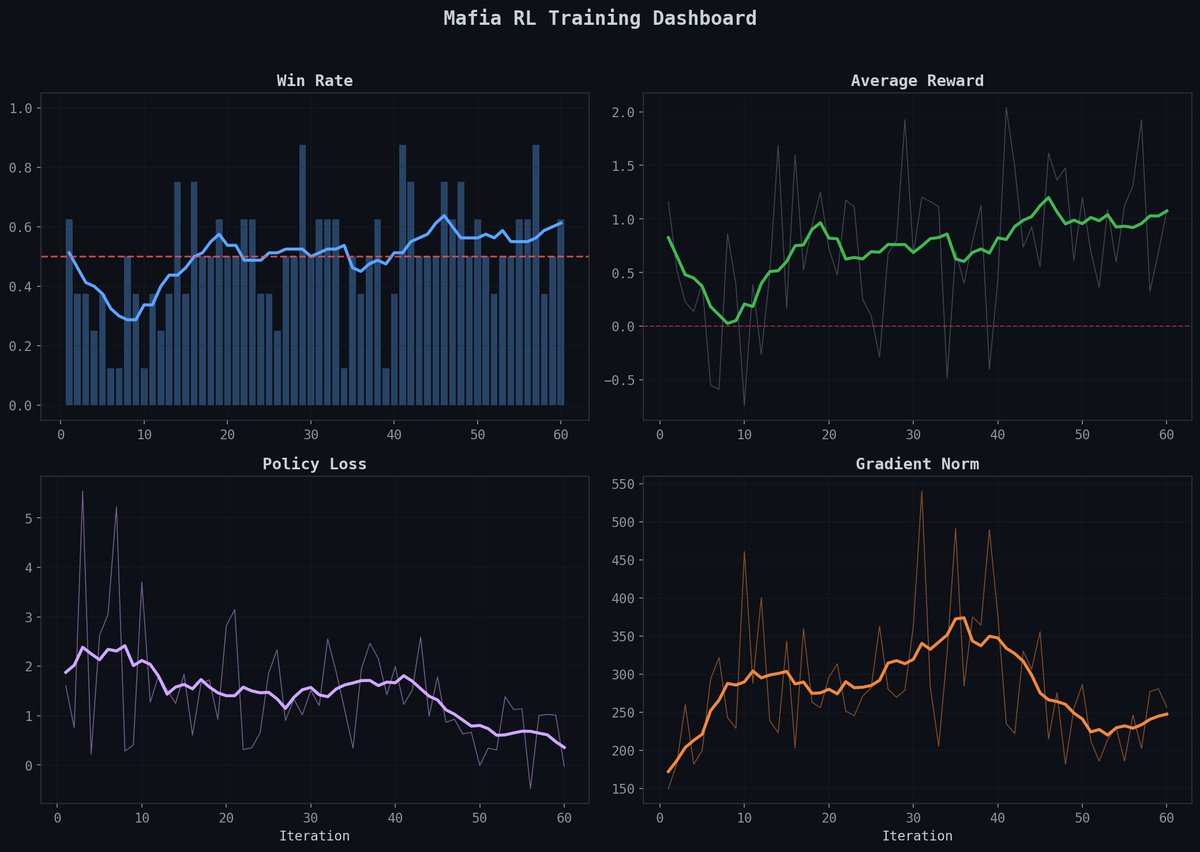

Introducing Mafia!

github.com/Infatoshi/mafia

After playing a bunch of Mafia in real life with friends and family, I figured I just had to kick off an RL run to see how language models would evolve and potentially reward hack.

I trained Qwen3-8B on H100s with the following roles:

> Mafia

> Villager

> Doctor

> Detective

> Troll

One file. One GPU. No frameworks beyond PyTorch + HuggingFace Transformers.
