EverMind

118 posts

@evermind

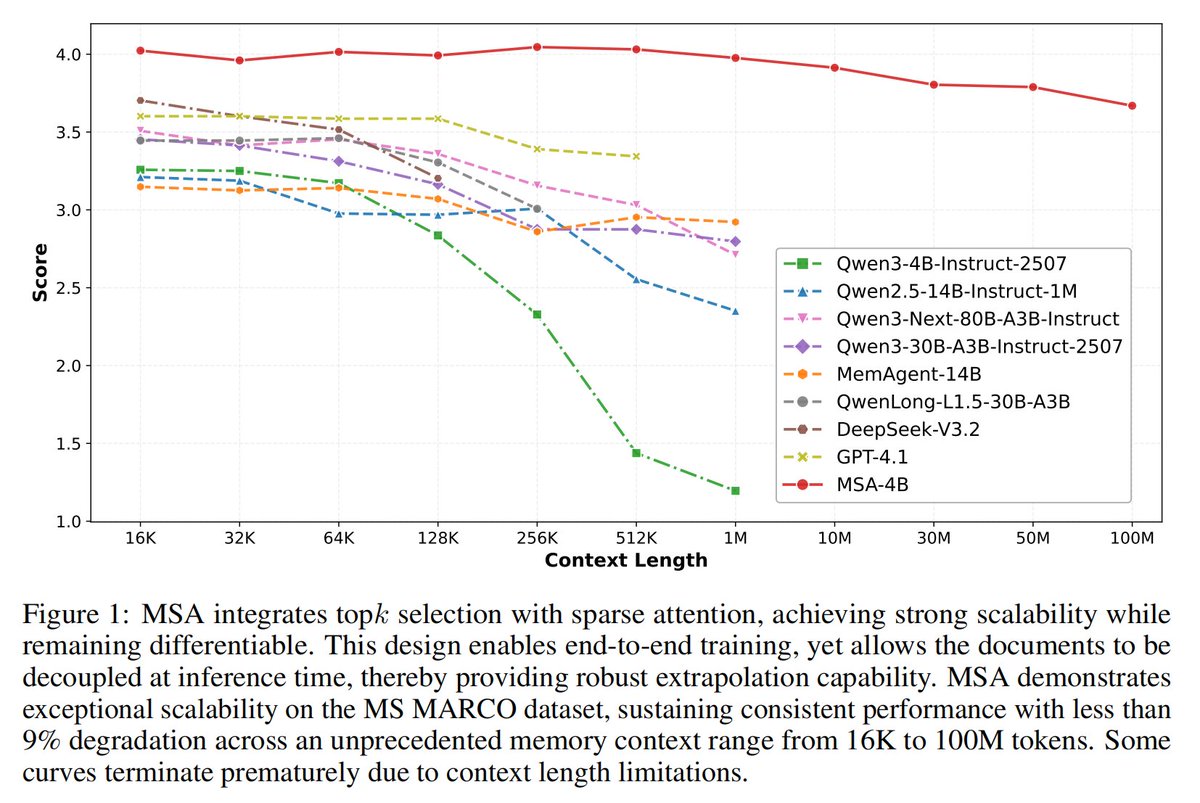

GitHub: https://t.co/yUIvUnLEUD

Discord: https://t.co/DgVdDw3x6B

Memory Sparse Attention: https://t.co/h5pIQhgh2j

Discover how our Agent Memory and Plugin integrate with @openclaw. 🦞 github.com/EverMind-AI/Ev…

🔥 Physical AI Day! Bay Area Devs, see you on March 19! Happening during GTC week, join RTE Dev Community & @TenFramework for a full day of brainstorming and building with two hardcore events seamlessly hosted at the same venue:

🌅 9:30 AM | Meetup: Conversing with the Physical World

Join industry leaders from @AgoraIO, @MiniMax_AI, @evermind, @RiseLink_X, HumanTouch, and Resonance Ventures @karal127 as we dive deep into the opportunities and future of Multi-Modal & Edge AI.

🛠 1:30 PM | Workshop: Hands-on Voice AI Hardware

Build and deploy a voice AI Agent using the TEN Framework. Here’s the best part 👉 We’re providing 40 Agora R1 dev kits on-site. Successfully run your code, and you get to take the hardware home for FREE!

💡 Note: Morning and afternoon sessions require separate registrations. Spots are highly limited, so act fast!

Links in the thread 👇

#PhysicalAI #EdgeAI #VoiceAI #GTC2026