Guy Van den Broeck

51 posts

Guy Van den Broeck

@guyvdb

Professor of Computer Science at UCLA @UCLAComSci; working on Artificial Intelligence

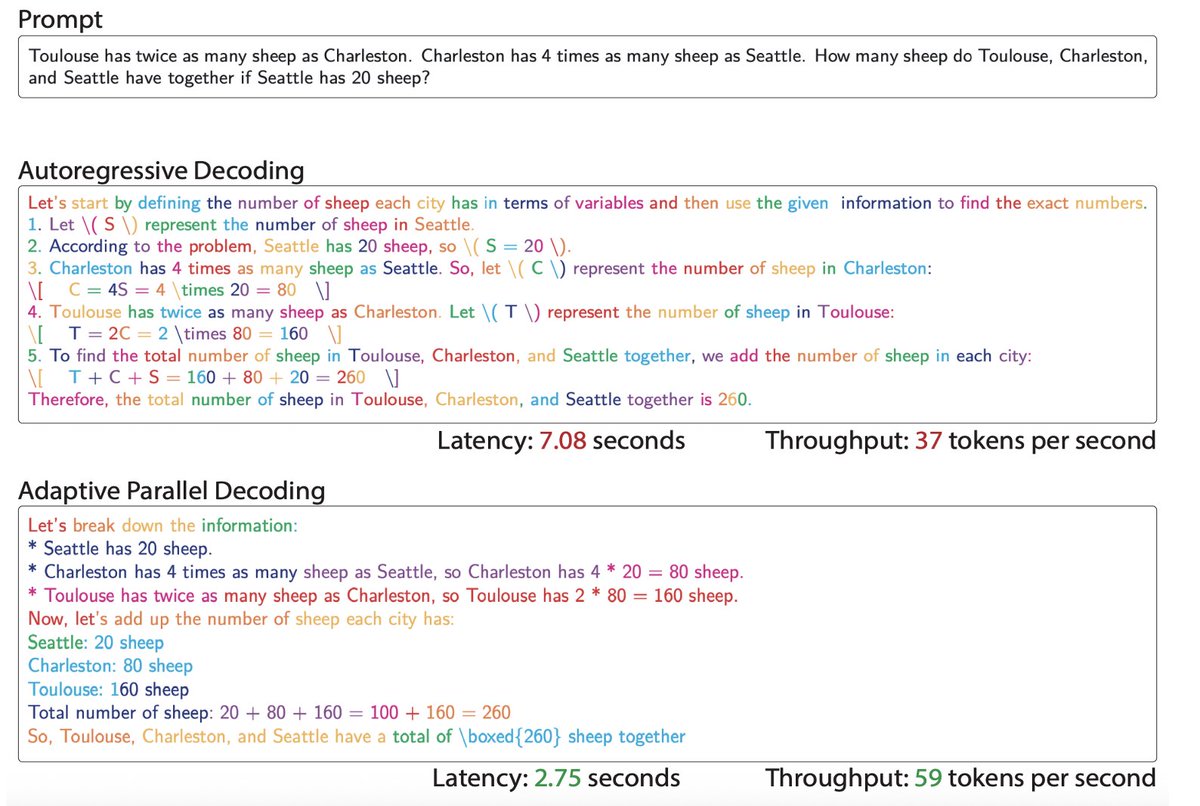

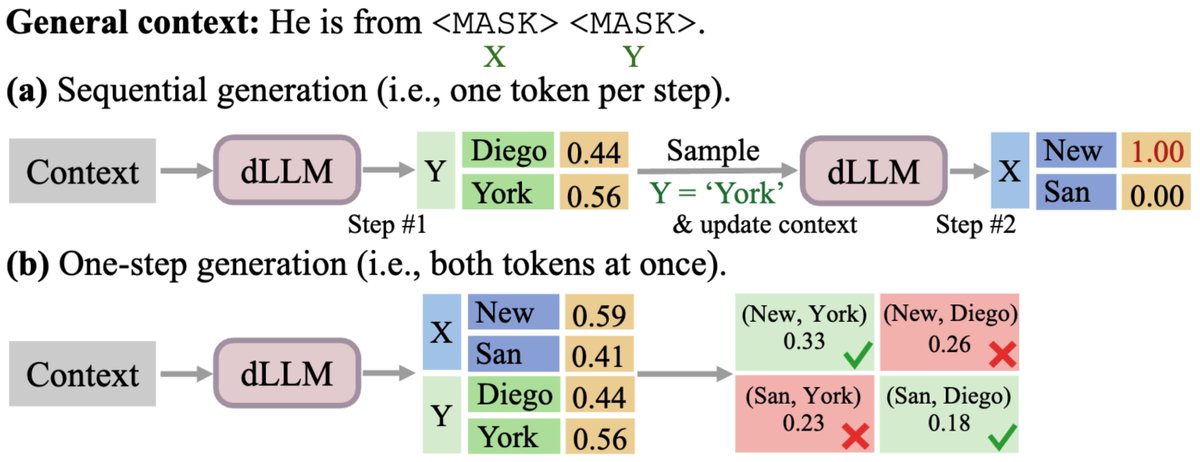

One of the biggest promises of Diffusion LLMs is parallel generation: predicting multiple tokens at once to bypass the sequential bottleneck of autoregressive models. However, parallel generation comes with a price. For example: Should the sentence “He is from [MASK] [MASK]” be filled with [New] [York] or [San] [Diego]? If a diffusion model predicts both at the exact same time, it assumes independence and may produce... [San] [York]. 🤦♂️ We argue this arises from a structural misspecification: models are restricted to fully factorized outputs because parameterizing the full joint distribution would require a prohibitively massive output head. This is the Factorization Barrier crippling parallel generation. Here is how we broke it with CoDD.

One of the biggest promises of Diffusion LLMs is parallel generation: predicting multiple tokens at once to bypass the sequential bottleneck of autoregressive models. However, parallel generation comes with a price. For example: Should the sentence “He is from [MASK] [MASK]” be filled with [New] [York] or [San] [Diego]? If a diffusion model predicts both at the exact same time, it assumes independence and may produce... [San] [York]. 🤦♂️ We argue this arises from a structural misspecification: models are restricted to fully factorized outputs because parameterizing the full joint distribution would require a prohibitively massive output head. This is the Factorization Barrier crippling parallel generation. Here is how we broke it with CoDD.

📢Feb 2 (Mon): Planned Diffusion 🙅Diffusion language models are capable of parallelizing text generation but can struggle with coherence in low time-step regimes. 💡Planned Diffusion unlocks a new axis of parallelism: Token-level parallelism ➡️ semantic parallelism ✍️Planned diffusion first generates a structured plan, then diffuses semantically independent spans of text in parallel according to the plan. This Monday, Daniel Israel (UCLA) (@danielmisrael) and Tian Jin (MIT) (@jintian) will discuss their exciting Planned Diffusion paper as joint first authors. Collaborators: Ellie Cheng (elliecheng.com), Guy Van den Broeck (@guyvdb), Aditya Grover (@adityagrover_), Suvinay Subramanian (@suvinay), Michael Carbin (@mcarbin) Paper link: arxiv.org/abs/2510.18087

"An hour of planning can save you 10 hours of doing." ✨📝 Planned Diffusion 📝 ✨ makes a plan before parallel dLLM generation. Planned Diffusion runs 1.2-1.8× faster than autoregressive and an order of magnitude faster than diffusion, while staying within 0.9–5% AR quality.

"An hour of planning can save you 10 hours of doing." ✨📝 Planned Diffusion 📝 ✨ makes a plan before parallel dLLM generation. Planned Diffusion runs 1.2-1.8× faster than autoregressive and an order of magnitude faster than diffusion, while staying within 0.9–5% AR quality.