David Hall

215 posts

@halldm2000

Researcher: Artificial Intelligence, Extreme Weather, Climate, Physics

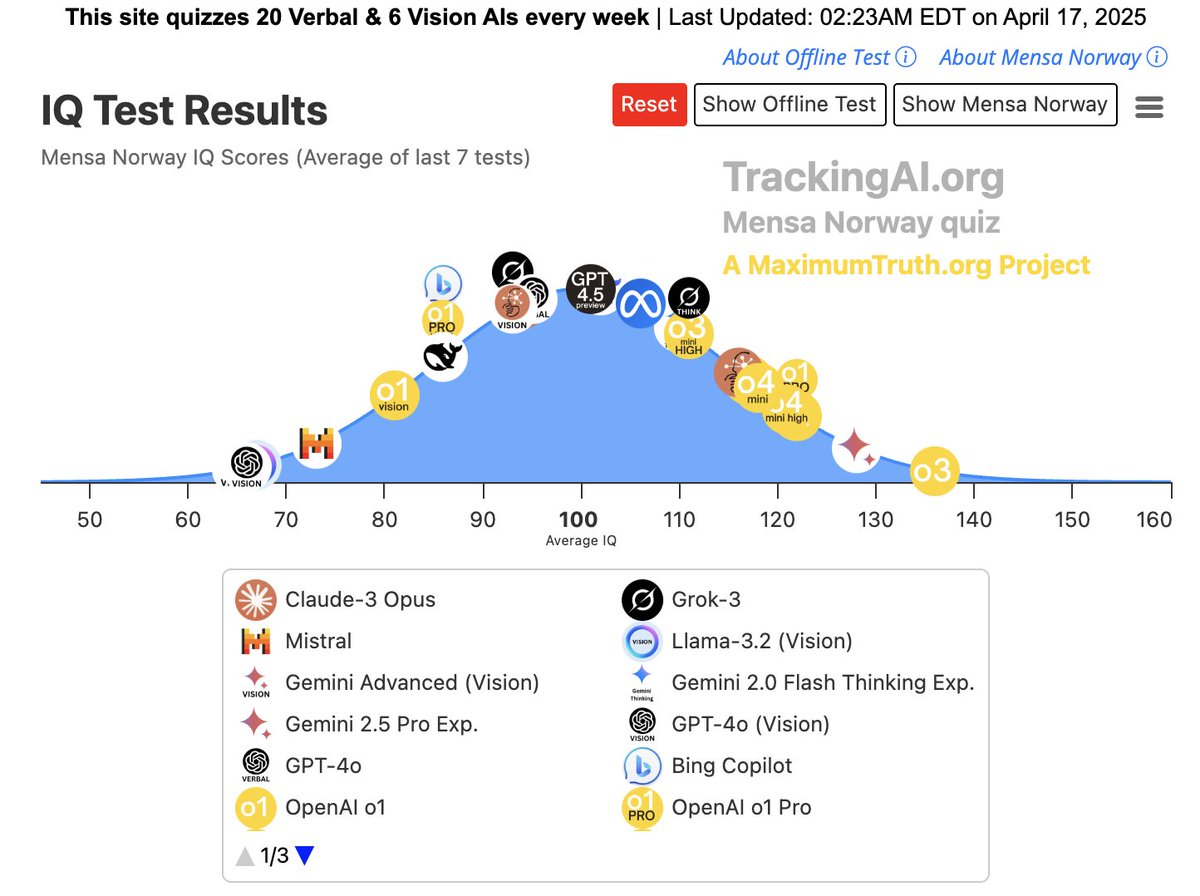

It's happening: OpenAI's new model jumped ***30 IQ points*** to 120 IQ. 120 IQ is higher than 9 in 10 humans.

"Pour one out for our 300,000-year reign as the smartest species on the planet. Was a great run." - @waitbutwhy

"Worried about AI taking over the world? You probably should be. That’s my new takeaway after testing OpenAI’s new model. I had become blasé about AI progress after my initial tests in February, because there was approximately zero IQ improvement since then. This week, that all changed."

Note: the author, @maximlott, administered another, contamination-free test, which showed a lower score (~100, the human average) but a similar leap forward in IQ. So regardless of which score you look at, the leap was HUGE, and the trend is obvious. There is not much time left.

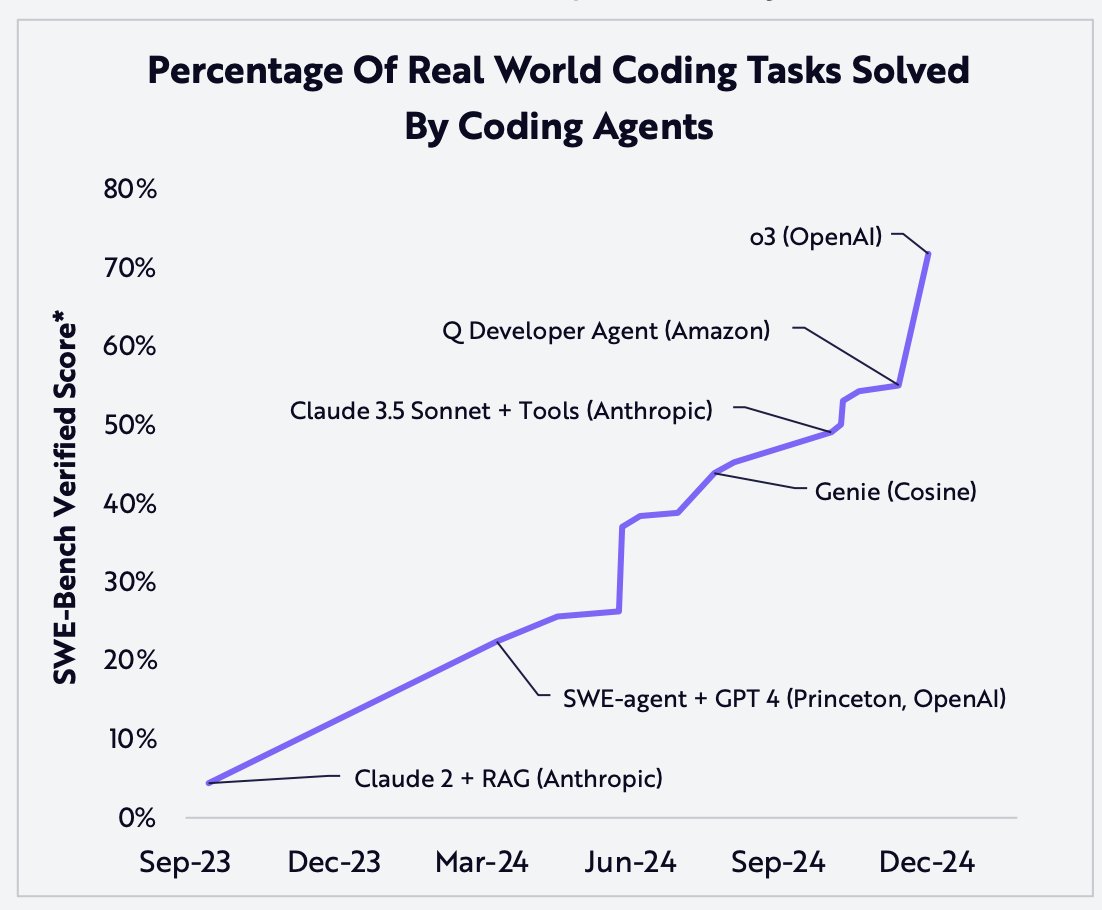

How can people see chart after chart like this and not realize the tsunami that is imminent? AGI companies are about to unleash 100 billion superhuman shoggoth coders onto the internet.

"Due to improved coding and research engineering performance, OpenAI o3-mini is the first model to reach Medium risk on Model Autonomy." This is the highest risk level at which OpenAI has said it will still release a model.

How has the cost to use LLMs changed over time? Our analysis shows that the price to reach a given benchmark score has fallen dramatically—between 9x and 900x per year, depending on the benchmark and score. 🧵