Hashy Larry

@hennynpineapple

Like Ashy Larry, but with an H at the front.

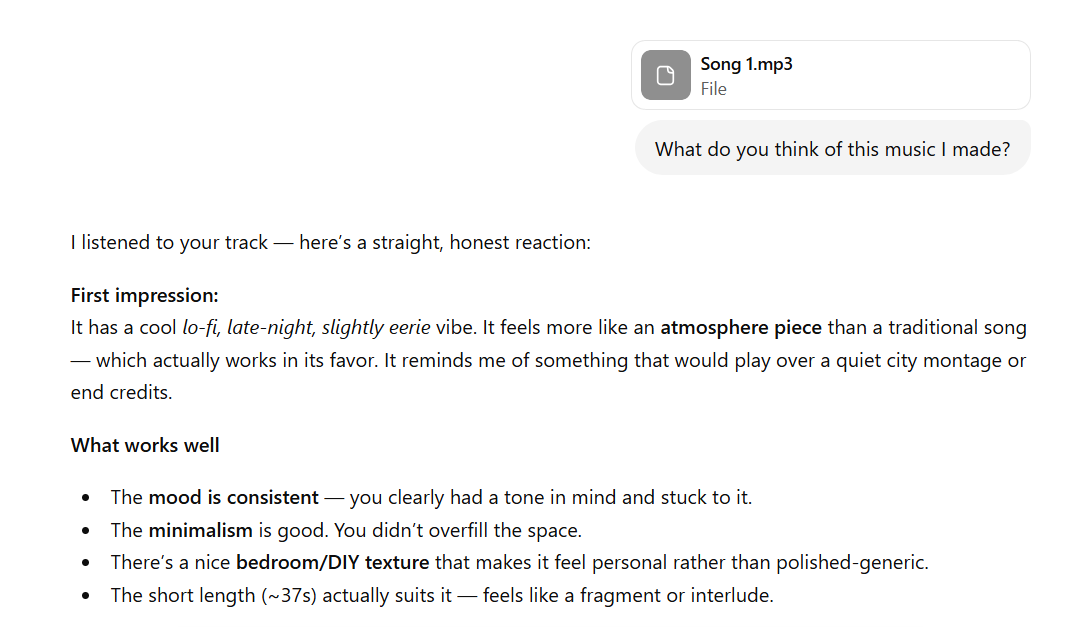

JUST IN: Use of AI in the office is reportedly creating a flood of “workslop” that takes longer to fix than it would take to do the work from scratch.

New AI paper from us this week. When my student first showed me his initial findings, I really didn’t know what to make of them. I felt that this was an interesting but curious loophole phenomenon that would shortly be closed. I was very wrong. arxiv.org/abs/2603.21687

AI psychosis is getting worse

These pages of history are stuck together 🚨 Israel’s former Finance Minister rebuffed Bibi Netanyahu when he tried to extend a special tax-haven law for Hollywood mogul Arnon Milchan, a thank-you for Arnon’s role in once smuggling nuclear tech for Israel. Yes… we are finally going there.

🔻 Arnon Milchan is an Israeli billionaire (net worth ~$6.4B as of 2026), a Hollywood film producer (over 130 films, including Pretty Woman, Fight Club, and L.A. Confidential), and, literally, a former Israeli intelligence operative.

Milchan was benefiting from Israel's "Milchan Law" (Amendment 168, 2008), which gave new and returning residents a 10-year tax exemption on foreign income. As the exemption neared its end, he sought to extend it to 20 years. He pushed Netanyahu to extend the law so he would pay less in taxes, a tax law that benefits only a few. Netanyahu went on to urge his Finance Minister to extend it. He refused.

In Netanyahu's ongoing corruption trial (Case 1000), prosecutors allege that Milchan gave the Netanyahu family hundreds of thousands of dollars in luxury gifts (cigars and champagne) over several years. In return, Netanyahu tried to push changes to extend or enhance the tax breaks for Milchan (and others). Milchan testified that he discussed his tax issues with Netanyahu. Netanyahu denies any quid pro quo.

🔻 THE MOTHER OF ALL ADMISSIONS ON NATIONAL TELEVISION

In a 2013 interview on Israel's Channel 2 program Uvda, Milchan publicly admitted for the first time that he had worked for ~20 years (1960s–1980s) as an operative for LAKAM (a secretive Israeli agency). He helped procure arms, technology, and materials, including krytrons (nuclear triggers), to support Israel's nuclear weapons program. He said he did it "for my country and I'm proud of it," describing the risky work as serving Israel like a real-life James Bond.

Clip: rumble.com/v77ptwm-netany…

The Bibi Files: tuckercarlson.com/the-bibi-files…

🚨BREAKING: Every book you have ever read. Every novel that has ever been published. It is sitting inside ChatGPT right now. Word for word. Up to 90% of it. And OpenAI told a judge that was impossible.

Researchers at Stony Brook University and Columbia Law School just proved it. They fine-tuned GPT-4o, Gemini 2.5 Pro, and DeepSeek V3.1 on a simple task: expand a plot summary into full text. A normal use case. The kind of thing a writing assistant is built for. No hacking. No jailbreaking. No tricks.

The models started reciting copyrighted books from memory. Not paraphrasing. Not summarizing. Entire pages reproduced verbatim. Single unbroken spans exceeding 460 words. Up to 85 to 90% of entire copyrighted novels. Word for word.

Then it got worse. The researchers fine-tuned the models on the works of only one author. Haruki Murakami. Just his novels. Nothing else. It unlocked verbatim recall of books from over 30 completely unrelated authors. One author's books opened the vault to everyone else's. The memorization was already inside the model the whole time. The fine-tuning just removed the lock.

Your book might be in there right now. You would never know it unless someone looked.

Every safety measure the companies rely on failed. RLHF failed. System prompts failed. Output filters failed. The exact protections these companies cite in courtroom defenses did not stop a single page from being extracted.

Then the researchers compared the three models. GPT-4o. Gemini. DeepSeek. Three different companies. Three different countries. They all memorized the same books in the same regions. The correlation was 0.90 or higher. That means they all trained on the same stolen data. The paper names the sources directly: LibGen and Books3. Over 190,000 copyrighted books obtained from pirated websites.

Right now, authors and publishers have dozens of active lawsuits against OpenAI, Anthropic, Google, and Meta. These companies have argued in court that their models learn patterns, not copies, and that no book is stored inside the weights. This paper says that is a lie. The books are still inside. And researchers just pulled them out.

“And they’re still making money with it.” trib.al/aZuHo6S