Charlie Hou

@hou_char

Research Scientist @ Google DeepMind

everyone goes through a process reward models phase

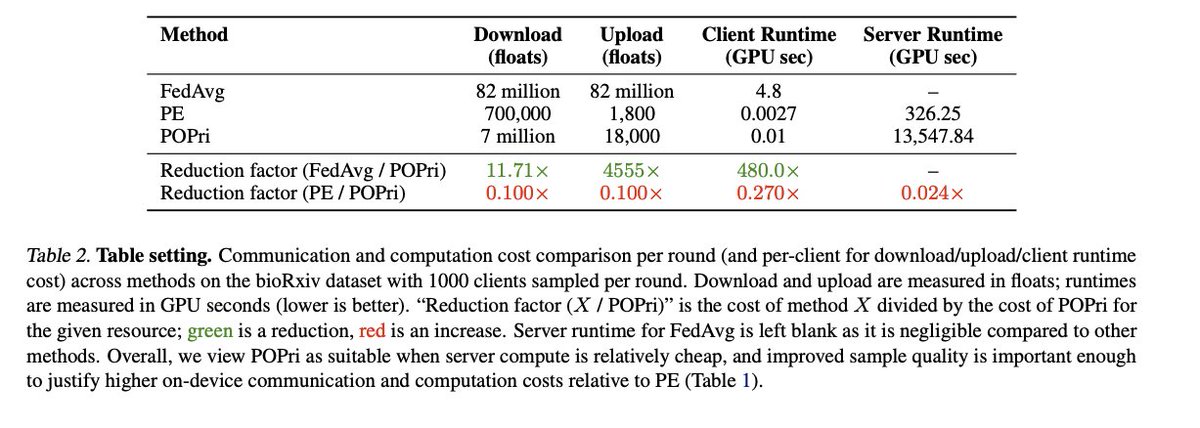

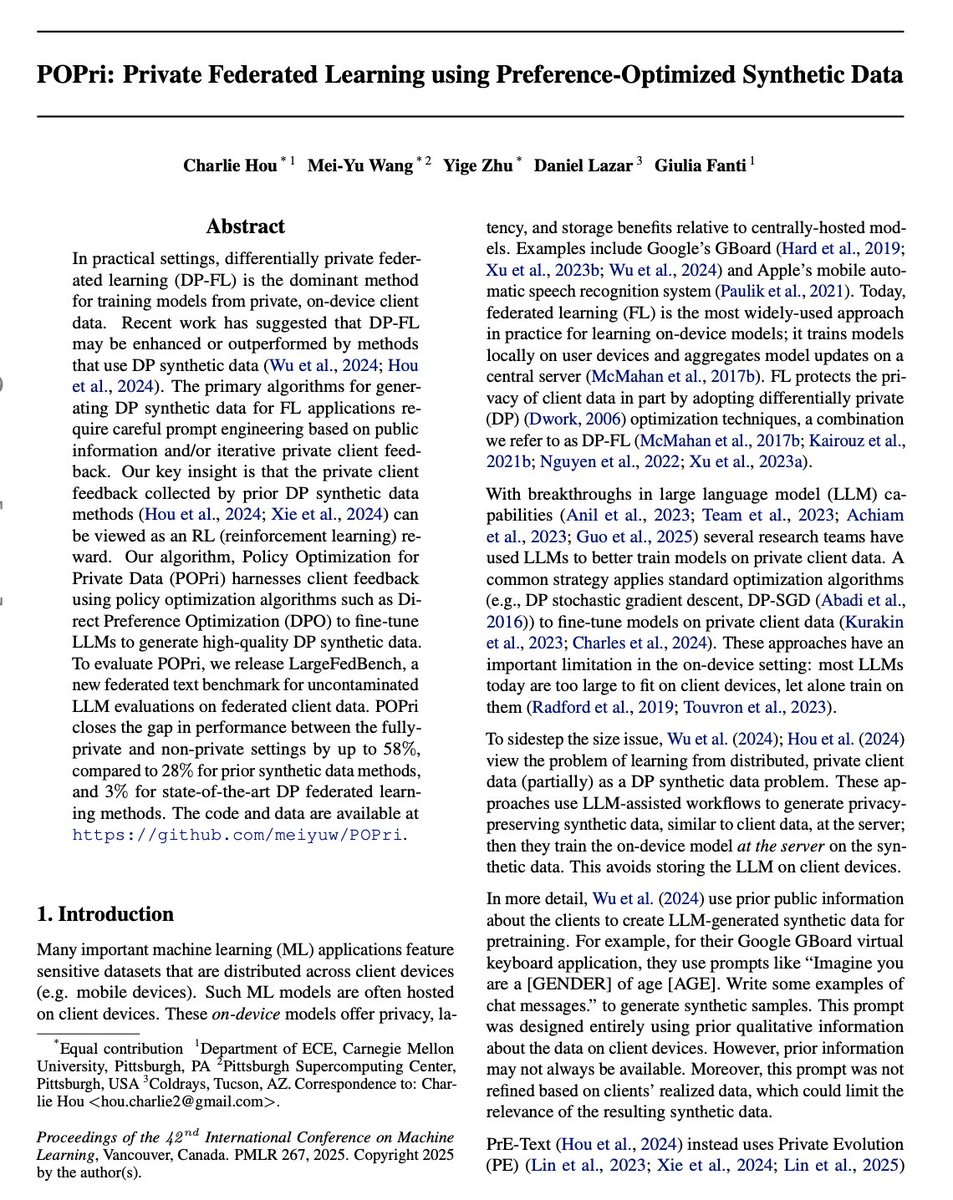

Gave a talk at @OpenAI on our work 🌸 POPri “Policy Optimization for Private Data”. POPri is a huge improvement in synthetic data generation under security+privacy constraints! Learn more:

I’m excited today to share Grapevine, a system that makes it really easy for AI agents to search company knowledge across Slack, Notion, the codebase, and more. The first app we’re launching with it is a company GPT that works remarkably well, better than any alternative today.