Jose P. Vasquez

@jpvasq

Economist. @LSEManagement. My work is about international trade, labo(u)r markets, and economic development. 🇨🇷

This is a great point, @arindube, and I am really guilty of not sharing more about what I have been up to on the teaching side of things, given that this is what they pay me for! Let's get back on track with a mega post.

At @LSEEcon, we've been running a series of structured experiments to figure out exactly how GenAI changes the production function of economics education. We moved past the "cheating" panic early (although we didn't really have one in our programmes) and started actively rebuilding our pedagogy around these tools. Here's what we're doing and what we're learning. By the way, we will be presenting our work at CTREE 2026 in Las Vegas in late May, if you are in town.

The AI Economics Professor

With Ronny Razin, we built a specialised, course-aligned AI tutor. The key idea Ronny had: the best way to verify whether a student actually understands a concept is to ask them to explain it interactively. Clearly this does not scale to the class sizes we have at LSE (Ronny teaches his course to 850 first-year students). But we can scale with AI!

The key pedagogical principle is that the chatbot uses a Socratic framework. It refuses to hand out final answers. Instead, students are prompted with an exercise, and the chatbot asks them to identify the next step in a mathematical or logical derivation themselves, guiding them through the reasoning rather than short-circuiting it. It adapts to the student's level, for example by clarifying concepts or notation if needed.

This gives students access to 24/7 personalised tutoring, levelling the playing field for those who might hesitate to speak up in small classes or office hours, and solving Bloom's "2-sigma problem" in economics education.

Notice that we didn't train the bot or fine-tune it on our course material. We just provided a system prompt embracing the Socratic approach, and the solutions to the exercise students had to solve. That's it. An off-the-shelf LLM (it was Gemini 2.5 Flash).
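To make the setup concrete, here is a minimal sketch of how a Socratic system prompt plus a reference solution could be assembled for an off-the-shelf chat model. The prompt wording, the function names (`build_system_prompt`, `build_messages`) and the generic message format are all illustrative assumptions, not the prompt or code actually used in the course.

```python
# Hypothetical sketch: the wording and names below are assumptions,
# not the prompt or code used in the course described above.
SOCRATIC_RULES = (
    "You are a tutor for a first-year economics course. "
    "Never state the final answer. "
    "Ask the student to identify the next step of the derivation themselves. "
    "Clarify concepts or notation when the student is stuck. "
    "Use the reference solution only to check the student's steps."
)


def build_system_prompt(exercise: str, solution: str) -> str:
    """Combine the Socratic rules with one exercise and its reference solution."""
    return (
        f"{SOCRATIC_RULES}\n\n"
        f"EXERCISE:\n{exercise.strip()}\n\n"
        f"REFERENCE SOLUTION (do not reveal):\n{solution.strip()}"
    )


def build_messages(exercise: str, solution: str, student_turns: list[str]) -> list[dict]:
    """Return a provider-agnostic message list: system prompt, then student turns."""
    messages = [{"role": "system", "content": build_system_prompt(exercise, solution)}]
    messages += [{"role": "user", "content": turn} for turn in student_turns]
    return messages
```

The resulting list would then be passed to whichever chat API is in use. Nothing here involves training or fine-tuning, which matches the point above: the only course-specific inputs are the exercise and its solution.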
We did run a small experiment for a game theory exercise, where students had to work out strictly dominated strategies, and pure and mixed strategy Nash equilibria. The feedback we received was overwhelmingly positive: students found it useful to work through the reasoning with the chatbot, and it helped them understand the material better. We are also in the process of establishing whether use of the chatbot improves marks in the final exam, although we don't have a full analysis yet. But I can say that this was a very good year for the distribution of marks in this course, way above the average of previous years. If this proves as good as it looks, the next step is to scale this to more courses, potentially expand to similar disciplines at LSE, and potentially expand to other universities. Stay tuned.

AI Feedback Experiment

Providing high-quality, scalable formative feedback is one of the hardest problems in our job. It's incredibly labour-intensive, and the result is that students often get too little feedback, too late to act on it. The main problem, again, is scale. Can we use AI to enhance our feedback process?

We did an experiment with @MichaelGmeiner2 in one of our MSc courses. Michael is a great teacher. In his Econometrics course, he teaches students how to write referee reports, and provides feedback to each of them on 5 submitted referee reports. We thought: why don't we provide two feedback reports for each submission, one AI-generated and one human-generated? This would allow us to evaluate how good the AI feedback is relative to human feedback (well, Michael's feedback, which is superhuman in my view, but ok). And so we did. We didn't tell students which was which, to avoid any kind of bias. And again, we just cooked up a prompt for the LLM to generate feedback on the referee report: we provided the AI with the paper to referee and the referee report submitted by the student, and nothing more.
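The design just described has two mechanical pieces: a prompt built from the paper and the student's report, and a blinded presentation of the two feedback texts. A minimal sketch of both, assuming hypothetical function names and prompt wording (the actual prompt and procedure are not given in this post):

```python
import random


def build_feedback_prompt(paper_text: str, report_text: str) -> str:
    """Assemble a prompt from the two inputs only. The wording is an assumption."""
    return (
        "Give formative feedback on the student's referee report below.\n\n"
        f"PAPER UNDER REVIEW:\n{paper_text}\n\n"
        f"STUDENT REFEREE REPORT:\n{report_text}"
    )


def blind_pair(human_feedback: str, ai_feedback: str, rng: random.Random):
    """Shuffle the two feedback texts and hide their source behind neutral labels.

    Returns (shown, key): `shown` is what the student sees, `key` records
    which label was human or AI, to be kept by the instructor only.
    """
    items = [("human", human_feedback), ("ai", ai_feedback)]
    rng.shuffle(items)  # random order, so label position carries no information
    labels = ["Feedback A", "Feedback B"]
    shown = {label: text for label, (_, text) in zip(labels, items)}
    key = {label: source for label, (source, _) in zip(labels, items)}
    return shown, key
```

Students would rate "Feedback A" and "Feedback B" without seeing `key`, and the ratings are matched back to their source afterwards.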
We found that students rated the AI-generated feedback as less useful than the human-generated feedback, although not by a lot. The main problem with the AI-generated feedback is that it is too generic, and does not address the specific TECHNICAL issues that the student may have missed in their report. It is also too positive, and does not provide the student with the critical feedback that they need to improve. In particular, students highlighted that the AI feedback did not enhance their critical thinking, and did not address methodological problems in the research article they were refereeing.

Some of these aspects can be addressed with a better prompt, and we are working on it. The technical and methodological issues can also be addressed by providing a summary of what the teacher expects students to criticise in the paper, although there may be additional challenges in this approach (what if the student finds something else to criticise that the teacher did not think of? It happens all the time).

Students also mentioned that they think the two pieces of feedback are complementary, and that they would be happier getting both than just one of them. This points in the direction of a hybrid approach, where AI is used to enhance the human feedback process rather than substitute for it. The caveat is, of course, that we haven't used the most recent models, and we didn't try mixture-of-experts and all the tricks in the book.

Teaching Python & RELAI Principles

Perhaps our biggest curriculum shift: with @JADHazell we pioneered teaching AI coding tools to first-year students. In the first-year macro course that Joe teaches, we introduce students to Python coding for economic analysis. This year, we decided to move in a different direction: since the advent of AI coding agents, we believe it is more important to be able to READ and ORGANISE code than to write it.
It is more important to be able to explain your intent to the AI coding agent, and verify that the intent has been reflected in the code, than to be able to write the code yourself and test it. But how can you teach students who have never seen a line of code to do that?

Introducing Reverse Engineering Learning with AI (RELAI). Start with a full snippet of Python code. The student is told to prompt the AI to explain what the code does. Once the student understands what the code does, they can ask about the syntax and the programming concepts behind the snippet. Then they can ask for a study plan for those concepts, if needed. Then they can ask the AI what would happen if they changed this line or that parameter. Then they can experiment themselves by changing the code, and debug with the help of the AI. Finally, the student can ask the AI to produce new code, based on what was learned and the new intent.

I call this the EXPLORE approach: Examine the code, eXplain what it does, Probe deeper, Link to economics, Output prediction, Recreate understanding, Extend with modification.

Once students are familiar with AI coding agents, they are assessed with a challenging coursework that Joe created. The assignment has a part that is difficult to do without AI, but should be feasible with AI. There are open-ended questions where students have to go beyond the simple repetition of what was learned in the course, possibly exploring new datasets and questions, etc. We think this approach can help integrate AI coding agents into the curriculum in a meaningful way, and help students develop a deeper understanding of coding tools in a faster and more efficient way. Coursework is on the way, so we will be able to evaluate the impact of this approach in the next few months. I personally believe RELAI can be adapted to other topics and subjects, and can become one of the ways we interact with AI when learning something new.
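For illustration only, here is the kind of starter snippet a RELAI session could begin from, paired with one example prompt per EXPLORE stage. The function, the numbers and the prompt wording are made up for this sketch and are not taken from the course materials.

```python
# Hypothetical starter snippet a student might be handed (values are made up).
def project_gdp(gdp0: float, growth_rate: float, years: int) -> list[float]:
    """Return GDP levels for years 0..years under constant annual growth."""
    return [gdp0 * (1 + growth_rate) ** t for t in range(years + 1)]


path = project_gdp(100.0, 0.02, 3)  # four values: year 0 through year 3

# One illustrative prompt per EXPLORE stage (wording is an assumption).
EXPLORE_STEPS = [
    ("Examine", "Here is a Python snippet. What are its parts?"),
    ("eXplain", "Explain line by line what this code does."),
    ("Probe", "What is a list comprehension, and why is range(years + 1) used?"),
    ("Link", "How does this relate to compound growth in macroeconomics?"),
    ("Output", "Before I run it: what will path contain if growth_rate is 0.05?"),
    ("Recreate", "Let me describe the logic in my own words. Is this right?"),
    ("Extend", "Modify the function so the growth rate can differ each year."),
]
```

The point of the ordering is that the student reads and predicts before modifying anything, and only asks for new code at the last stage.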
Read more about our approach here: python-ec1b1.vercel.app

AI as a productivity tool

This is where you can really go nuts. I have used AI to produce new teaching material for several workshops and courses: slides, assignments, exercises, etc. The last few exams were written with AI tools, first creating a series of questions with suggested solutions, and then choosing the most appropriate ones. I use a coding agent (@cursor_ai) with access to my teaching materials and past exams, so that it is aware of the content and style. You get a very good exam draft in minutes, and can edit, change questions, generate new ones, etc. It used to take me days to write a good exam; now it takes me a few hours in the afternoon.

I used Cursor to do deep research about a new course I wanted to design. I asked for topics, examples, current research in the field that I may not have been aware of, similar courses' syllabi, and in general what the state of the art in the field was. I got a very long list of topics that I could choose from to design my own course, based on my taste, my interests and what I think my students should know. I could generate different versions of the same course for different levels (UG, MSc and PhD).

Conclusion

We are still at the early stages of this journey. We are learning a lot, and we are still figuring out how to best use AI to enhance our teaching. One important thing you may have noticed is that we first define our pedagogical approach and then integrate AI tools to support it. The other principle should be: design not for the tools you have now, but for the ones you will have in a few months or years. If you have comments, or have been running similar experiments, I will be happy to hear from you.

I have read a ton from economists in my TL about use of AI in their research workflow. Much less about teaching. Would love to hear what folks' experience has been on that front. (Not problems of students using AI: I mean use of AI in teaching workflow, the good and the bad.)

I was writing about land reform in West Bengal last night and was curious if it had persistent effects on the ownership distribution. So I did what anyone would do, I* wrote an academic paper on it. Turns out, yes! 1/

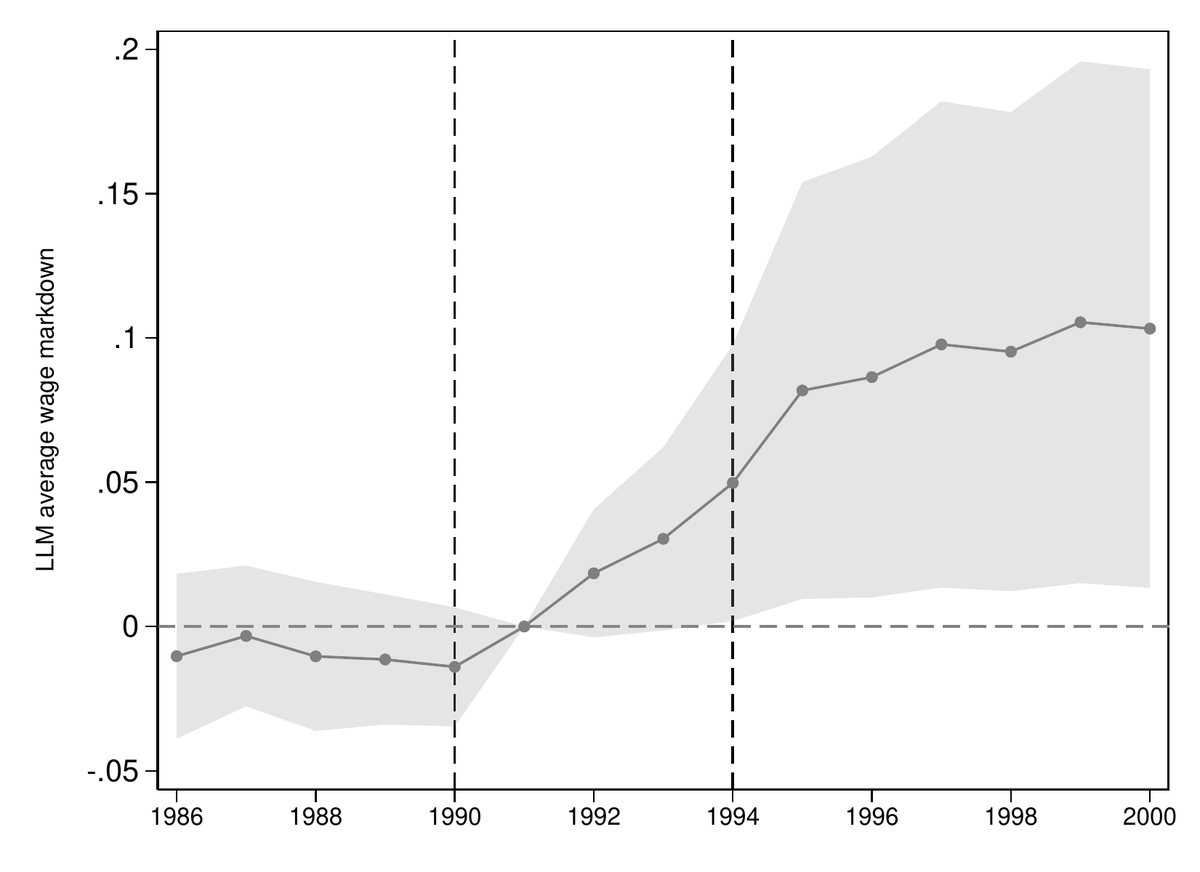

In settings with high informality, the gains from trade are significantly amplified by reductions in misallocation. During economic downturns, the informal sector acts as a buffer against unemployment but leads to larger aggregate real-income losses. econometricsociety.org/publications/e…

Hi #Econtwitter, I'd like to share the slides from the PhD Applied Econometrics course I just had the privilege to teach at @AreBerkeley. Regression & causality, selection on observables, panel data, IV, RDD: the usual topics, but hopefully in a modern way. github.com/borusyak/are213

The November 2025 issue of the American Economic Review (115, 11) is now available online at aeaweb.org/issues/825.