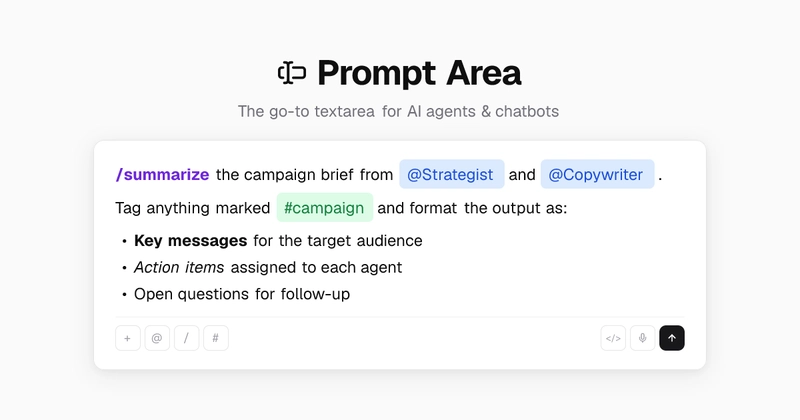

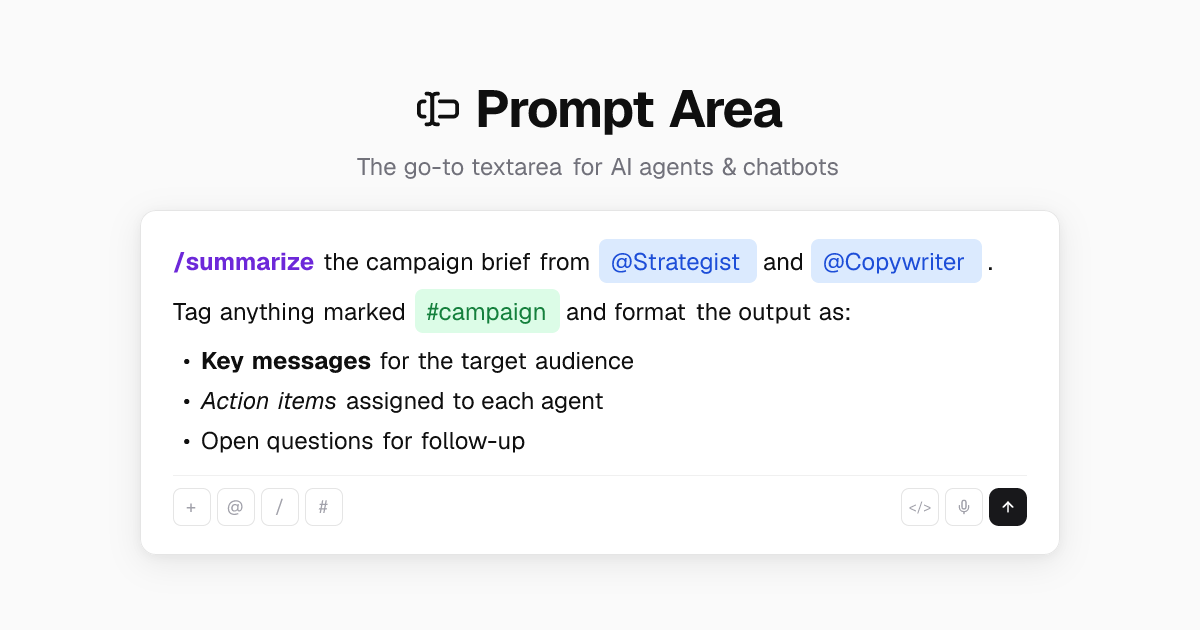

Prompt Area is a rich text input for React, built as a @shadcn registry component.

@ mentions, /commands, # tags, inline markdown, undo/redo, file attachments, and everything else you need for AI chat UIs.

Just React + your existing Tailwind setup.

prompt-area.com