Liam Liang Ding

485 posts

@liangdingNLP

building agentic ai @AlibabaGroup & ex-@AIstartup @JD_Corporate @TencentGlobal @Sydney_Uni. opinions are my own.

Advanced Machine Intelligence (AMI) is building a new breed of AI systems that understand the world, have persistent memory, can reason and plan, and are controllable and safe. We’ve raised a $1.03B (~€890M) round from global investors who believe in our vision of universally intelligent systems centered on world models. This round is co-led by Cathay Innovation, Greycroft, Hiro Capital, HV Capital, and Bezos Expeditions, along with other investors and angels across the world. We are a growing team of researchers and builders, operating in Paris, New York, Montreal and Singapore from day one. Read more: amilabs.xyz AMI - Real world. Real intelligence.

me stepping down. bye my beloved qwen.

average australian house owners

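The AI simultaneous interpretation experience has taken another big step forward. We have released Kotoba Tech's speech foundation model "Koto v1.0" in the 「同時通訳」 (Simultaneous Interpretation) app 🚀, bringing it closer to the experience of having a personal simultaneous interpreter in your pocket with just a smartphone. Koto is a generative AI model built on an end-to-end concept: it translates speech directly into speech without going through text, which lets it deliver very low-latency, high-accuracy simultaneous interpretation. This update improves English-to-Japanese performance in particular: on Kotoba's benchmark, accuracy improved by more than 50% and latency dropped by more than 1 second on average. With the 「同時通訳」 app, a single smartphone feels more and more like a dedicated simultaneous interpreter in your pocket. The app is free to try, and an additional 3-day free trial is available before the paid plan starts. We hope Koto can help you as your personal simultaneous interpreter.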

OpenClaw hype is kinda wild to me. It’s literally just a wrapper to Claude Code with more risk. The fact that it can run autonomously and call APIs is the same thing Claude Code can do… Not sure what I’m missing

❓ Do diffusion-LLMs truly generalize in agentic tasks? We reveal systematic failure modes in causal reasoning & tool use, and introduce DiffuAgent for comprehensive evaluation ⚡ 📄 arxiv.org/pdf/2601.12979 🔗 coldmist-lu.github.io/DiffuAgent/ #AgenticAI #LLM #EmbodiedAI #ToolUse

Today marks a moment I'll remember for the rest of my life. When we started Manus, few believed that general AI agents could work. We were told it was too early, too ambitious, too hard. But we kept building. Through the doubts, the setbacks, and the countless nights wondering if we were chasing the impossible. We weren't. This isn't just an acquisition. It's validation that the future we've been building toward is real, and it's arriving faster than anyone expected. But this is not the end. The era of AI that doesn't just talk, but acts, creates, and delivers, is only beginning. And now, we get to build it at a scale we never could have imagined. To everyone who believed in us before it was obvious: thank you. The best is yet to come.

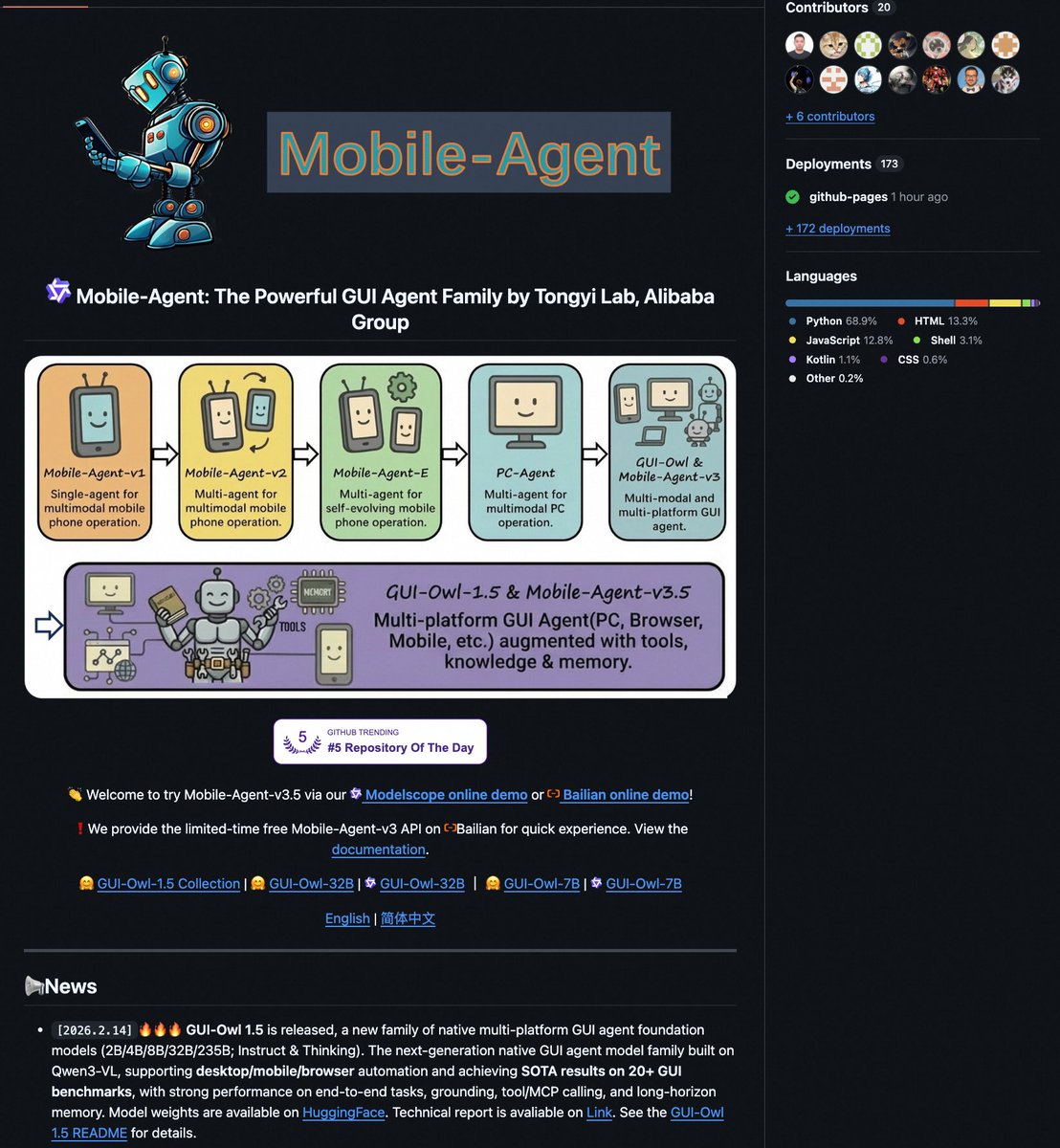

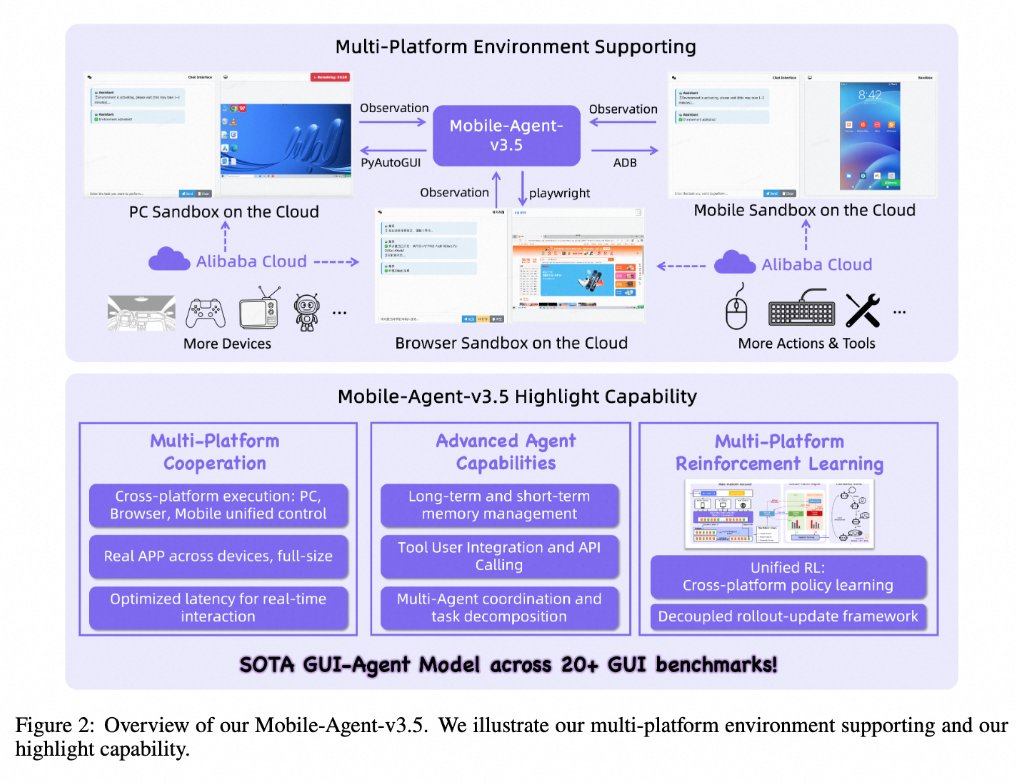

EcomBench: Towards Holistic Evaluation of Foundation Agents in E-commerce. EcomBench is a comprehensive benchmark designed to evaluate the performance of foundation agents in real-world e-commerce environments by incorporating genuine user demands and dynamic market conditions across multiple task categories and difficulty levels. Rui Min, Zile Qiao, Ze Xu, Jiawen Zhai, Wenyu Gao, Xuanzhong Chen, Haozhen Sun, Zhen Zhang, Xinyu Wang, Hong Zhou, Wenbiao Yin, Xuan Zhou, Yong Jiang, Haicheng Liu, Liang Ding @liangdingNLP, Ling Zou, Yi R. Fung @May_F1_, Yalong Li, Pengjun Xie. Tongyi Lab; Alibaba Group. arxiv.org/pdf/2512.08868

🚀 Launching DeepSeek-V3.2 & DeepSeek-V3.2-Speciale — Reasoning-first models built for agents! 🔹 DeepSeek-V3.2: Official successor to V3.2-Exp. Now live on App, Web & API. 🔹 DeepSeek-V3.2-Speciale: Pushing the boundaries of reasoning capabilities. API-only for now. 📄 Tech report: huggingface.co/deepseek-ai/De… 1/n