Mathurin Massias

377 posts

@mathusmassias

Researcher @INRIA_lyon, Ockham team. Teacher @Polytechnique and @ENSdeLyon. Machine Learning, Python and Optimization

Paris, France · Joined July 2018

181 Following · 2.2K Followers

Pinned Tweet

New paper on the generalization of Flow Matching arxiv.org/abs/2506.03719

🤯 Why does flow matching generalize? Did you know that the flow matching target you're trying to learn **can only generate training points**?

with @Qu3ntinB, Anne Gagneux & Rémi Emonet 👇👇👇

Mathurin Massias retweeted

🚨 🔬 PhD positions at Google DeepMind in France 🇫🇷

We are advertising Master Level Intern positions at Google DeepMind within our Frontier AI Unit.

These could lead to co-advised PhD positions with Google DeepMind and French academic institutions.

job-boards.greenhouse.io/deepmind/jobs/…

With @Qu3ntinB, we have one offer for a Marie Skłodowska-Curie postdoctoral fellowship at Inria, to work on generative models: inria.fr/en/marie-sklod…

Contact me if interested! RT appreciated <3

There is an Associate Professor position in CS at ENS Lyon, with potential integration in my team, starting in September 2026. DM me if interested!

Details at ens-lyon.fr/LIP/images/Pro…

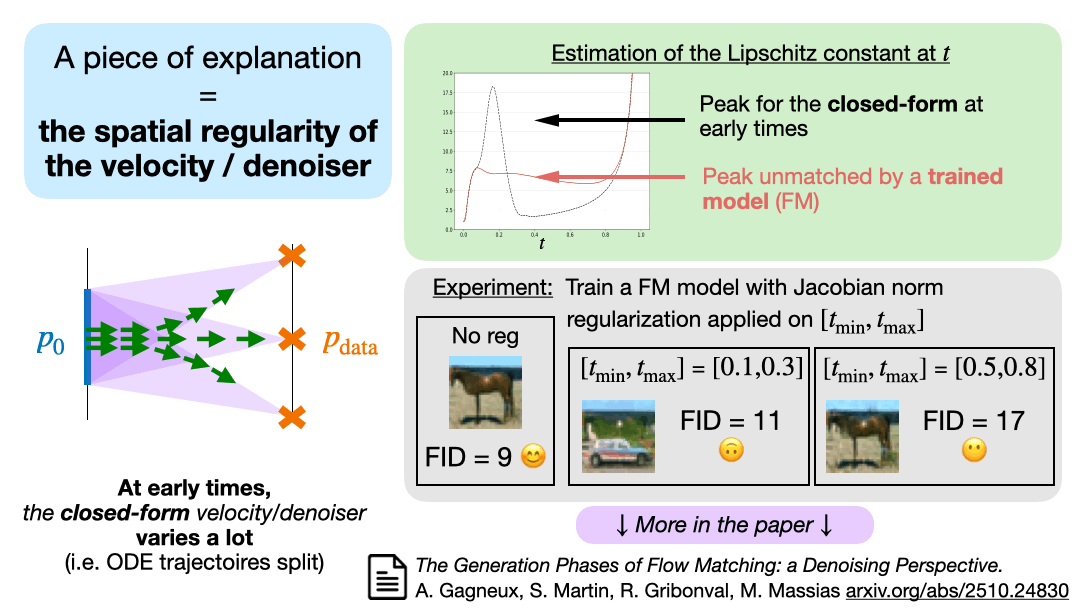

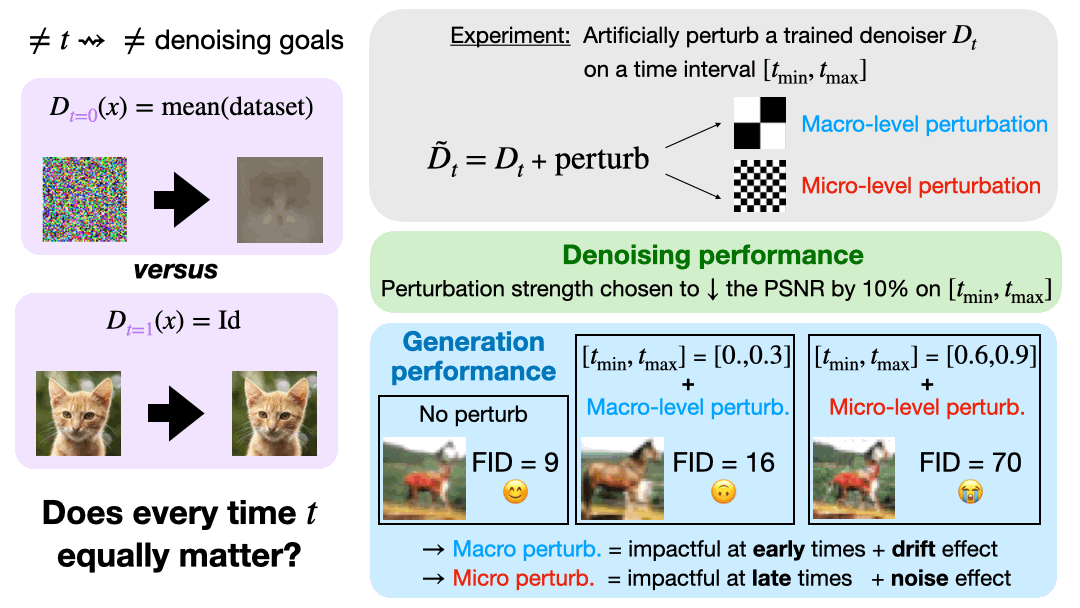

🌀New paper on the generation phases of Flow Matching arxiv.org/abs/2510.24830

Are FM & diffusion models nothing else than denoisers trained at every noise level?

In theory yes, *if trained optimally*. But in practice, do all noise levels matter equally?
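
One way to make the "denoiser" reading concrete is the identity below. It is a sketch that assumes the linear interpolation path between noise x_0 and data x_1, a convention the tweet does not state. The symbol D for the optimal denoiser is notation introduced here for illustration.

```latex
x_t = (1-t)\,x_0 + t\,x_1, \qquad
D(x, t) := \mathbb{E}[x_1 \mid x_t = x],
\\[4pt]
u^*(x, t) = \mathbb{E}[x_1 - x_0 \mid x_t = x]
          = \frac{D(x, t) - x}{1 - t}, \qquad t \in [0, 1).
```

The second equality follows from x_0 = (x_t - t x_1) / (1 - t), which gives x_1 - x_0 = (x_1 - x_t) / (1 - t). Under this assumption, knowing the optimal velocity at time t is equivalent to knowing the optimal denoiser at the corresponding noise level.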

Kickstarting our workshop on Flow Matching and Diffusion with a talk by Eric Vanden-Eijnden on how to optimize learning and sampling in Stochastic Interpolants!

Broadcast available at gdr-iasis.cnrs.fr/reunions/model…

Mathurin Massias retweeted

I am thrilled to announce that our work on the generalization of flow matching has been accepted to NeurIPS as an oral!!

See you in San Diego 😎

Mathurin Massias @mathusmassias

New paper on the generalization of Flow Matching arxiv.org/abs/2506.03719 🤯 Why does flow matching generalize? Did you know that the flow matching target you're trying to learn **can only generate training points**? with @Qu3ntinB, Anne Gagneux & Rémi Emonet 👇👇👇

One-day workshop on Diffusion models and Flow matching, October 24th at ENS Lyon

Registration and call for contributions (short talk and poster) are open at gdr-iasis.cnrs.fr/reunions/model…

@max_tensor @Qu3ntinB You don't even need to train a model to see that, on real data, the target is no longer stochastic as soon as t is not very small

See the animation in the second tweet & figures 1b and 2 in arxiv.org/pdf/2506.03719, which do not involve training a model

@mathusmassias @Qu3ntinB Does CFM really bury stochastic targets or did it just test a tiny U-Net on CIFAR-10 & CelebA-64? Run it on ImageNet-256 with augment ON & non-Gaussian noise before declaring noise “dead.”

@danijarh @Qu3ntinB The closed-form minimizer u* is singular at t=1 if x is not a training point, so this reasoning does not apply: following u*, you exactly generate training points (up to a finite set).

See e.g. Thm 4.6 in arxiv.org/abs/2410.23594
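
The claim can be checked numerically without training anything. The sketch below assumes the linear path x_t = (1 - t) x_0 + t x_1 with Gaussian x_0 and a uniform empirical distribution over a toy training set. The function names `closed_form_velocity` and `euler_sample` and the four 2D training points are hypothetical, chosen for illustration, and are not taken from the paper's code.

```python
import numpy as np

def closed_form_velocity(x, t, train):
    """Closed-form minimizer u*(x, t) for an empirical target distribution.

    Assumption: x_t = (1 - t) * x_0 + t * x_1, x_0 ~ N(0, I), x_1 uniform
    over the rows of `train`. Shapes: x is (m, d), train is (n, d), 0 <= t < 1.
    """
    # p_t(x | x_1 = x_i) is N(t * x_i, (1 - t)^2 I), hence softmax weights.
    diff = x[:, None, :] - t * train[None, :, :]              # (m, n, d)
    logits = -np.sum(diff**2, axis=-1) / (2 * (1 - t) ** 2)   # (m, n)
    logits -= logits.max(axis=1, keepdims=True)               # numerical stability
    w = np.exp(logits)
    w /= w.sum(axis=1, keepdims=True)
    x1_hat = w @ train                                        # E[x_1 | x_t = x]
    return (x1_hat - x) / (1 - t)                             # blows up as t -> 1

def euler_sample(x0, train, n_steps=100):
    """Integrate dx/dt = u*(x, t) with explicit Euler, stopping at t = 1."""
    x = x0.copy()
    dt = 1.0 / n_steps
    for k in range(n_steps):
        x = x + dt * closed_form_velocity(x, k * dt, train)
    return x

rng = np.random.default_rng(0)
train = np.array([[2.0, 0.0], [-2.0, 0.0], [0.0, 2.0], [0.0, -2.0]])  # toy data
x0 = rng.standard_normal((200, 2))
x1 = euler_sample(x0, train)

# Distance from each generated sample to its nearest training point.
dist = np.linalg.norm(x1[:, None, :] - train[None, :, :], axis=-1).min(axis=1)
frac_on_train = np.mean(dist < 1e-2)
print(f"fraction of samples landing on a training point: {frac_on_train:.2f}")
```

In this toy setting, many distinct x_0 end up on the same training point, which is the behaviour described in the reply above. The 1 / (1 - t) factor is where the singularity at t = 1 enters.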

Interesting! Since the flow is an ODE (cont. in time and space), paths can't branch or merge. Doesn't that imply that for every two generated datapoints, it's possible to generate one in between? And so the model can't purely memorize but needs to interpolate at least a bit? Curious to understand your intuition better

@DaniloJRezende See e.g. this potential explanation, which was all the rage a few weeks ago: x.com/johnjvastola/s…

John J. Vastola @johnjvastola

Diffusion models generalize *really* well: if you give them a million pictures of cats, they'll learn to generate reasonable-looking cats no one's ever seen before. But the weird thing is that no one knows why they work! In a theory paper accepted to #ICLR2025, I dug into this.

@DaniloJRezende Absolutely! Our point is more that 1) the velocity has a closed form (already observed by others), and 2) out of several hypotheses explaining why models don't learn it, we rule out "target stochasticity" (see second tweet)

@giladturok @Qu3ntinB As you can see in the first gif, following the closed-form minimizer, different x_0 get mapped to the same x_1 (which is one of the training points)

@mathusmassias @Qu3ntinB Naive q: In CFM we regress a neural velocity field to a known, true velocity field.

The true velocity field at time t is computed with sample x_0 from a base dist and x_1 from a target dist.

At inference time, if we sample a diff x_0, shouldn’t we automatically get a diff x_1?
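
For readers unfamiliar with the setup in this question, here is a minimal sketch of how one conditional flow matching regression batch could be built. It assumes the linear path x_t = (1 - t) x_0 + t x_1 with a standard Gaussian base distribution. The helper names `cfm_training_batch` and `cfm_loss` are hypothetical, and the random `data` array is only a stand-in for real training points.

```python
import numpy as np

def cfm_training_batch(x1, rng):
    """Build (x_t, t, target) for one conditional flow matching step.

    Assumption: linear path x_t = (1 - t) * x_0 + t * x_1, x_0 ~ N(0, I).
    x1 has shape (batch, d) and holds samples from the target distribution.
    """
    x0 = rng.standard_normal(x1.shape)           # sample from the base dist
    t = rng.uniform(size=(x1.shape[0], 1))       # one time per sample
    xt = (1 - t) * x0 + t * x1                   # point on the conditional path
    target = x1 - x0                             # conditional velocity
    return xt, t, target

def cfm_loss(pred, target):
    """Mean squared error between predicted and conditional velocities."""
    return np.mean(np.sum((pred - target) ** 2, axis=1))

rng = np.random.default_rng(0)
data = rng.standard_normal((8, 2)) + 3.0         # stand-in for training points
xt, t, target = cfm_training_batch(data, rng)
```

The per-sample target depends on the random pair (x_0, x_1), but the minimizer of this squared loss is its conditional expectation given x_t, which is a deterministic function of (x_t, t). That conditional expectation is the closed-form velocity discussed elsewhere in this thread.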
