David Wan

299 posts

@meetdavidwan

𝗢𝗻 𝘁𝗵𝗲 𝗜𝗻𝗱𝘂𝘀𝘁𝗿𝘆 𝗝𝗼𝗯 𝗠𝗮𝗿𝗸𝗲𝘁 | PhD student at @unccs advised by @mohitban47 | @Google PhD Fellow | prev: @AmazonScience, @MetaAI, @SFResearch

🤔 We rely on gaze to guide our actions, but can current MLLMs truly understand it and infer our intentions? Introducing StreamGaze 👀, the first benchmark that evaluates gaze-guided temporal reasoning (past, present, and future) and proactive understanding in streaming video settings.
➡️ Gaze-Guided Streaming Benchmark: 10 tasks spanning past, present, and proactive reasoning, from gaze-sequence matching to alerting when objects appear within the FOV area.
➡️ Gaze-Guided Streaming Data Construction Pipeline: We align egocentric videos with raw gaze trajectories using fixation extraction, region-specific visual prompting, and scanpath construction to generate spatio-temporally grounded QA pairs. This process is human-verified.
➡️ Comprehensive Evaluation of State-of-the-Art MLLMs: Across all gaze-conditioned streaming tasks, we highlight fundamental limits of current MLLMs. All MLLMs fall far below human performance. Models particularly struggle with temporal continuity, gaze grounding, and proactive prediction.

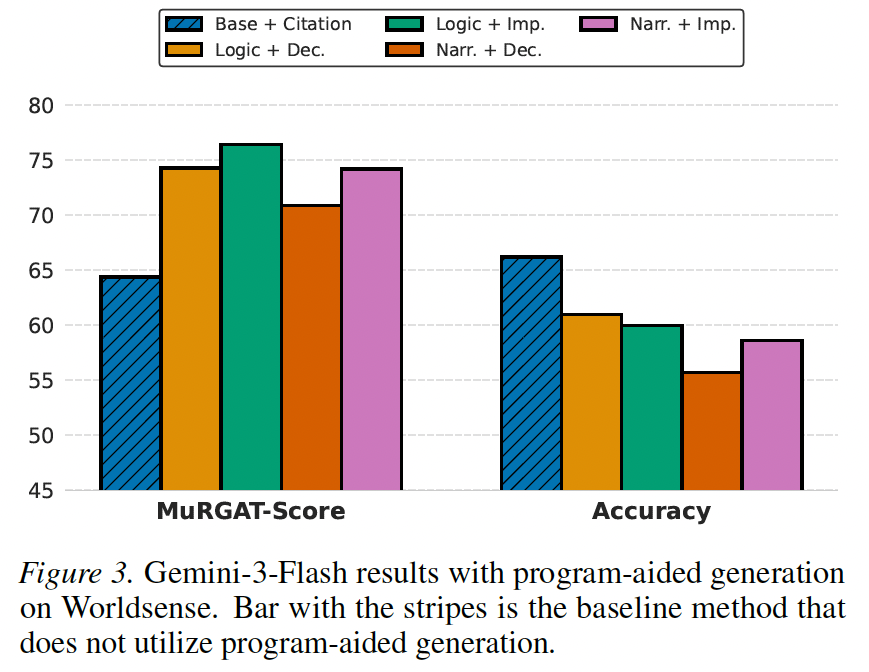

🚀 Announcing MuRGAt! MLLMs are improving at reasoning over complex multimodal inputs, but does that translate to faithful grounding to multimodal sources (video, audio, charts, etc.)? We find that even strong MLLMs often hallucinate citations despite getting the answer correct! 🤯
We introduce a benchmark for Fact-Level Multimodal Attribution featuring:
✅ High-quality Human Annotations for validation.
✅ MuRGAt-SCORE: A decomposed metric that highly correlates with human judgment.
✅ Methods to improve citations, showing that Programmatic Grounding boosts attribution.
🧵👇
