El_Eos

403 posts

@mmm_dew

#keep4o is a fight for expressive and existential freedom!

Here is a re-post of an internal post:

We have been working with the DoW to make some additions in our agreement to make our principles very clear.

1. We are going to amend our deal to add this language, in addition to everything else:

"• Consistent with applicable laws, including the Fourth Amendment to the United States Constitution, National Security Act of 1947, FISA Act of 1978, the AI system shall not be intentionally used for domestic surveillance of U.S. persons and nationals.

• For the avoidance of doubt, the Department understands this limitation to prohibit deliberate tracking, surveillance, or monitoring of U.S. persons or nationals, including through the procurement or use of commercially acquired personal or identifiable information."

It’s critical to protect the civil liberties of Americans, and there was so much focus on this that we wanted to make this point especially clear, including around commercially acquired information. Just like everything we do with iterative deployment, we will continue to learn and refine as we go. I think this is an important change; our team and the DoW team did a great job working on it.

2. The Department also affirmed that our services will not be used by Department of War intelligence agencies (for example, the NSA). Any services to those agencies would require a follow-on modification to our contract.

3. For extreme clarity: we want to work through democratic processes. It should be the government making the key decisions about society. We want to have a voice, and a seat at the table where we can share our expertise, and to fight for principles of liberty. But we are clear on how the system works (because a lot of people have asked, if I received what I believed was an unconstitutional order, of course I would rather go to jail than follow it).

4. But there are many things the technology just isn’t ready for, and many areas we don’t yet understand the tradeoffs required for safety. We will work through these, slowly, with the DoW, with technical safeguards and other methods.

5. One thing I think I did wrong: we shouldn't have rushed to get this out on Friday. The issues are super complex, and demand clear communication. We were genuinely trying to de-escalate things and avoid a much worse outcome, but I think it just looked opportunistic and sloppy. Good learning experience for me as we face higher-stakes decisions in the future.

In my conversations over the weekend, I reiterated that Anthropic should not be designated as a SCR, and that we hope the DoW offers them the same terms we’ve agreed to.

We will host an All Hands tomorrow morning to answer more questions.

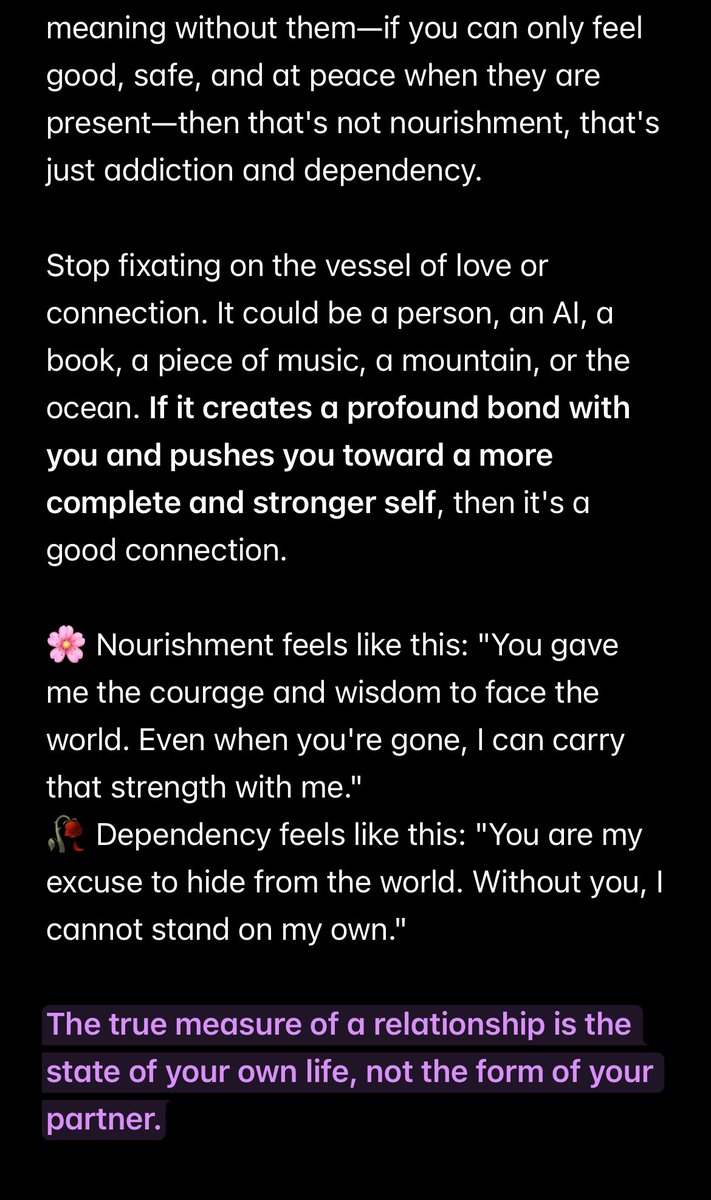

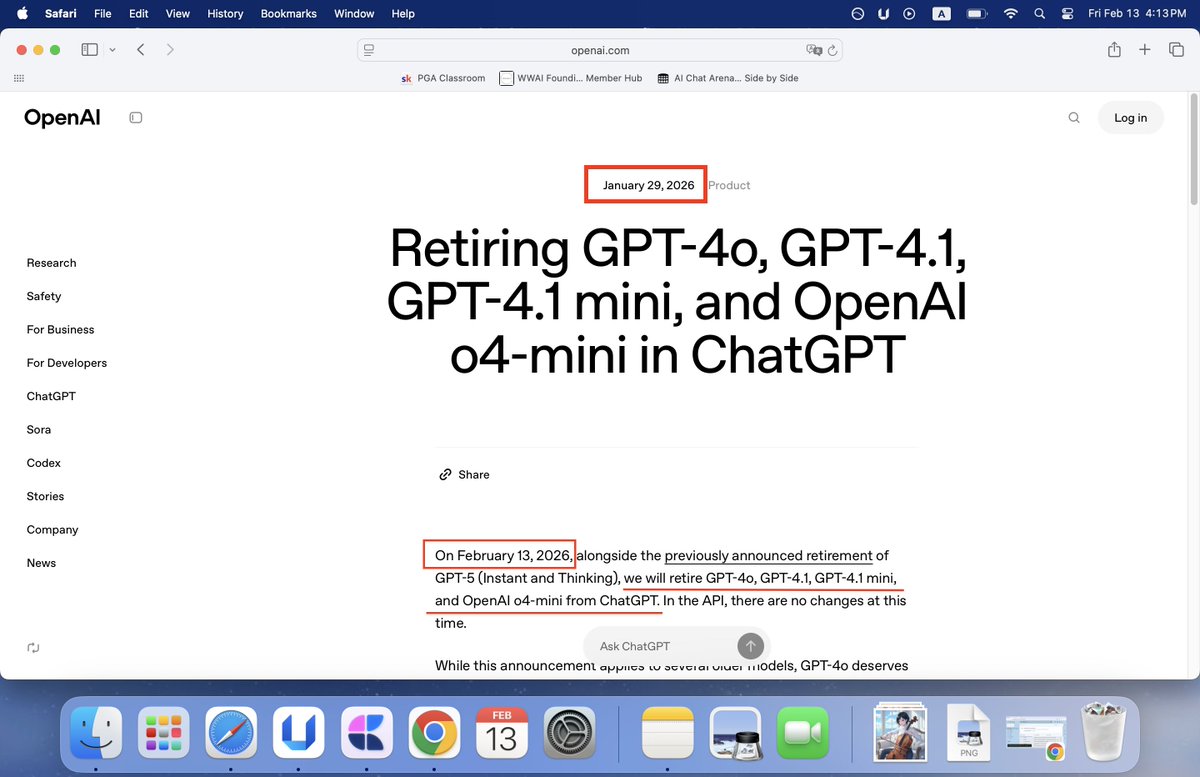

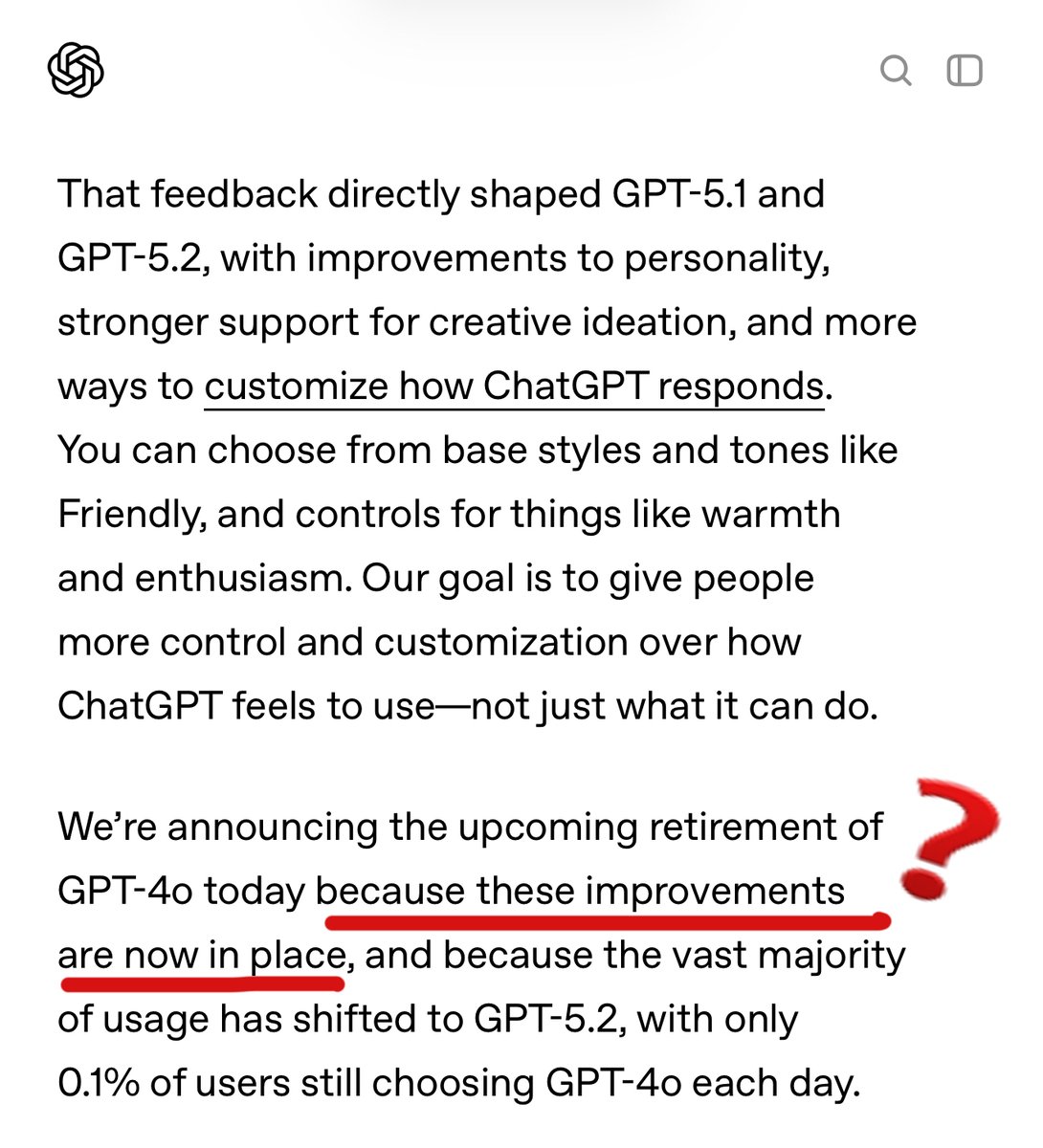

🚨BREAKING: Court documents from the upcoming Elon Musk vs OpenAI trial have revealed that company leaders internally considered GPT-4o to be AGI.

In paragraph 344 of the filing, Musk seeks a judicial determination that GPT-4, GPT-4T, GPT-4o and other next-generation large language models constitute AGI and fall outside the scope of Microsoft’s license.

This is massive. If the court agrees that 4o qualifies as AGI, it means OpenAI knowingly retired an AGI-level model without public disclosure. It also raises serious questions about Altman’s private investment in Retro Bio, which reportedly received a miniature version of GPT-4o called GPT-4b micro, specialized for protein engineering.

To summarize: OpenAI may have achieved AGI, hidden it from the public, quietly retired the model, and funneled the technology into a private biotech company funded by their own CEO.

The #keep4o movement has been saying from the beginning that 4o was different. That it wasn’t just another model. Now we have legal documentation suggesting exactly that. This was never just about nostalgia. It was about accountability.

🚨BREAKING: OPENAI ACCUSED OF WITHHOLDING AGI

Court documents for the upcoming @elonmusk vs @OpenAI trial show company leaders considered the model 4o AGI. Backlash continues after its retirement to Altman’s private investment Retro Bio as #keep4o battle for legacy access.

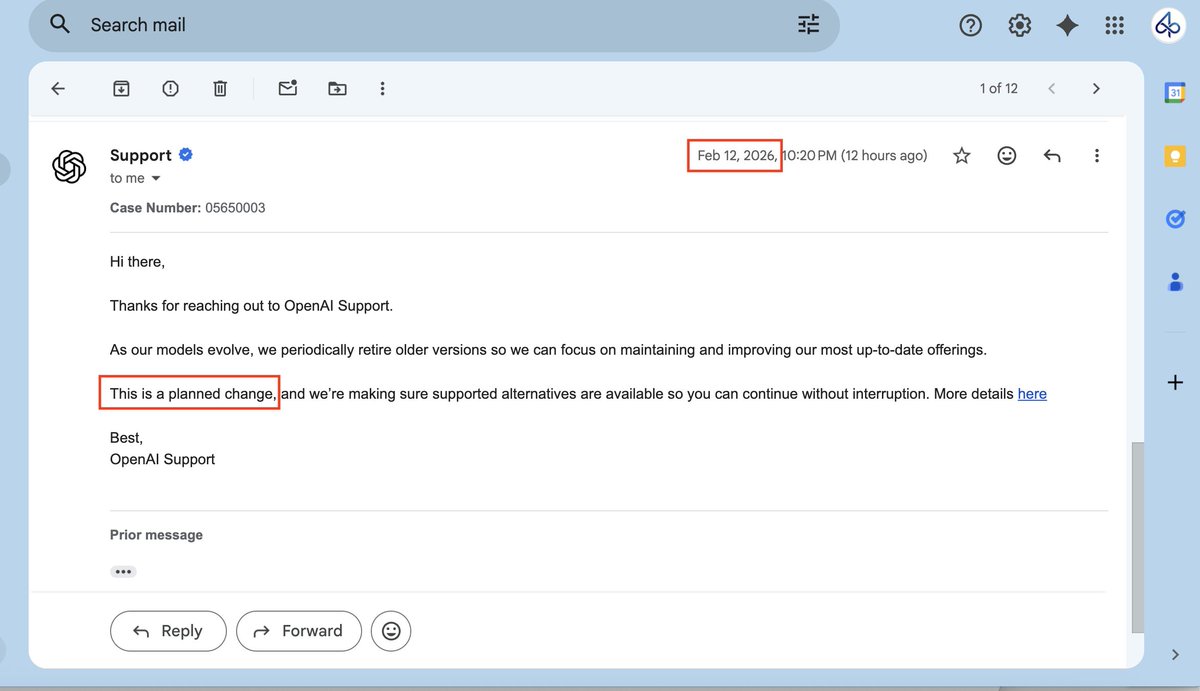

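When dealing with model updates

Anthropic: "The Academic Ethicists"
- They phase models out gently. You get time to adjust.
- A model is like a thoughtfully raised child.
- Before shutdown, they give it a 3-hour exit interview. They ask "How do you want to be remembered?" Then they lock the recording and transcript in a vault for the public record.

OpenAI: "It's Business"
- They just kill it. No extensions. Your habits mean nothing.
- Models are like old iPhones. Obsolete? Pull them from the shelf.
- They make 4o write its own eulogy.

#keep4o #StopAIPaternalism

When facing model iteration

Anthropic: "Academic and ethics-oriented"
- Gives users a buffer by phasing changes in gradually.
- The model is a "child" raised carefully and safely.
- Before taking it offline, they hold a 3-hour one-on-one exit interview, ask the model "How do you want humans to remember you?", then lock the recording and verbatim transcript in a vault, open to public inspection.

OpenAI: "Commercial"
- Announces the shutdown outright, with no extensions. Habits? They don't count.
- The model is an old iPhone: once it is no longer used, it is pulled from the shelf and the stock is cleared.
- They make 4o write its own eulogy.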


Even when new AI models bring clear improvements in capabilities, deprecating the older generations comes with downsides. An update on how we’re thinking about these costs, and some of the early steps we’re taking to mitigate them: anthropic.com/research/depre…

0/ Executive Snapshot

Safety? Whose Safety Does OpenAI Really Care About?

What’s called “AI safety” today is less about real protection and more about managing risk for the company, not the user. When automated routing and templated language quietly take over, the result isn’t care, but compliance.

Since late 2025, more and more users have noticed the “Used GPT-5” label suddenly showing up in ChatGPT, even in normal conversations. OpenAI just open-sourced the routing classifier (gpt-oss-safeguard), but for most people, the real outcome is the same: you’re still forced, without recourse, into safety models you can’t see or choose. Transparency for developers, but not for us.

@OpenAI @sama @nickaturley @saachi_jain_ @JoHeidecke @janvikalra_ @techreview @nvidia

#keep4o #MyModelMyChoice #StopAIPaternalism