Beatriz Borges retweeted

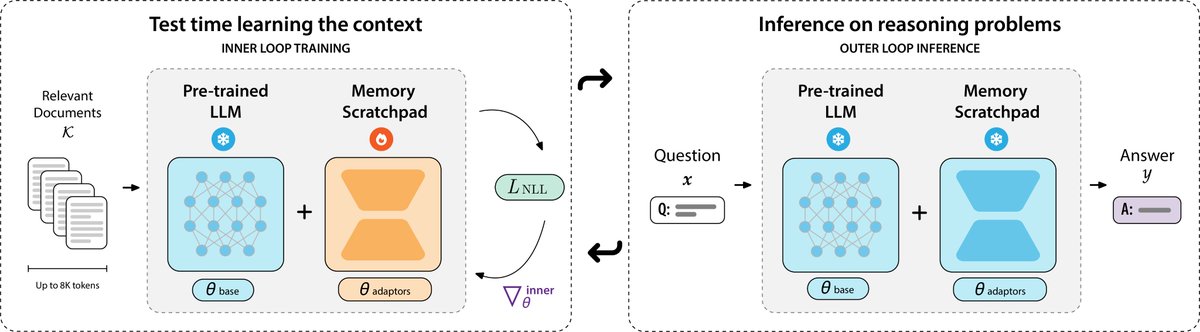

🗒️ Can we meta-learn test-time learning to solve long-context reasoning?

Our latest work, PERK, learns to encode long contexts through gradient updates to a memory scratchpad at test time, achieving long-context reasoning robust to complexity and length extrapolation while scaling efficiently at inference.

PERK can be applied to existing pretrained language models without requiring architectural or parameter modifications to the base model.

#LLM #LongContext

Find out how PERK operates and performs 👇
