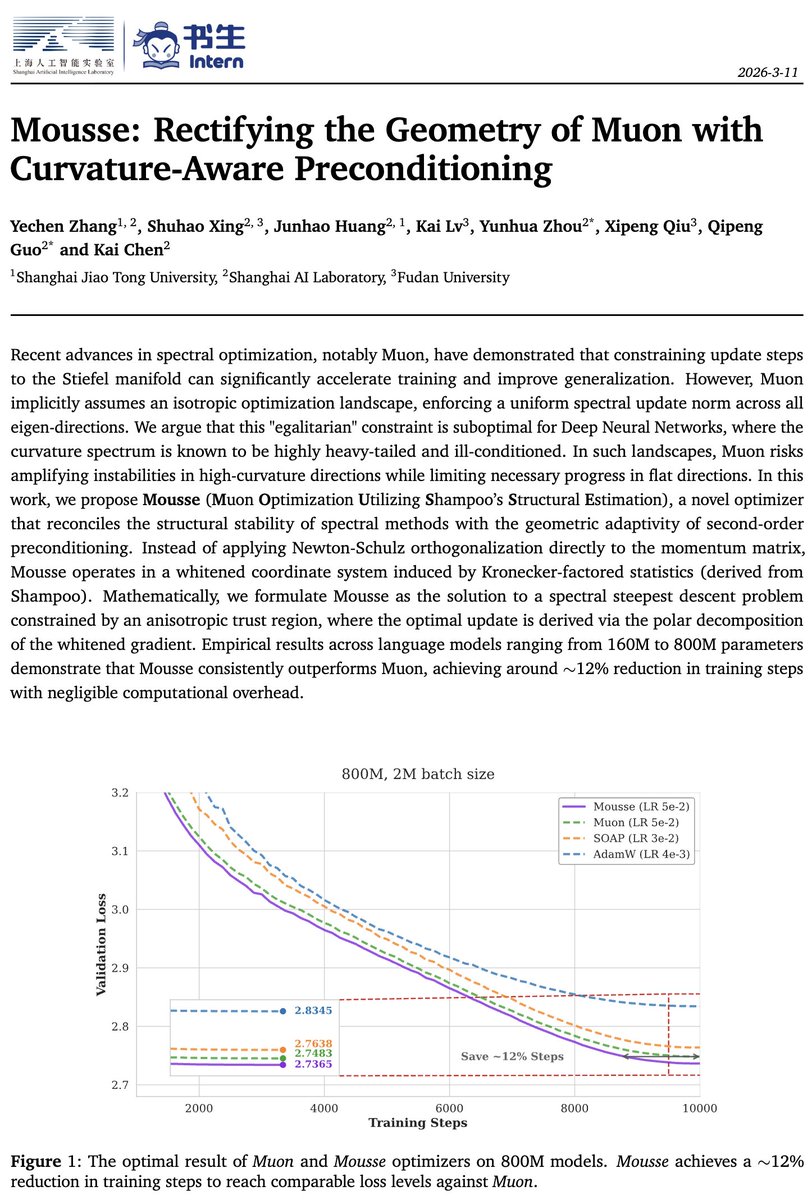

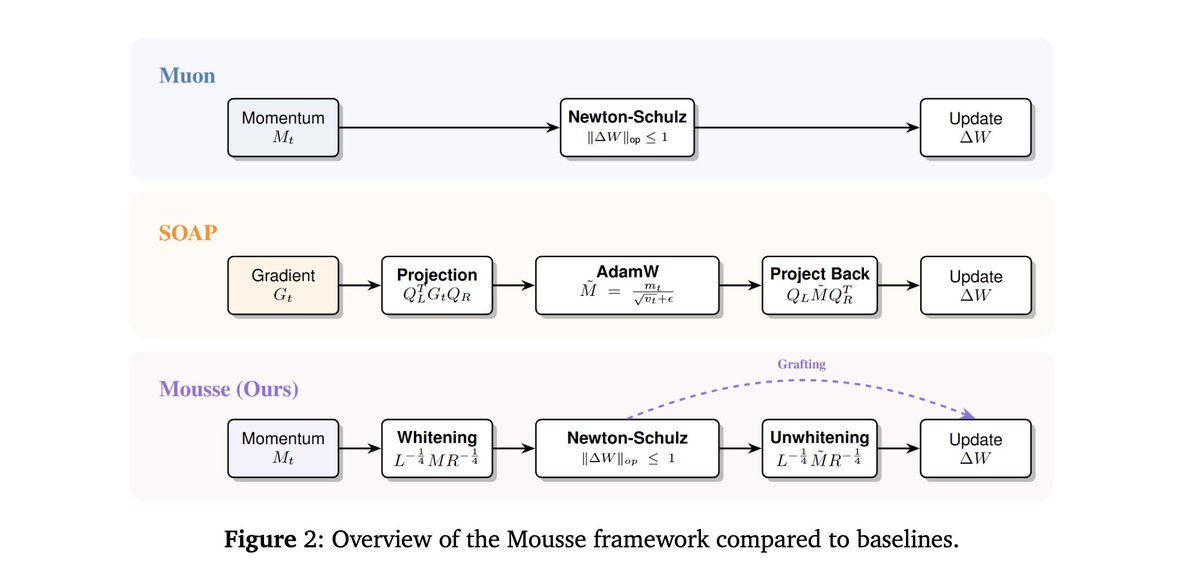

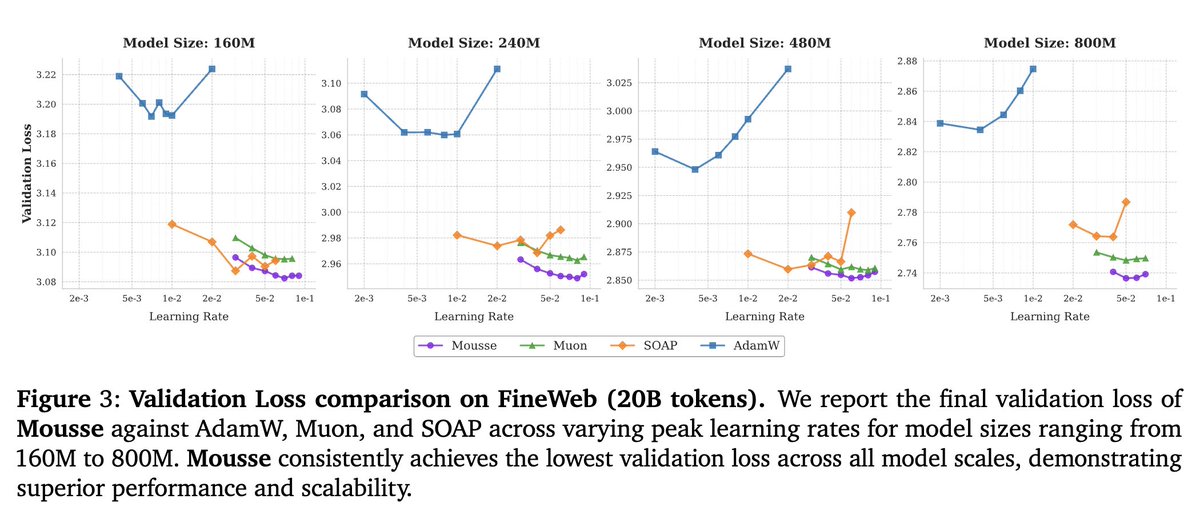

📢 Late post: Our recent work studies when and why spectrum-aware optimizers like Muon generalize better than standard Euclidean gradient descent (GD). 🧵 below (1/N)

Puneesh Deora

767 posts

@puneeshdeora

PhD student at UBC. Working on theory of Deep Learning.

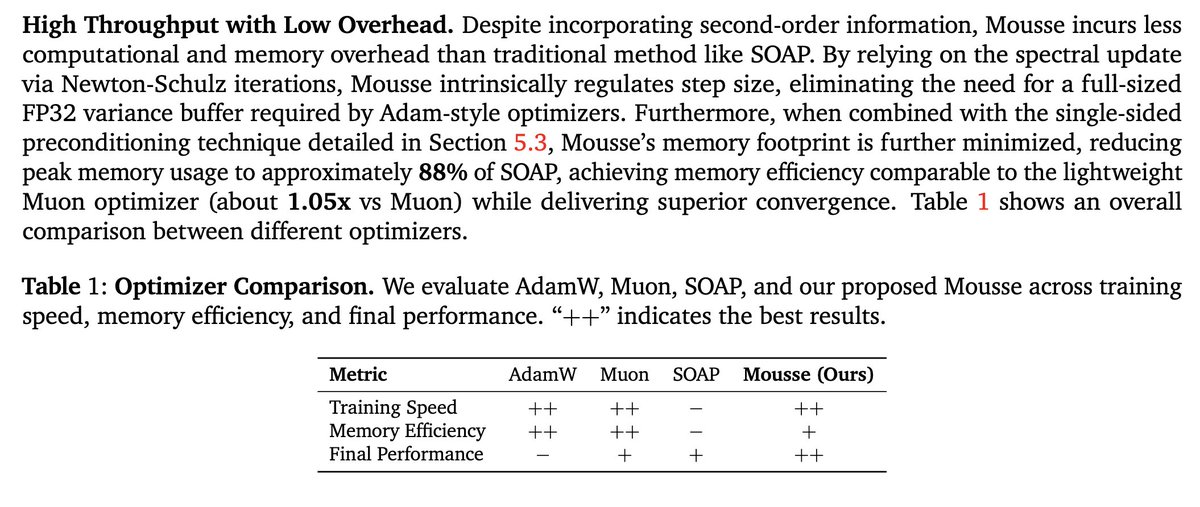

If I was a grad student today, I would: 1) Not write papers, 2) push my (agent-written) code to a public repo ~weekly, 3) maintain (via agents) a writeup.tex (manually verified) and a skill.md in the repo, and 4) work towards establishing skill usage as the new "citation" format.

We are pleased to share that using Gauss, we have completed a ~200K LOC formalization of Maryna Viazovska’s 2022 Fields Medal theorems on optimal sphere packing in dimensions 8 and 24. This is the only Fields Medal-winning result from this century to be completely formalized, and is the largest single-purpose Lean formalization in history. We are honored to have assisted @SidharthHarihar1 and the rest of the sphere packing team in this achievement. math.inc/sphere-packing

Idk if people know this, but Google Scholar does not index the second reference format type (it does index the first), and the second is the BibTeX you get from arXiv.

OpenAI's Sebastian Bubeck says deep expertise is more important than ever in the AI age: to get maximum value from AI, you need enough real understanding to describe the problem clearly. "This creates the gap between people who keep studying and those who rely too much on AI."

I ran experiments with GPT-5.2-Pro on 20 latest arXiv preprints to see if it can:

- independently prove the main theorem
- find big mistakes in papers

Statistics:

- 1 easy proof can be re-derived by GPT-5.2-Pro
- 1 contained a critical error
- 1 more case is quite interesting ⬇️

Some thoughts on AI and mathematics, inspired by "First Proof."

New paper studies when spectral gradient methods (e.g., Muon) help in deep learning:

1. We identify a pervasive form of ill-conditioning in DL: post-activation matrices have low stable rank.
2. We then explain why spectral methods can perform well despite this.

Long thread