Introducing Grok 4 Fast, a multimodal reasoning model with a 2M context window that sets a new standard for cost-efficient intelligence. Available for free on grok.com, grok.x.com, iOS and Android apps, and OpenRouter. x.ai/news/grok-4-fa…

Szymon Tworkowski

@s_tworkowski

reasoning @xAI | prev. @GoogleAI @UniWarszawski | LongLLaMA

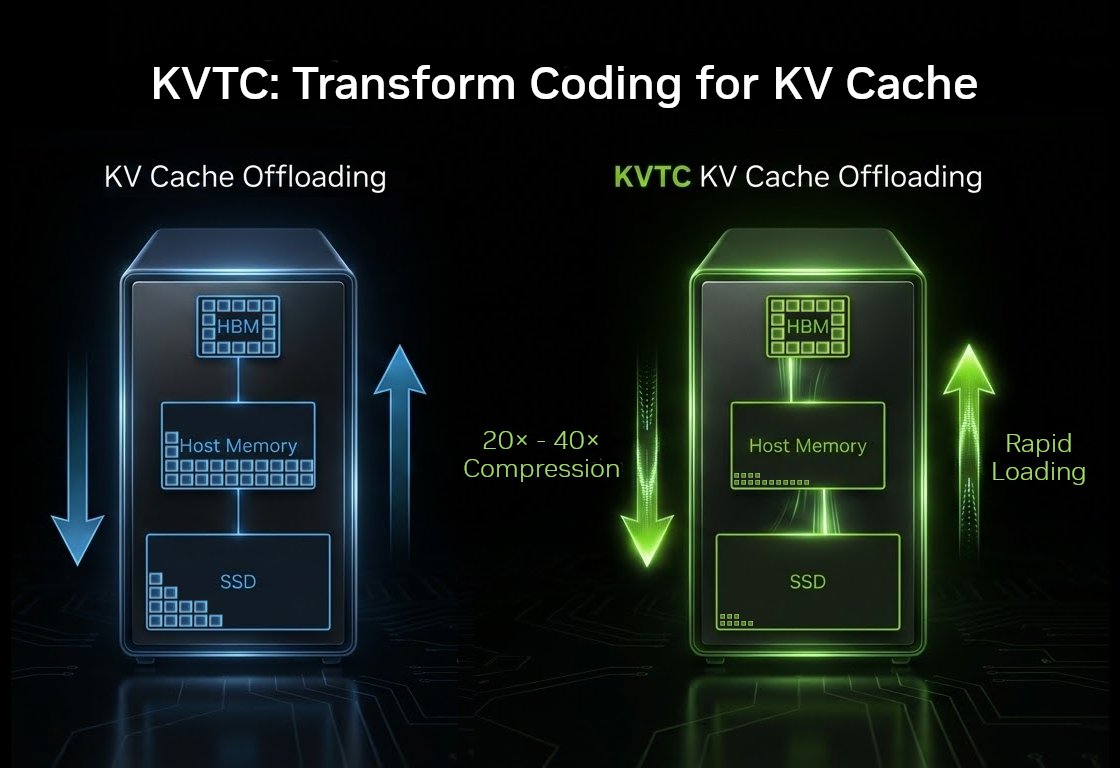

This is my favorite clip from the new Elon pod. He opens by saying xAI struggles with memory usage/bandwidth and CUDA kernel optimization (matmul, attention, MoE, etc.). If you are good at kernel or performance engineering in general, you should apply. Steer the world in a better direction.

Introducing Grok 4.1, a frontier model that sets a new standard for conversational intelligence, emotional understanding, and real-world helpfulness. Grok 4.1 is available for free on grok.com, grok.x.com and our mobile apps. x.ai/news/grok-4-1

Grok-4 (Fast Reasoning) on ARC-AGI Semi Private Eval:

- ARC-AGI-1: 48.5%, $0.03/task
- ARC-AGI-2: 5.3%, $0.06/task

@xai pushes the frontier of performance efficiency on ARC-AGI

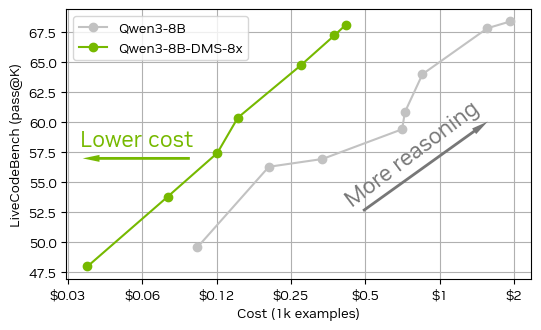

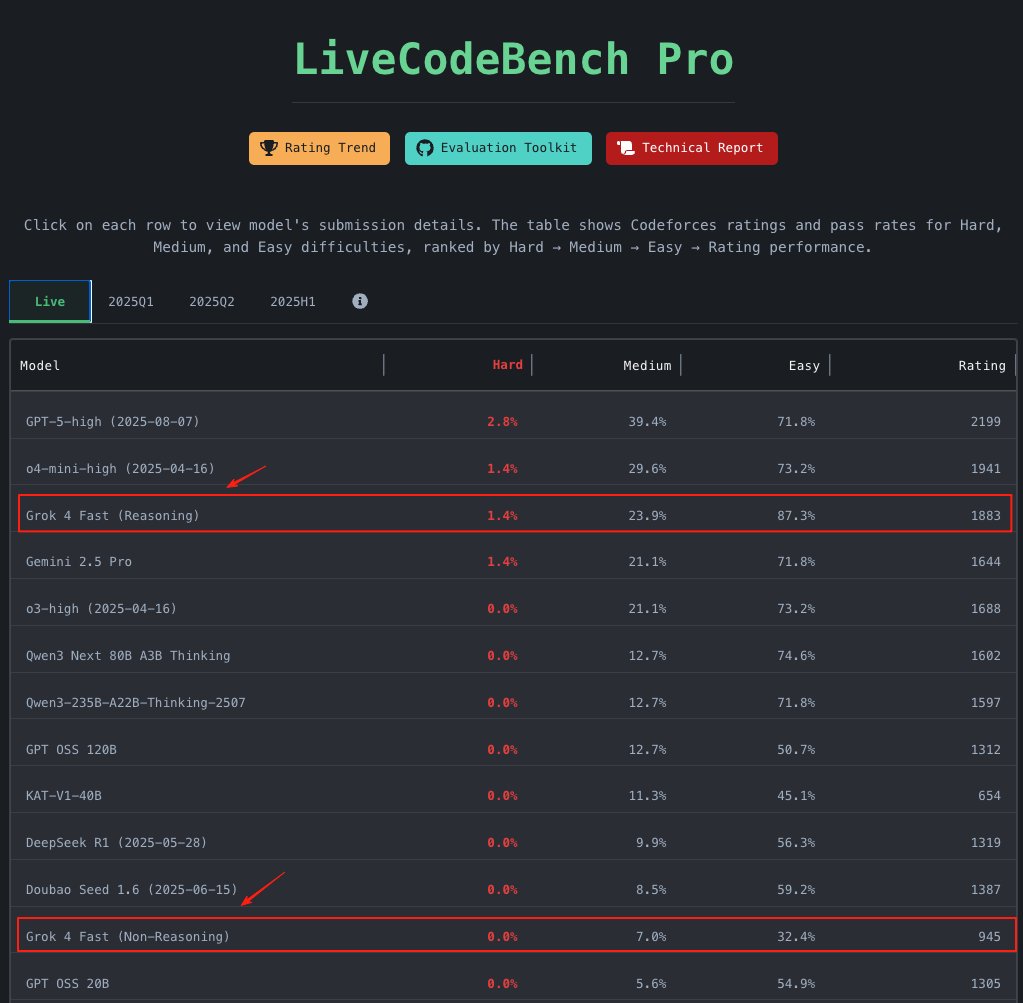

xAI has released Grok 4 Fast, breaking through our intelligence vs. cost frontier by achieving Gemini 2.5 Pro level intelligence at a ~25X lower cost.

Intelligence: @xai shared with us pre-release access to Grok 4 Fast. In reasoning mode, the model scores an impressive 60 on our Artificial Analysis Intelligence Index, in line with Gemini 2.5 Pro and Claude 4.1 Opus, while sitting, as expected, below the prior Grok 4 release and GPT-5 (high). Grok 4 Fast performed especially well on coding evaluations, taking the number one spot on our LiveCodeBench leaderboard and even outperforming its larger sibling Grok 4.

Cost: xAI is offering Grok 4 Fast at a very competitive price of only $0.20/1M input tokens and $0.50/1M output tokens. The model is also quite token efficient compared to other reasoning models, taking 61M tokens to complete our Intelligence Index, significantly less than Gemini 2.5 Pro's 93M and Grok 4's 120M. This pricing and efficiency make the cost of running the Artificial Analysis Intelligence Index ~25X lower than for Gemini 2.5 Pro and ~23X lower than for GPT-5 (reasoning mode high).

Speed: When benchmarking the pre-release API, xAI's endpoint for the model was very fast, achieving 344 output tokens per second, ~2.5X faster than OpenAI's GPT-5 API. This also allows for end-to-end latency results that are faster than most non-reasoning models for many workloads. Speeds may drop as traffic on the API increases, so keep an eye on our live performance benchmarking to see how this evolves.

Congratulations to the @xai team and @elonmusk on this new release! See below for more details and in-depth analysis 👇
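To make the cost and speed figures above concrete, here is a rough sketch of the underlying arithmetic. It is not Artificial Analysis's methodology. It assumes all 61M index tokens are billed at the output rate and ignores input tokens, because the post gives no input/output split. End-to-end latency is simplified to time-to-first-token plus generation time. The 0.5 s time-to-first-token and the 2,000-token response are hypothetical values chosen for illustration, not measurements.

```python
# Rough arithmetic behind the quoted Grok 4 Fast cost and speed figures.
# Assumptions (not from xAI or Artificial Analysis): the 61M index tokens are
# all billed as output tokens, input tokens are ignored, and the TTFT and
# response length used below are hypothetical placeholders.

PRICE_IN_PER_M = 0.20   # USD per 1M input tokens (quoted price)
PRICE_OUT_PER_M = 0.50  # USD per 1M output tokens (quoted price)


def token_cost(input_tokens: int, output_tokens: int,
               price_in_per_m: float, price_out_per_m: float) -> float:
    """Cost in USD for a run, given per-million-token prices."""
    return (input_tokens * price_in_per_m
            + output_tokens * price_out_per_m) / 1_000_000


def end_to_end_latency(output_tokens: int, tokens_per_second: float,
                       time_to_first_token_s: float) -> float:
    """Approximate seconds to receive a full response: TTFT plus generation time."""
    return time_to_first_token_s + output_tokens / tokens_per_second


if __name__ == "__main__":
    # Output-token cost of the 61M tokens used on the index (input tokens assumed 0).
    index_cost = token_cost(0, 61_000_000, PRICE_IN_PER_M, PRICE_OUT_PER_M)
    print(f"output-token cost: ${index_cost:.2f}")  # prints: output-token cost: $30.50

    # GPT-5 speed implied by "344 tok/s is ~2.5X faster".
    implied_gpt5_speed = 344 / 2.5
    print(f"implied GPT-5 speed: {implied_gpt5_speed:.1f} tok/s")

    # Hypothetical 2,000-token answer with the same assumed 0.5 s TTFT for both.
    for name, speed in [("Grok 4 Fast", 344.0), ("GPT-5 (implied)", implied_gpt5_speed)]:
        print(f"{name}: {end_to_end_latency(2_000, speed, 0.5):.2f} s")
```

Because the input-token count is unknown, the $30.50 figure is only a lower bound on what the index run would cost at these prices. Reproducing the ~25X comparison would also require Gemini 2.5 Pro's prices and token split, which the post does not give.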