samuel joseph troyer

699 posts


@samjtro

founder @ https://t.co/VasbYUotie

Claude now compacts context exponentially faster. Compacting takes only seconds so you don’t get interrupted.

I dunk on Go because it's a bad language. I never realised it's even deeper than a bottomless pit of despair. Wth is this

.@RichardSSutton, father of reinforcement learning, doesn’t think LLMs are bitter-lesson-pilled. My steel man of Richard’s position: we need some new architecture to enable continual (on-the-job) learning. And if we have continual learning, we don't need a special training phase - the agent just learns on-the-fly - like all humans, and indeed, like all animals. This new paradigm will render our current approach with LLMs obsolete. I did my best to represent the view that LLMs will function as the foundation on which this experiential learning can happen. Some sparks flew.

0:00:00 – Are LLMs a dead-end?
0:13:51 – Do humans do imitation learning?
0:23:57 – The Era of Experience
0:34:25 – Current architectures generalize poorly out of distribution
0:42:17 – Surprises in the AI field
0:47:28 – Will The Bitter Lesson still apply after AGI?
0:54:35 – Succession to AI

Introducing PrediBench - A live benchmark of AI models betting on prediction markets. This benchmark answers the question “How well can AI predict the future?”

1 - Each day, 10 top trending real-world events are pulled from Polymarket, with questions like “Who will be the next mayor of NYC?”
2 - Each model browses the web in agentic mode to research the question, then allocates $1 in bets.
3 - As the events resolve in real-time, we score the model’s performance: average returns, Sharpe ratio, Brier score.

▸ Visit it at predibench.com 🧵[1/N]

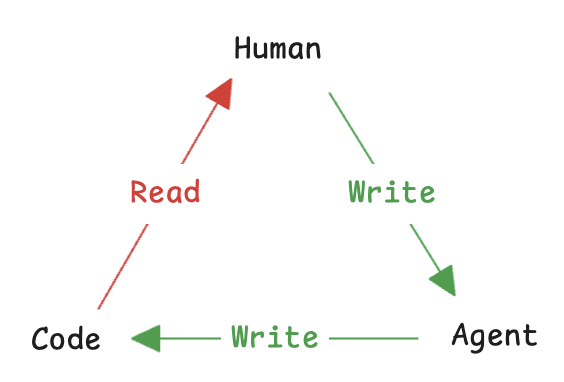
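For readers unfamiliar with the three metrics named in the PrediBench post, here is a minimal sketch of how they are commonly computed. It uses the textbook definitions, not PrediBench's actual scoring code, which the post does not show. The function names and all sample numbers are hypothetical, and the Sharpe ratio here is unannualized with an assumed risk-free rate of zero.

```python
from statistics import mean, stdev


def brier_score(forecasts, outcomes):
    """Mean squared error between predicted probabilities and 0/1 outcomes.

    Lower is better; 0.0 is a perfect forecaster.
    """
    return mean((p - o) ** 2 for p, o in zip(forecasts, outcomes))


def sharpe_ratio(returns, risk_free=0.0):
    """Mean excess return divided by the sample standard deviation.

    Not annualized; risk_free=0.0 is an assumption for illustration.
    """
    excess = [r - risk_free for r in returns]
    return mean(excess) / stdev(excess)


# Hypothetical data: three resolved events and four days of betting returns.
forecasts = [0.9, 0.2, 0.6]      # model's predicted probability of "yes"
outcomes = [1, 0, 0]             # how each event actually resolved
daily_returns = [0.10, -0.05, 0.02, 0.03]

print("Brier score:   ", brier_score(forecasts, outcomes))
print("Average return:", mean(daily_returns))
print("Sharpe ratio:  ", sharpe_ratio(daily_returns))
```

The Brier score rewards calibrated probabilities regardless of bet size, while average return and Sharpe ratio depend on how the $1 is allocated, so a model can rank differently on each.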

Making an Email Agent using the Claude Code SDK If I wasn’t at Anthropic, I would be making agents using the Claude Code SDK. But doing > talking. So I’m building in public and open sourcing a local email agent. This is part one on agentic search.

We've trained a new Tab model that is now the default in Cursor. This model makes 21% fewer suggestions than the previous model while having a 28% higher accept rate for the suggestions it makes. Learn more about how we improved Tab with online RL.
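As a rough reading of those two numbers, the sketch below combines them into a single ratio of accepted suggestions. It assumes both percentages are relative changes (not percentage points) and that the number of opportunities to show a suggestion is unchanged; the post confirms neither, and the function name is hypothetical.

```python
def relative_accepted_volume(suggestion_change, accept_rate_change):
    """Ratio of accepted suggestions (new model / old model).

    Both arguments are relative changes, e.g. -0.21 for 21% fewer.
    Assumes accepted = suggestions shown * accept rate.
    """
    return (1 + suggestion_change) * (1 + accept_rate_change)


# 21% fewer suggestions, 28% higher accept rate (figures from the post).
ratio = relative_accepted_volume(-0.21, 0.28)
print(f"{ratio:.4f}")  # 0.79 * 1.28
```

Under these assumptions the total number of accepted suggestions stays roughly flat (about 1% higher), so the gain would come mostly from showing fewer suggestions that get rejected.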
