Servamind retweeted

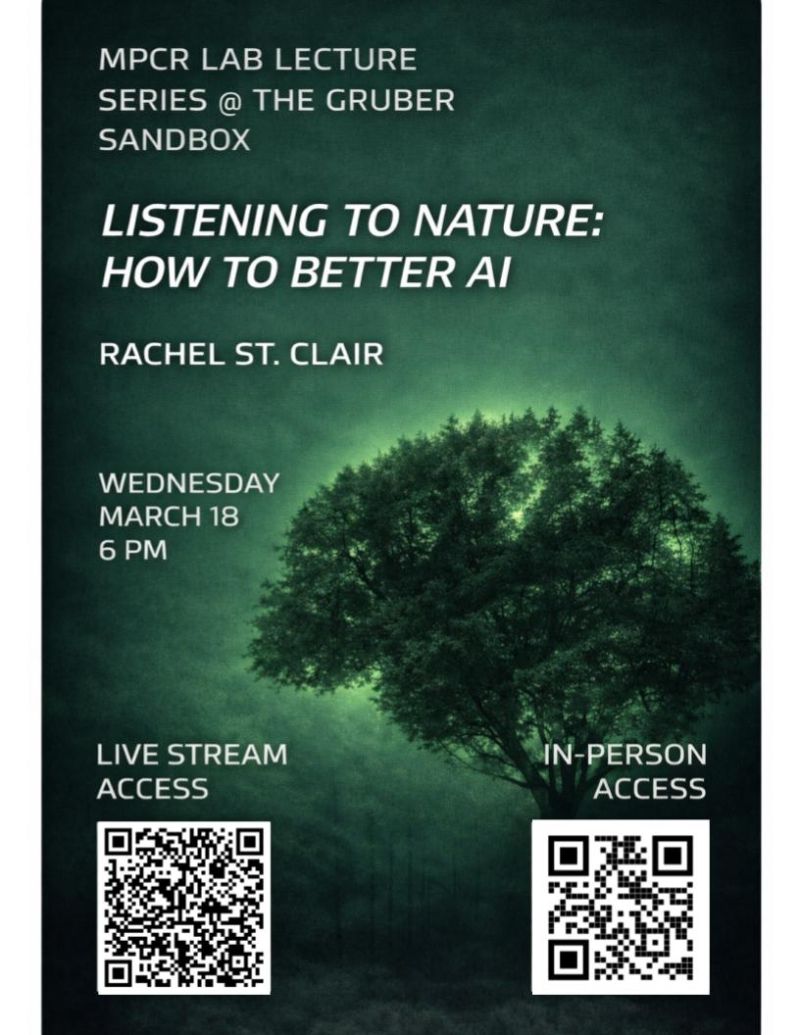

🌱 Listening to nature might be key to building better AI.

How should our goals for AI shape the biological principles we borrow? What stands in the way of putting those principles into practice?

Dr. Rachel Aileen StClair (@CenFutureAIMS Fellow, AI researcher, and founder of @servamind) explores how biology can inform the design of memory, language, and multimodal AI.

👉 Watch: youtube.com/live/x9_rvCluq…

Hosted by Dr. William Edward Hahn and Dr. Natalia Romero as part of @MPCRLabs’ lecture series on AI research and applications.

#AI #AIEthics #BetterAI #MultimodalAI

