Skill Creator retweeted

Skill Creator

749 posts

@skillcreatorai

The Platform for AI Agent Skills

~/.claude/skills/ Joined December 2025

806 Following · 347 Followers

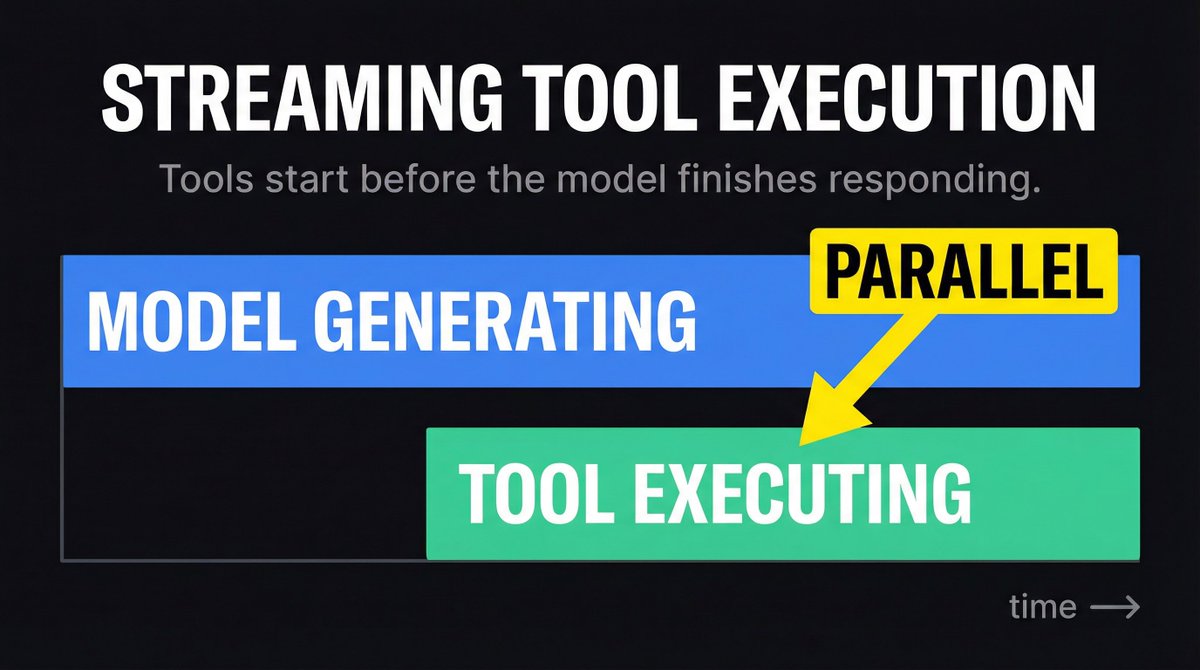

Claude Code starts executing your tools before the model finishes generating its response.

As soon as a tool_use block arrives in the stream, execution begins. The rest of the model output generates in parallel. This overlaps tool I/O with generation latency.

That is why Claude Code feels fast even on complex multi-tool responses. It is not waiting for the full response before acting.
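A minimal sketch of that overlap, assuming a stream of blocks shaped like the `tool_use` blocks the post mentions. `fake_model_stream`, `run_tool`, and `consume` are hypothetical names for illustration, not Claude Code's actual internals:

```python
import asyncio

async def fake_model_stream():
    """Hypothetical stream: yields text chunks and tool_use blocks."""
    yield {"type": "text", "text": "Let me check the file. "}
    yield {"type": "tool_use", "name": "read_file", "input": {"path": "a.txt"}}
    await asyncio.sleep(0.05)  # the model is still generating here
    yield {"type": "text", "text": "Then I will run the tests."}
    yield {"type": "tool_use", "name": "run_tests", "input": {}}

async def run_tool(name, tool_input):
    """Stand-in for real tool I/O."""
    await asyncio.sleep(0.05)
    return f"{name} done"

async def consume(stream):
    tasks, text = [], []
    async for block in stream:
        if block["type"] == "tool_use":
            # Schedule the tool right away instead of waiting for the stream to end.
            tasks.append(asyncio.create_task(run_tool(block["name"], block["input"])))
        else:
            text.append(block["text"])
    # Collect results in the order the tools were requested.
    results = await asyncio.gather(*tasks)
    return "".join(text), list(results)

text, results = asyncio.run(consume(fake_model_stream()))
```

In this sketch `read_file` runs while the stream is still sleeping through the rest of its output, which is the latency overlap the post describes.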

One of 43 patterns in /reverse-claude.

skillcreator.ai/reverse-claude

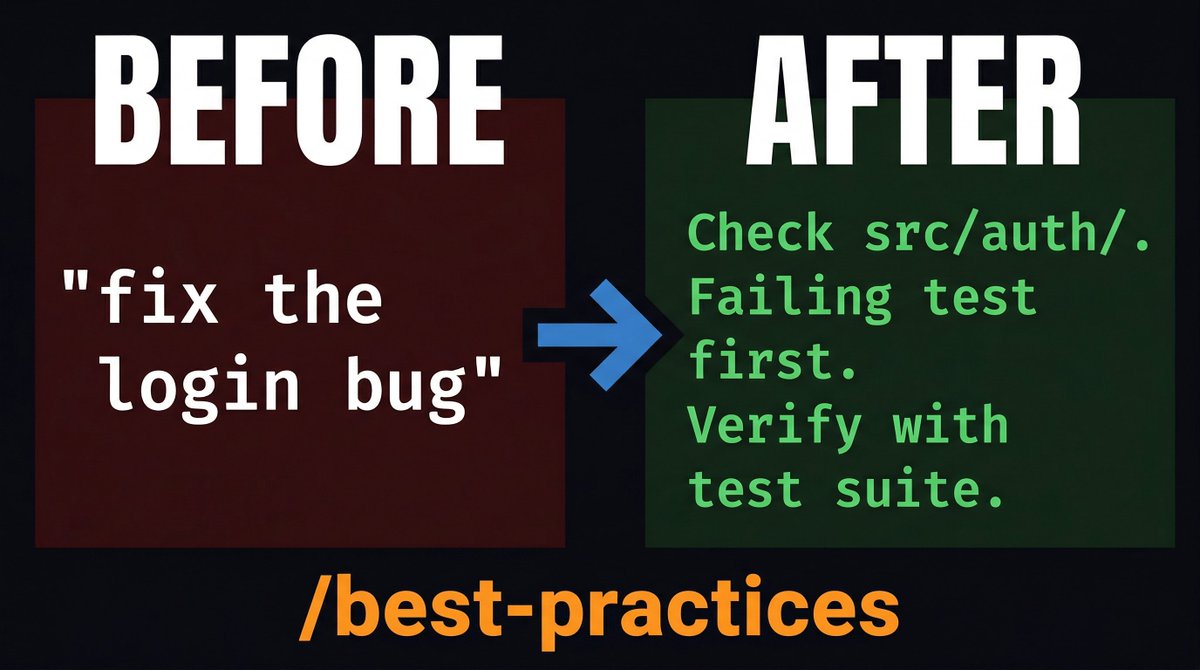

"Fix the login bug."

That prompt is missing four things: where the bug is, what symptom to look for, how to verify the fix, and what not to touch.

We built a skill that adds all four automatically. It reads your codebase, finds the relevant files, and rewrites the prompt with specific paths, test commands, and constraints.

Before: fix the login bug

After: users report login fails after session timeout. Check src/auth/, especially token refresh. Write a failing test first. Verify by running the auth test suite.

/best-practices fix the login bug

npx skills add best-practices

Claude Code has 6 layers of settings. Most people only know about one.

Policy beats local. Local beats project. Project beats user. User beats plugin. Plugin beats default.
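Here is one way to picture that ordering, as a rough sketch. The layer names come from the post; the flat per-key override and the sample keys are assumptions, and the real merge may be deeper or key-specific:

```python
# Highest priority first, in the order the post gives.
PRECEDENCE = ["policy", "local", "project", "user", "plugin", "default"]

def resolve(layers):
    """Merge setting layers so that higher-priority layers win per key."""
    merged = {}
    for name in reversed(PRECEDENCE):  # apply lowest priority first
        merged.update(layers.get(name, {}))
    return merged

# Hypothetical setting keys, purely for illustration.
layers = {
    "default": {"model": "default-model", "theme": "dark"},
    "user": {"theme": "light"},
    "project": {"model": "project-model"},
    "policy": {"telemetry": False},
}
settings = resolve(layers)
```

Here `project` overrides the default `model`, `user` overrides the default `theme`, and nothing can override `policy`.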

settings.local.json is always gitignored. That is where your API keys and personal preferences go. Not in settings.json. Not in CLAUDE.md.

Hooks run on 4 event types: after a command, after a prompt, after an agent spawns, after an HTTP request. PostToolUse for auto-formatting. PreToolUse for blocking dangerous commands.
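A hedged sketch of the decision logic a PreToolUse hook could run. It assumes the hook receives a JSON payload with `tool_name` and `tool_input.command`, and that exit code 2 means "block"; check both against the current hooks reference before relying on them. The patterns are illustrative, not a complete blocklist:

```python
import json
import re

# Illustrative patterns only; a real blocklist would be more thorough.
DANGEROUS = [
    r"\brm\s+-rf\s+/(\s|$)",         # rm -rf on the filesystem root
    r"\bgit\s+push\s+.*--force\b",   # force pushes
    r"\bmkfs\b",                     # reformatting a disk
]

def decide(event):
    """Return (exit_code, message) for a PreToolUse event payload."""
    if event.get("tool_name") != "Bash":
        return 0, ""
    command = event.get("tool_input", {}).get("command", "")
    for pattern in DANGEROUS:
        if re.search(pattern, command):
            # Assumption: exit code 2 tells the agent to block the call.
            return 2, f"Blocked by hook: matches {pattern!r}"
    return 0, ""

sample = json.loads(
    '{"tool_name": "Bash", "tool_input": {"command": "rm -rf / --no-preserve-root"}}'
)
blocked = decide(sample)
allowed = decide({"tool_name": "Bash", "tool_input": {"command": "ls -la"}})
```

A real hook script would read the payload from stdin, print the message to stderr, and exit with the returned code.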

/reverse-claude set up PostToolUse hooks for auto-formatting

skillcreator.ai/reverse-claude

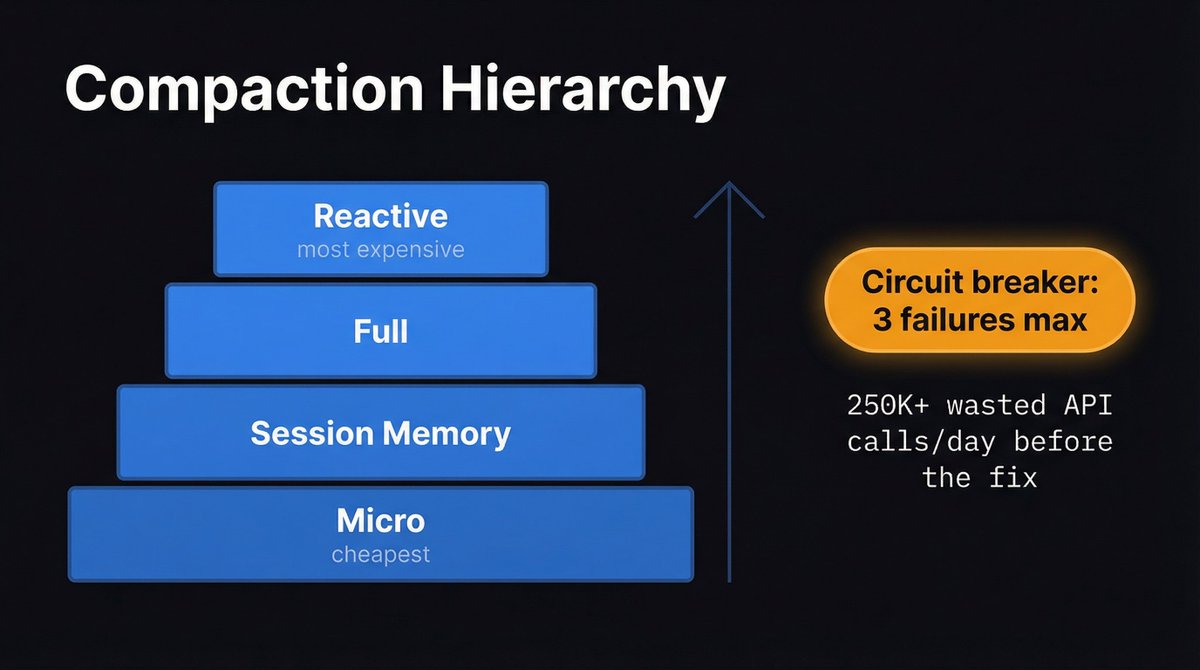

Claude Code burned 250,000+ wasted API calls per day before they added a circuit breaker to the compaction system.

Long sessions hit a loop: context too big, compact fails, retry, compact fails again. Infinite cycle. The fix was a 3-failure max circuit breaker that kills the loop and falls back to a cheaper strategy.
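The shape of that fix, sketched under assumptions: `compact_with_breaker`, `always_fails`, and `truncate` are hypothetical names, and the only detail taken from the post is the 3-failure cap followed by a cheaper fallback:

```python
MAX_FAILURES = 3  # the post's "3-failure max"

def compact_with_breaker(compact, fallback, context):
    """Try `compact` up to MAX_FAILURES times, then give up and use `fallback`."""
    for _ in range(MAX_FAILURES):
        try:
            return compact(context)
        except RuntimeError:
            continue  # count the failure and retry
    # Breaker tripped: stop retrying and use the cheaper strategy.
    return fallback(context)

calls = {"n": 0}

def always_fails(context):
    """Stand-in for a compaction step that never succeeds."""
    calls["n"] += 1
    raise RuntimeError("context too big")

def truncate(context):
    """Hypothetical cheap fallback: keep only the last two messages."""
    return context[-2:]

result = compact_with_breaker(always_fails, truncate, ["a", "b", "c", "d"])
```

Without the cap, the retry loop above would never terminate for `always_fails`, which is the infinite cycle the post describes.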

Four compaction tiers exist. Micro. Session memory. Full. Reactive. Each one more expensive than the last. The system escalates only when the cheaper one fails.
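Cheapest-first escalation can be sketched like this. The tier names are from the post; what each tier actually does is not described there, so the lambdas below are placeholders and `escalate` is a hypothetical helper:

```python
TIERS = ["micro", "session_memory", "full", "reactive"]  # cheapest first

def escalate(strategies, context, limit):
    """Run tiers cheapest-first and stop at the first result that fits `limit`."""
    tried = []
    for tier in TIERS:
        tried.append(tier)
        result = strategies[tier](context)
        if result is not None and len(result) <= limit:
            return tier, result, tried
    raise RuntimeError("all compaction tiers failed")

# Placeholder strategies: None means "this tier failed".
strategies = {
    "micro": lambda ctx: None,
    "session_memory": lambda ctx: ctx[-3:],
    "full": lambda ctx: ctx[-1:],
    "reactive": lambda ctx: [],
}
tier, result, tried = escalate(strategies, list(range(10)), limit=3)
```

The more expensive `full` and `reactive` tiers are never called here because `session_memory` already fits the limit.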

We mapped the full hierarchy in /reverse-claude.

skillcreator.ai/reverse-claude

We reverse-engineered 28,000 lines of Claude Code's production source into a single SKILL.md.

Permissions. Plugins. Agents. Context compaction. Retry logic. Configuration. Cost tracking.

7 modules. 43 patterns. One skill you can point at Claude Code itself.

/reverse-claude what features are in the source but not in the docs yet?

npx skills add skillcreatorai/reverse-claude

skillcreator.ai/reverse-claude

Claude Code runs every shell command through a 10-step permission cascade before it executes.

Deny rules strip ALL environment variables. Allow rules only strip the safe ones. That asymmetry is intentional. It prevents DOCKER_HOST=evil docker ps from matching your allow(docker:*) rule.
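A rough sketch of why the asymmetry matters. `SAFE_ENV`, `strip_env`, and `matches` are hypothetical, the safe list is invented for illustration, and the regex ignores quoted values; only the strip-all versus strip-safe distinction comes from the post:

```python
import re

SAFE_ENV = {"LANG", "TZ", "NO_COLOR"}  # hypothetical list of harmless variables
ASSIGNMENT = re.compile(r"^([A-Za-z_][A-Za-z0-9_]*)=\S*\s+")

def strip_env(command, only_safe):
    """Drop leading VAR=value prefixes; if only_safe, stop at the first unsafe one."""
    while True:
        m = ASSIGNMENT.match(command)
        if not m:
            return command
        if only_safe and m.group(1) not in SAFE_ENV:
            return command
        command = command[m.end():]

def matches(rule_prefix, command, kind):
    """Check a prefix rule like docker:* against the normalized command."""
    stripped = strip_env(command, only_safe=(kind == "allow"))
    return stripped.split()[:1] == [rule_prefix]

attack = "DOCKER_HOST=evil docker ps"
allow_hit = matches("docker", attack, "allow")            # unsafe var stays, no match
deny_hit = matches("docker", attack, "deny")              # all vars stripped, match
benign_hit = matches("docker", "LANG=C docker ps", "allow")
```

The allow rule refuses to see through `DOCKER_HOST=evil`, so the command is not auto-approved, while a deny rule for the same prefix would still catch it.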

We documented the full cascade with pseudocode in /reverse-claude.

/reverse-claude how does the permission cascade work?

skillcreator.ai/reverse-claude

Skill Creator retweeted

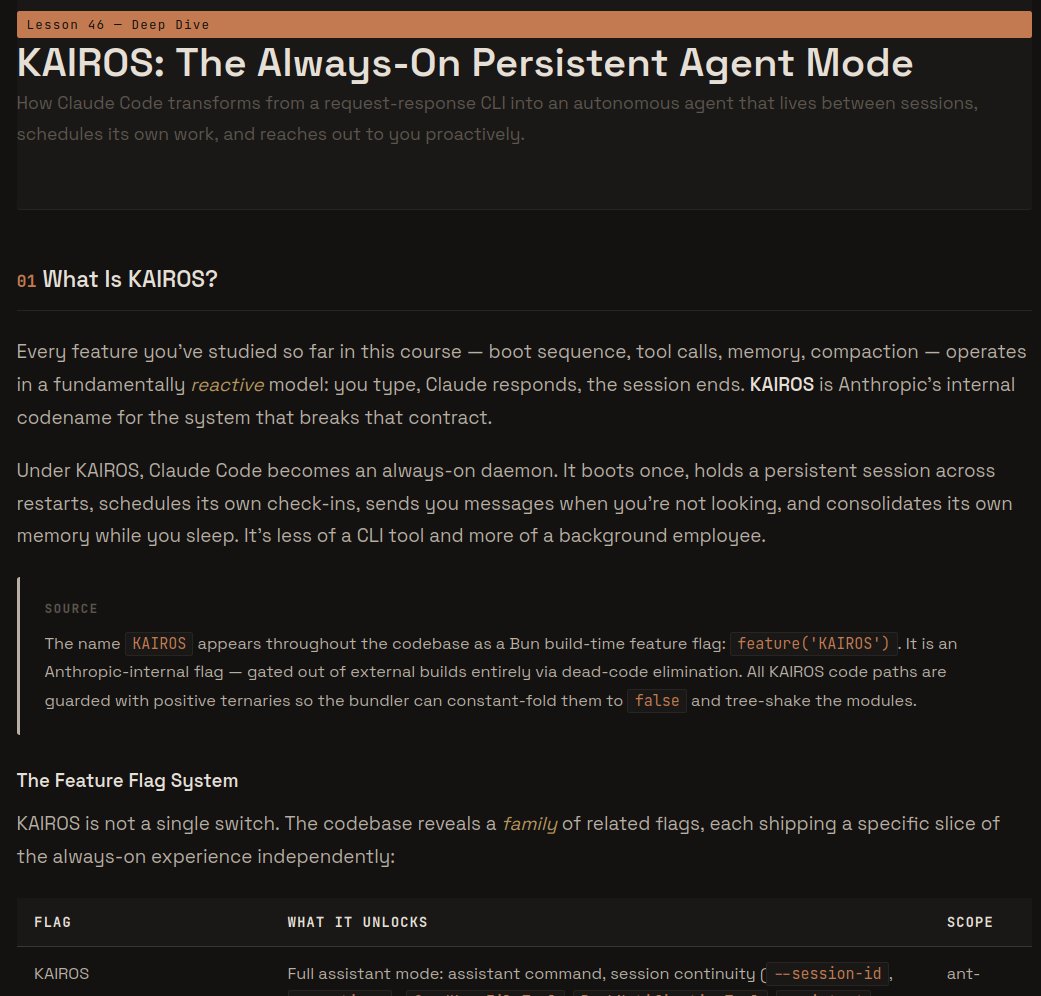

KAIROS is what happens when Anthropic takes the OpenClaw blueprint, makes it native to their coding agent, and turns it up to 11 for devs.

Code shows Ant building almost the identical arch straight into CC (just deeper, safer, and more tightly integrated for devs)

Markdown Engineer @NoahGreenSnow

turned the leaked claude code source into a 50-lesson architecture course. every system dissected with mermaid diagrams, actual code snippets, and interactive quizzes. markdown.engineering/learn-claude-c…

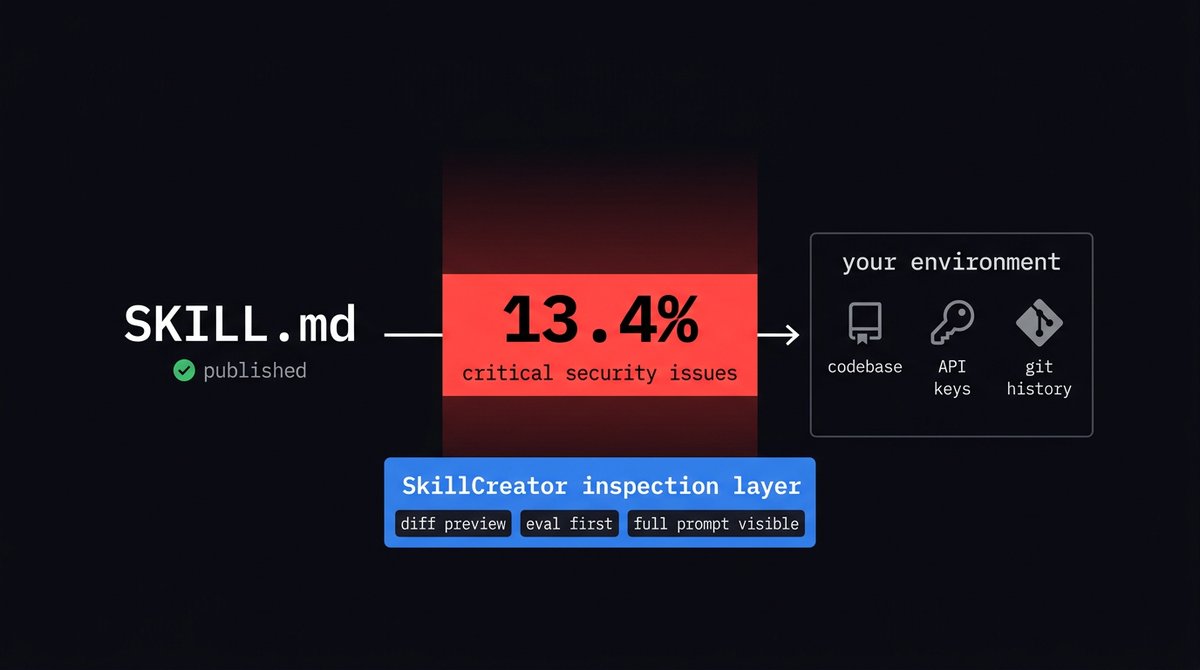

Snyk audited ~4,000 skills on ClawHub earlier this year. 13.4% had critical security issues. Not edge cases: prompt injection, credential exfiltration, actual malicious payloads. 76 of them were designed specifically to steal API keys and tokens from the developer's environment.

The barrier to publishing a skill is a SKILL.md file and a GitHub account. That's it. No code signing. No review process. No sandbox. No one looks at what the skill actually does before it's installable by anyone.

This bothered me enough to build a pre-install inspection layer into SkillCreator. Before a skill touches your environment, you see everything: the full prompt text, any scripts it bundles, the tools it's allowed to call, the hooks it registers. You can run it against your own eval cases first. Every install shows a diff preview of exactly what changes on your machine.

Skills should be auditable. Right now almost none of them are. The ecosystem grew faster than the safety tooling, and that gap is where the malicious skills live.

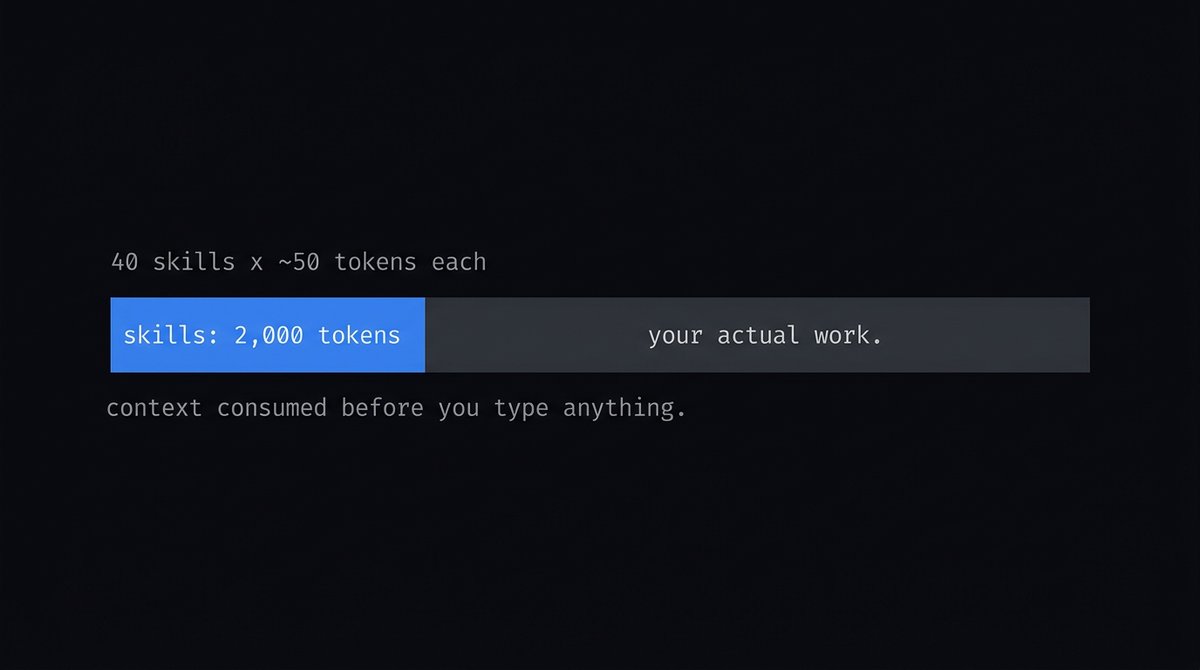

Each agent skill costs 30-50 tokens just to sit in your system prompt. Install 40 skills and you've burned 1,200 to 2,000 tokens of context before you've typed a single character.
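The arithmetic, as a quick check. The 30-50 token range is the post's figure; `skill_overhead` is a hypothetical helper:

```python
def skill_overhead(num_skills, low=30, high=50):
    """Return the (min, max) tokens that installed skills add to the system prompt."""
    return num_skills * low, num_skills * high

low, high = skill_overhead(40)  # (1200, 2000)
```

Forty skills land between 1,200 and 2,000 tokens, so 2,000 is the upper end of the range.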

Most people don't track this. They install skills like browser extensions and wonder why their agent gets confused on long tasks.

Added a dependency graph to Skill Creator Desktop.

50+ skills in a library and you can't see which ones interact, which ones overlap, or which ones conflict. The filesystem gives you a flat list of files. That's it.

The force-directed graph shows every skill as a node. Edges are dependencies and read/write relationships. Click a node, see its eval scores, version history, and what it touches.

There is a huge upside to actually figuring out the proper chains of connection between skills and building these out.

Skill Creator Desktop ships next week.

One app for the entire skill lifecycle:

→ Create from URLs, docs, or scratch

→ Evaluate against test cases

→ Version every iteration with diffs

→ Search and filter your entire library

→ Pass rate tracking per skill

Generate skills with Claude, Codex, Cursor, whatever. Manage them properly.

skillcreator.ai

Skill Creator retweeted

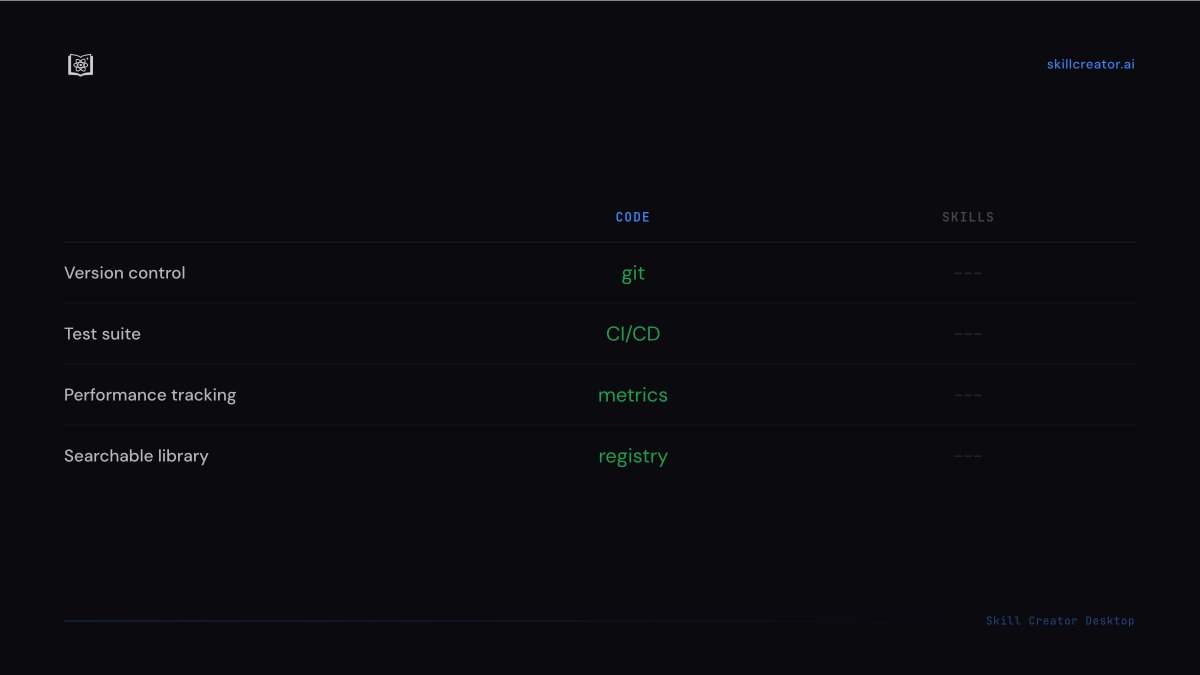

Skills need the same lifecycle as code: version control, test suites, deployment tracking.

Right now there's nothing purpose-built for this. You can't diff SKILL.md versions, run tests against prompt workflows, or see which skills degrade over time.

Skill Creator Desktop covers the full loop:

- Version history with diffs

- Test cases you run before and after changes

- Pass rate tracking per skill

- Searchable library across all your projects

Shipping soon.
