Pinned Tweet

Stephen Witt

107 posts

@stephenwitt

The Thinking Machine, my book on Nvidia, goes on sale April 8. https://t.co/QXTUsGsVgk

Joined July 2011

332 Following 2.9K Followers

@ATabarrok @Pete_Monahan_JD Alex, why does the market fail here? Most insurance is competitively priced.

My take is that purchasers of $1MM+ assets become insensitive to add-ons ("What's another 4 grand?") introduced at the end of the buying process.

But perhaps there's more going on?

@Pete_Monahan_JD product idea is fine, way overpriced - see payout rates

Stephen Witt retweeted

The editors @timesculture asked a few of us to pick our best read of the year.

I went for @stephenwitt's riveting biography of Jensen Huang, which helped me (sort of) understand chips but is also an extraordinary human study

thetimes.com/culture/books/…

For the New York Times, I wrote about ChatGPT and the race to build the world's best friend.

nytimes.com/2025/12/20/opi…

@stephenwitt You've come a long way. This award is a testament to your career transformation.

@khoomeik To be fair, Nvidia's tensor cores do take an n-dimensional tensor and reduce it to many smaller two-dimensional mathematical objects for processing. That part is correct.

Where I erred is that it is incorrect to label the resulting matrix multiplication as "two-dimensional."

ok i'm gonna officially claim the honor of ending the @stephenwitt 2d matmul saga

thanks to my evidently goated teaching skills which he read at 1:43pm, he retracted his statements at 1:45pm

call me the yung karpathy or smth 😤😎

@ben_golub I'm right about this. Tensors are downscaled to 2-D when the operation is executed in the computer. Read about it here:

developer.nvidia.com/blog/programmi…

After a scientist tries to explain that high-dimensional matrix multiplication is beautiful in some ways, New Yorker writer doubles down -- that's interesting, but recall that matrices have TWO dimensions.

Can't make it up.

Open the schools!!!!

Stephen Witt @stephenwitt

I agree, although I believe most matrix multiplies for AI are done in two dimensions. Anyway, I stand by my statement. Matmuls are an effective piece of mathematical machinery, but the mechanics of calculating them are headache-inducing. Indeed, it's exactly their computationally cumbersome nature that's propelling the data center boom. That point seems beyond argument.

@boazbaraktcs @aidanprattewart Actually, I really appreciate your time and insight. Thank you Boaz

@stephenwitt @aidanprattewart Glad we can come to an understanding! Really didn't mean to send the matmul mafia at you with pitchforks. I guess we are a sensitive bunch.

@boazbaraktcs @aidanprattewart Okay, I formally retract this one. This would not be a two-dimensional multiply.

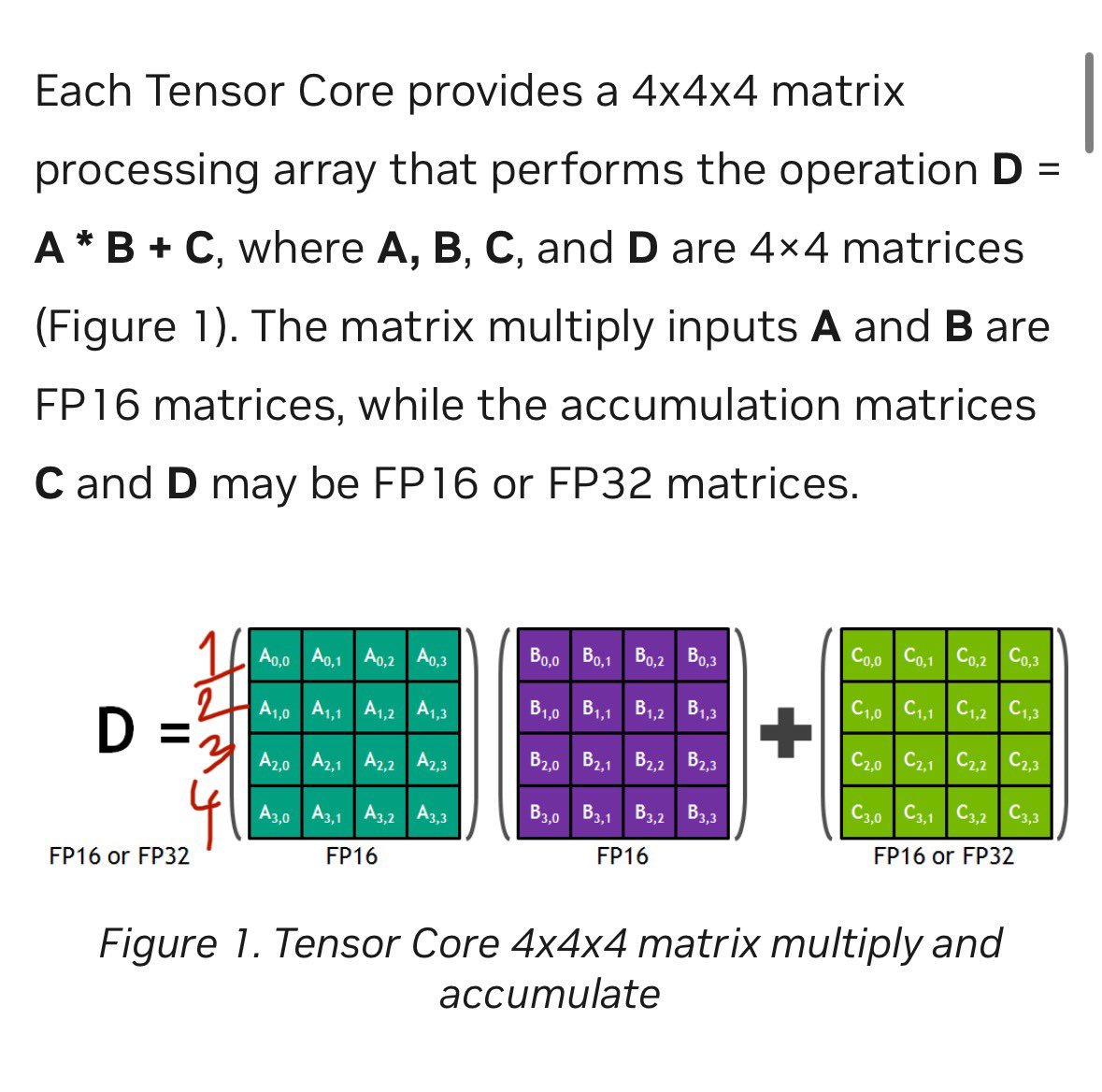

@boazbaraktcs @aidanprattewart Yes, sure, I mean it's all atoms and electrons in the end. But don't Nvidia's tensor cores operate at exactly the level of abstraction I'm discussing? Aren't they just little machines for producing an infinite number of 4x4 matrix multiplies? Of simple, single-variable matrices?

@stephenwitt @boazbaraktcs @aidanprattewart a tensor core performs a matmul of 2 4x4 matrices

a 4x4 matrix is a mapping from a 4d space to another 4d space

this is what we mean when we talk about the dimensionality of a space
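The two senses of "dimension" in this reply can be made concrete with a small sketch. It is illustrative only: the names `apply`, `scale`, and `point` are made up for the example, and it says nothing about how a tensor core is actually implemented. The matrix has two axes (rows and columns), while the vectors it maps between live in a 4-dimensional space.

```python
def apply(matrix, vector):
    """Apply a matrix (list of rows) to a vector: one dot product per row."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]

# A 4x4 matrix: two axes (rows, columns), but it acts on 4-dimensional vectors.
scale = [
    [2, 0, 0, 0],
    [0, 2, 0, 0],
    [0, 0, 2, 0],
    [0, 0, 0, 2],
]
point = [1, 2, 3, 4]          # a vector in a 4-dimensional space

image = apply(scale, point)   # another vector in a 4-dimensional space

n_axes = 2                    # "2-D" in the sense of rows and columns
space_dim = len(point)        # "4-D" in the sense of the space being mapped
print(image, n_axes, space_dim)
```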

@boazbaraktcs @aidanprattewart Look, if you say I'm wrong—well, I believe you.

But it still seems to me that processing the operation at the tensor core level happens in 2-d? I must be missing something.

Really no need to double down. It's OK if a couple of sentences in a good article are poorly phrased.

I still think you are confusing two different notions of dimensionality - the dimensionality of the matrix itself, and the fact that any matrix - no matter how big - has two axes and so can be thought of as a 2D object.

I actually think there is something fundamental about the matrices being big - the fact that square matrix multiplication takes k^3 operations but the matrices themselves are only k^2 numbers is in fact crucial to ensure data movement is smaller than the number of flops, which is important since memory is slower than computation. See the discussion here:

docs.nvidia.com/deeplearning/p…
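The k^3 versus k^2 point can be checked with a little arithmetic. The sketch below is a rough count under simplifying assumptions, not a performance model: it assumes the schoolbook algorithm (k^3 multiply-adds for two k x k matrices) and counts each input and output value as moved exactly once (3k^2 values). The function name `matmul_cost` is hypothetical.

```python
def matmul_cost(k: int) -> dict:
    """Rough operation and data counts for a k x k by k x k matrix multiply."""
    multiply_adds = k ** 3        # schoolbook algorithm: k multiply-adds per output, k^2 outputs
    values_moved = 3 * k ** 2     # read A, read B, write C (idealized: each value moved once)
    return {
        "k": k,
        "multiply_adds": multiply_adds,
        "values_moved": values_moved,
        "ops_per_value": multiply_adds / values_moved,  # equals k / 3
    }

for k in (4, 64, 1024):
    print(matmul_cost(k))
```

Under these assumptions the work done per value moved grows linearly with k, which is why larger matrices leave more room for computation to outpace memory traffic.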

The point I'm trying to make is that if you ask a data center to do a higher-dimensional tensor operation, it will break it apart and downscale it to 2-D at the level of the tensor core. So in this sense, most matrix multiplies *in the data center* are 2-D, regardless of what they look like to a programmer at a workstation.

Now, maybe that's too reductive! Or maybe, alternatively, it's not even reductive enough. After all, below this level of abstraction the operations are even more primitive. But it seems worth noting that Nvidia's empire is built on this specific operation, at this specific level of abstraction. That's the theme of this article.
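As a loose illustration of "breaking apart" a higher-dimensional operation, a batched multiply over 3-D inputs can be written as a loop of ordinary row-by-column matrix multiplies. This is a plain-Python sketch with hypothetical helper names (`matmul_2d`, `batched_matmul`); it is not a description of how Nvidia hardware or any library actually schedules the work.

```python
def matmul_2d(a, b):
    """Multiply two matrices given as lists of rows."""
    inner = len(b)
    assert all(len(row) == inner for row in a), "inner dimensions must match"
    n_cols = len(b[0])
    return [
        [sum(row[k] * b[k][j] for k in range(inner)) for j in range(n_cols)]
        for row in a
    ]

def batched_matmul(batch_a, batch_b):
    """A 3-D 'stack of matrices' multiply, done as one matrix multiply per pair."""
    return [matmul_2d(a, b) for a, b in zip(batch_a, batch_b)]

batch_a = [[[1, 2], [3, 4]], [[1, 0], [0, 1]]]
batch_b = [[[5, 6], [7, 8]], [[2, 3], [4, 5]]]
result = batched_matmul(batch_a, batch_b)
print(result)
```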

@stephenwitt @aidanprattewart 2D here simply means that it is a matrix and not a tensor - that it has rows and columns. It’s not at all about the dimensionality of the vectors it operates on.

I don't dismiss it! It's probably the single most important mathematical operation of our time. We are building nuclear power plants to do it.

But it's hard, cumbersome and unwieldy. The reason Nvidia has a $5 trillion market capitalization is because the complexity of the operation grows by the cube of the elements.

Now admittedly, non-commutativity is not *why* it's hard, but also: I didn't say that!

@stephenwitt @ben_golub Reread your article. You’re referring to “matrix multiplication” generally. And you dismiss it because it’s not commutative. 🤯

@aidanprattewart @boazbaraktcs Again, tensor cores do everything in 2-D. Read about it here:

developer.nvidia.com/blog/programmi…

@stephenwitt @boazbaraktcs > ‘I have a degree in Mathematics’

> confuses the fact that matrices are square with their dimensionality

I guess the question is what level of abstraction do you want to focus on. I think for programmers at workstations this stuff is really elegant. I think if you have a Ph.D. in linear algebra you can see this as truly beautiful.

But that's not what this article is about! It's about what's being executed *inside* the data center. At that level, it's essentially tensor cores spamming 2-d matrix multiplies to infinity. (And yes, below that, it's all one-d. And below that, it's electrons being pushed around.)

It's like a building made of brick. The building is beautiful. The individual brick? Well... you kind of have to squint

@stephenwitt @ben_golub kind of but not really. all matrices are actually converted into 1-D arrays when they're executed because CPU / GPU memory is linear.

the representation in code / math is an abstraction, and that's where the beauty is.
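The "1-D in memory" point can be sketched as follows. This assumes row-major layout, which is one common convention (column-major is another); the helper names `flatten_row_major` and `element_at` are made up for the example.

```python
def flatten_row_major(matrix):
    """Lay a matrix (list of rows) out as one flat list, row after row."""
    return [value for row in matrix for value in row]

def element_at(flat, n_cols, i, j):
    """Recover entry (i, j) from the flat layout using index arithmetic."""
    return flat[i * n_cols + j]

matrix = [
    [1, 2, 3],
    [4, 5, 6],
]
flat = flatten_row_major(matrix)
print(flat)
print(element_at(flat, n_cols=3, i=1, j=2))
```

The row-and-column view is bookkeeping on top of the flat list: the pair (i, j) is only a convenient way of computing a single offset.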

@ben_golub (note that the somewhat confusing 4x4x4 terminology refers to four 4x4 matrices, not one 4x4x4 matrix)

@ben_golub Big reading comp fail here. Matrix multiplies for AI are indeed executed in two-d, regardless of the number of dimensions in the software framework.

The operation below is the cogwheel of capitalism.

@johnmark_taylor I think this is the point people are confused about. Linear algebra is elegant and beautiful, but AI math doesn't really take advantage of most of that. It doesn't even calculate determinants. It takes the most unwieldy calculation in the entire domain and spams it to infinity

@stephenwitt You find no elegance at all in linear algebra?
