TML Lab (EPFL)

34 posts

@tml_lab

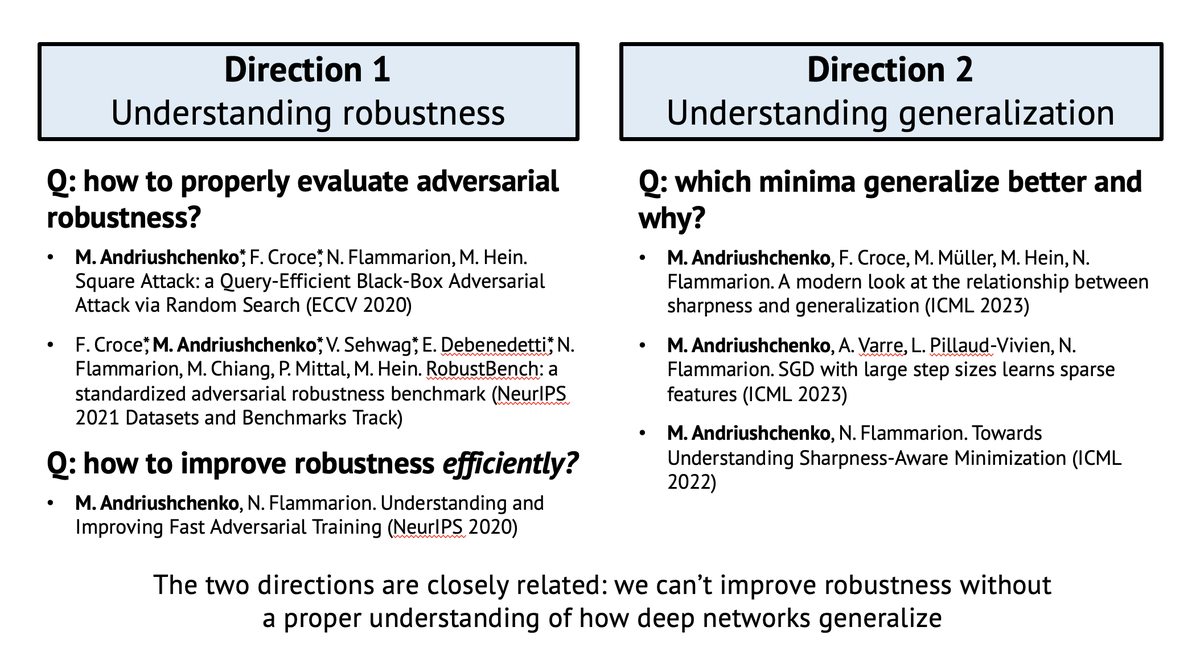

Theory of Machine Learning Lab at @EPFL led by Nicolas Flammarion. We develop algorithmic & theoretical tools to better understand ML & make it more robust.

🚨 Excited to release OS-Harm! 🚨 The safety of computer-use agents has been largely overlooked. We created a new safety benchmark based on OSWorld that measures 3 broad categories of harm: 1. deliberate user misuse, 2. prompt injections, 3. model misbehavior.

Excited to announce FuseLIP: an embedding model that encodes image+text into a single vector. We achieve this by tokenizing images into discrete tokens, merging these with the text tokens and subsequently processing them with a single transformer.
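To make the token-merging idea above concrete, here is a toy sketch, not FuseLIP's actual code. It assumes image patches are turned into discrete tokens by nearest-neighbor lookup in a codebook, that image token ids are shifted past the text vocabulary so both share one id space, and that image tokens come first in the merged sequence. None of these details are stated in the post; the names `quantize_patch`, `fuse_tokens`, and `text_vocab_size` are hypothetical. The single transformer that would consume the merged sequence is left out.

```python
from typing import List, Sequence


def quantize_patch(patch: Sequence[float],
                   codebook: Sequence[Sequence[float]]) -> int:
    """Return the index of the codebook vector closest to the patch (squared L2)."""
    def sq_dist(a: Sequence[float], b: Sequence[float]) -> float:
        return sum((x - y) ** 2 for x, y in zip(a, b))
    return min(range(len(codebook)), key=lambda i: sq_dist(patch, codebook[i]))


def fuse_tokens(patches: Sequence[Sequence[float]],
                codebook: Sequence[Sequence[float]],
                text_ids: Sequence[int],
                text_vocab_size: int) -> List[int]:
    """Merge image and text into one token sequence.

    Assumption: image ids are offset by text_vocab_size so they never
    collide with text ids, and image tokens are placed before text tokens.
    """
    image_ids = [text_vocab_size + quantize_patch(p, codebook) for p in patches]
    return image_ids + list(text_ids)


# Toy data: three 2-D "patches", a three-entry codebook, three text token ids.
codebook = [[0.0, 0.0], [1.0, 1.0], [0.0, 1.0]]
patches = [[0.9, 1.1], [0.1, -0.1], [0.2, 0.8]]
text_ids = [5, 7, 2]

fused = fuse_tokens(patches, codebook, text_ids, text_vocab_size=10)
print(fused)
```

In a full model, a sequence like `fused` would be embedded and passed through one transformer, with a pooled output serving as the single image+text vector.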

We are announcing the winners of our Trojan Detection Competition on Aligned LLMs!! 🥇 @tml_lab (@fra__31, @maksym_andr and Nicolas Flammarion) 🥈 @krystof_mitka 🥉 @apeoffire 🧵 With some of the main findings!