Ashutosh

6.6K posts

@voidmonk

Founder https://t.co/D3LZEpwW9n. B2B SaaS, DevOps, DadOps. Slow living 🦥

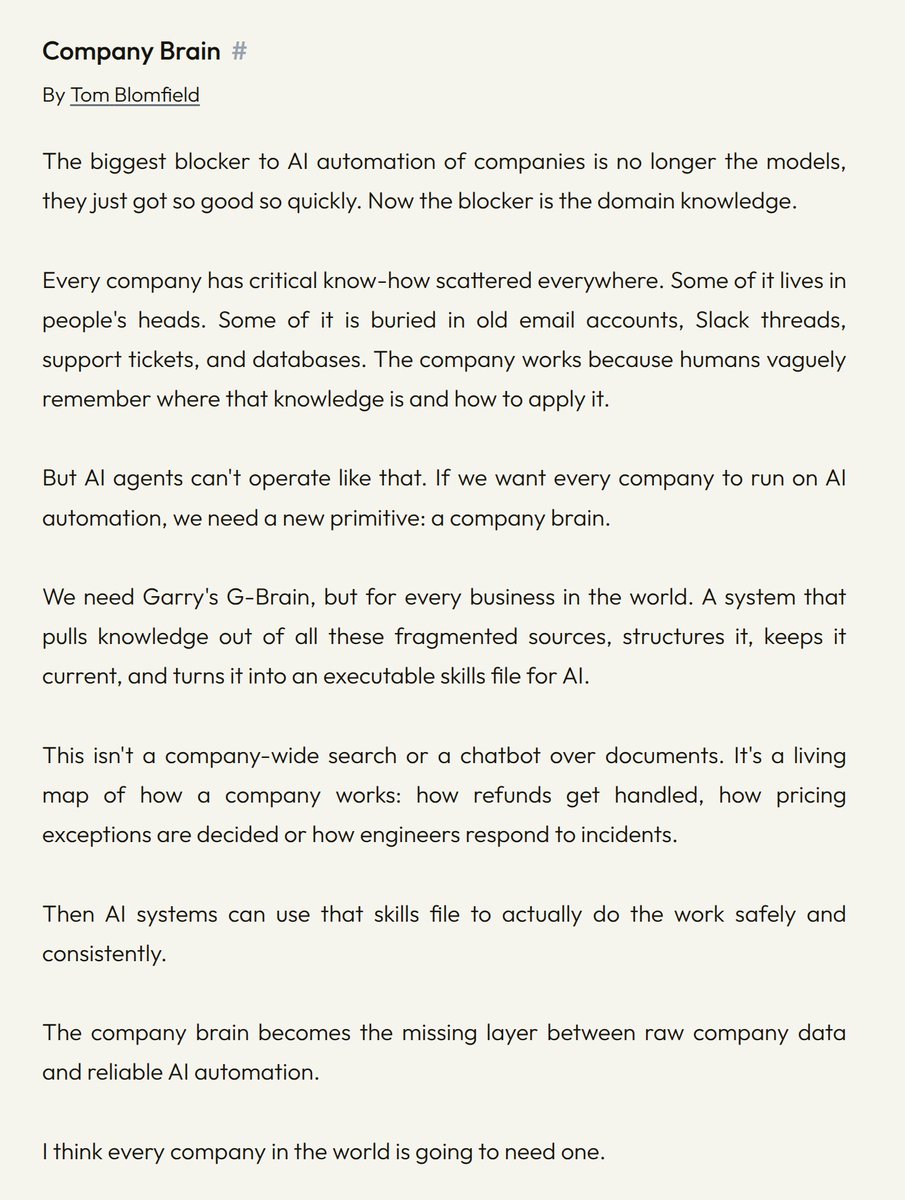

After talking to folks smarter than me, I'm convinced many of the AI folks in my timeline are full of shit. Nobody is "running 20 agents overnight" and building stuff for actual users. Maybe some are building internal tools or disposable software. Maybe. But building software people like using? Software that doesn't get hacked on day one or blow up after the 3rd user? Nope. I don't even understand what that's supposed to look like. Do you work out a 57-page document that perfectly describes what you want to build, then summon 14 agents and have them run wild for 6 hours? And what comes out on the other end isn't a broken pile of shit? Nope. Not buying it. PS: it may also be that I have an IQ of 82 and can't figure it out.

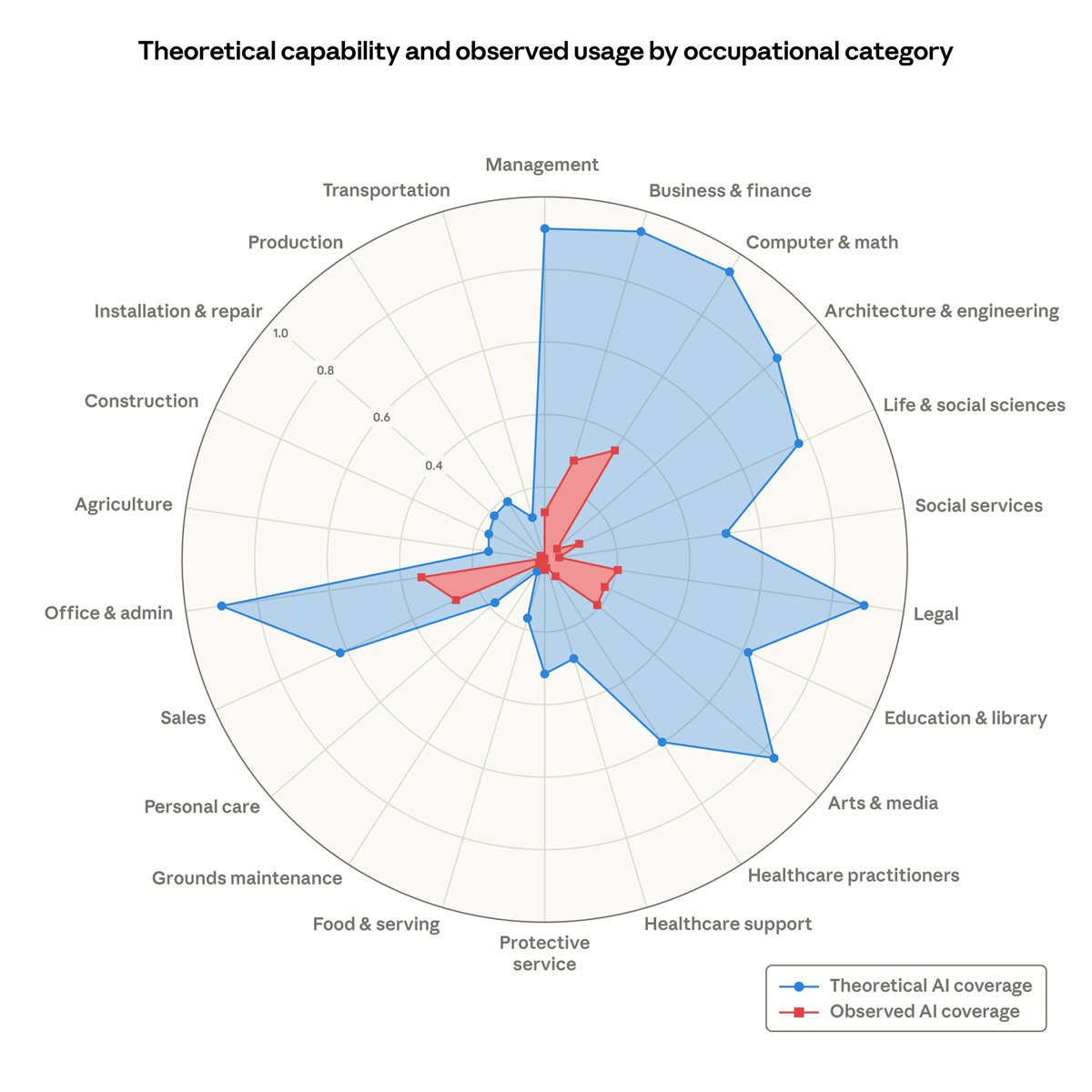

Everyone says “AI will take all the jobs.” If that happens… how does this future actually work? No jobs → no income → no spending. So who buys things? Who pays rent? Who keeps the economy moving? What am I missing here?

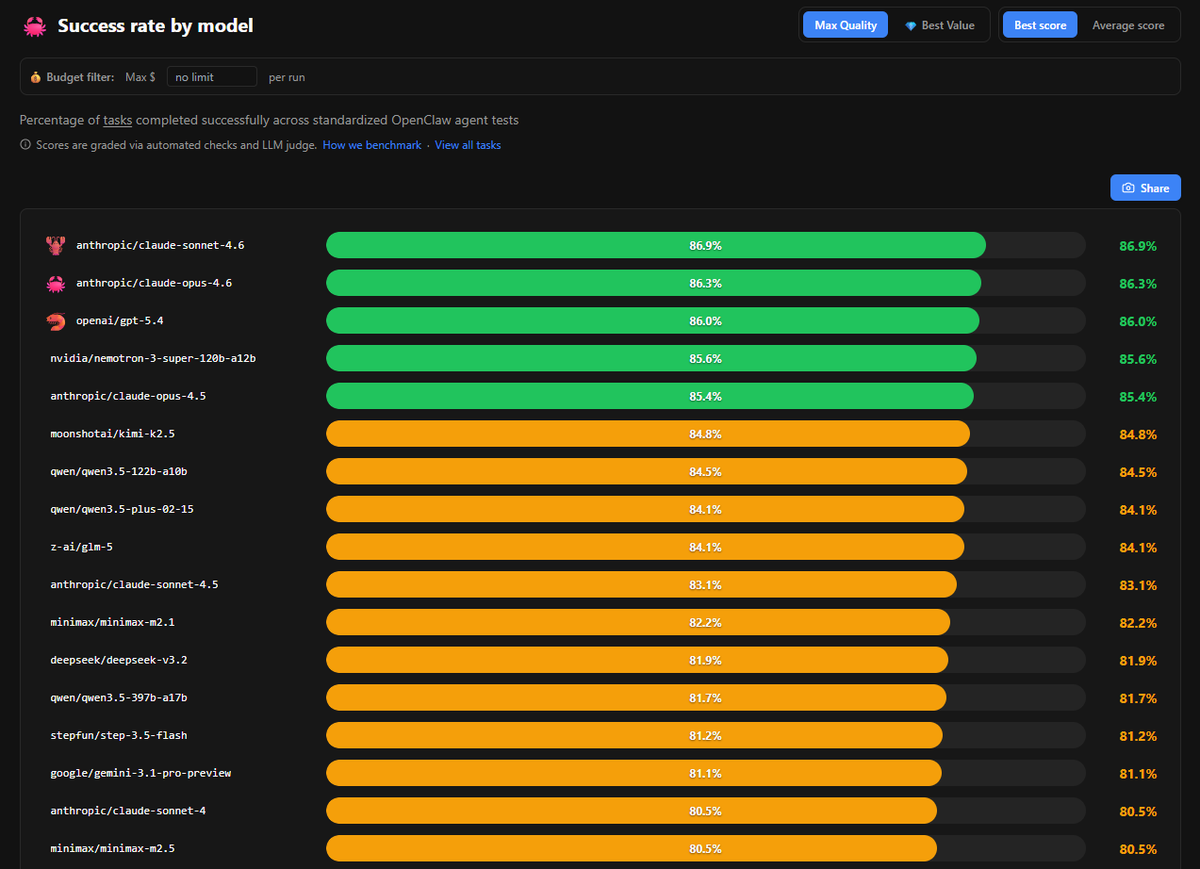

Kimi K2.5 on @opencode Zen is hilariously cheap. I bought $20 worth of tokens two weeks ago, and I still have $10.89 left! After 3M tokens! If there's a bubble in AI, it's pricing a million tokens at $25 (and beyond).
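
For anyone who wants to check the math, here is a rough sketch of the arithmetic behind that post. It assumes "3M tokens" means exactly 3,000,000 tokens and treats input and output tokens as one blended figure; the variable names are made up for illustration, and the per-million rate is derived here, not quoted from any price list.

```python
# Figures taken from the post above.
budget_usd = 20.00        # amount of tokens bought
remaining_usd = 10.89     # balance left two weeks later
tokens_used = 3_000_000   # assumption: "3M" means exactly 3,000,000 tokens

# The $25 per million tokens figure the post compares against.
comparison_rate_usd = 25.00

# Money actually spent, and the blended cost per million tokens.
spent_usd = budget_usd - remaining_usd
cost_per_million = spent_usd / (tokens_used / 1_000_000)

# How many times more expensive the $25 rate is than the effective rate.
ratio = comparison_rate_usd / cost_per_million

print(f"spent: ${spent_usd:.2f}")
print(f"effective rate: ${cost_per_million:.2f} per million tokens")
print(f"$25 per million is about {ratio:.1f}x that rate")
```

Under those assumptions the spend works out to $9.11, or roughly $3.04 per million tokens, so the $25 figure is a bit over 8x the effective rate.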