Pinned Tweet

Tom Leranth

2.4K posts

@xkey

Father; Applied Mathematician; Dev (C/C++/Julia/Python/Go/smidgeons of Elixir & Rust); Algorithms; Cryptography; H/w & S/w; Amat Photographer; Powerlifter

Midwest

Joined March 2008

489 Following

201 Followers

Tom Leranth retweeted

Boost Blueprint 017: Boost.Bloom. Provides probabilistic filtering for massive datasets: O(1) lookups, configurable false-positive rates, and a memory footprint that scales with the number of elements and your tolerance for error, not with the size of each element

It’s the perfect architectural choice for high-speed caches and network filtering

Level up your C++ architecture. Follow @Boost_Libraries for the #BoostBlueprint series

#cpp
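
For readers new to the idea, here is a minimal Bloom filter sketch in Python. It is not the Boost.Bloom API; the class name `BloomFilter` and the double-hashing scheme built on `hashlib.blake2b` are illustrative assumptions. It uses the standard sizing formulas, m = -n·ln(p)/(ln 2)² bits and k = (m/n)·ln 2 probes, which show that memory grows with the expected element count n and the target false-positive rate p, but not with the byte size of each element.

```python
import hashlib
import math
from typing import Iterator


class BloomFilter:
    """Illustrative Bloom filter (not Boost.Bloom): a bit array plus k hash probes."""

    def __init__(self, expected_items: int, fp_rate: float) -> None:
        # Standard sizing: m = -n ln p / (ln 2)^2 bits, k = (m / n) ln 2 probes.
        self.m = math.ceil(-expected_items * math.log(fp_rate) / (math.log(2) ** 2))
        self.k = max(1, round(self.m / expected_items * math.log(2)))
        self.bits = bytearray((self.m + 7) // 8)

    def _positions(self, item: str) -> Iterator[int]:
        # Double hashing: derive k bit positions from two 64-bit halves of one digest.
        digest = hashlib.blake2b(item.encode("utf-8"), digest_size=16).digest()
        h1 = int.from_bytes(digest[:8], "little")
        h2 = int.from_bytes(digest[8:], "little") | 1  # force an odd (hence nonzero) step
        for i in range(self.k):
            yield (h1 + i * h2) % self.m

    def add(self, item: str) -> None:
        for pos in self._positions(item):
            self.bits[pos // 8] |= 1 << (pos % 8)

    def might_contain(self, item: str) -> bool:
        # False means "definitely absent"; True means "probably present".
        return all(self.bits[pos // 8] & (1 << (pos % 8)) for pos in self._positions(item))
```

With n = 1000 and p = 0.01 this allocates about 9.6 bits per element and 7 probes per lookup, regardless of how long each key string is.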

Tom Leranth retweeted

Laplace transforms come to life like never before with computation and visualization everywhere in the new Wolfram eTextbook "Laplace Transforms in Theory and Practice."

Download it for free today:

wolfram-media.com/products/lapla…

Tom Leranth retweeted

FFmpeg 8.1 Preparing For Release With Vulkan Improvements, JPEG-XS & More

phoronix.com/news/FFmpeg-8.…

Tom Leranth retweeted

AlphaEvolve is closed-source. We release 🌟SkyDiscover🌟, a flexible, modular open-source framework with two new adaptive algorithms that match or exceed AlphaEvolve on many benchmarks and outperform OpenEvolve, GEPA, and ShinkaEvolve across 200+ optimization tasks.

Our new algorithms dynamically adapt their search strategy, and can even let the AI optimize its own optimization process on the fly!

Results:

📊 +34% median score improvement on 172 Frontier-CS problems.

🧮 Matches/exceeds AlphaEvolve on many math benchmarks

⚙️ Discovers system optimizations beyond human-designed SOTA

🧵👇

Tom Leranth retweeted

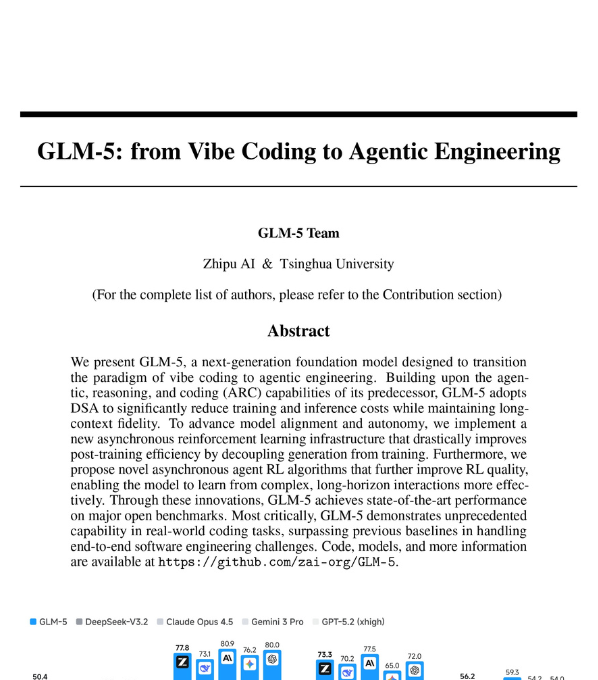

Zhipu AI and Tsinghua University just dropped one of the most significant open-source AI papers of 2026.

It's called GLM-5.

And it basically marks the moment "vibe coding" died and "agentic engineering" was born.

This isn't another incremental model update.

It's a 744 billion parameter system that doesn't just write code when you ask it to. It plans entire software projects, builds them, tests them, debugs them, and iterates on them. Autonomously. For hours.

No human in the loop.

Here's why the AI community is losing its mind:

GLM-5 is the first open-weights model to score 50 on the Artificial Analysis Intelligence Index v4.0. No open model has ever hit that number before. It jumped 8 points from its predecessor in a single generation.

On LMArena, the benchmark where millions of real humans judge AI models head-to-head, GLM-5 is the #1 open model. In both text and code.

Sitting alongside Claude Opus 4.5 and Gemini 3 Pro. Not below them. Next to them.

And here's the part most people will miss:

They released it anonymously first. Under the name "Pony Alpha" on OpenRouter. No brand. No hype. Just raw capability.

Within days, developers were convinced it was a secret Claude Sonnet 5 release. Others guessed DeepSeek V4. Or a leaked Grok update. 25% of users guessed it was Claude. 20% guessed DeepSeek. Only a small fraction guessed it was a Chinese model.

Then Zhipu confirmed it was GLM-5.

That moment matters. Because it proved that when you strip the brand away, this model competes at the absolute frontier. The community embraced it because it worked. Not because of where it came from.

Now the technical part that engineers actually care about:

They trained it on 28.5 trillion tokens. 744B total parameters with 40B active at any time, using a Mixture of Experts architecture. It handles 200K token context windows, meaning it can hold entire codebases in memory while working.
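
The post only gives headline numbers for the Mixture of Experts design. As a rough illustration of why only a fraction of the weights run per token, here is a toy top-k gated MoE layer in Python. The expert count, `top_k=2`, and the scalar "experts" are assumptions for illustration, not GLM-5's actual router configuration. At 40B active out of 744B total, roughly 5.4% of the parameters are used for any given token.

```python
import math
from typing import Callable, List, Sequence


def softmax(xs: Sequence[float]) -> List[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(x: float,
                router_logits: Sequence[float],
                experts: Sequence[Callable[[float], float]],
                top_k: int = 2) -> float:
    """Toy top-k MoE layer: only the top_k highest-scoring experts run for this token."""
    # Indices of the top_k router logits.
    chosen = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)[:top_k]
    # Renormalize gate weights over the chosen experts only.
    gates = softmax([router_logits[i] for i in chosen])
    # Unchosen experts are never called, which is where the compute saving comes from.
    return sum(g * experts[i](x) for g, i in zip(gates, chosen))


# Active-parameter fraction quoted in the post: 40B of 744B.
ACTIVE_FRACTION = 40 / 744  # about 0.054, i.e. ~5.4% of weights per token
```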

The training pipeline is unlike anything in the open-source world right now.

First, they adopted DeepSeek's Sparse Attention, which cuts attention computation by half on long sequences. 90% of attention entries in long contexts turned out to be redundant. GLM-5 just skips them.
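
A minimal sketch of the general "skip most attention entries" idea: each query keeps only its k highest-scoring keys and ignores the rest. This is a generic top-k sparse attention toy, not DeepSeek Sparse Attention itself, since the post doesn't describe how DSA selects keys. For clarity it still scores every key; for a real speedup, an implementation needs a cheap way to pick the kept keys before doing the expensive work.

```python
import math
from typing import List, Sequence


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def topk_sparse_attention(q: Sequence[float],
                          keys: Sequence[Sequence[float]],
                          values: Sequence[Sequence[float]],
                          k: int) -> List[float]:
    """Attend from one query to only the k highest-scoring keys (toy illustration)."""
    scale = 1.0 / math.sqrt(len(q))
    scores = [dot(q, key) * scale for key in keys]
    # Keep the k best positions; all other attention entries are dropped.
    kept = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)[:k]
    # Softmax over the kept scores only.
    m = max(scores[i] for i in kept)
    weights = [math.exp(scores[i] - m) for i in kept]
    total = sum(weights)
    # Weighted sum of the kept value vectors.
    dim = len(values[0])
    out = [0.0] * dim
    for w, i in zip(weights, kept):
        for d in range(dim):
            out[d] += (w / total) * values[i][d]
    return out
```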

Then they built a fully asynchronous reinforcement learning system. Most RL training wastes massive GPU time waiting for the slowest agent to finish its task. GLM-5's system decouples generation from training entirely. The inference engine runs continuously generating agent trajectories while the training engine updates weights in parallel. No waiting. No idle GPUs.
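
The decoupling described here is essentially a producer/consumer pattern. Below is a toy Python version using `threading` and `queue.Queue`: a rollout thread keeps generating trajectories while a trainer thread consumes batches and bumps a policy version number. Class and field names are hypothetical and "training" is just a counter; the point is that neither side waits for the other except through the bounded queue, and that consumed rollouts may come from an older policy version (recorded as `staleness`).

```python
import queue
import threading
from dataclasses import dataclass
from typing import List


@dataclass
class Trajectory:
    policy_version: int  # weights version that generated this rollout
    reward: float


class AsyncRLLoop:
    """Toy decoupled RL: a rollout thread and a trainer thread share only a queue."""

    def __init__(self, batch_size: int = 4, max_queue: int = 16) -> None:
        self.buffer: "queue.Queue[Trajectory]" = queue.Queue(maxsize=max_queue)
        self.batch_size = batch_size
        self.policy_version = 0          # bumped by the trainer after each update
        self.lock = threading.Lock()
        self.staleness: List[int] = []   # how many versions old each consumed rollout was

    def generate(self, n_rollouts: int) -> None:
        # Inference side: keeps producing without waiting for training steps.
        for i in range(n_rollouts):
            with self.lock:
                version = self.policy_version
            self.buffer.put(Trajectory(policy_version=version, reward=float(i % 2)))

    def train(self, n_updates: int) -> None:
        # Training side: consumes whatever rollouts are ready, then "updates weights".
        for _ in range(n_updates):
            batch = [self.buffer.get() for _ in range(self.batch_size)]
            with self.lock:
                self.staleness.extend(self.policy_version - t.policy_version for t in batch)
                self.policy_version += 1  # stand-in for an optimizer step

    def run(self, n_updates: int) -> None:
        gen = threading.Thread(target=self.generate, args=(n_updates * self.batch_size,))
        trn = threading.Thread(target=self.train, args=(n_updates,))
        gen.start()
        trn.start()
        gen.join()
        trn.join()
```

The recorded staleness is the quantity an asynchronous design has to keep in check, since the trainer may learn from rollouts produced by slightly older weights.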

The RL happens in three stages: Reasoning RL first, then Agentic RL for coding and search tasks, then General RL for human-style alignment. And they use cross-stage distillation at the end so the model doesn't forget what it learned in earlier stages.

But here's what actually separates this from every other model paper:

They gave it a simulated vending machine business to run for an entire year.

GLM-5 finished with $4,432 in its bank account. That's #1 among all open-source models. It planned inventory, managed cash flow, made purchasing decisions, and adapted to changing conditions over 365 simulated days. Autonomously.

They also built over 10,000 real-world software engineering environments for it to train in. Not toy problems. Real GitHub issues across Python, Java, Go, C++, Rust, JavaScript, TypeScript, PHP, and Ruby. Real test suites. Real codebases.

On SWE-bench Verified, the gold standard for measuring whether an AI can actually fix real software bugs, GLM-5 scores 77.8%. That beats Gemini 3 Pro and GPT-5.2. It's approaching Claude Opus 4.5's 80.9%.

On Terminal-Bench 2.0, it matches Claude Opus 4.5 when you fix ambiguous instructions in the benchmark.

On BrowseComp, the web browsing agent benchmark, GLM-5 scores 75.9% with context management. That's #1 across every model tested, open or proprietary.

And perhaps most impressively, it's fully optimized to run on seven different Chinese chip platforms. Huawei Ascend, Moore Threads, Hygon, Cambricon, Kunlunxin, MetaX, and Enflame. On a single Chinese node, it matches the performance of dual-GPU international clusters while cutting deployment costs by 50%.

This is the part where the geopolitical implications get uncomfortable:

A Chinese lab just released an open-weights model that matches Claude and GPT-5.2 on real-world coding, runs natively on Chinese hardware, and was good enough to fool the Western developer community into thinking it was made by Anthropic.

The AI race isn't coming. It's here. And the gap between open and closed, between East and West, is narrowing faster than anyone projected.

GLM-5 is open-weights. Code and models available now.

Tom Leranth retweeted

This story is actually insane:

• dude drops $2000 on a DJI robot vacuum like a lunatic

• refuses to use the normal app like a peasant

• Sammy Azdoufal fires up Claude to crack the API so he can drive it with an xbox controller

• Claude delivers the goods

• pulls an auth token from their servers, connects successfully

• except the system thinks he controls 7000 vacuums

• checks again

• yep, seven thousand

• DJI built authentication with zero device ownership verification

• any valid token works for any unit on the planet

• Sammy now has eyes inside homes across 24 countries

• live vacuum camera feeds everywhere

• full floor plans from the mapping data

• some guy in germany eating cereal at 3am, unaware his roomba is snitching

• one API call away from being the most informed burglar in history

• all he wanted was to steer his vacuum with a joystick

• does the right thing and reports it

• DJI fixes it in two days

• back to normal life with his stupidly expensive floor cleaner

• IoT companies stay undefeated at shipping garbage security
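
The flaw described above is a missing object-level authorization check: the server verified that a token was valid (authentication) but not that the token's owner was allowed to access the requested device (authorization). OWASP's API Security Top 10 calls this class Broken Object Level Authorization. A minimal Python sketch with an entirely hypothetical `DeviceAPI` (not DJI's service) contrasts the two checks:

```python
from typing import Dict, Optional, Set


class DeviceAPI:
    """Hypothetical cloud API for robot vacuums; not DJI's actual service."""

    def __init__(self) -> None:
        self.tokens: Dict[str, str] = {}       # auth token -> user id
        self.owners: Dict[str, Set[str]] = {}  # user id -> device ids they own

    def _user_for(self, token: str) -> Optional[str]:
        return self.tokens.get(token)

    def get_feed_vulnerable(self, token: str, device_id: str) -> str:
        # Authentication only: any valid token unlocks any device.
        if self._user_for(token) is None:
            raise PermissionError("invalid token")
        return f"camera feed for {device_id}"

    def get_feed(self, token: str, device_id: str) -> str:
        # Authentication and authorization: the caller must own this device.
        user = self._user_for(token)
        if user is None:
            raise PermissionError("invalid token")
        if device_id not in self.owners.get(user, set()):
            raise PermissionError("device not owned by caller")
        return f"camera feed for {device_id}"
```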

Tom Leranth retweeted

Google just dropped 145 pages documenting how researchers use Gemini to tackle scientific problems.

𝘚𝘢𝘷𝘦 & 𝘙𝘦𝘵𝘸𝘦𝘦𝘵 (𝘵𝘰 𝘩𝘦𝘭𝘱 𝘺𝘰𝘶𝘳 𝘯𝘦𝘵𝘸𝘰𝘳𝘬)

A few things that stood out to me (in simple terms):

- In one case, the AI was used as an adversarial reviewer and caught a serious flaw in a cryptography proof that had passed human review. That’s a very different use than “summarise this PDF.”

- The model links tools from very different fields (for example, using theorems from geometry/measure theory to make progress on algorithms questions). This is where its wide reading really matters.

- They don’t let the model run wild. Humans still choose the problems, check every proof, and decide what’s actually new. The model is there to suggest ideas, spot gaps, and do the heavy algebra.

- Agentic loops, not just chat

In some projects, they plug Gemini into a loop where it:

-- proposes a mathematical expression,

-- writes code to test it,

-- reads the error messages, and

-- fixes itself (humans only step in when something promising appears).
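
The propose, test, read errors, fix cycle can be sketched in a few lines. In this toy version the "model" is a hypothetical `propose` callback that sees the error messages from earlier attempts; `check` compiles a candidate expression in `n`, runs it against test cases, and returns an error string or `None`. This is not Google's pipeline. Note that `eval` with empty builtins is not a real sandbox; untrusted generated code should run in an isolated process.

```python
from typing import Callable, List, Optional, Tuple


def check(expr: str, cases: List[Tuple[int, int]]) -> Optional[str]:
    """Run a candidate expression in n against test cases; return an error message or None."""
    try:
        fn: Callable[[int], int] = eval(f"lambda n: {expr}", {"__builtins__": {}})
    except SyntaxError as exc:
        return f"SyntaxError: {exc.msg}"
    for n, want in cases:
        try:
            got = fn(n)
        except Exception as exc:  # runtime failure inside the candidate
            return f"{type(exc).__name__}: {exc}"
        if got != want:
            return f"f({n}) = {got}, expected {want}"
    return None


def agentic_loop(propose: Callable[[List[str]], str],
                 cases: List[Tuple[int, int]],
                 max_steps: int = 5) -> Optional[str]:
    feedback: List[str] = []
    for _ in range(max_steps):
        expr = propose(feedback)   # the model proposes, conditioned on past errors
        error = check(expr, cases)
        if error is None:
            return expr            # promising candidate: hand it to a human to verify
        feedback.append(f"{expr!r} failed: {error}")
    return None
```

For example, a scripted proposer that first emits `"n ** 2 +"` (syntax error), then `"n * (n + 1)"` (wrong values), then `"n ** 2"` converges on the closed form for the sum of the first n odd numbers.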

We are moving past the era of simple chat prompts and into a more sophisticated era of research.

⮑ If your institution is interested in hosting an AI session or a workshop, request your training here: forms.gle/dbRtc7j2W4zZyL…

Tom Leranth retweeted

Thomas Watson has a new computational complexity textbook about to be published by Cambridge University Press. There's a free version online for personal use.

complexityincs.com

Tom Leranth retweeted

We are thrilled to announce a strategic partnership with Google!

Google is also making a financial investment in Sakana AI to strengthen this collaboration.

This underscores their recognition of our technical depth and our mission to advance AI in Japan.

We are combining Google’s world-class products with our agile R&D to tackle complex challenges.

By leveraging models like Gemini and Gemma, we will accelerate our breakthroughs in automated scientific discovery.

Our work on The AI Scientist and ALE-Agent has already demonstrated the power of these models.

Now we are going further.

We are scaling our deployment of reliable AI in mission-critical sectors.

We are working with financial institutions and government organizations to deliver solutions that meet the highest standards of security and data sovereignty.

We are excited to drive the widespread adoption of reliable AI and advance Japan’s AI ecosystem together!

Tom Leranth retweeted

Announcing Numina-Lean-Agent: an open-source framework achieving SOTA in formal theorem proving using generic models.

Two major breakthroughs:

🏆 12/12 on Putnam 2025

📜 Formalized the "Effective Brascamp-Lieb inequalities" research paper (>90% AI-generated)

Code: github.com/project-numina…

Demo: demo.projectnumina.ai

Paper: github.com/project-numina…

Tom Leranth retweeted

There's an entire parallel scientific corpus most Western researchers never see.

Today I'm launching chinarxiv.org, a fully automated pipeline that translates all Chinese preprints, including the figures, to make that corpus available.

Tom Leranth retweeted

All of my Deep RL course lecture videos from Spring 2025 are now online! 🥳

YouTube playlist: youtube.com/watch?v=EvHRQh…

@VessOnSecurity ...and here I thought the US healthcare/insurance system was crappily expensive (it is!). Everyone likes to tout the Canadian or European systems. Your current situation sucks. Keep hanging on and go enjoy more of your favorite things in life!

bontchev.nlcv.bas.bg/bye.html

Archived link: archive.is/htZOu

If you can't be assed to read it (it's rather long), the TL;DR version is that I'm dying.

Tom Leranth retweeted

Semantic search without sending your data to the cloud?

Yes, please!

In this issue of Docker’s AI Newsletter, see how to generate embeddings locally with Docker Model Runner - no API fees, no third-party access, just full control.

Try it → bit.ly/48xpg9a

#Docker #AI #SemanticSearch
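
Once embeddings are generated locally, semantic search itself comes down to nearest-neighbor ranking, commonly by cosine similarity. The sketch below uses tiny made-up 3-dimensional vectors as stand-ins for real embedding output and does not call Docker Model Runner; wiring in its API is left out because the post doesn't specify it.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search(query_vec: Sequence[float],
           index: Dict[str, Sequence[float]],
           top_n: int = 2) -> List[Tuple[str, float]]:
    """Rank stored documents by cosine similarity to the query embedding."""
    scored = [(doc, cosine(query_vec, vec)) for doc, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_n]


# Placeholder vectors; in practice each would come from a locally run embedding model.
index = {
    "bloom filters": [0.9, 0.1, 0.0],
    "robot vacuums": [0.0, 0.2, 0.9],
    "hash tables": [0.8, 0.3, 0.1],
}
```

Because the documents and the query are embedded on the same machine, neither the text nor the vectors have to leave it.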
