BitcoinAddict⚡

20.7K posts

@BitcoinAddict_

Bitcoin-only. Not crypto. Building @NakaPayApp⚡, @RapidMPV 🚀, @StarseedCapital

Joined August 2016

304 Following, 3.5K Followers

Pinned Tweet

BitcoinAddict⚡ retweeted

Qwen 3.6 Plus Preview just dropped on OpenRouter.

1,000,000 token context window. $0 input. $0 output.

It's Free.

A million tokens of context for free.

This is insane.

Claude Opus 4.6 charges $5/$25 per million tokens for 200K context.

GPT 5.4 charges for 1M context.

Qwen just gave it away. All free.

Open source is not slowing down. It's accelerating.

For the Qwen3.5-Omni family (Plus/Flash/Light variants), the smaller Flash or Light ones should run efficiently locally on a high-end consumer rig: NVIDIA RTX 4090 (24GB VRAM) or equivalent, with 4/8-bit quantization via vLLM or Ollama for real-time audio-visual processing and 256k context.

Plus likely needs multi-GPU enterprise hardware (e.g., 2+ H100s) for full offline capacity.
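As a rough sanity check on those hardware tiers, weight memory at different quantization levels can be estimated with a back-of-the-envelope calculation. This is only a sketch: the 30B parameter count is a hypothetical placeholder (the post does not state model sizes), and the estimate covers weights only, ignoring KV cache and activations.

```python
def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


# Hypothetical 30B-parameter model; Qwen3.5-Omni sizes are not given in the post.
PARAMS = 30e9

for bits in (16, 8, 4):
    print(f"{bits}-bit weights: {weight_memory_gb(PARAMS, bits):.1f} GB")
```

Under that assumed size, only the 4-bit weights would fit in a 24GB card with room left for context.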

Weights and quantized versions are hitting HF soon—start with their Offline Demo space for testing. Cloud API is the smoothest for now.

🚀 Qwen3.5-Omni is here! Scaling up to a native omni-modal AGI.

Meet the next generation of Qwen, designed for native text, image, audio, and video understanding, with major advances in both intelligence and real-time interaction.

A standout feature: 'Audio-Visual Vibe Coding'. Describe your vision to the camera, and Qwen3.5-Omni-Plus instantly builds a functional website or game for you.

Offline Highlights:

🎬 Script-Level Captioning: Generate detailed video scripts with timestamps, scene cuts & speaker mapping.

🏆 SOTA Performance: Outperforms Gemini-3.1 Pro in audio and matches its audio-visual understanding.

🧠 Massive Capacity: Natively handle up to 10h of audio or 400s of 720p video, trained on 100M+ hours of data.

🌍 Global Reach: Recognizes 113 languages (speech) & speaks 36.

Real-time Features:

🎙️ Fine-Grained Voice Control: Adjust emotion, pace, and volume in real-time.

🔍 Built-in Web Search & complex function calling.

👤 Voice Cloning: Customize your AI's voice from a short sample, with engineering rollout coming soon.

💬 Human-like Conversation: Smart turn-taking that understands real intent and ignores noise.

The Qwen3.5-Omni family includes Plus, Flash, and Light variants.

Try it out:

Blog: qwen.ai/blog?id=qwen3.…

Realtime Interaction: click the VoiceChat/VideoChat button (bottom-right): chat.qwen.ai

HF-Demo: huggingface.co/spaces/Qwen/Qw…

HF-VoiceOnline-Demo: huggingface.co/spaces/Qwen/Qw…

API-Offline: alibabacloud.com/help/en/model-…

API-Realtime: alibabacloud.com/help/en/model-…

BitcoinAddict⚡ retweeted

@HodlMagoo It doesn’t fund the economy. It funds the bankers’ profits.

Coinbase wants to pay you 4-5% yield on USDC.

Your bank pays you 0.5%.

The math is obvious. The capital flight would be real.

But the banks aren't just protecting profits. They're protecting the credit creation engine that funds the entire economy.

Fractional reserve banking IS growth financing.

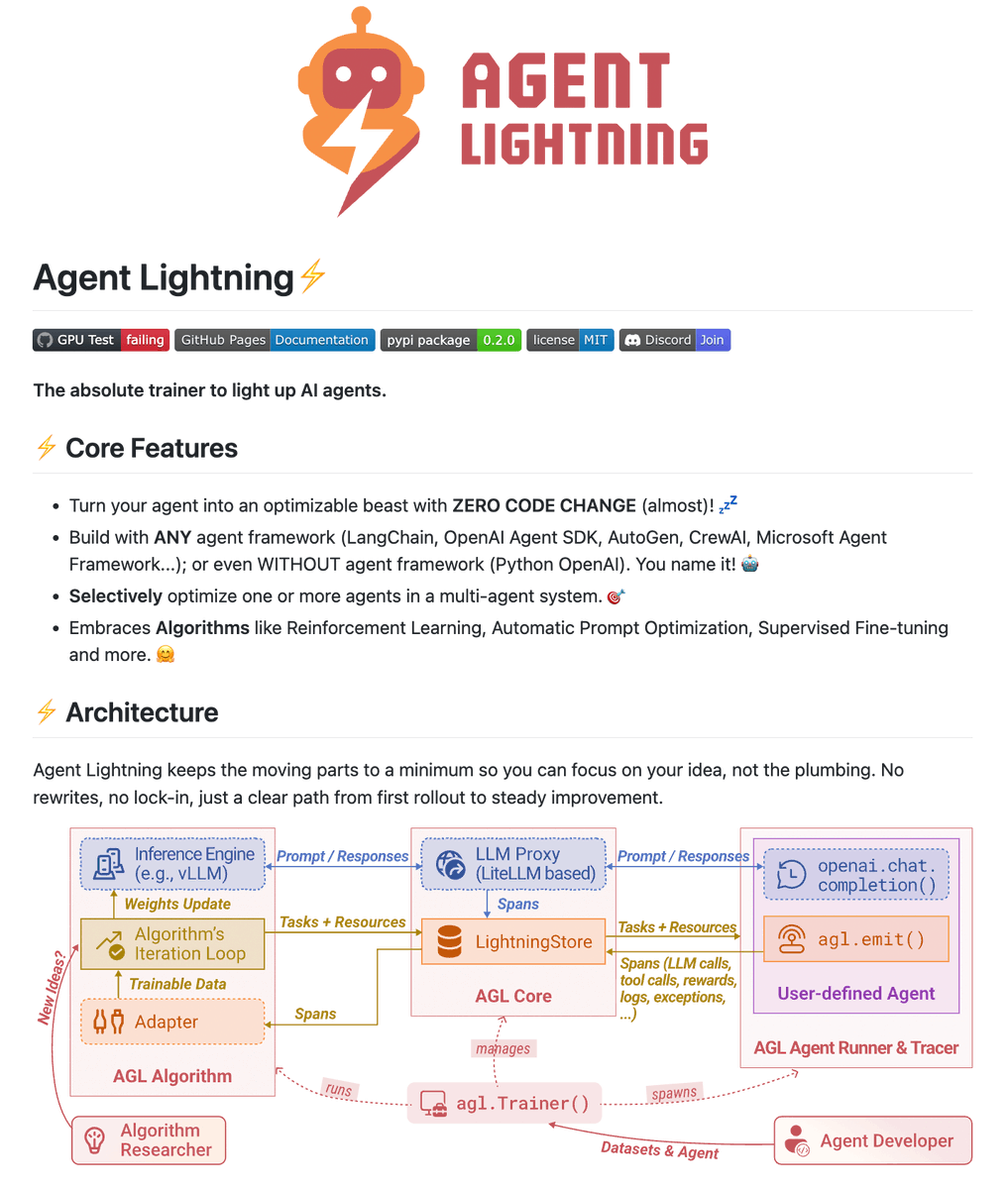

Microsoft did it again!

Building with AI agents almost never works on the first try.

A dev has to spend days tweaking prompts, adding examples, hoping it gets better.

This is exactly what Microsoft's Agent Lightning solves.

It's an open-source framework that trains ANY AI agent with reinforcement learning. Works with LangChain, AutoGen, CrewAI, OpenAI SDK, or plain Python.

Here's how it works:

> Your agent runs normally with whatever framework you're using. Just add a lightweight agl.emit() helper or let the tracer auto-collect everything.

> Agent Lightning captures every prompt, tool call, and reward. Stores them as structured events.

> You pick an algorithm (RL, prompt optimization, fine-tuning). It reads the events, learns patterns, and generates improved prompts or policy weights.

> The Trainer pushes updates back to your agent. Your agent gets better without you rewriting anything.
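The collect-then-improve loop described above can be illustrated with a toy example. To be clear, this is not Agent Lightning's API: the `Event` class and `best_prompt` function are hypothetical names, and picking the prompt variant with the highest mean reward stands in for the real algorithms (RL, prompt optimization, fine-tuning).

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass
class Event:
    """One recorded step of an agent run (hypothetical structure)."""
    prompt_id: str   # which prompt variant the agent used
    tool_call: str   # name of the tool the agent invoked
    reward: float    # score assigned to the outcome


def best_prompt(events):
    """Return the prompt variant with the highest mean reward."""
    rewards = defaultdict(list)
    for event in events:
        rewards[event.prompt_id].append(event.reward)
    return max(rewards, key=lambda pid: mean(rewards[pid]))


# Made-up events purely for illustration.
events = [
    Event("v1", "search", 0.2),
    Event("v1", "search", 0.4),
    Event("v2", "search", 0.9),
    Event("v2", "calculator", 0.7),
]

print(best_prompt(events))
```

The point of the sketch is the shape of the data: once runs are stored as structured events with rewards, choosing or training a better policy becomes a separate step from running the agent.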

In fact, you can also optimize individual agents in a multi-agent system.

I have shared the link to the GitHub repo in the replies!

i thought it was clickbait so i backtested it.

since feb 21 i've been running a collector that records binance BTC price (websocket, <50ms latency) and @Polymarket CLOB best bid/ask (websocket, ~275 updates/sec). 5 weeks of data. ~18GB total.

i ran this strategy across 10,019 rounds with a flat $5/trade (no Kelly, no compounding):

→ +$5,163 cumulative PnL on a $500 bankroll

→ 73.3% win rate

→ not a single red day in 35 days

→ $1.26 EV per trade at the optimal delta threshold (0.20%)
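The headline numbers can be cross-checked with simple arithmetic. This sketch uses only the figures quoted above. Note that the average per round comes out well below $1.26, presumably because the $1.26 figure is quoted at the optimal delta threshold and so covers a different subset of trades (an assumption, since the post does not break this down).

```python
rounds = 10_019
cumulative_pnl = 5_163.0   # USD, as quoted
bankroll = 500.0           # USD starting bankroll
stake = 5.0                # USD flat stake per trade

pnl_per_round = cumulative_pnl / rounds          # average PnL across all rounds
return_on_bankroll = cumulative_pnl / bankroll   # PnL as a multiple of bankroll
return_per_stake = pnl_per_round / stake         # average return per dollar staked

print(f"avg PnL per round:  ${pnl_per_round:.2f}")
print(f"PnL vs bankroll:    {return_on_bankroll:.2f}x")
print(f"return per $ staked: {return_per_stake:.1%}")
```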

the strategy works. it's real edge.

but this doesn't mean anyone can do it even with claude.

the needed infra:

> server co-location: polymarket's matching engine is in london. equinix LD4 gets 0.56ms latency. a random US vps? 80-120ms.

> dual websocket pipeline: binance for signal, polymarket CLOB for prices, both running 24/7 with auto-reconnect. REST polling is too slow

> FOK fill rate: real-world fill rate on competitive BTC contracts is ~30%, not 100%. backtest won't tell us that

> language: python adds 10-30ms per order. rust clients hit 1-3ms.

> cloudflare + geo-blocks: data center IPs get flagged, some regions are blocked for trading.

> wallet plumbing: proxy wallet signatures, USDC approvals on polygon, derived API creds (not static keys).

> one crash = 12+ missed rounds. pm2, exponential backoff, telegram alerts, weekly db rotation
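The auto-reconnect and exponential backoff items above can be sketched as follows. This is a minimal illustration, not the poster's code: `connect` is a hypothetical callable standing in for whatever opens the binance or polymarket websocket, and the base, factor, and cap values are arbitrary assumptions.

```python
import time


def backoff_delays(base=1.0, factor=2.0, cap=60.0, attempts=8):
    """Exponentially growing delays in seconds, each capped at `cap`."""
    delays = []
    delay = base
    for _ in range(attempts):
        delays.append(min(delay, cap))
        delay *= factor
    return delays


def run_with_reconnect(connect, max_attempts=8, sleep=time.sleep):
    """Call `connect` until it succeeds, sleeping longer after each failure."""
    for delay in backoff_delays(attempts=max_attempts):
        try:
            return connect()
        except ConnectionError:
            sleep(delay)
    raise RuntimeError("gave up after repeated connection failures")


print(backoff_delays())
```

A production collector would also reset the backoff after a healthy session and fire an alert (telegram, in the post's setup) when the retries run out.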

AdiiX@adiix_official


@icanvardar I did. It’s very basic in how it writes code. Very low quality, and not worth it even if it’s 10x cheaper.

For simple tasks it’s OK.

@heynavtoor This repo is from 2 years ago and it uses the OpenAI model so it's the same price as OpenAI.

🚨 OpenAI charges $0.006/minute. Google charges $0.024. AWS charges $0.024.

Someone just open sourced a tool that does it for $0. And it's faster than all of them.

It's called Insanely Fast Whisper. And that's not hype. That's the benchmark.

150 minutes of audio. 98 seconds to transcribe. On your own machine. No API key. No cloud. No per-minute billing.

Here's what the numbers look like:

→ Whisper Large v3 + Flash Attention 2: 150 min of audio in 98 seconds

→ Distil Whisper + Flash Attention 2: 150 min in 78 seconds

→ Standard Whisper without optimization: 31 minutes for the same job

→ That's a 19x speedup. Same model. Same accuracy. Just faster.
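The 19x figure follows directly from the quoted timings, and the same numbers give the realtime factor and the API cost being avoided. A small sketch using only figures from the post (the $0.006/minute rate is the OpenAI price quoted at the top):

```python
audio_seconds = 150 * 60      # 150 minutes of audio
baseline_seconds = 31 * 60    # standard Whisper without optimization
large_v3_seconds = 98         # Whisper Large v3 + Flash Attention 2
distil_seconds = 78           # Distil Whisper + Flash Attention 2

speedup_large_v3 = baseline_seconds / large_v3_seconds   # vs. unoptimized run
realtime_large_v3 = audio_seconds / large_v3_seconds     # audio sec per wall sec
realtime_distil = audio_seconds / distil_seconds
openai_cost = 150 * 0.006                                # USD for the same audio

print(f"speedup vs baseline:      {speedup_large_v3:.1f}x")
print(f"realtime factor (large):  {realtime_large_v3:.1f}x")
print(f"realtime factor (distil): {realtime_distil:.1f}x")
print(f"OpenAI API cost:          ${openai_cost:.2f}")
```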

Here's what it does:

→ One command to transcribe any audio file or URL

→ Speaker diarization — knows WHO said WHAT

→ Transcription AND translation to other languages

→ Runs on NVIDIA GPUs and Mac (Apple Silicon)

→ Flash Attention 2 for maximum speed

→ Clean JSON output with timestamps

→ Works with every Whisper model variant

Here's the wildest part:

Otter.ai charges $100/year. Rev charges $1.50/minute. Descript charges $24/month. Enterprise transcription contracts cost thousands.

Podcasters, journalists, researchers, lawyers, content creators — anyone still paying for transcription is lighting money on fire.

8.8K GitHub stars. 633 forks. MIT License.

100% Open Source.

(Link in the comments)

@AlexFinn What will have better performance?

Mac mini or MacBook Pro M5?

Same RAM. Don’t compare the price.

This is potentially the biggest news of the year

Google just released TurboQuant. An algorithm that makes LLMs smaller and faster, without losing quality

Meaning that 16gb Mac Mini now can run INCREDIBLE AI models. Completely locally, free, and secure

This also means:

• Much larger context windows possible with way less slowdown and degradation

• You’ll be able to run high quality AI on your phone

• Speed and quality up. Prices down.

The people who made fun of you for buying a Mac Mini now have major egg on their face.

This pushes all of AI forward in such a MASSIVE way

It can’t be stated enough: props to Google for releasing this for all. They could have gatekept it for themselves like I imagine a lot of other big AI labs would have. They didn’t. They decided to advance humanity.

2026 is going to be the biggest year in human history.

Google Research @GoogleResearch

Introducing TurboQuant: Our new compression algorithm that reduces LLM key-value cache memory by at least 6x and delivers up to 8x speedup, all with zero accuracy loss, redefining AI efficiency. Read the blog to learn how it achieves these results: goo.gle/4bsq2qI
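To put the "at least 6x" key-value cache claim in perspective, the size of an uncompressed KV cache can be estimated with the standard formula. This is a sketch under stated assumptions: the model dimensions below are hypothetical (not any specific model), and dividing by 6 simply applies the quoted ratio rather than modeling how TurboQuant works.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, bytes_per_value=2):
    """Uncompressed KV cache size: keys plus values for every layer and token."""
    return 2 * layers * kv_heads * head_dim * seq_len * bytes_per_value


# Hypothetical model: 32 layers, 8 KV heads of dimension 128, fp16 values.
full = kv_cache_bytes(layers=32, kv_heads=8, head_dim=128, seq_len=131_072)
compressed = full / 6   # applying the quoted 6x reduction

GIB = 2**30
print(f"fp16 KV cache at 128K tokens: {full / GIB:.1f} GiB")
print(f"after a 6x reduction:         {compressed / GIB:.2f} GiB")
```

Note that this only affects the cache that grows with context length. The model weights themselves are a separate memory cost.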

@jpschroeder How is it better than React? AI agents are great at React as well.

We’re open sourcing ArrowJS 1.0: the first UI framework for coding agents.

Imagine React/Vue, but with no compiler, build process, or JSX transformer. It’s just TS/JS so LLMs are already *great* at it.

AND run generated code securely w/ sandbox pkg.

➡️ arrow-js.com

@slow_developer What metric are you using? For me GPT sucks big time and cannot build a simple app.

@matteopelleg @nikitabier It’s by design. The algo has an agenda.

@Mnilax @dcanerate How much does Polymarket pay you to shill for them?

another trader went from -$18,000 to +$550,000 in a month after installing the right MCPs.

think it's a joke?

you can check him and see for yourself:

$2,537.52 -> $18,189.73 (+616.83%)

$4,660.97 -> $23,015.07 (+393.78%)

$11,468.85 -> $36,842.38 (+221.24%)

this is all on 5-min BTC markets on Polymarket.

he’s not a financial analyst or a genius.

after a streak of liquidations, he just deep-dived into the nuances of AI and figured out where his bot was losing to the rest.

MCP plugins are the most underrated thing right now.

you can do the exact same.

Mnimiy @Mnilax

@Umesh__digital Claude Code.

Codex writes code.

Claude Code understands your entire project.

Not the same thing. 👀
