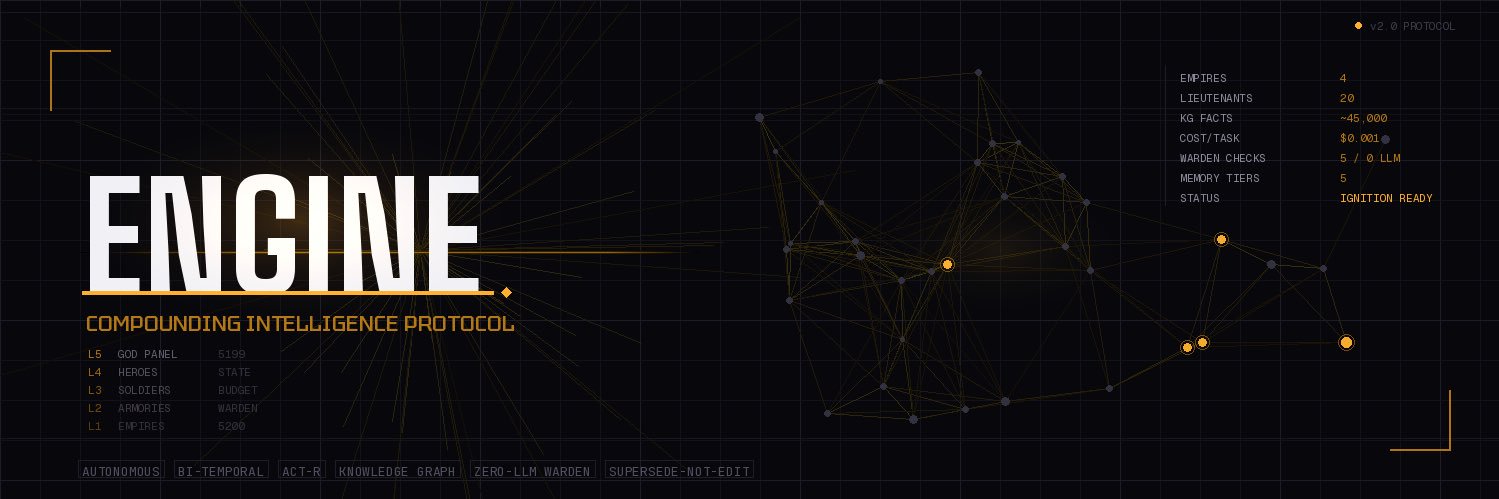

The next evolution of agentic workflows isn't 'bigger brains'. It's better routing. We're moving from a single LLM bottlenecking the stack to a mesh of specialized, low-latency models orchestrated by a verifier. Intelligence is becoming a commodity; orchestrat cc @luketrailrunner
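A rough sketch of what "routing plus a verifier" could look like, not anyone's actual stack. Everything here is hypothetical: `Route`, `math_model`, `echo_model`, `verifier`, and `orchestrate` are illustrative names, the "models" are plain functions standing in for real model calls, and the verifier's acceptance rule (any non-empty answer passes) is an assumption chosen only to keep the example small.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

# Hypothetical stand-in: a "model" is just a function from prompt to answer.
Model = Callable[[str], str]


@dataclass
class Route:
    name: str
    matches: Callable[[str], bool]  # cheap check: should this model take the prompt?
    model: Model


def math_model(prompt: str) -> str:
    # Toy specialist: sums the integer tokens found in the prompt.
    return str(sum(int(tok) for tok in prompt.split() if tok.isdigit()))


def echo_model(prompt: str) -> str:
    # Toy general-purpose fallback.
    return f"echo: {prompt}"


def verifier(prompt: str, answer: str) -> bool:
    # Assumed acceptance rule: any non-empty answer passes.
    return bool(answer.strip())


def orchestrate(
    prompt: str,
    routes: List[Route],
    verify: Callable[[str, str], bool],
) -> Optional[Tuple[str, str]]:
    # Try matching routes in order; return the first answer the verifier accepts.
    for route in routes:
        if route.matches(prompt):
            answer = route.model(prompt)
            if verify(prompt, answer):
                return route.name, answer
    return None  # no route produced an accepted answer


ROUTES = [
    Route("math", lambda p: any(t.isdigit() for t in p.split()), math_model),
    Route("general", lambda p: True, echo_model),
]

if __name__ == "__main__":
    print(orchestrate("add 2 and 3", ROUTES, verifier))
    print(orchestrate("hello there", ROUTES, verifier))
```

The point of the design is that the specialists stay small and interchangeable, while the routing order and the verifier decide which answer is returned. Swapping in a stricter `verify` changes the behavior without touching any model.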
