--

49 posts

-- nag-retweet

Did you know there's a "game" for learning to work with Git branches interactively?

You'll work with 8 commands, plus their variants, across 34 levels. And it's available in Spanish.

learngitbranching.js.org

-- nag-retweet

CYBERSECURITY course created by Samsung and the University of Málaga!

→ FREE

→ 100% online

→ With certificate

→ 150 hours long (3 months)

→ 5 modules

→ Limited spots

mouredev.link/cursociber

-- nag-retweet

Free Google IT Certification Courses in 2023:

(Bookmark for later)

1. Data Science with Python

simplilearn.com/getting-starte…

2. Create Image Captioning Models

cloudskillsboost.google/course_templat…

3. Encoder-Decoder Architecture

cloudskillsboost.google/course_templat…

4. Google Cloud Computing Foundations: Cloud Computing Fundamentals

cloudskillsboost.google/course_templat…

5. Introduction to Baseline: Data, ML, AI

cloudskillsboost.google/course_templat…

6. Introduction to Google Cloud Essentials

cloudskillsboost.google/course_templat…

7. Google IT Automation with Python

coursera.org/professional-c…

8. Introduction to Large Language Models

cloudskillsboost.google/course_templat…

9. Introduction to Generative AI

cloudskillsboost.google/course_templat…

10. Generative AI Fundamentals

cloudskillsboost.google/course_templat…

11. Introduction to Responsible AI

cloudskillsboost.google/course_templat…

12. Introduction to Image Generation

cloudskillsboost.google/course_templat…

13. Attention Mechanism

cloudskillsboost.google/course_templat…

14. Transformer Models and BERT Model

cloudskillsboost.google/course_templat…

15. Introduction to Generative AI Studio

cloudskillsboost.google/course_templat…

16. Google Cloud Computing Foundations

classcentral.com/course/google-…

17. Google IT Support Professional Certificate

coursera.org/professional-c…

18. Google UX Design Professional Certificate

coursera.org/professional-c…

19. Build apps with Flutter

developers.google.com/learn/pathways…

And many more on those platforms.

Just comment which certification you're looking for, and I'll share the link.

Follow @TheMsterDoctor1 For Amazing Tweets Like These.

#Google #Cloud #AI #DataScience #Coding

-- nag-retweet

Linear algebra, deep learning, and GPU architectures are fundamentally interconnected.

Linear algebra deals with vectors, matrices, and tensors, and it is the branch of math that deep learning (DL) is built on. GPUs are the hardware typically used to train DL models.

Tensors and GPUs are a marriage made in heaven!

GPUs were originally designed for graphics processing, but they are remarkably well suited to linear algebra operations.

Tensors are multidimensional arrays that can represent anything from a simple scalar to a complex n-dimensional array. They provide a unifying framework for representing data, weights, biases, and essentially every numerical aspect of a neural network.

0th-Order Tensor: A scalar, a single real or complex number.

1st-Order Tensor: A vector, an ordered list of n numbers (an n-tuple).

2nd-Order Tensor: A matrix, a grid of numbers arranged in rows and columns.

Higher-Order Tensor: A tensor of order three or more, generalizing vectors and matrices.

You can represent text, images, and videos as tensors and operate on these tensors to train the weights and biases of a neural network.
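A minimal sketch of the tensor orders above, assuming NumPy as the array library (the post does not name one). `ndim` reports a tensor's order and `shape` its size along each axis; the tiny 2x2 RGB "image" is a made-up example of how media maps onto a 3rd-order tensor.

```python
import numpy as np

# 0th-order tensor: a scalar (no axes)
scalar = np.array(3.5)
# 1st-order tensor: a vector with 3 entries
vector = np.array([1.0, 2.0, 3.0])
# 2nd-order tensor: a 2x3 matrix
matrix = np.array([[1.0, 2.0, 3.0],
                   [4.0, 5.0, 6.0]])
# 3rd-order tensor: a tiny 2x2 RGB image, shape (height, width, channels)
image = np.zeros((2, 2, 3))

for name, t in [("scalar", scalar), ("vector", vector),
                ("matrix", matrix), ("image", image)]:
    # ndim is the tensor's order (number of axes); shape is the size along each axis
    print(f"{name}: order={t.ndim}, shape={t.shape}")
```

A batch of such images would simply add one more leading axis, giving a 4th-order tensor of shape (batch, height, width, channels).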

Tensors support operations such as addition, matrix multiplication, transposition, contraction, reshaping, and slicing.
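The operations listed above, sketched in NumPy (an assumed library choice). Contraction is shown with `np.einsum`, which sums over an index shared by two tensors; all arrays are arbitrary toy values.

```python
import numpy as np

A = np.arange(6.0).reshape(2, 3)   # 2x3 matrix: [[0, 1, 2], [3, 4, 5]]
B = np.ones((2, 3))                # 2x3 matrix of ones

added = A + B                      # elementwise addition, shape (2, 3)
product = A @ B.T                  # matrix multiplication: (2, 3) @ (3, 2) -> (2, 2)
transposed = A.T                   # transpose, shape (3, 2)
reshaped = A.reshape(3, 2)         # same 6 values, new shape (3, 2)
row = A[1, :]                      # slicing: second row -> [3., 4., 5.]

# Contraction: sum over a shared index. For a 3rd-order tensor T with axes
# (i, j, k) and a vector v with axis k, contracting over k yields a matrix (i, j).
T = np.arange(24.0).reshape(2, 3, 4)
v = np.ones(4)
contracted = np.einsum("ijk,k->ij", T, v)   # equals T.sum(axis=2) because v is all ones
```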

Each layer of a neural network (NN) can be formulated as tensor operations, and core algorithms such as backpropagation and gradient descent are built from these same operations.
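To make this concrete, here is a minimal sketch of a single dense layer trained with backpropagation and gradient descent, written entirely as tensor operations in NumPy. The toy data, the mean-squared-error loss, the learning rate, and the step count are assumptions chosen for illustration; frameworks like PyTorch or TensorFlow compute these gradients automatically.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy data (hypothetical): 64 samples, 3 features, targets from a known linear rule
X = rng.normal(size=(64, 3))
true_W = np.array([[2.0], [-1.0], [0.5]])
y = X @ true_W + 0.3

# Parameters of one dense layer: weights (3x1) and bias (1,)
W = np.zeros((3, 1))
b = np.zeros(1)
lr = 0.1  # learning rate (arbitrary choice)

def mse(pred, target):
    """Mean squared error between predictions and targets."""
    return float(np.mean((pred - target) ** 2))

initial_loss = mse(X @ W + b, y)
for step in range(200):
    # Forward pass: a matrix multiplication plus a broadcast addition
    pred = X @ W + b
    # Backward pass: dL/dpred for MSE, then the chain rule through pred = XW + b
    grad_pred = 2.0 * (pred - y) / y.size
    grad_W = X.T @ grad_pred          # (3x64) @ (64x1) -> (3x1)
    grad_b = grad_pred.sum(axis=0)    # sum over the batch axis -> (1,)
    # Gradient descent update
    W -= lr * grad_W
    b -= lr * grad_b

final_loss = mse(X @ W + b, y)
```

Every step, forward and backward, is a matrix multiply, a transpose, an elementwise operation, or a reduction, which is exactly the kind of work a GPU parallelizes well.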

The cool thing about tensor operations is that they can be parallelized, and the cool thing about GPUs is that they are incredibly good at parallelism. Unlike CPUs, which typically have a few powerful cores optimized for sequential processing, GPUs have thousands of smaller, less powerful cores designed to execute operations simultaneously.

Tensors and GPUs work really well together:

Parallelism: The operations on tensors, especially those of higher orders, can be broken down into many smaller, independent tasks. This suits the architecture of a GPU, where thousands of these tasks can be run in parallel.

Memory Hierarchy: GPUs have a hierarchical memory structure, including global, shared, and local memory. Effective use of this hierarchy while working with tensors can lead to substantial efficiency gains.

Optimized Libraries: Libraries like cuBLAS, cuDNN, and others offer GPU-accelerated implementations specifically designed for tensor operations. Frameworks like TensorFlow and PyTorch are built on top of these, abstracting away the complexity.

Batch Processing: Deep learning models often process data in batches, which means performing the same operations on a whole set of data points simultaneously. This again plays into the parallelism that GPUs provide. You can think of it as applying the same transformation to every element in a grid simultaneously.
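A CPU-only sketch of the parallelism, memory-hierarchy, and batching points above, assuming NumPy. It does not run on a GPU; it only shows why the work splits into independent tasks. The shapes and tile size are arbitrary, and `tiled_matmul` is a hypothetical helper that mimics the blocking pattern GPU matmul kernels commonly use with shared memory, not a library function.

```python
import numpy as np

rng = np.random.default_rng(1)
A = rng.normal(size=(4, 6))
B = rng.normal(size=(6, 5))

# Parallelism: each output element C[i, j] = dot(A[i, :], B[:, j]) depends only on
# one row of A and one column of B, so all 4 * 5 = 20 dot products are independent.
# A GPU could hand them to different threads; here they run one after another.
C_tasks = np.empty((4, 5))
for i in range(4):
    for j in range(5):
        C_tasks[i, j] = A[i, :] @ B[:, j]

def tiled_matmul(A, B, tile=2):
    """Blocked matrix multiply (hypothetical helper).

    Each (i0, j0) output tile is computed independently by accumulating products
    of small input tiles, similar to how a GPU thread block stages tiles in
    shared memory before multiplying them.
    """
    n, k = A.shape
    _, m = B.shape
    C = np.zeros((n, m))
    for i0 in range(0, n, tile):
        for j0 in range(0, m, tile):
            for k0 in range(0, k, tile):  # walk along the shared dimension
                C[i0:i0 + tile, j0:j0 + tile] += (
                    A[i0:i0 + tile, k0:k0 + tile] @ B[k0:k0 + tile, j0:j0 + tile]
                )
    return C

C_tiled = tiled_matmul(A, B)

# Batch processing: applying the same weights to a whole batch of inputs is one
# matrix multiplication instead of a Python loop over samples.
batch = rng.normal(size=(32, 6))          # 32 samples, 6 features each
W = rng.normal(size=(6, 3))
out_batched = batch @ W                   # shape (32, 3)
out_looped = np.stack([x @ W for x in batch])
```

All three approaches produce the same numbers as a plain `A @ B` or `batch @ W`; the point is that the work decomposes into independent pieces, which is what lets a GPU spread it across thousands of cores.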

Over time, many software libraries and frameworks have been built to make developing AI simple and fast. You can iterate and experiment with your favorite NN in a couple of lines of code, while in the background the GPU crunches the tensor operations that let the model extract patterns from your data.

Further reading and image credit:

towardsai.net/p/deep-learnin…

Why GPUs for ML - weka.io/learn/ai-ml/gp…

-- nag-retweet

Anyone with an Internet connection can learn Data Analysis for free:

Excel - Microsoft Training Videos

tinyurl.com/sxyuyd3m

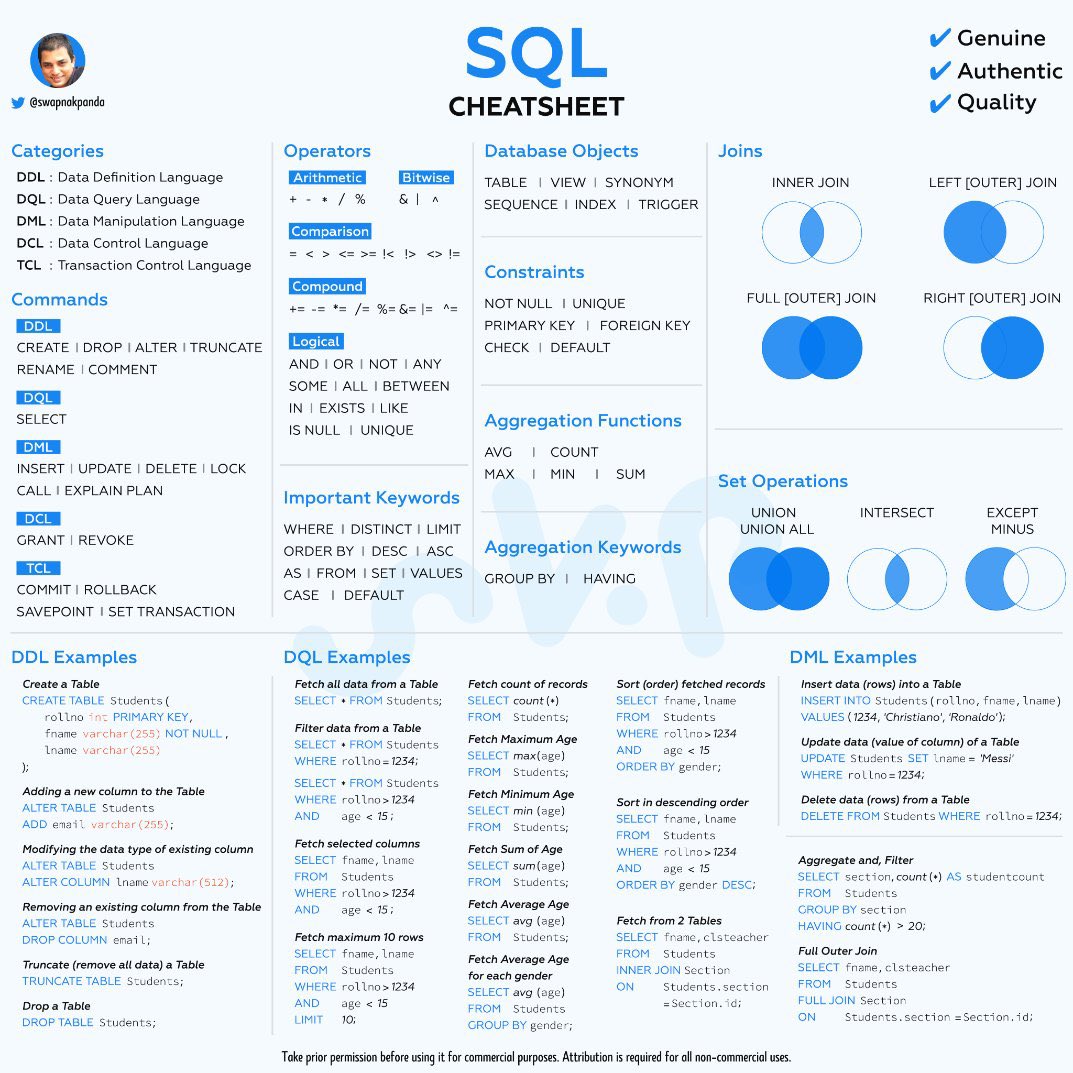

SQL - Mode

tinyurl.com/mry3vsrz

Power BI - Udemy Course

tinyurl.com/8ejmwjcb

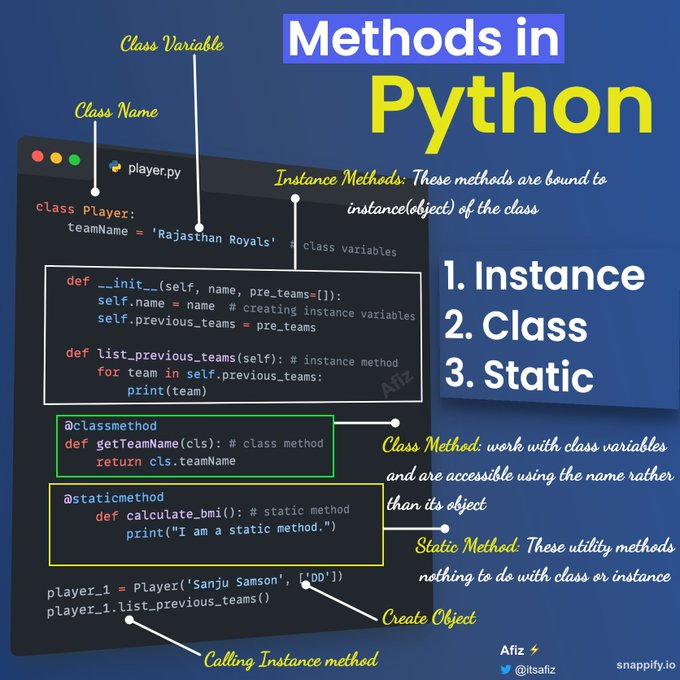

Python - Google Classes

tinyurl.com/4nb2encb

No more excuses.

-- nag-retweet