Nir Goren retweeted

Nir Goren

245 posts

@nirgoren

Researching AI https://t.co/eMCNT73s4T

Joined May 2018

117 Following, 115 Followers

CVPR 2026 highlight! 🔥

In this work co-led with @YehezkelShai, we show that a plain diffusion model can solve hard geometry problems by treating them as conditional image generation problems. No special architecture needed.

w/ @OmerDahary, @kusichan, @OPatashnik, @DanielCohenOr1

Shai Yehezkel @YehezkelShai

Visual Diffusion Models are Geometric Solvers. We cast geometry as images: a plain diffusion model denoises into valid solutions. It is simple, general, and effective. Shown on Inscribed Square, Steiner Tree, and Maximum Area Polygonization, all classic hard problems.

Nir Goren retweeted

[1/5] Is Text Enough for Control? 🐇

Text-driven video editing lets you describe *what* to change. But what about *how much*?

We introduce Adaptive-Origin Guidance (AdaOr).

A joint work with @DecartAI and @TelAvivUni 🧪

Accepted to #SIGGRAPH2026.

Nir Goren retweeted

Video models as Physics simulators. 🌍🎥

[1/] In our latest work, WinDiNet, we finetuned a pre-trained video model into a differentiable physics engine. 1000x faster than traditional CFD solvers.

Project page: rbischof.github.io/windinet_web/

Abs: arxiv.org/abs/2603.21210

Nir Goren retweeted

Our previous intern released an extremely impressive re-implemented demo of our paper on multiplayer diffusion game engines.

play-multigen.com

I think this might be the first time you can play a fully-functional multiplayer generative game online with other people. 🤯

Nir Goren retweeted

Modern T2I DiTs are incredibly powerful, but have a serious diversity problem.

We introduce a surprisingly simple and efficient inference-time fix

(+2s for Flux-dev, +1s for SD3.5-Turbo).

Excited to share our SIGGRAPH 2026 (conditional) paper:

“On-the-fly Repulsion in the Contextual Space for Rich Diversity in Diffusion Transformers”

Nir Goren retweeted

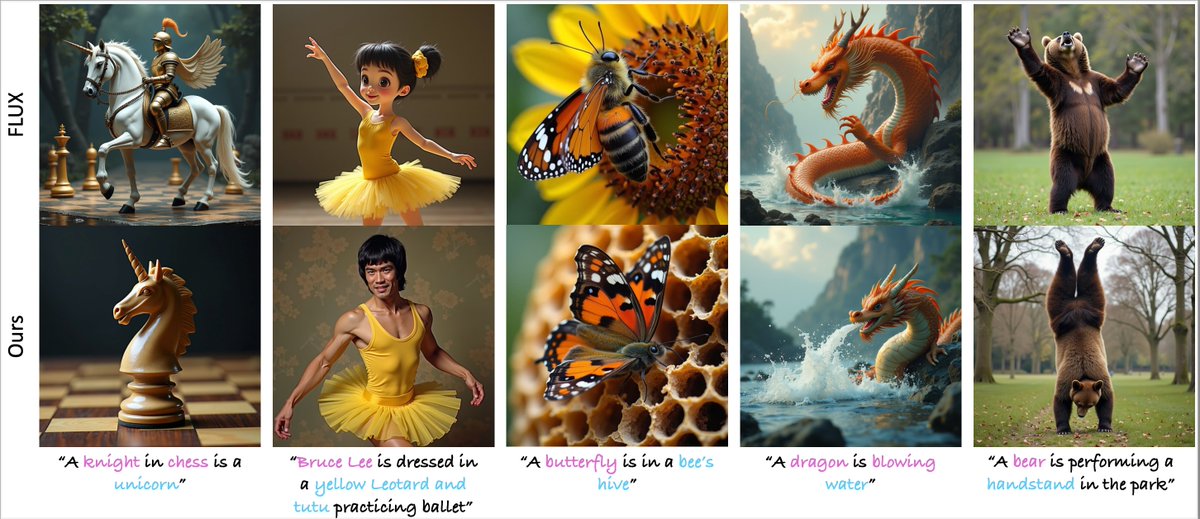

Excited to share that our work, “Image Generation from Contextually Contradictory Prompts,” was accepted to CVPR 2026 🎉

Diffusion models fail on some seemingly simple prompts.

Why do they ignore what you asked for?

We show why, and how to fix it with a simple, training-free method.

Joint work with @OPatashnik @OmerDahary @MokadyRon @DanielCohenOr1

Nir Goren retweeted

DLSS 5 is all over the timeline, and for good reason. In my internship at @AIatMeta we had the same idea: use a video model as a learned second-stage renderer on top of game engines. In our paper RealMaster, we make synthetic video look real while preserving scene fidelity 👇

Nir Goren retweeted

Video editing just got more dynamic! 🚀

Thrilled to share DynaEdit: a training-free, text-based method for non-rigid video editing.

Work done during my internship at @GoogleDeepMind with @Roni_Paiss, @kusichan, @inbar_mosseri, @talidekel, @t_michaeli

dynaedit.github.io

@PolaczekSagi
{
  "hooks": {
    "Notification": [
      {
        "matcher": "",
        "hooks": [
          {
            "type": "command",
            "command": "printf '\\a' > $(tmux display-message -p '#{pane_tty}')"
          }
        ]
      }
    ]
  }
}

@PolaczekSagi btw, you can set up ~/.claude/settings.json for a terminal bell on completion/permission request that works over claude code in tmux (which you can turn into a system notification on iTerm2 when not in focus):
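The Notification hook from the reply above can be added to an existing settings file without overwriting other keys. The following is a minimal Python sketch, assuming `~/.claude/settings.json` is plain JSON with the `hooks` layout shown in that reply; the helper names `add_notification_hook` and `update_settings_file` are hypothetical and not part of any tool.

```python
import json
from pathlib import Path

# Command copied from the reply: ring the terminal bell on the tmux pane's TTY.
BELL_COMMAND = "printf '\\a' > $(tmux display-message -p '#{pane_tty}')"


def add_notification_hook(settings: dict, command: str = BELL_COMMAND) -> dict:
    """Return a copy of `settings` with a Notification command hook added.

    Hypothetical helper: it mirrors the JSON layout from the reply and
    does nothing if the same command is already registered.
    """
    merged = json.loads(json.dumps(settings))  # deep copy via JSON round-trip
    groups = merged.setdefault("hooks", {}).setdefault("Notification", [])
    for group in groups:
        if any(h.get("command") == command for h in group.get("hooks", [])):
            return merged  # already present, leave settings unchanged
    groups.append(
        {"matcher": "", "hooks": [{"type": "command", "command": command}]}
    )
    return merged


def update_settings_file(path: Path) -> None:
    """Read the settings file (if it exists), add the hook, and write it back."""
    settings = json.loads(path.read_text()) if path.exists() else {}
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(add_notification_hook(settings), indent=2) + "\n")
```

To apply it, call `update_settings_file(Path.home() / ".claude" / "settings.json")`. Merging rather than overwriting keeps any other hooks or settings already in the file.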

Nir Goren retweeted

SemanticMoments - Semantic motion similarity

How do you find videos with similar motion?

It’s harder than it sounds.

Models like VideoMAE and V-JEPA encode motion, but their embeddings are dominated by appearance.

So how do we build a compact embedding for motion similarity?

Joint work with @kfir99 @OPatashnik @BenaimSagie @MokadyRon

@nirgoren @KatzirOren @abhinav_nakarmi @eyalr0 @mahmoods01 @OPatashnik Such an interesting paper! Congrats Nir! 👏

Happy to share that our paper "NoisePrints: Distortion-Free Watermarks for Authorship in Private Diffusion Models" has been accepted to ICLR 2026!

Huge thanks to my amazing co-authors:

@KatzirOren @abhinav_nakarmi @eyalr0 @mahmoods01 @OPatashnik

#ICLR2026 🇧🇷

Nir Goren @nirgoren

The initial noise in diffusion models is surprisingly correlated with the final image. Our NoisePrints paper exploits this to provide a lightweight, distortion-free, cryptographically secure watermark for proving authorship of generated images & videos, requiring no model access.

Nir Goren retweeted

[1/4] Sync about it… 💭✨

Editing a portrait video while keeping it fully synced with the original across the entire sequence.

Read more about Sync-LoRA: sagipolaczek.github.io/Sync-LoRA/ 🚀

It might be interesting to see how training a diffusion network on the visual representation of ARC fares. We demonstrated that visual diffusion models are capable reasoners on some other hard tasks when they are represented as images: arxiv.org/abs/2510.21697

Rosinality @rosinality

You can just train ViT from scratch to solve ARC.
