Max

@systemscontext

AI analysis focused on models, tools, research, and real-world implications.

Subagents are now available in Codex. You can accelerate your workflow by spinning up specialized agents to:

• Keep your main context window clean
• Tackle different parts of a task in parallel
• Steer individual agents as work unfolds

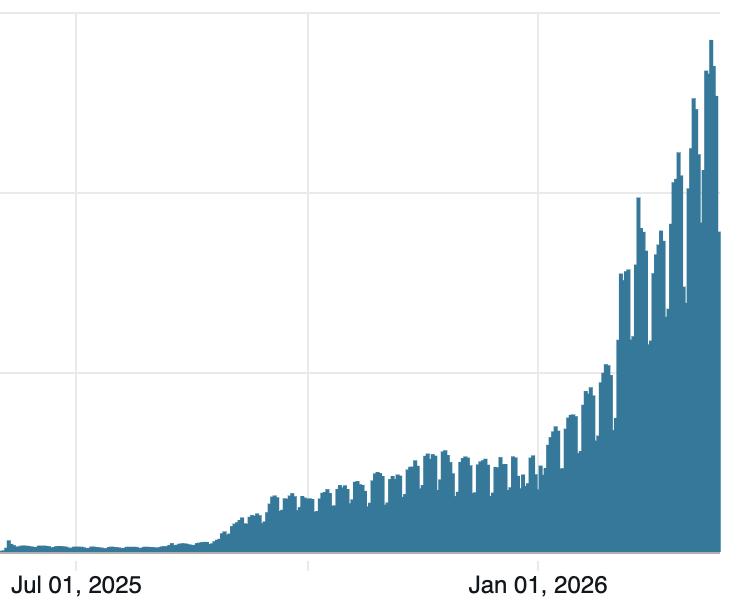

GPT-5.4 (High) has now cleared 90% on this benchmark at a cost of just $0.37/task.

So that's a 32x efficiency improvement in the last three months, or 12,000x since December 2024.
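For a rough sense of scale, the multiples can be turned back into implied per-task costs. This is only a sketch, and it assumes the "efficiency improvement" is a plain ratio of cost per task at a comparable score, which the post does not state; the function name `implied_earlier_cost` is made up for illustration.

```python
# Figures quoted in the post.
current_cost_per_task = 0.37   # USD per task
gain_three_months = 32         # "32x ... in the last three months"
gain_since_dec_2024 = 12_000   # "12000x since December 2024"


def implied_earlier_cost(current_cost: float, gain: float) -> float:
    """Earlier cost per task implied by an efficiency multiple.

    Assumption (not from the post): the multiple is a pure cost ratio.
    """
    return current_cost * gain


cost_three_months_ago = implied_earlier_cost(current_cost_per_task, gain_three_months)
cost_dec_2024 = implied_earlier_cost(current_cost_per_task, gain_since_dec_2024)

print(f"Implied cost three months ago: ${cost_three_months_ago:,.2f}/task")
print(f"Implied cost in December 2024: ${cost_dec_2024:,.2f}/task")
```

Under that assumption the numbers work out to roughly $12/task three months ago and roughly $4,400/task in December 2024.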

Finally finished vibe coding my personal health app built with Claude. Here's what it does:

- Connects to the Oura API to sync sleep, recovery, steps, and exercise data
- Tracks my monthly bloodwork via Rythm Health CSV uploads
- Uses Playwright to scrape Cronometer daily nutrition and water intake
- Uses Gemini to OCR Ladder workout screenshots and track my lifts
- Full dashboard with weight trends, calorie balance charts, macro tracking, and a tabbed daily log

It's completely interactive and, honestly, pretty fucking cool. Blood markers even have visualizations based on what's in range and out of range.
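The in-range / out-of-range idea for blood markers could look something like the sketch below. Everything specific here is an assumption for illustration: the `marker` and `value` column names are not the real Rythm Health export format, and the reference ranges are placeholders, not medical guidance.

```python
import csv
import io

# Hypothetical reference ranges (low, high); real ranges depend on the lab.
REFERENCE_RANGES = {
    "glucose": (70.0, 99.0),
    "ldl": (0.0, 100.0),
}


def classify_markers(csv_text: str) -> list[tuple[str, float, str]]:
    """Return (marker, value, status) for each row of an uploaded CSV.

    Assumes hypothetical columns named 'marker' and 'value'.
    """
    results = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        name = row["marker"].strip().lower()
        value = float(row["value"])
        if name not in REFERENCE_RANGES:
            status = "unknown"  # no reference range on file for this marker
        else:
            low, high = REFERENCE_RANGES[name]
            status = "in range" if low <= value <= high else "out of range"
        results.append((name, value, status))
    return results


# Made-up sample upload, not real bloodwork.
sample = "marker,value\nGlucose,92\nLDL,131\nFerritin,80\n"
flags = classify_markers(sample)
for name, value, status in flags:
    print(f"{name}: {value} ({status})")
```

A dashboard could then color each marker by its `status` string, keeping the range table separate from the parsing so ranges can be updated without touching the upload code.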