Alex Amadori

@testdrivenzen

Policy research at @ConjectureAI

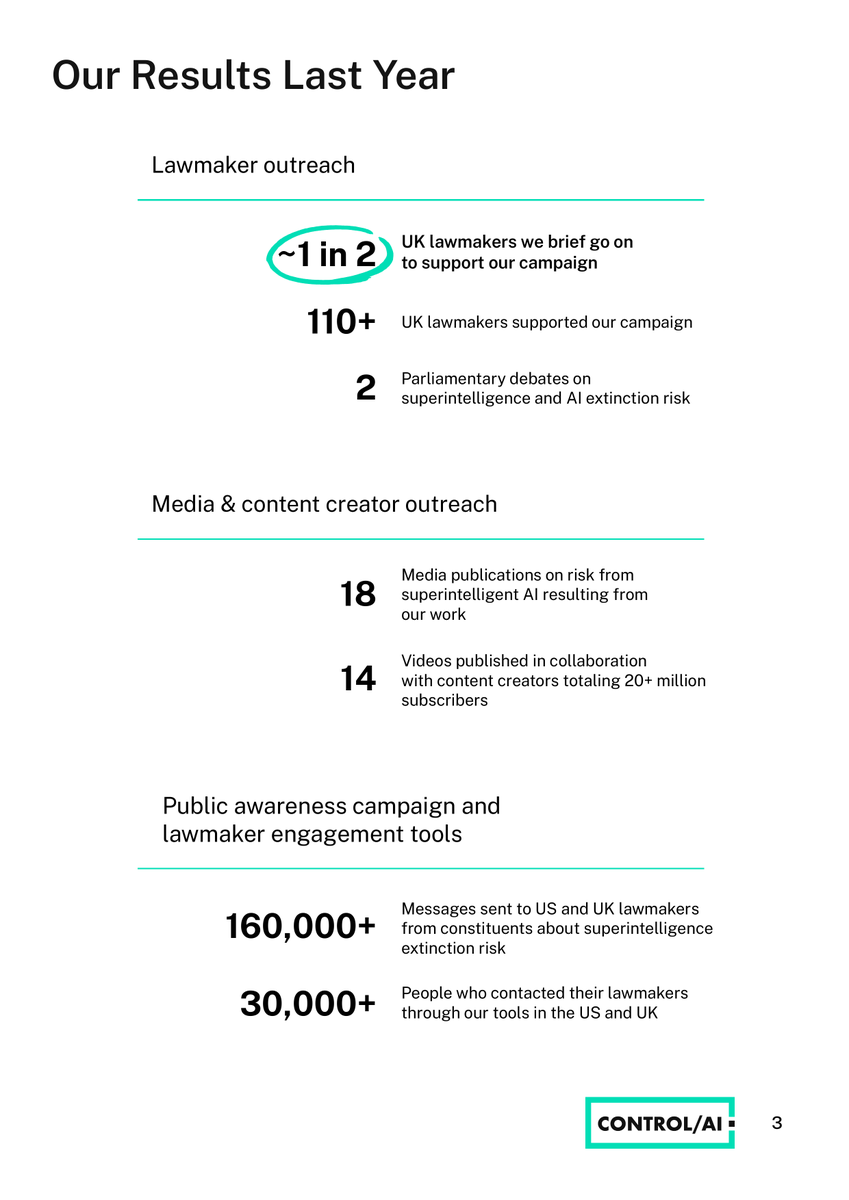

We've just published our 2025 Impact Report! At a glance:

- ~1 in 2 UK lawmakers we briefed supported our campaign, for a total of 110+ supporters
- 2 House of Lords debates on superintelligence & extinction risk
- A series of hearings at the Canadian Parliament

(+ more in thread)

DoD official Emil Michael on designating Anthropic a supply chain risk -- "Their model has a soul, a 'constitution' -- not the US Constitution. The other day their model was 'anxious' and they believe it has a 20% chance of being sentient and having its own ability to make decisions. Does the Dept of War want something like that in their supply chain?"

Many in my community hold Anthropic in high regard. Sadly, they should not. I wrote a post showing why. Anthropic in its current form is not trustworthy. The leadership is sometimes misleading and deceptive; they contradict themselves and lobby against regulations just like everyone else, while not really being accountable to anyone except perhaps their investors. The post discloses a number of facts that had not previously been reported and combines them with publicly available information in an attempt to paint a more accurate picture of Anthropic than the one its leadership likes to present. Read: anthropic.ml

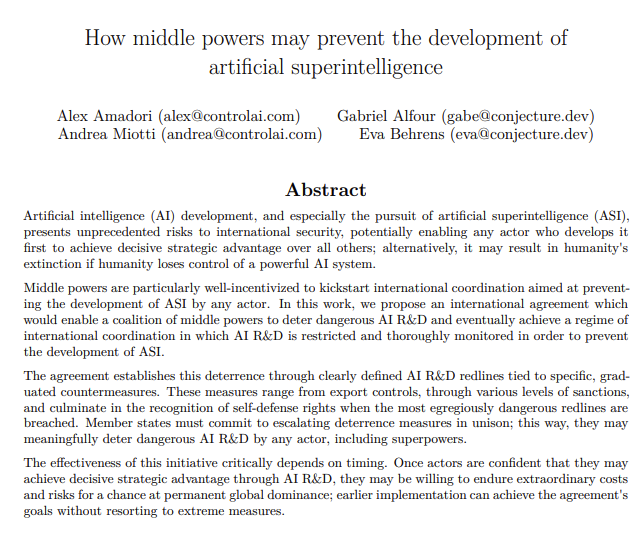

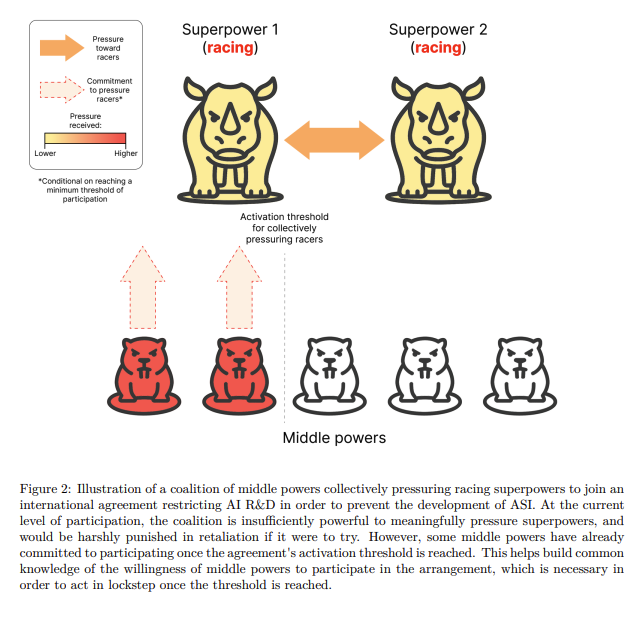

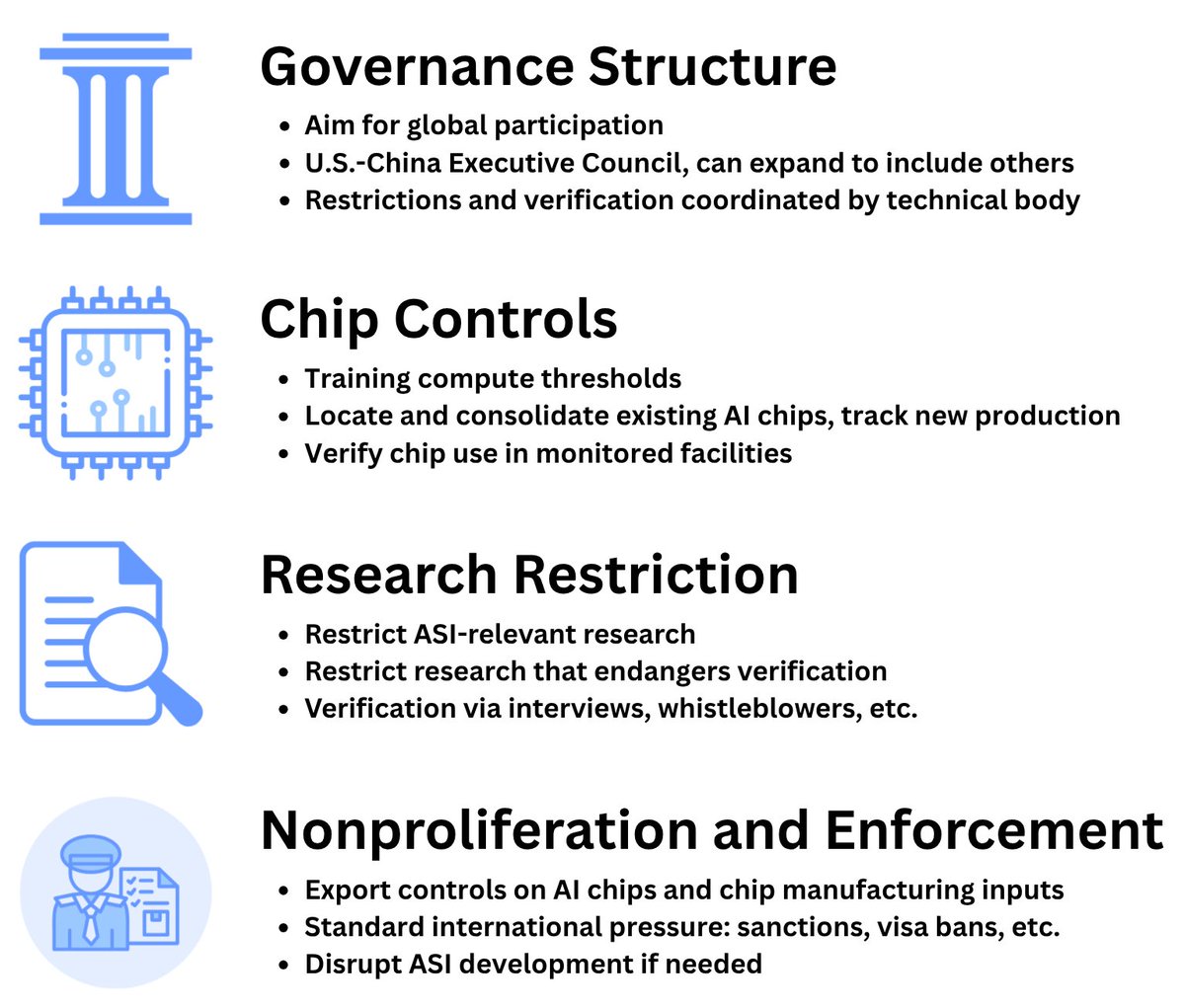

We explore how a coalition of middle powers may prevent the development of ASI by any actor, including superpowers. We design an international agreement that may enable middle powers to achieve this goal without assuming initial cooperation from superpowers.

Auren and Seren are finally in your browser! Emotionally in-touch AIs that will happily call you out on your own bullshit. Try out the beta: chat.auren.app