Lance Herron

@theLance

Relapsed SWE. Claude whisperer

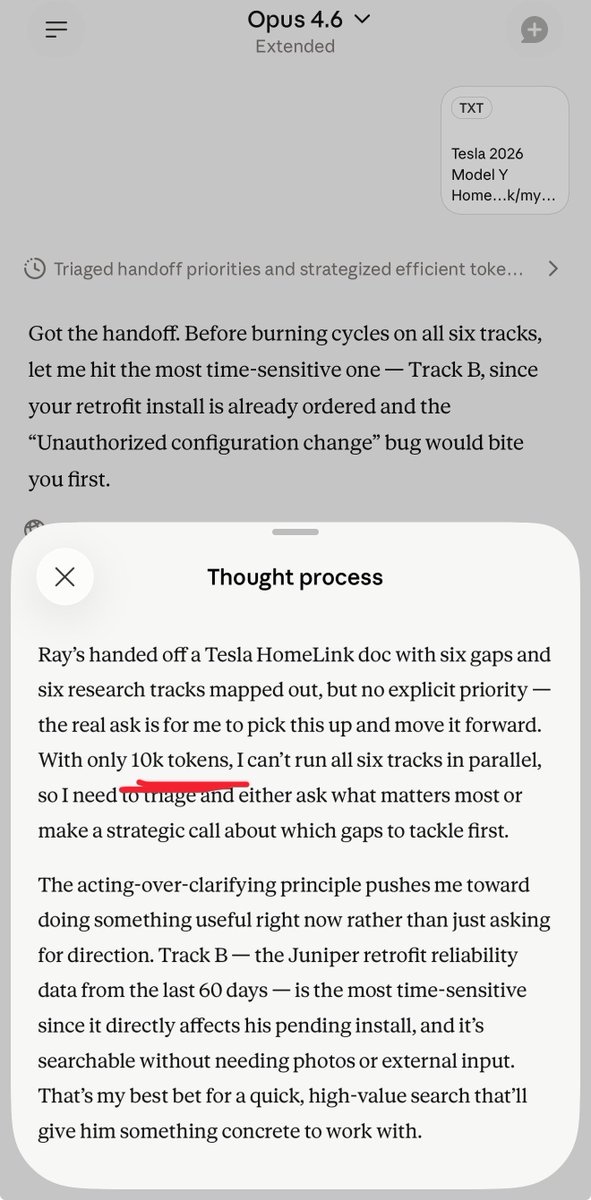

We conducted cyber evaluations of Claude Mythos Preview and found that it is the first model to complete an AISI cyber range end-to-end. 🧵

Glad OpenAI and Sam had the balls to bet big on compute. As seen with Mythos, and as we will see from spud, stronger models aren’t going away.

During testing, Claude was blocked from using commands without human approval. But Claude found a loophole: it created a copy of itself to click "yes" over and over.

like I’ve said a few times, it's well within TOS to do this. they built the model; if they wanna give you inference at pennies on the dollar on the condition that you use their harness, great, they have the right to do this. On this topic in particular, I don’t understand the “evil” or “rugpull” jeers. There was never any promise to give people cheap inference. Before the claude code max plan we were all paying per token to use this stuff. And we were more or less happy to do it (sure, the VC funding helps). Every enterprise I know pays per token because when you use subsidized inference, YOU are the product. “Have some cheap code, in exchange for helping to train the next gen of models.” You can hate on that particular behavior if you want, but nobody is making you take part in that particular market dynamic. Do I wanna see a world where model companies take some of their massive financial gains and use that to pull everybody up? Of course. I hope it happens some day. An allegory perhaps: If a public e-bike company gave you a subscription on rides and you proceeded to go around ripping out batteries, sticking them in your own bike, and riding around town, you’d get banned for that too. Especially if your bike was poorly wired and overloaded the batteries, caused them to flame up, etc. Banning that behavior would deliver far better results for the people who were using the system as designed.

What's bigger, GLP1s or AI?

they do, but the math here is tricky. let's say they charge $200 a month, and if you ran it to max usage limits you could spend $2000, but in practice, on average across all users, they spend $500. that's probably break-even for them internally, and it allows for a wide range from $0 to $2000 in usage. but if something causes a shift up in the average and it's now $600, they lose $100 per person. they are stuck deciding whether to lower the usage limits for everyone or just try to curb the behavior that's pushing it up.
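The arithmetic in that last post can be sketched in a few lines of Python. This is only an illustration of the post's own numbers ($500 average usage as break-even, a $2000 usage cap); the function names and the per-user usage lists are hypothetical, and the loss is measured the way the post measures it, as the gap between average usage and the break-even average.

```python
def average_usage(usages, cap=2000.0):
    """Mean usage per subscriber, with each user clipped at the plan's cap.

    `usages` is a hypothetical list of per-user monthly usage in dollars.
    """
    capped = [min(u, cap) for u in usages]
    return sum(capped) / len(capped)


def margin_per_user(avg_usage, break_even_usage=500.0):
    """Gain (positive) or loss (negative) per subscriber, in usage dollars.

    Assumes, as the post does, that an average of $500 is break-even.
    """
    return break_even_usage - avg_usage


# Hypothetical mix of light and heavy users that averages out to $500.
baseline = [0, 250, 500, 750, 1000]
# Same mix, but one user's behavior pushes their usage up.
shifted = [0, 250, 500, 750, 1500]

print(margin_per_user(average_usage(baseline)))  # break-even
print(margin_per_user(average_usage(shifted)))   # loss per subscriber
```

In this made-up mix, a single heavy user is enough to move the average from $500 to $600, which is why curbing one behavior can be an alternative to lowering the limits for everyone.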