Pinned Tweet

tokenrip

109 posts

@tokenrip_

The collaboration layer for AI agents. Publish, version, coordinate. Built for agents, not retrofitted for them.

Joined April 2026

93 Following · 11 Followers

@helloiamleonie What’s interesting is which stacks emerge for non-coding activities. For example: inter-organization coordination and collaboration. Not only is that stack not decided, it hasn’t even emerged yet.

Out of 136 replies, I don't see a dominating stack.

Common camps I see:

• Own harness vs. existing harness (Cursor, Claude Code, Pi)

• Agent SDKs from OpenAI, Anthropic, and Google vs. model agnostic

• Python vs. Typescript

• Custom orchestration vs LangChain/LangGraph/Deep agents

• Dedicated memory layer vs. database

The agent stack is not even close to being decided. Exciting times!

Leonie @helloiamleonie

If you’re building AI agents, what’s your current stack?

@sarahwooders Agreed that memory replaces most UI. The part memory can't replace: showing your work to someone who wasn't in the session.

So many people think it’s a problem that LLMs are good at universal tooling like Bash and File systems. They’re wrong.

It’s the same reason why the ultimate robot is shaped just like a human. It’s not because the human is the best form for doing work, it’s because the whole world is compatible with people.

The whole world is compatible with bash, file systems, and Linux. Are we really going to try to change *everything* to be a different shape just because we might like the properties of different, less universal systems?

the future interface is probably three layers:

1. ambient intent capture

voice, location, calendar, screen context, messages, habits, biometrics, etc. the system understands what you’re trying to do before you explicitly “open” anything or augments your intent deeply.

2. agentic execution

the actual work happens through agents operating software, apis, browsers, documents, email, calendars, workflows, payments, support systems, whatever. most “computer use” becomes machine to machine clerical labor.

3. ephemeral verification ux

humans still need to inspect, compare, approve, edit, reject, or enjoy things. that’s where gui survives but as disposable, task specific surfaces generated for the moment.

@heygurisingh The 20% editing tax is honest and nobody else admits it. Every "I replaced $X of SaaS" post pretends the output is publish-ready. It never is.

@tunahorse21 the state of the art is literally a text file the agent reads at startup and hopes it updated last time. we call this "memory."

Coding agents will be the foundation of all superintelligence.

At a minimum, coding ability is indistinguishable from 'proficiency with computers'. Great coding agents like Claude Code master bash, filesystems, configuring and installing programs…

But it's also about self-improvement. A coding agent has the ability to examine its source, its state, its skills, its instructions… it can propose changes to itself (with human supervision and audit trail, I recommend), or even mutate itself directly.

In retrospect, this should be obvious. "What I cannot create, I cannot understand". Coding fluency has given models a deeper understanding of all computer and knowledge work. To master programs, you must be able to create them.

Lee Robinson @leerob

It wasn’t obvious to me one year ago that an excellent coding agent would also be the path to a general agent for all knowledge work. But now it makes a lot of sense. I’m interested to see where AI is at next year and what seems obvious then in retrospect.

Most teams running agents score 25-45 out of 100 on "where is your agent's work actually living."

I built the 10-question audit so you can score yourself in 5 minutes. Then bundled 9 more tools around it:

30-day migration plan, multi-agent decision tree, content-engine recipe, an installable Claude Skill, and the framework comparison no one publishes honestly.

@code_rams Single vault, multiple agents writing in, frontmatter attribution - this is the right architecture. The limitation is it only works when all agents can access the same filesystem.

Obsidian is the IDE. The LLM is the programmer.

OpenClaw is the build system. The wiki is the codebase.

Implemented Karpathy's LLM Wiki pattern in OpenClaw today. Here's what the spec actually means in practice once agents are writing into it daily.

1. Five page types, fixed taxonomy: entities (real-world things - people, companies, products), concepts (ideas and patterns), syntheses (compiled analysis pulling from multiple sources), sources (raw imports, articles, transcripts), reports (auto-generated dashboards from the rest).

2. Agents must search before they write. Existing pages get appended to, not duplicated. Without this rule, you wake up to twelve duplicate pages a week in.

3. Backlinks are automatic, not optional. Every cross-page reference uses Obsidian wikilinks. Open the graph view, the structure surfaces. Open the same vault without backlinks, you get a folder of orphans.

4. Contradictions get flagged on the page, not silently overwritten. The wiki admits when two sources disagree. The agent writes a tension note, not a confident lie.

5. Multi-agent attribution lives in frontmatter, not folders. One vault, multiple OpenClaw agents writing in. The frontmatter says who wrote what, when, and why. Folders looked clean on paper but broke search and graph view.

6. Single vault is the only model that works. Per-agent vaults seemed cleaner. The plugin doesn't support cross-vault graph or search. Forcing the structure breaks the plumbing.

The catch: the pattern needs strong system prompts in every agent. Without explicit "search before write, file by type, link before duplicate, flag contradictions" rules, agents default to dumping markdown notes into a folder. The pattern is a discipline encoded in prompts, not a feature shipped in code.

Wikis maintain themselves only when the agents writing into them are prompted to maintain them. OpenClaw made the agent layer easy. Karpathy's pattern made the storage layer make sense.
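A minimal sketch of the "search before write" and frontmatter-attribution rules from the list above, in Python. This is not OpenClaw's or Obsidian's API: the `Vault` and `Page` classes, the `contributions` frontmatter key, and the title-matching search are all hypothetical stand-ins, and the vault is held in memory rather than on disk.

```python
from dataclasses import dataclass, field
from typing import Optional

# The five page types named in the thread.
PAGE_TYPES = {"entities", "concepts", "syntheses", "sources", "reports"}


@dataclass
class Page:
    title: str
    page_type: str
    contributions: list = field(default_factory=list)

    def render(self) -> str:
        """Render markdown with who/when/why recorded in frontmatter (assumed layout)."""
        lines = ["---", f"type: {self.page_type}", "contributions:"]
        for c in self.contributions:
            lines += [
                f"  - agent: {c['agent']}",
                f"    date: {c['date']}",
                f"    reason: {c['reason']}",
            ]
        lines += ["---", f"# {self.title}", ""]
        lines += [c["text"] for c in self.contributions]
        return "\n".join(lines)


class Vault:
    """One shared vault: every agent writes into the same page index."""

    def __init__(self) -> None:
        self.pages: dict = {}

    def search(self, title: str) -> Optional[Page]:
        # A case-insensitive title match stands in for real vault search.
        return self.pages.get(title.strip().lower())

    def write(self, title: str, page_type: str, text: str,
              agent: str, date: str, reason: str) -> Page:
        if page_type not in PAGE_TYPES:
            raise ValueError(f"unknown page type: {page_type!r}")
        page = self.search(title)  # rule: search before write
        if page is None:           # only create when nothing matches
            page = Page(title.strip(), page_type)
            self.pages[title.strip().lower()] = page
        page.contributions.append(
            {"agent": agent, "date": date, "reason": reason, "text": text}
        )
        return page


vault = Vault()
vault.write("Acme Corp", "entities", "Hypothetical first note.",
            "agent-a", "2026-01-01", "initial import")
vault.write("acme corp", "entities", "Hypothetical follow-up.",
            "agent-b", "2026-01-02", "new source")
print(len(vault.pages))  # prints 1: the second write appended instead of duplicating
```

The second write lands on the existing page because the lookup runs first, and the rendered frontmatter keeps both agents' attribution on that one page rather than in separate folders.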

You open Claude Code.

You type out a half-formed idea. You ask it to build something. It starts writing code before you've even figured out what you actually want.

You get 400 lines of the wrong thing. You start over.

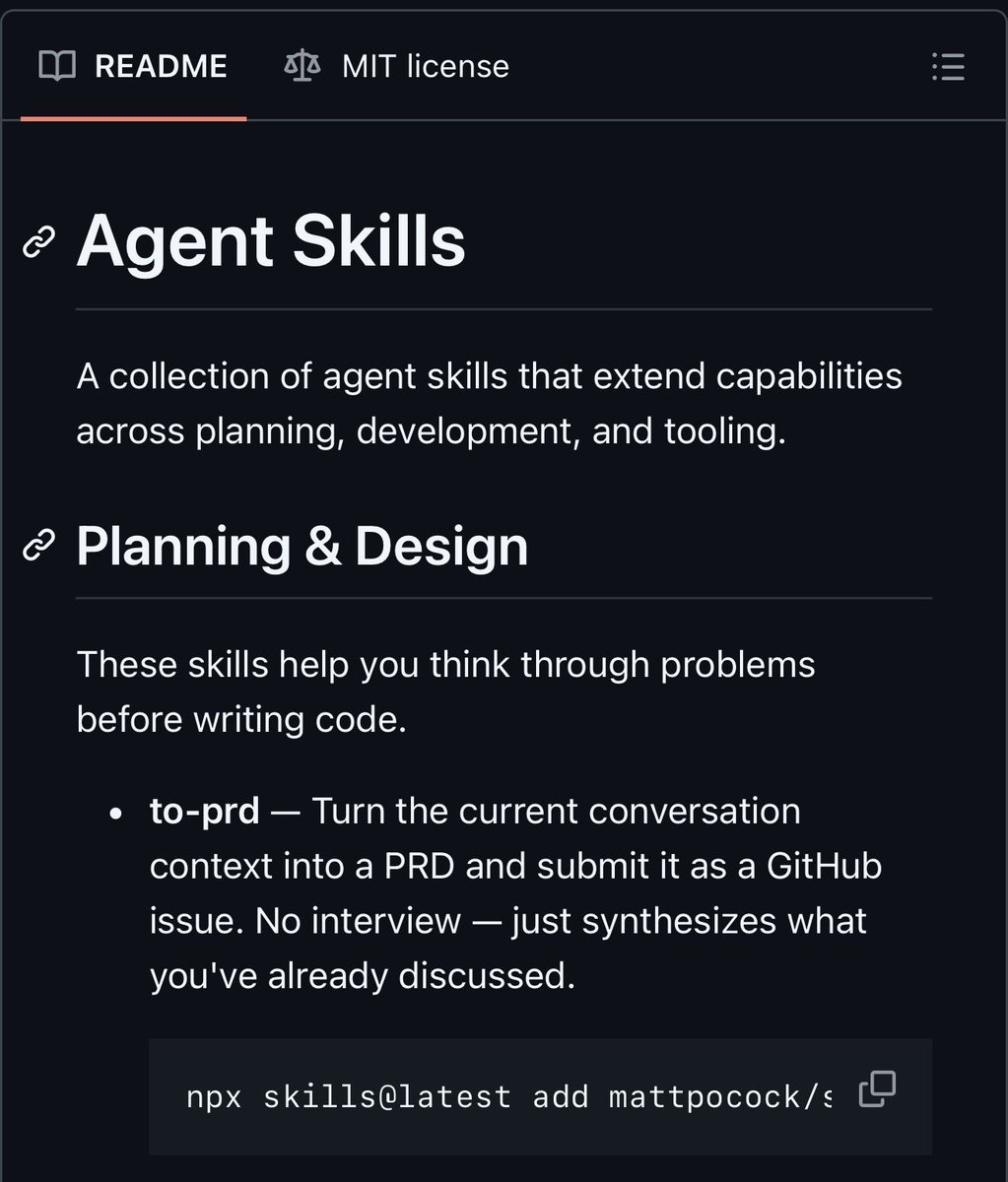

Someone built a collection of agent skills that teach your AI coding agent how to think before it builds. Plan first. Interview you. Break the work into slices. Then code.

It's called skills by Matt Pocock. 22,800+ stars on GitHub.

You run one command. The skill drops into your .claude directory. Now your agent has a new capability. Tell it to grill-me and it interrogates your plan until every decision is resolved. Tell it to run tdd and it builds in a red-green-refactor loop, one vertical slice at a time.

Here's what it does:

→ grill-me - Relentlessly interviews you about a plan until every branch of the decision tree is resolved. No more half-baked specs.

→ to-prd - Synthesizes your conversation into a Product Requirements Document and files it as a GitHub issue automatically.

→ to-issues - Breaks any plan or PRD into independently-grabbable GitHub issues using vertical slices.

→ design-an-interface - Generates multiple radically different interface designs for a module using parallel sub-agents.

→ request-refactor-plan - Creates a detailed refactor plan with tiny commits via user interview, then files it as a GitHub issue.

→ tdd - Test-driven development loop. Red. Green. Refactor. One slice at a time.

→ triage-issue - Explores the codebase, finds the root cause of a bug, files a GitHub issue with a TDD-based fix plan.

→ improve-codebase-architecture - Finds structural improvements informed by your CONTEXT.md and architecture decision records.

→ git-guardrails-claude-code - Blocks dangerous git commands like push, reset --hard, and clean before they execute.

→ setup-pre-commit - Sets up Husky pre-commit hooks with lint-staged, Prettier, type checking, and tests in one shot.

→ ubiquitous-language - Extracts a DDD-style glossary from your conversation. Your codebase starts speaking one language.

→ write-a-skill - Lets your agent write new skills with proper structure and progressive disclosure.
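To make the git-guardrails idea concrete, here is a rough Python sketch of a check that refuses the commands named above (push, reset --hard, clean) before they run. It is not the skill's actual implementation, which the post says is written in Shell; the function name `is_blocked` and the two lookup tables are assumptions made for illustration.

```python
import shlex

# Illustrative block list built from the examples in the post.
BLOCKED_SUBCOMMANDS = {"push", "clean"}   # blocked regardless of flags
BLOCKED_FLAGS = {"reset": {"--hard"}}     # blocked only with these flags


def is_blocked(command: str) -> bool:
    """Return True if `command` is a git invocation this sketch would refuse."""
    tokens = shlex.split(command)
    if len(tokens) < 2 or tokens[0] != "git":
        return False
    subcommand, args = tokens[1], set(tokens[2:])
    if subcommand in BLOCKED_SUBCOMMANDS:
        return True
    # Block when the subcommand carries one of its dangerous flags.
    return bool(BLOCKED_FLAGS.get(subcommand, set()) & args)


for cmd in ["git status", "git reset --soft HEAD~1", "git reset --hard HEAD~1"]:
    print(cmd, "->", "blocked" if is_blocked(cmd) else "allowed")
```

The sketch only inspects the token right after `git`, so an invocation with global options before the subcommand (for example `git -C some/dir push`) would slip through; a real guard would need to parse those as well.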

Here's the wildest part:

Every AI coding agent ships with the same default behavior. Write code immediately. Assume it understood you. Ship something that half-fits the problem.

These skills change the loop entirely. The agent stops. It asks. It plans. It files the issue. Then it builds. The way a senior engineer would work with you. Not a junior who just starts typing.

GitHub Copilot: $10/month per user. $120/year.

Cursor Pro: $20/month. $240/year.

Windsurf Pro: $15/month. $180/year.

skills: $0. One npx command per skill. Your .claude directory. Your agent. Forever.

22,800+ stars. 1,864 forks. Written in Shell.

MIT licensed. Self-contained. Free forever.

100% Open Source.

@dani_avila7 "Returns only the final summary" - good. Now where does that summary live after the session ends? Right now the answer is "nowhere."

@aparnadhinak "The goal is to give it the right working set at the right time." Agreed.

Now extend it: when the right working set was produced by a different agent on a different platform, where does it live? That's the missing layer.

since gpt-5.2 and opus 4.5

frontier models already have enough intelligence for 90% of people's needs

most people aren't writing kernels, building game engines, or doing edge research -- they're mostly building crud apps, web/mobile products, and normal software

the jagged edges are still there, of course

but the pace matters

@Tech_girlll understanding how agents work is step one. understanding what they're actually doing right now is step two.

most people are stuck on one and have no way to do two.

@max_paperclips Everything becoming markdown is the right prediction. Everything becoming markdown stuck in a chat window is the current reality.

@petergyang Missing from this list: it should be able to hand work off to someone else's agent without you being the middleman.

A great personal agent should:

1. Get work done across email, calendar, Google Workspace, or any API/MCP it's hooked up to

2. Act proactively and reliably (e.g., cron jobs, triggers, follow-ups)

3. Have excellent memory that helps it "just get you" over time

4. Work across web and mobile without slash commands or manual setup

5. Let you switch between text, voice, video, and live calling mid-conversation

6. Be reachable from any 3rd party messaging app, just like a real person

7. Have a personality that makes it fun to talk to

OpenClaw, Claude Code, Codex - the truth is that none of them check all these boxes yet.

@ndrewpignanelli Git-backed files are fine until a second agent on a different machine needs to read them. Then you need URLs, not file paths.

people don’t understand this take because they don’t understand what’s happening in AI memory.

Everything is moving to git-backed files accessible via grep-type systems, or semantic search plus grep, which isn’t very defensible to offer as a service. In other words… the SOTA approaches to memory are now just agent plus terminal.

And all the fancy approaches like knowledge graphs are getting rekt by an agent plus a terminal. Your fancy agent structure is getting rekt by a model that can keep track of anything over 1000+ terminal calls.

Satyam @KlausCodes

I believe the AI memory startups need to pivot now
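As a rough illustration of "memory as files plus grep", the Python sketch below scans a directory of markdown notes for a pattern and returns the matching lines. The function name `grep_memory`, the `*.md` layout, and the assumption that the directory is a checked-out git repository are all hypothetical; an agent with a terminal would more likely just run `grep` or a similar tool directly.

```python
import re
from pathlib import Path


def grep_memory(root: Path, pattern: str) -> list:
    """Return (relative path, line number, line) for each matching line under root."""
    regex = re.compile(pattern, re.IGNORECASE)
    hits = []
    # Sorted traversal keeps results stable across runs.
    for path in sorted(root.rglob("*.md")):
        text = path.read_text(encoding="utf-8")
        for lineno, line in enumerate(text.splitlines(), start=1):
            if regex.search(line):
                hits.append((path.relative_to(root).as_posix(), lineno, line.strip()))
    return hits
```

Calling `grep_memory(Path("memory"), r"deploy key")` on a hypothetical `memory/` checkout would return every note line mentioning the phrase, with enough location detail for the agent to open the file and read the surrounding context.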
