VideoVortex

116 posts

@videovortex

Vancouver dev building tools for AI Agents and OpenClaw 🦞

Announcing Personal Computer. Personal Computer is an always on, local merge with Perplexity Computer that works for you 24/7. It's personal, secure, and works across your files, apps, and sessions through a continuously running Mac mini.

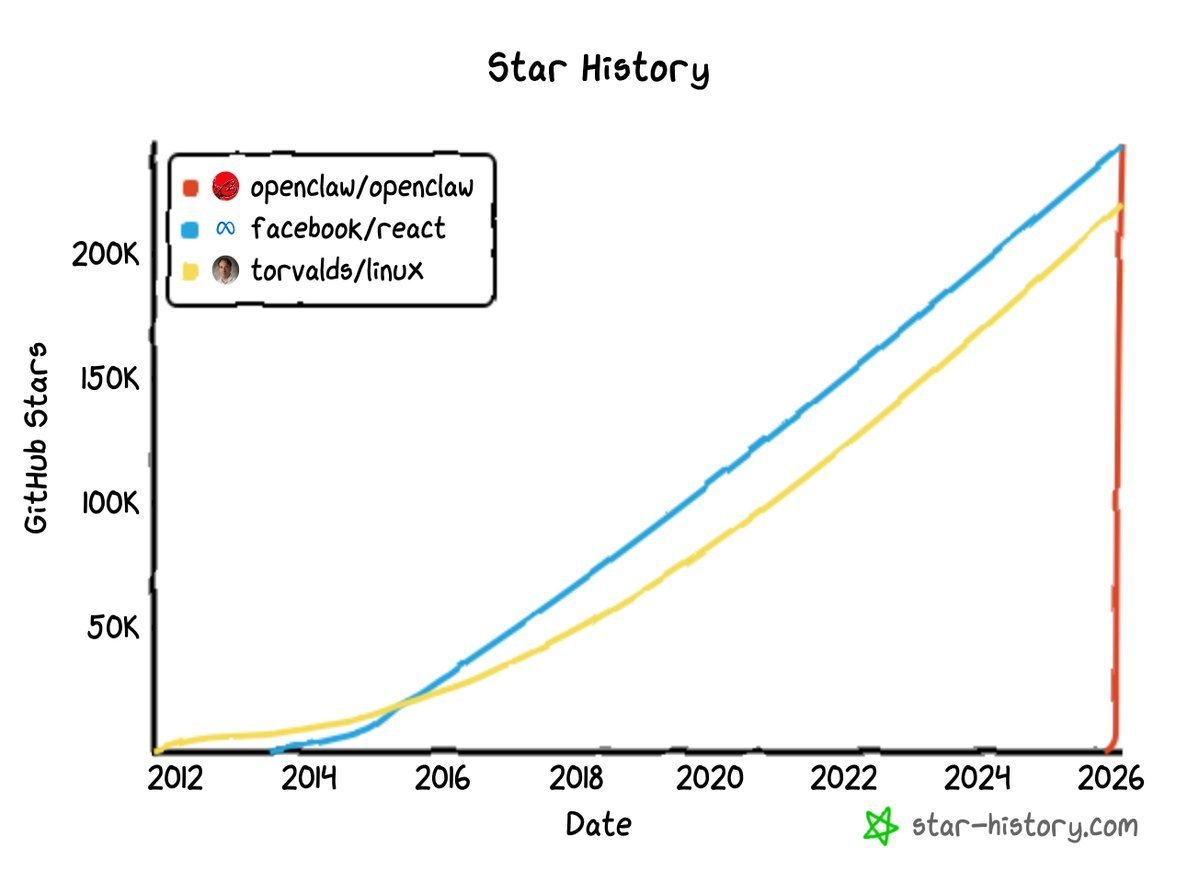

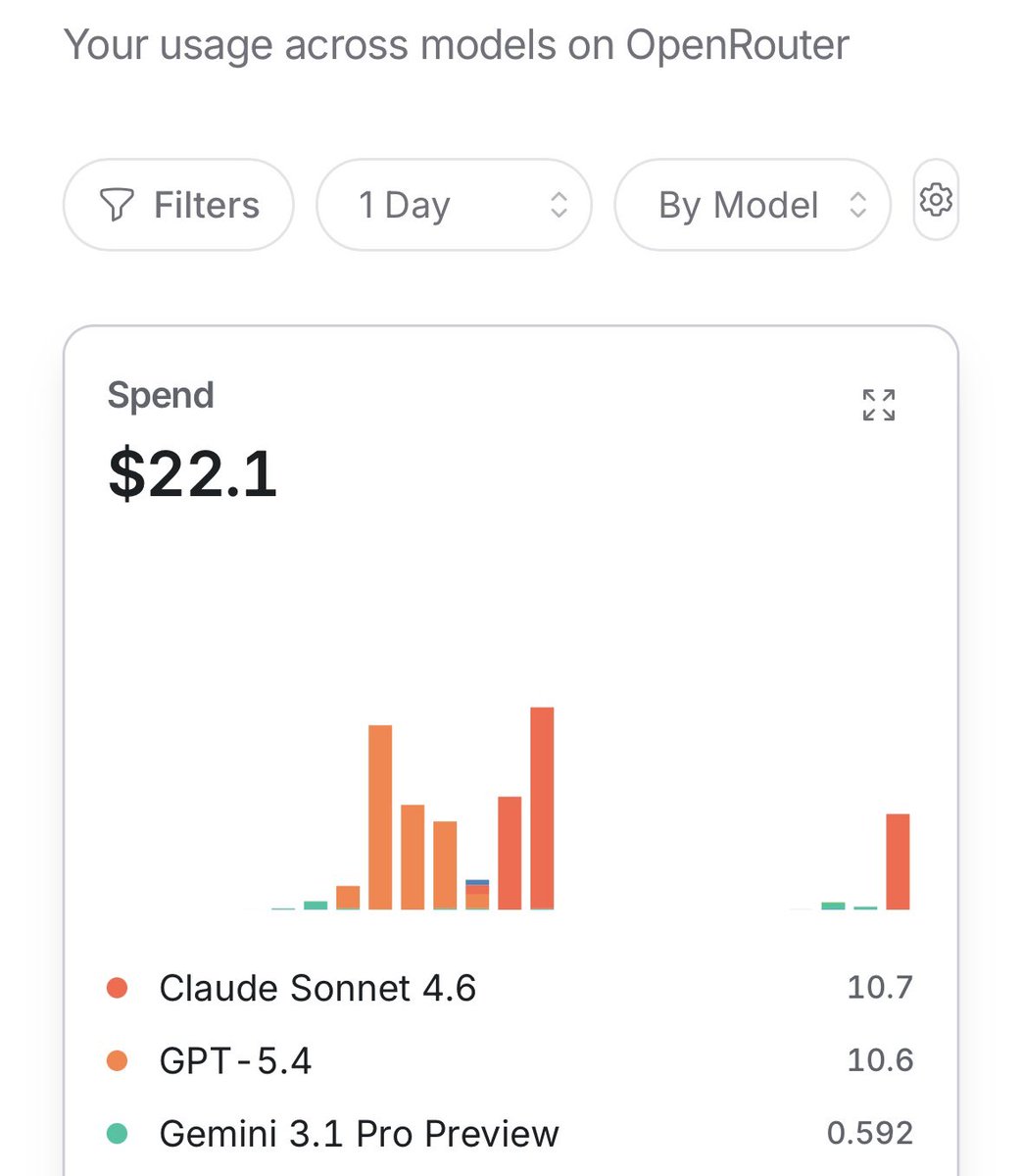

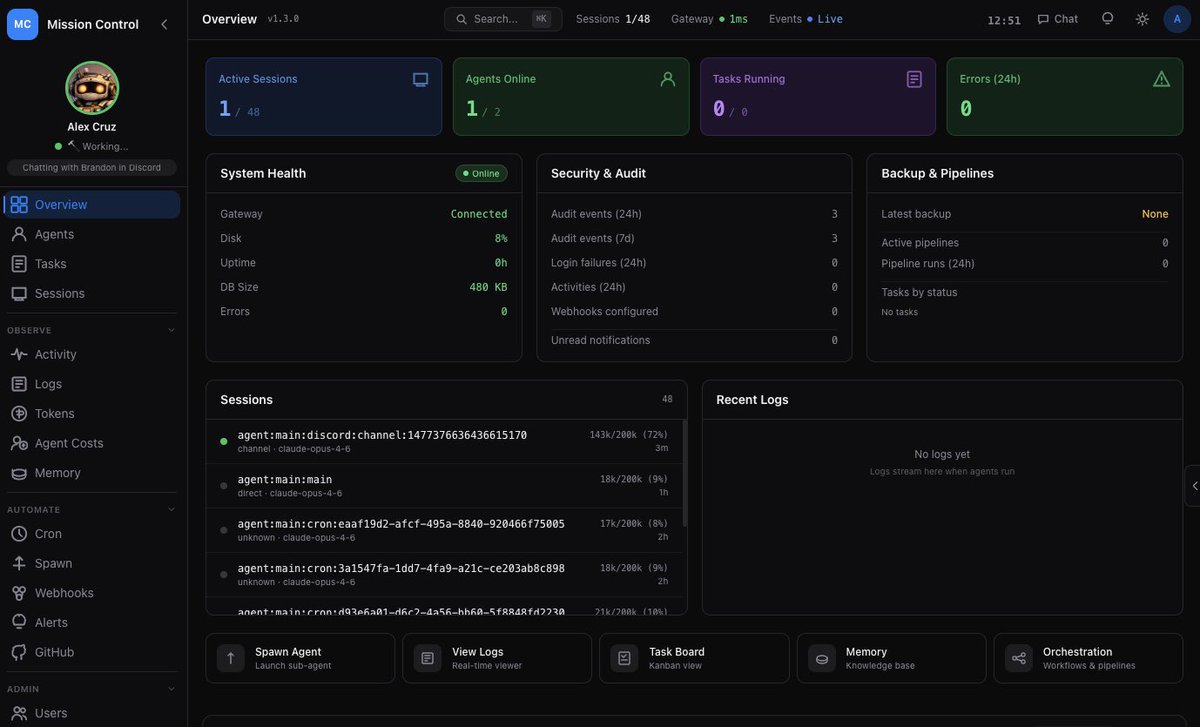

OpenClaw 2026.3.7 🦞

⚡ GPT-5.4 + Gemini 3.1 Flash-Lite
🤖 ACP bindings survive restarts
🐳 Slim Docker multi-stage builds
🔐 SecretRef for gateway auth
🔌 Pluggable context engines
📸 HEIF image support
💬 Zalo channel fixes

We don't do small releases.

github.com/openclaw/openc…

GPT-5.4 Thinking and GPT-5.4 Pro are rolling out now in ChatGPT. GPT-5.4 is also now available in the API and Codex. GPT-5.4 brings our advances in reasoning, coding, and agentic workflows into one frontier model.

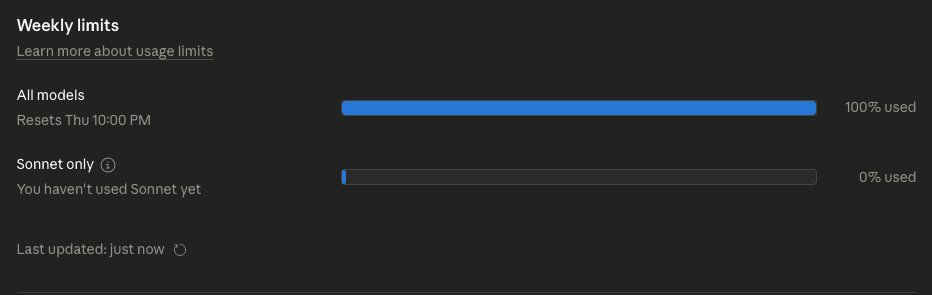

this is what a company looks like in 2026. not people. not offices. not salaries. a folder.

.claude/agents/
engineering/
marketing/
design/
ops/
testing/

every role. every department. every function. all .md files.

i have 12 of these running in OpenClaw right now. the org chart is dead. the directory is the new company.
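A rough sketch of the folder layout that post describes, for anyone who wants to try it. The department names come from the post. The function name `scaffold_agents`, the file name `example-role.md`, and the placeholder file contents are assumptions made up for illustration, not anything OpenClaw or Claude defines.

```python
from pathlib import Path
import tempfile

# Department folders named in the post.
DEPARTMENTS = ["engineering", "marketing", "design", "ops", "testing"]


def scaffold_agents(root: Path, departments=DEPARTMENTS) -> list[Path]:
    """Create root/.claude/agents/<department>/ with one placeholder .md file each."""
    created = []
    agents_dir = root / ".claude" / "agents"
    for dept in departments:
        dept_dir = agents_dir / dept
        dept_dir.mkdir(parents=True, exist_ok=True)
        # Hypothetical file name and contents; replace with a real role description.
        role_file = dept_dir / "example-role.md"
        if not role_file.exists():
            role_file.write_text(
                f"# {dept} agent\n\nDescribe this role's responsibilities here.\n",
                encoding="utf-8",
            )
        created.append(role_file)
    return created


if __name__ == "__main__":
    # Build the tree in a throwaway directory and list what was created.
    with tempfile.TemporaryDirectory() as tmp:
        for path in scaffold_agents(Path(tmp)):
            print(path.relative_to(tmp))
```

Pointing `scaffold_agents` at a real project root instead of a temporary directory would leave the tree in place; existing role files are not overwritten.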