Mayur Naik

@AI4Code

Professor @CIS_Penn. Neurosymbolic AI researcher and educator.

It is easy to overlook but hard to overstate how big a role "test-time inference" has played in modern LLM gains in reasoning.

I wasn't sure myself, so I tested GPT-5 and Gemini-2.5 on a classic programming puzzle involving variable shadowing (en.wikipedia.org/wiki/Variable_…):

Both models in "Thinking" mode solved it correctly but took around 2 minutes. The thinking was elaborate (and correct):

"I'm currently focused on rewriting the user's C-like code, aiming to replace all instances of 'y' with 'x'. However, I've hit a snag. The crucial part is to avoid this substitution in specific scenarios.
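To make the snag concrete, here is a minimal sketch of a shadowing-aware rename. The exact puzzle given to the models is not shown in the post, so everything here is an assumption for illustration: the toy program representation (tuples of `"decl"`, `"use"`, and `"block"`), the hypothetical `rename` helper, and the C-like rule that an inner declaration takes effect from the point where it appears. The sketch also conservatively refuses the rename if the new name is declared where it could capture a renamed use.

```python
def rename(block, old, new, nested=False):
    """Rename the variable `old` declared in the outermost block to `new`.

    A block is a list of (kind, arg) pairs, where kind is "decl", "use",
    or "block" (arg is then a nested list). This format is hypothetical.
    """
    renamed = []
    visible = True  # True while `old` still refers to the variable being renamed
    for kind, arg in block:
        if kind == "block":
            # Recurse only while the outer variable is still visible here.
            body = rename(arg, old, new, nested=True) if visible else arg
            renamed.append(("block", body))
        elif kind == "decl" and arg == old and nested:
            # An inner declaration shadows the target: stop renaming
            # for the remainder of this block and its nested blocks.
            visible = False
            renamed.append((kind, arg))
        elif kind == "decl" and arg == new and visible:
            # Renaming past this point could bind uses to the wrong variable.
            raise ValueError(f"renaming to {new!r} would cause a name clash")
        elif arg == old and visible:
            renamed.append((kind, new))
        else:
            renamed.append((kind, arg))
    return renamed


program = [
    ("decl", "y"),
    ("use", "y"),
    ("block", [
        ("use", "y"),             # still the outer y
        ("decl", "y"),            # shadows the outer y from here on
        ("use", "y"),             # inner y, must not be renamed
        ("block", [("use", "y")]),
    ]),
    ("use", "y"),                 # outer y again
]
result = rename(program, "y", "x")
```

A plain find-and-replace would rewrite every `y`, including the inner declaration and its uses, which changes what the program means; tracking visibility per block is what avoids that.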

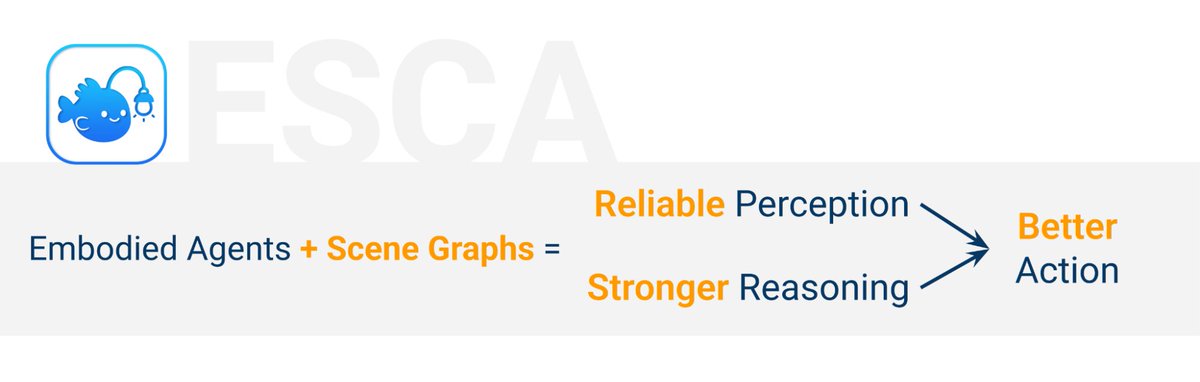

@PennEngineers doctoral student @jiani_huang_ai (@cis_penn) presents ESCA at @NeurIPSConf 2025, a system that helps embodied AI agents better understand their surroundings by creating context-aware descriptions of a scene. Research advised by Professor Mayur Naik (@AI4Code).