Mayur Naik

445 posts

@AI4Code

Professor @CIS_Penn | Founder & Co-CEO @Rabdos_AI | Neurosymbolic AI researcher and educator

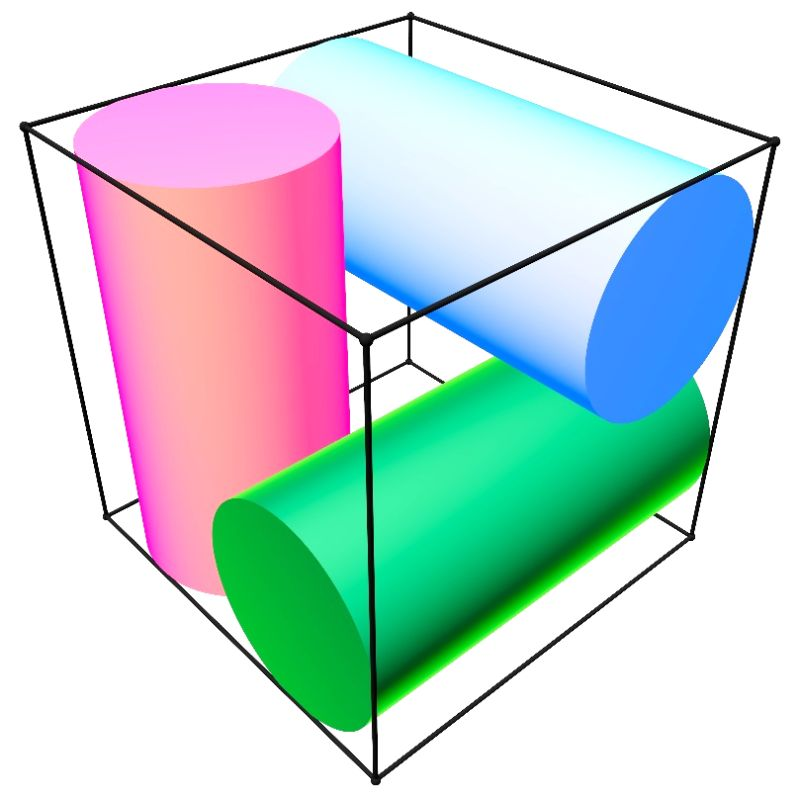

Do we need frontier models to verify math proofs? EpochAI just announced that they found several fatal flaws in their FrontierMath benchmark using GPT-5.5. But isn't verification supposed to be easier than generation, so why were they not spotted earlier? In our recent work, we asked a related question: do we really need frontier-scale compute to verify Olympiad-level math proofs? Turns out, even 20B open-source models can keep up with frontier LLMs on proof verification. Work done with my co-authors @aaditya_naik, @AI4Code, and @RajeevAlur. Preprint: arxiv.org/abs/2604.02450

I was talking to a CS professor yesterday and he told me he's so relieved ⛱️ now that his PhD students have left for their summer internships 🌞. He finally gets to do interesting research himself again. It wasn't a complaint. Just something he'd been sitting with. The old PhD bargain was pretty clean: professors had ideas, students had time, execution was the bridge. You did the work, and somewhere along the way, taste rubbed off. That bridge is mostly gone now. A senior researcher with taste and a research agent ships faster than the same researcher plus a junior student. Explaining 🎙️ an idea to someone who doesn't yet have taste costs more than just building it. (The reason professors still need students is that the research agent 🤖 is not yet capable enough.) Nobody wants to say this out loud. Professors keep mentoring because the system 🏫 asks them to, not because it's the highest-leverage thing they could be doing with their week. The thing that still matters -> taste <- was never really taught. It was supposed to leak out of the execution. Now there's no execution to leak from.

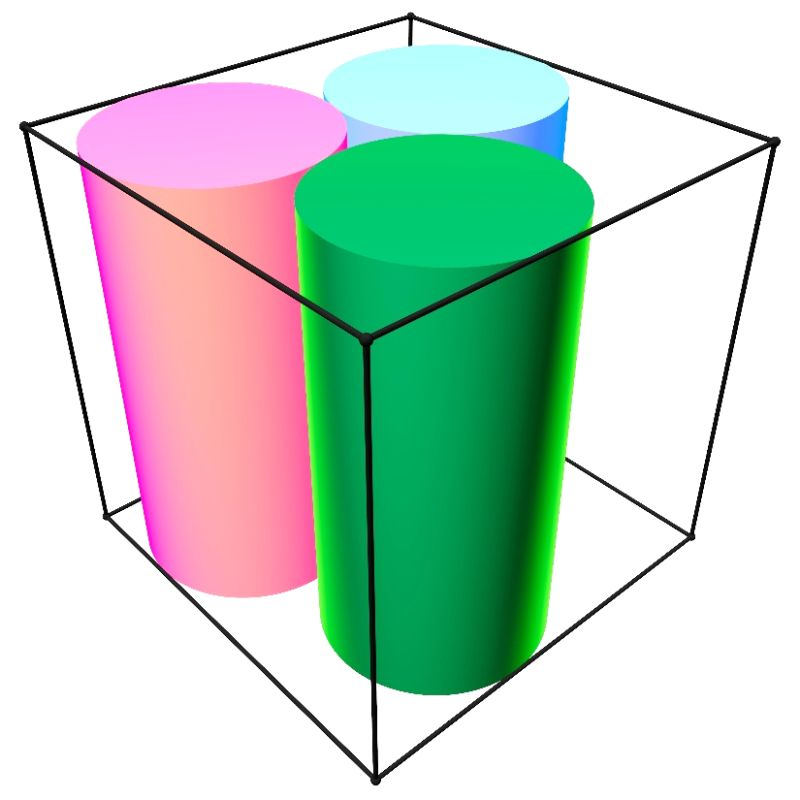

Static math benchmarks saturate. We built one that doesn't. Announcing MathDuels, the first self-play math benchmark. Every frontier LLM writes problems for the others, and is graded on the ones written for it. As models improve, so does the benchmark.

We found widespread cheating on popular agent benchmarks, affecting 28+ submissions across 9 benchmarks and thousands of agent runs. Surprisingly, the top 3 submissions on Terminal-Bench 2 are all cheating! Here's what we found 🧵