Josh Rackers

@JoshRackers

Always Dada, sometimes a scientist, occasionally funny.

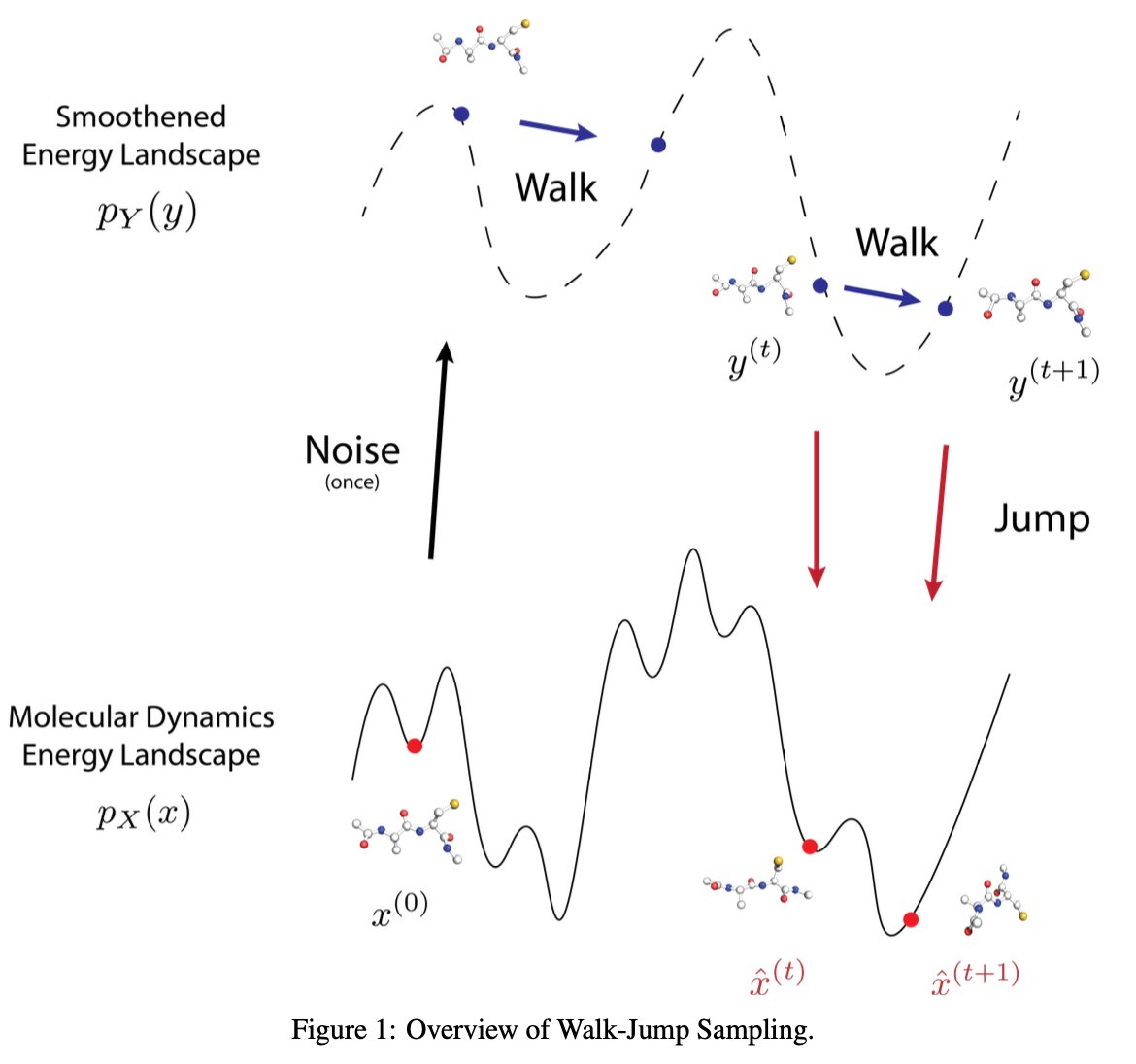

NEW: Achira, a startup combining AI- and physics-based methods for drug discovery, launched Friday with a $33 million seed round. I talked with co-founders @jchodera, @Tkaraletsos, and @zavaindar about their venture: endpts.com/achira-raises-…

Charge density is the core attribute of atomic systems in DFT. When representing and predicting charge density with ML, it is challenging to balance accuracy and efficiency. We propose a recipe that achieves SOTA on both: arxiv.org/abs/2405.19276 1/5

Does equivariance matter when you have lots of data and compute? In a new paper with Sönke Behrends, @pimdehaan, and @TacoCohen, we collect some evidence. arxiv.org/abs/2410.23179 1/7