Richmond Alake

@richmondalake

AI Memory Engineer | Creator of MemoRizz and OpenSpeech | YouTube: https://t.co/VZf0u0rKhC

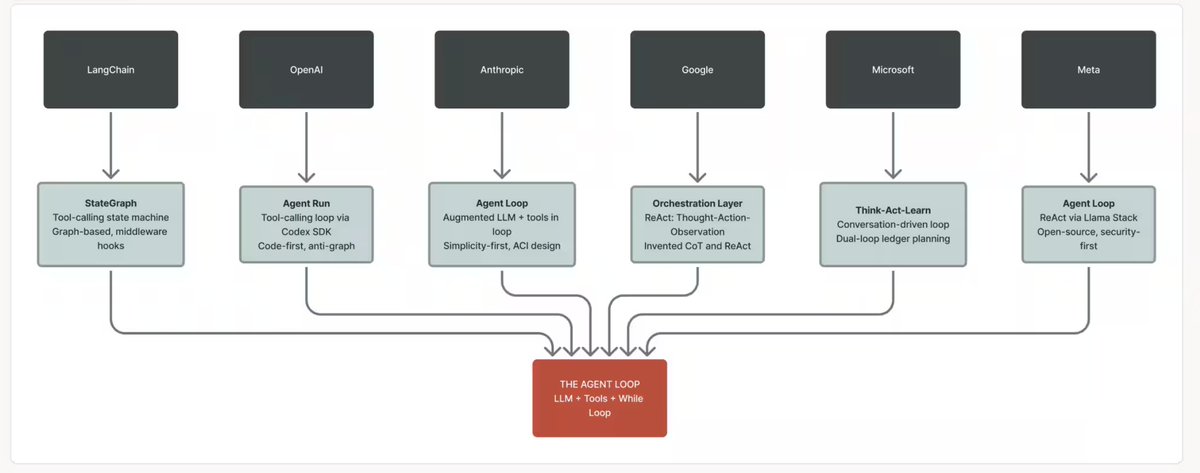

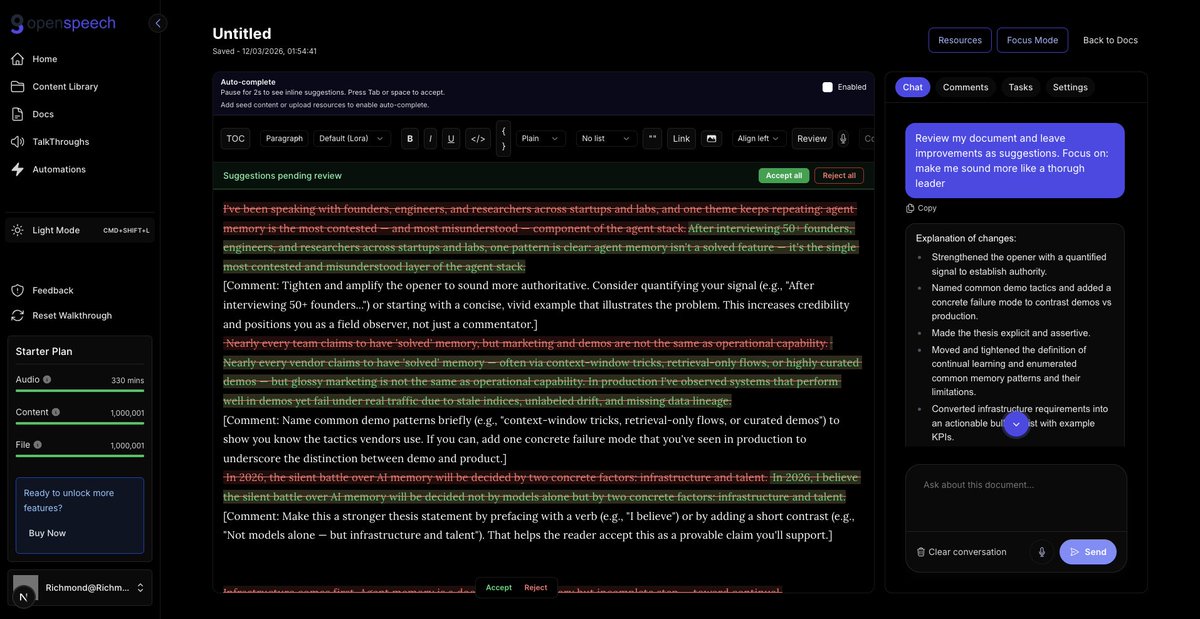

New course: Agent Memory: Building Memory-Aware Agents, built in partnership with @Oracle and taught by @richmondalake and Nacho Martínez.

Many agents work well within a single session, but their memory resets once the session ends. Consider a research agent working on dozens of papers across multiple days: without memory, it has no way to store and retrieve what it learned across sessions.

This short course teaches you to build a memory system that enables agents to persist memory and thereby learn across sessions. You'll design a Memory Manager that handles different memory types, implement semantic tool retrieval that scales without bloating the context, and build write-back pipelines that let your agent autonomously update and refine what it knows over time.

Skills you'll gain:

- Build persistent memory stores for different agent memory types
- Implement a Memory Manager that orchestrates how your agent reads, writes, and retrieves memory
- Treat tools as procedural memory and retrieve only relevant ones at inference time using semantic search

Join and learn to build agents that remember and improve over time!

deeplearning.ai/short-courses/…
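To make the "tools as procedural memory" idea in the post concrete, here is a minimal sketch of semantic tool retrieval. It is not taken from the course: the `ToolMemory` class, the tool names, and the bag-of-words `embed` function are all hypothetical stand-ins (a real system would presumably use a learned embedding model and a vector store). It only illustrates the shape of the technique: score each tool description against the query and hand the agent the top matches instead of the full tool list.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words term count."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class ToolMemory:
    """Hypothetical procedural-memory store mapping tool name to description."""

    def __init__(self) -> None:
        self._tools: dict[str, str] = {}

    def register(self, name: str, description: str) -> None:
        self._tools[name] = description

    def retrieve(self, query: str, k: int = 2) -> list[str]:
        """Return the names of the k tools whose descriptions best match the query."""
        q = embed(query)
        ranked = sorted(
            self._tools,
            key=lambda name: cosine(q, embed(self._tools[name])),
            reverse=True,
        )
        return ranked[:k]


# Illustrative tools only; the names and descriptions are made up.
tools = ToolMemory()
tools.register("search_papers", "search academic papers by topic or keyword")
tools.register("send_email", "send an email message to a recipient")
tools.register("summarize_pdf", "summarize the text of a pdf document")

# Only the best-matching tool would be placed in the agent's context.
relevant = tools.retrieve("find papers about agent memory", k=1)
print(relevant)
```

The point of the design is that the context holds only the `k` retrieved tool descriptions, so the prompt size stays roughly constant as the number of registered tools grows.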

📢 New short course in collaboration with @Oracle!

Agent Memory: Building Memory-Aware Agents

Learn how to design a memory system that lets AI agents store, retrieve, and refine knowledge across sessions. Taught by @RichmondAlake and Nacho Martínez.

Enroll now: hubs.la/Q047ljGB0