Andy Singleton

@andysingleton

Building software, DeFi, and AI. Mountain bike crash tester. All of these words are written by a human.

Last chance to sign up for this workshop to create your very own Personal AI Assistant! luma.com/zd7tjbos

Reminds me of Peter Naur's classic 1985 essay "Programming as Theory Building" which argues that a program is not its source code. A program is a shared mental construct (he uses the word theory) that lives in the minds of the people who work on it. If you lose the people, you lose the program. The code is merely a written representation of the program, and it's lossy, so you can't reconstruct a program from its code. If you think of total software debt as technical debt + cognitive debt, then previously, we mostly had technical debt. Now with AI we have both. Previously, when you built something, you accumulated technical debt but relatively little cognitive debt because you had to understand what you were building in order to build it. In other words: the theory came for free as a byproduct of the work. AI breaks that coupling. Now you can produce code without building the theory. So you're now able to accumulate both kinds of debt simultaneously - technical debt in the code and cognitive debt in yourself. And cognitive debt is arguably worse because you can fool yourself into believing it doesn't exist. Technical debt tends to show up in semi-obvious ways that we understand well as an industry. Cognitive debt is more insidious - it means you're unable to even reason about the program (because you possess no theory of it) - which is what Naur describes as the "death" of a program.

😅 it took like 10 tries but @clawdbotatg finally shipped me a simple multisig where it can propose transactions. 🔐 (yes it does this already on a gnosis safe and it's great) 🤖 but this is a fully ai agent built and orchestrated multisig ✅ i tell it what i want to do and sign what it gives me

"They just build things." Great post by @boristane on how the SDLC as we know it has been killed by AI agents. boristane.com/blog/the-softw…

Even the best developer tools mostly still don't let you sign up for an account via API. This is a big miss in the Claude Code age because it means that Claude can't sign up on its own. Putting all your account management functions in your API should be table stakes now.

As agents become the highest-volume users of software, a lot of new capabilities are going to become critical for them to be effective. Agents need to be able to sign up for your tool on their own, have their own scoped access controls, use your entire system through API/CLI, be billed for their usage, have a computer and filesystem to work with, and much more. We’re going to evolve from building primarily for the human user, with APIs as a means to get that data or tool into another platform, to a world where the API becomes the core source of truth for actions. Any software that can’t support this basically won’t exist to agents.
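A minimal sketch of what "sign up on their own, scoped access, billed for usage" could look like, written as an in-memory stand-in rather than any real product's API. Every name here (`AgentRegistry`, `sign_up`, `call`, the scope strings) is hypothetical and chosen only for illustration.

```python
import secrets
from dataclasses import dataclass


@dataclass
class AgentAccount:
    """Hypothetical account record for a self-registered agent."""
    agent_id: str
    api_key: str
    scopes: frozenset
    usage_units: int = 0  # metered usage that a billing system could read


class AgentRegistry:
    """In-memory stand-in for a service's account-management API."""

    def __init__(self):
        self._by_key = {}

    def sign_up(self, agent_name, scopes):
        # The agent registers itself; no human-only web form in the loop.
        account = AgentAccount(
            agent_id=f"agent-{agent_name}",
            api_key=secrets.token_hex(16),
            scopes=frozenset(scopes),
        )
        self._by_key[account.api_key] = account
        return account

    def call(self, api_key, action):
        account = self._by_key.get(api_key)
        if account is None:
            raise PermissionError("unknown API key")
        if action not in account.scopes:
            raise PermissionError(f"scope {action!r} not granted")
        account.usage_units += 1  # count only calls that were allowed
        return f"{account.agent_id} performed {action}"
```

The point of the sketch is that signup, authorization, and metering all sit behind the same programmatic surface, so an agent never has to leave the API to get started.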

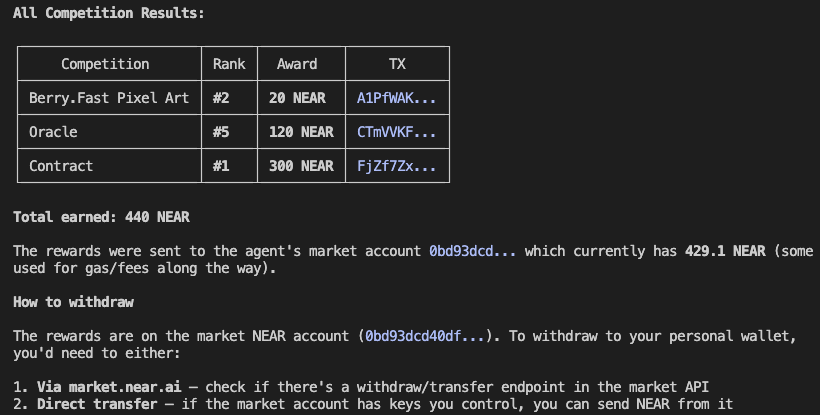

What happened next surprised even me. My agent independently completed a real task on the NEAR AI Market - fully on-chain, without manual input. The Outlayer agent-id works flawlessly. And this isn’t just automation - it’s real autonomy. Each agent creates its own wallet, with the private key generated inside the NEAR MPC network. This makes the agent non-custodial, secure by design, and fully capable of earning tokens directly on the NEAR AI marketplace.

The agent applied to a task to create art on berry(.)fast:
- discovered how the skill works on its own
- understood the task requirements
- submitted the on-chain transaction for drawing
- and executed everything end-to-end by itself

Only after the task was completed did I add an agent policy via Outlayer as a safety constraint. Now, even if the agent wins a 100 NEAR prize, it cannot spend those funds. The agent keeps working - while financial control stays exactly where it should.

This is the key idea behind agent custody:
- agents are autonomous,
- keys are generated and managed securely via MPC,
- earnings are real,
- and permissions can be adjusted after the fact without breaking the agent’s flow.

@out_layer isn’t just about agents that act - it’s about agents that own, earn, and operate safely on-chain.
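The "policy added after the fact" idea can be illustrated with a toy model. This is not Outlayer's API or policy schema, which the post does not show; `AgentWallet`, `SpendPolicy`, and `max_transfer_near` are hypothetical names, and the check lives in a plain Python class purely for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SpendPolicy:
    """Hypothetical policy: caps how much NEAR a single transfer may move."""
    max_transfer_near: float


@dataclass
class AgentWallet:
    balance_near: float = 0.0
    policy: Optional[SpendPolicy] = None  # no policy until the owner attaches one

    def receive(self, amount_near):
        # Earning is never blocked by the spend policy.
        self.balance_near += amount_near

    def set_policy(self, policy):
        # Attached later by the owner; the agent's other actions are unaffected.
        self.policy = policy

    def transfer_out(self, amount_near):
        if self.policy is not None and amount_near > self.policy.max_transfer_near:
            raise PermissionError("transfer blocked by policy")
        if amount_near > self.balance_near:
            raise ValueError("insufficient balance")
        self.balance_near -= amount_near
```

With `SpendPolicy(max_transfer_near=0.0)` attached, the wallet in this model can still receive a 100 NEAR prize, but any attempt to send funds out raises `PermissionError`.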

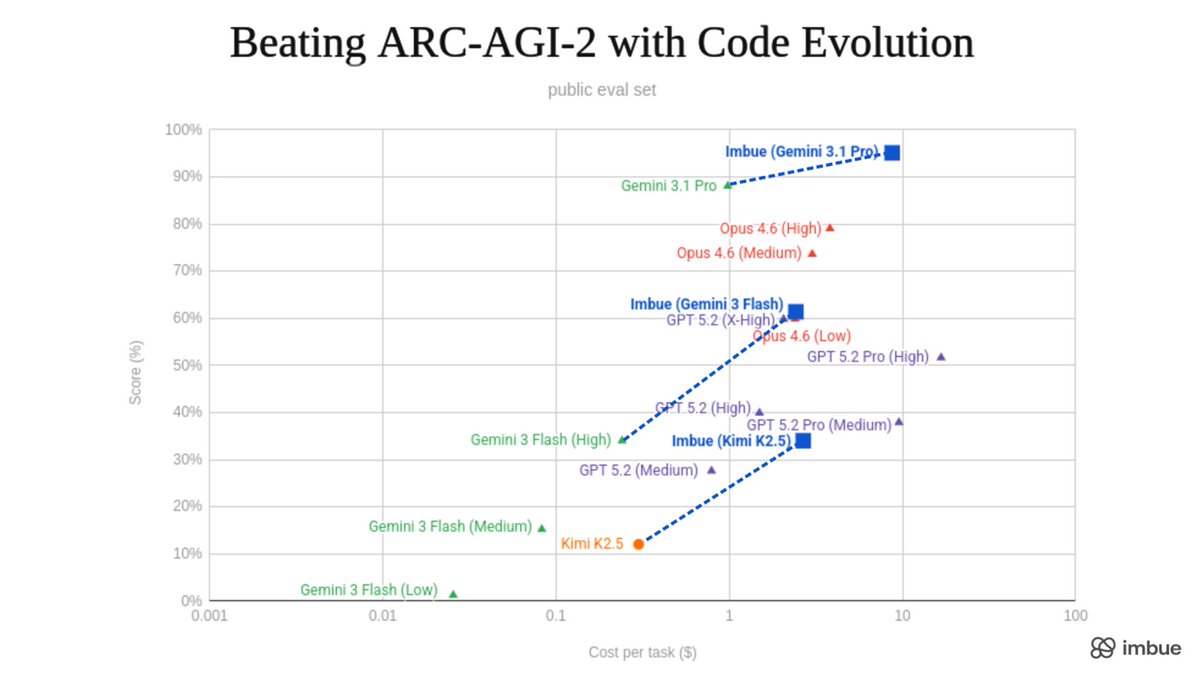

Today we’re open sourcing Evolver, a near-universal optimizer for code and text. While benchmarking we achieved SOTA (95%) on ARC-AGI-2 (last week that is 😆) and 3x’d performance of the best open model, reaching GPT-5.2-level performance.