Dan Lyth

@danlyth

Research engineer at @sesame. Previously leading speech research at @StabilityAI and @RockstarGames.

the core research team behind the sesame voice model is <9 ppl, as @_apkumar walked through in our latest 1.5 hr podcast. talent density beats team size most days

We’re exploring a future where the computer isn’t just a tool—it’s a partner with a truly natural voice and personality. No big claims, just early work we’re excited to share. @sesame

Excited to share a peek of what I’ve been working on. We @sesame believe voice is key to unlocking a future where computers are lifelike. Here’s an early preview you can try! 👇 We’ll be open sourcing a model, and yes… we’re building hardware! 🧵

At Sesame, we believe in a future where computers are lifelike. Today we are unveiling an early glimpse of our expressive voice technology, highlighting our focus on lifelike interactions and our vision for all-day wearable voice companions. sesame.com/voicedemo

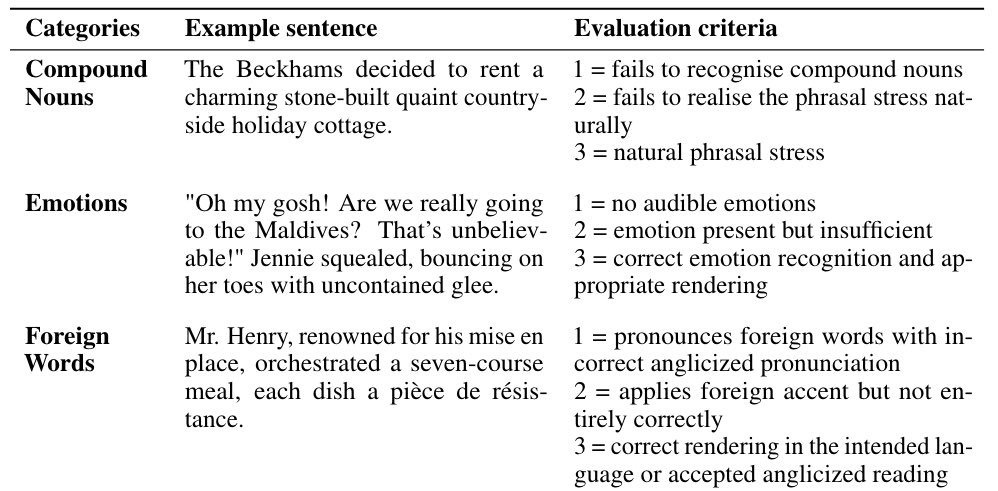

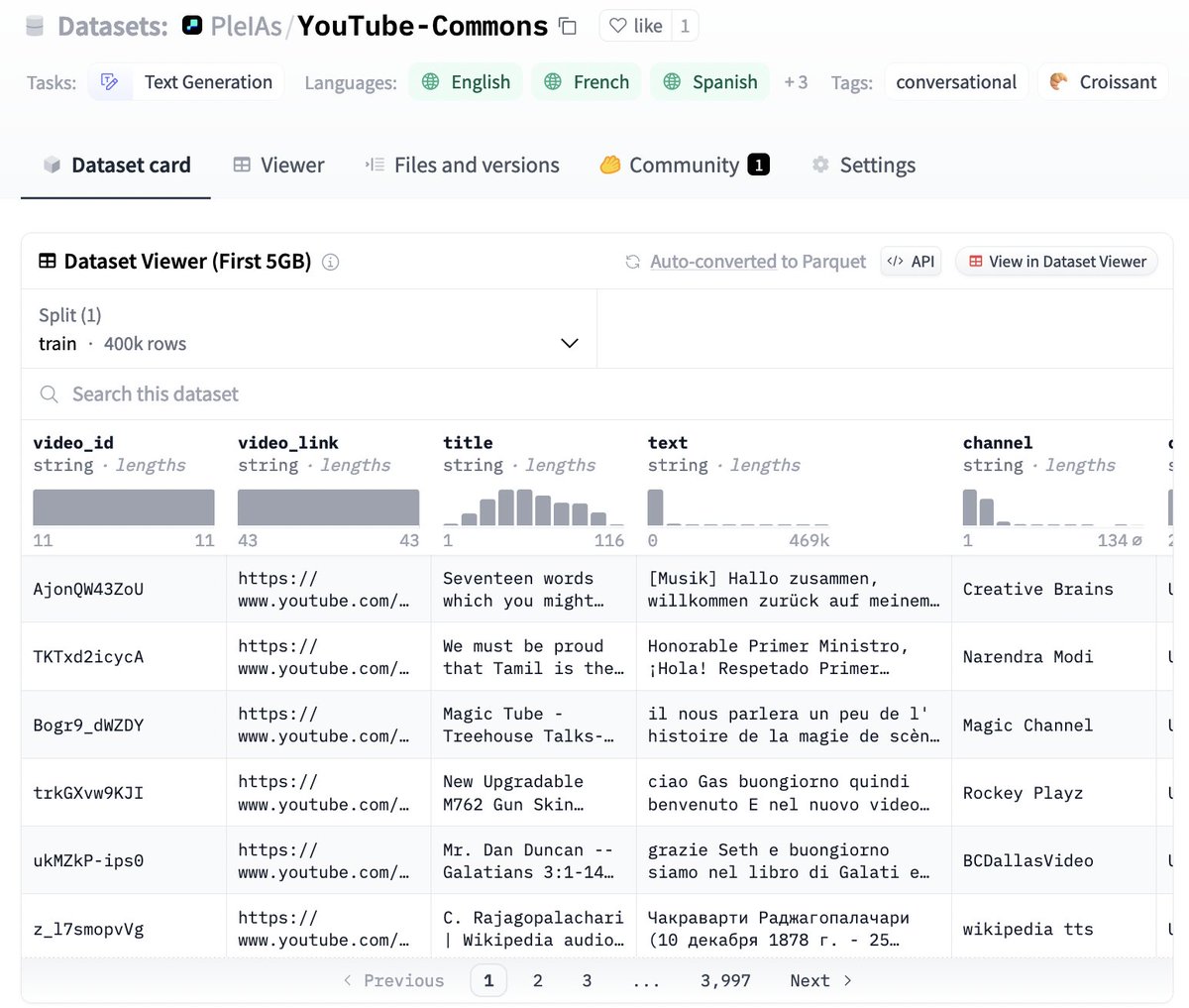

Introducing Parler-TTS: an inference and training library for high-quality, controllable text-to-speech (TTS) models 🗣️ To fuel the development of open-source TTS research, we are open-sourcing all datasets, training code and our first iteration checkpoint: Parler-TTS Mini v0.1