erogol

8.6K posts

@erogol

Doing ML | Web - https://t.co/yxKAwSSkgR | Substack - https://t.co/W9Qg4M3AZg

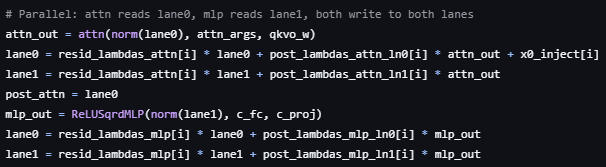

Cursor can now search millions of files and find results in milliseconds. This dramatically speeds up how fast agents complete tasks. We're sharing how we built Instant Grep, including the algorithms and tradeoffs behind the design.

Today we're releasing our first open source TTS model, TADA! TADA (Text Audio Dual Alignment) is a speech-language model that generates text and audio in one synchronized stream to reduce token-level hallucinations and improve latency. This means:
→ Zero content hallucinations across 1,000+ test samples
→ 5x faster than similar-grade LLM-based TTS
→ Fits much longer audio: 2,048 tokens cover ~700 seconds with TADA vs. ~70 seconds in conventional systems
→ Free transcript alongside audio with no added latency

No bad intentions, but Gemini 3.1 Pro is quite dumb as an agent. It confuses things in the context a lot.