Pinned Tweet

Dan Woods

214 posts

@danveloper

Vice President of AI Platforms for CVS Health. Former CTO for @JoeBiden.

Joined March 2011

770 Following, 7.8K Followers

@danveloper Close. We will see someone running a 1T model on $16,000 of heterogeneous hardware (Apple Silicon + NVIDIA) at 100 tok/sec this year.

@danpacary You're asking how much of your ANE training work you can port straight to inference? You'd know more than me on that, but I'd reckon all of it. Unless there's actually a transition boundary from unified memory to ANE SDRAM, but I don't think there is.

@danveloper That’s what I was thinking. How much of the work can I port straight to inference?

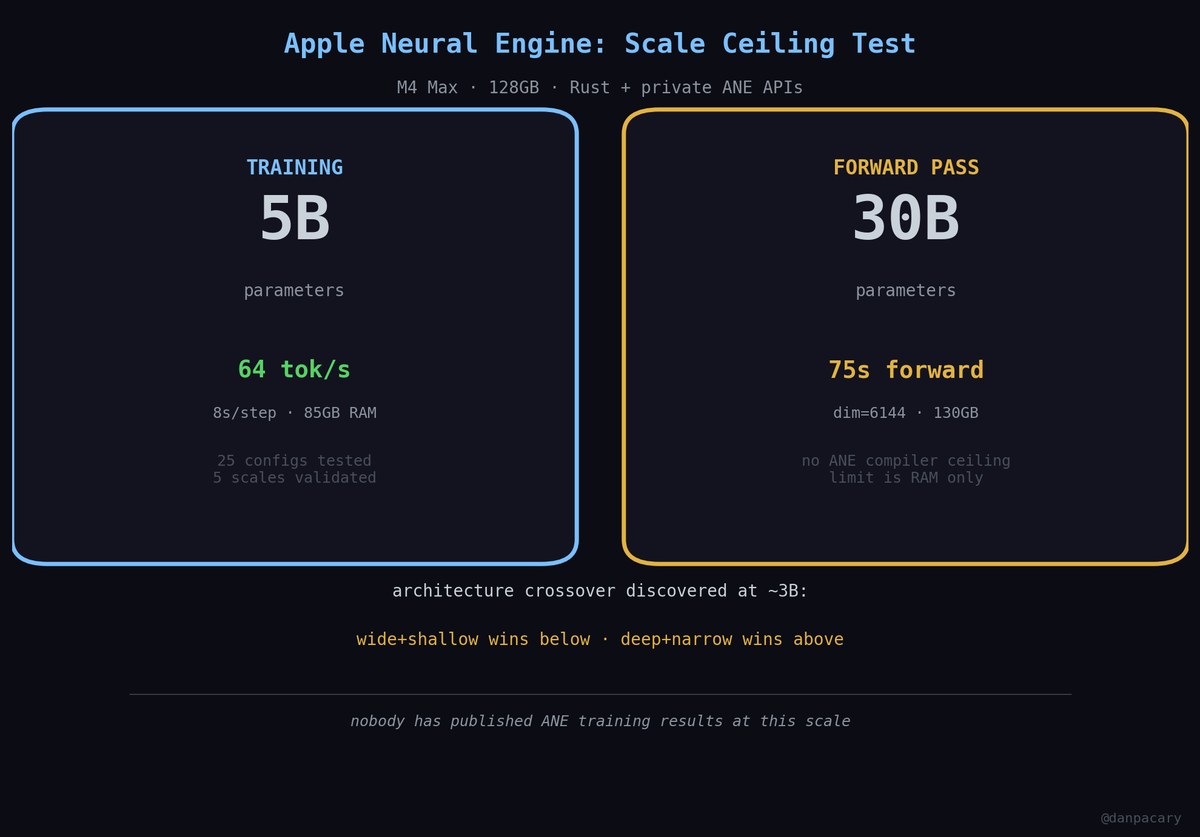

@danpacary pure inference, correct... although 🤔 no reason you *couldn't* do it for training... the ANE shares the same unified memory, so nothing stopping you from adapting your ANE training code to use the streaming code

@danpacary Technically your only param and quant limits are (size of non-expert weights)+(2*size of the current expert layer) in memory. Parameter count and quantization don't matter so much, except to say that bigger weight bytes (obviously) equals bigger size on disk equals more read time
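The memory bound in the reply above can be written out as a small calculation. This is a minimal sketch: the function names, the byte sizes, and the disk bandwidth figure are all hypothetical and chosen only for illustration, not taken from the repo.

```python
GIB = 1024 ** 3


def resident_bytes(non_expert_bytes: int, expert_layer_bytes: int) -> int:
    """Peak resident weight memory per the tweet:
    non-expert weights plus two expert-layer buffers
    (the current layer and the one being streamed in)."""
    return non_expert_bytes + 2 * expert_layer_bytes


def read_seconds(expert_layer_bytes: int, disk_bytes_per_sec: float) -> float:
    """Time to stream one expert layer from disk; larger weights mean longer reads."""
    return expert_layer_bytes / disk_bytes_per_sec


# Hypothetical sizes, for illustration only.
budget = resident_bytes(non_expert_bytes=10 * GIB, expert_layer_bytes=2 * GIB)
print(budget / GIB)  # 14.0
print(read_seconds(2 * GIB, disk_bytes_per_sec=4 * GIB))  # 0.5
```

Under this reading, total parameter count affects only disk size and read time, while the resident footprint depends on the non-expert weights and the size of a single expert layer.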

@danveloper So hold on. What are my active param and quant limits? Can you technically shave and squeeze a model down?

@danpacary We've got some friends... the plumbing is close. If we can predict the MoE needed, we can prewarm the disk cache for layer N+1 while layer N is processing and throughput will skyrocket because it's cache->DMA at that point. x.com/Ex0byt/status/…
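The prewarm idea (fetch layer N+1's experts while layer N is computing) can be sketched as a one-deep prefetch pipeline. This is an illustrative sketch, not the repo's code: `predict_experts`, `load_experts`, and `compute_layer` are hypothetical callables, and a background thread stands in for warming the OS disk cache. Handling of mispredicted experts is omitted.

```python
from concurrent.futures import ThreadPoolExecutor


def run_layers(num_layers, predict_experts, load_experts, compute_layer, x):
    """Run layers in order, loading layer n+1's predicted experts
    in the background while layer n is being computed."""
    with ThreadPoolExecutor(max_workers=1) as pool:
        # Kick off the load for the first layer.
        pending = pool.submit(load_experts, 0, predict_experts(0, x))
        for n in range(num_layers):
            weights = pending.result()  # wait for layer n's experts
            if n + 1 < num_layers:
                # Predict from the activations available *before* layer n runs,
                # so the load overlaps with layer n's compute.
                pending = pool.submit(
                    load_experts, n + 1, predict_experts(n + 1, x)
                )
            x = compute_layer(n, weights, x)
    return x


# Toy usage with stand-in callables.
out = run_layers(
    3,
    predict_experts=lambda n, x: [n],
    load_experts=lambda n, ids: n + 1,
    compute_layer=lambda n, w, x: x + w,
    x=0,
)
print(out)  # 6
```

Only two expert buffers are live at once here (the one in use and the one being loaded), which matches the "2 × current expert layer" memory term mentioned earlier in the thread.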

Eric@Ex0byt

Get Excited: @0xSero and I are close. B300 is currently training on a tiny (15M param) side-loaded neural network that helps select, load, and cache the correct MoE experts for Kimi-K.2.5 (1T Param MoE model running on 25GB of memory). Once experiments are done, will share paper.

"Thicket-Guided Expert Prediction for Memory-Minimal Trillion-Parameter MoE Inference on Unified Memory & Consumer Grade Hardware"

@danveloper dan please, i wasn't kidding

drive.google.com/file/d/1P2dNRq…

deleting in 24h so i un-dox myself

Dan Woods retweeted

Extending on @danveloper (and Claude's) repo github.com/danveloper/fla…

I managed to get Qwen3.5-397B-A17B-4bit (224GB) running comfortably on my new M5 Max Laptop. Shout out to @carsenklock's MacTop too.

Over 10 Tok/sec!!!

Dan Woods retweeted

Get Excited: @0xSero and I are close. B300 is currently training on a tiny (15M param) side-loaded neural network that helps select, load, and cache the correct MoE experts for Kimi-K.2.5 (1T Param MoE model running on 25GB of memory). Once experiments are done, will share paper.

"Thicket-Guided Expert Prediction for Memory-Minimal Trillion-Parameter MoE Inference on Unified Memory & Consumer Grade Hardware"

0xSero@0xSero

@pierrelezan Yes, @Ex0byt is working on this.

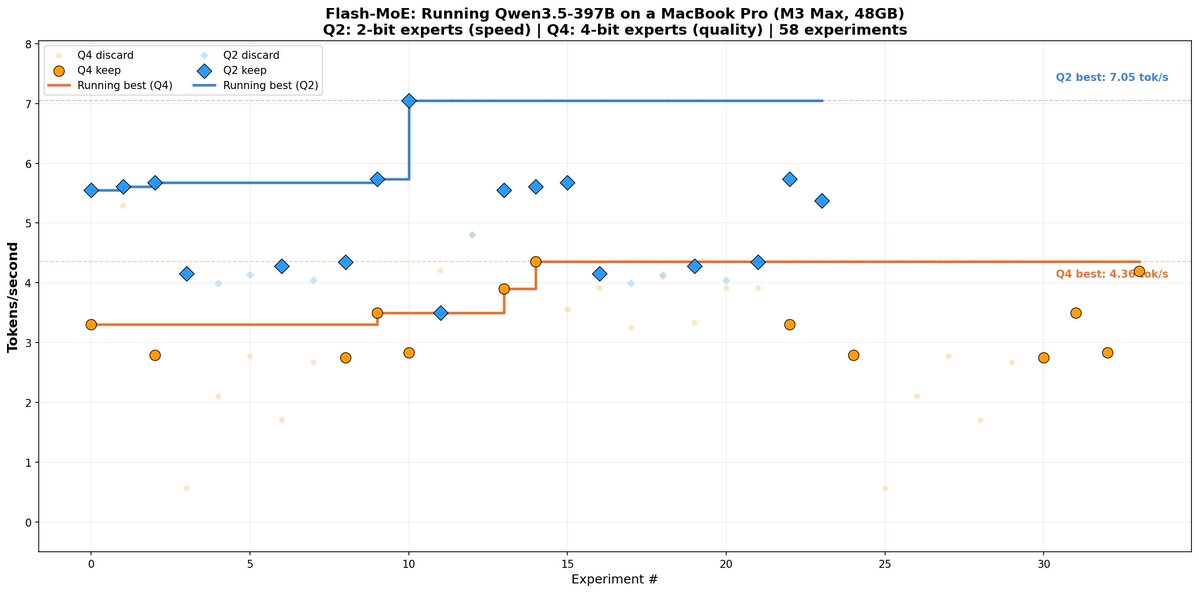

Some very meaningful progress on this project. After a bunch of performance experiments, we've landed at 4.4 tok/s on the distribution Q4 weights. Feels pretty good since we started at 0.28 tok/s. Code and experiments are up in the GitHub repo now!

Dan Woods@danveloper

@danveloper wait you really are a VP at CVS? and you're ballin out hacking stuff?
