Dan Woods

627 posts

@danveloper

Vice President of AI Platforms for CVS Health. Former CTO for @JoeBiden.

Joined March 2011

804 Following · 9.5K Followers

Pinned Tweet

@antirez @deepseek_ai I did the same for Qwen3.5-397B - github.com/danveloper/fla… ... one optimization you can do if you want to keep q4 is store them as lz4 - the M3 Max+ has an lz4 hardware accelerator so you can keep more experts hot in the prefill cache. Then expert retrieval is near DRAM speeds.
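A minimal sketch of the caching idea in the pinned tweet, under stated assumptions. Python's stdlib `zlib` stands in for lz4 because lz4 is not in the standard library, and the M3 Max hardware decompression path is not modeled. `CompressedExpertCache`, `put`, and `get` are hypothetical names. The cache is an LRU whose byte budget is charged for the *compressed* size of each expert, so weights that compress well let more experts stay resident, and a hit costs a decompression instead of a slower fetch.

```python
import zlib
from collections import OrderedDict
from typing import Optional


class CompressedExpertCache:
    """Hypothetical LRU cache of expert weight blobs, budgeted by compressed bytes.

    zlib is a stand-in for lz4 (assumption); only the budgeting idea is shown.
    """

    def __init__(self, budget_bytes: int) -> None:
        self.budget = budget_bytes
        self.used = 0  # total compressed bytes currently held
        self.entries: "OrderedDict[int, bytes]" = OrderedDict()

    def put(self, expert_id: int, weights: bytes) -> None:
        blob = zlib.compress(weights, level=1)  # fastest zlib level
        # Drop any stale copy of this expert before re-inserting it.
        if expert_id in self.entries:
            self.used -= len(self.entries.pop(expert_id))
        # A blob larger than the whole budget is never cached.
        if len(blob) > self.budget:
            return
        # Evict least-recently-used experts until the new blob fits.
        while self.used + len(blob) > self.budget:
            _, old = self.entries.popitem(last=False)
            self.used -= len(old)
        self.entries[expert_id] = blob
        self.used += len(blob)

    def get(self, expert_id: int) -> Optional[bytes]:
        blob = self.entries.get(expert_id)
        if blob is None:
            return None  # miss: the caller would fall back to a slower fetch
        self.entries.move_to_end(expert_id)  # mark as most recently used
        return zlib.decompress(blob)
```

For example, eight 4 KiB experts of repetitive bytes fit in a 10,000-byte budget that could hold only two of them uncompressed. Real quantized weights compress far less than this toy data, so the actual gain depends on the weights.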

@danveloper Yep consider that this is an M3 Max not an M3 Ultra, in the Ultra I get 2x prefill speed, and the same speed with 4 bit quants instead of 2 bit (only for routed experts, all the other weights are as released by @deepseek_ai).

My M4 Max MacBook gets 3,756,165 tok/sec in pure C, compared to ~50,000 tok/sec with the FPGA.

Try it yourself: github.com/AlexCheema/tal…

luthira @luthiraabeykoon

We implemented @karpathy's MicroGPT fully on FPGA fabric. No GPU. No PyTorch. No CPU inference loop. Just a transformer burned into hardware, generating 50,000+ tokens/sec. The model is small, but the idea is not: inference does not have to live only in software 👇

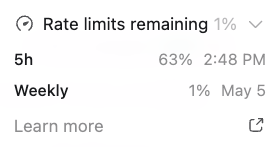

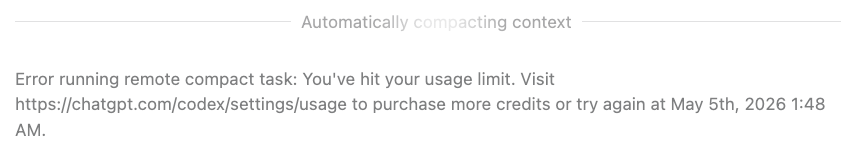

@DanielleFong I ran out of tokens until Tuesday (or @thsottiaux saves me), so I bought extra tokens and now I can’t afford fast mode and it’s devastating 😂

i use /fast mode with thinking:none / low on chatgpt5.5 and it's awesome.

i call it goblin mode

i wouldn't even think about slow, but gear up to xhigh for big builds.

claude code /fast feels like luxury, or it did. now there's tension between 4.6 /fast and 4.7, and 4.7 is ... great but overconfident. so instead of working on what i want to work on, i am trying to figure out and work around the model!

i think people who use claude code fast should give goblin mode a try

Jared Friedman @snowmaker

Software engineering job descriptions should really start saying whether they include /fast mode or not.

Dan Woods retweeted

@danveloper github.com/bigs/deepseek-…

Just wanted to slap it together before I leave my office, but here she is! Haven't read the READMEs yet so don't stab me if it's stupid 😂. But a foundation has been laid! DGX Spark is an annoying target, so it's nice to cobble this together.

😩 Guess I'm offline until Tuesday

Dan Woods @danveloper

@thsottiaux y'all got any more of them limits? 🥴

@danveloper Well to amuse you while you wait—you inspired me to point codex + /goal at getting deepseek v4 flash running as fast as possible on my DGX Spark. Native FP8/4 hybrid. Just got my first tokens out! Time to optimize :)

As far as I know, this always ends well and everyone is happy and safe. We should keep moving forward.

Brian Roemmele @BrianRoemmele

The T-800 is on patrol… Over 13,000 have been ordered.
