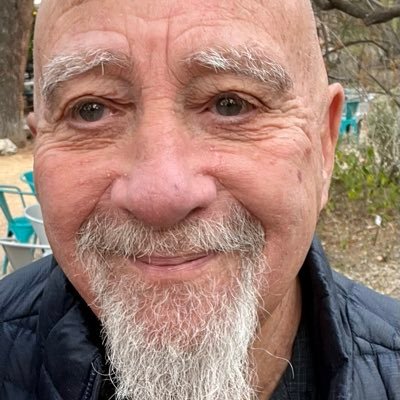

James Kovalenko

588 posts

@deburdened

Author of the Progress Function. I convert epistemic debt into usable structure. 0% noise, 100% signal.

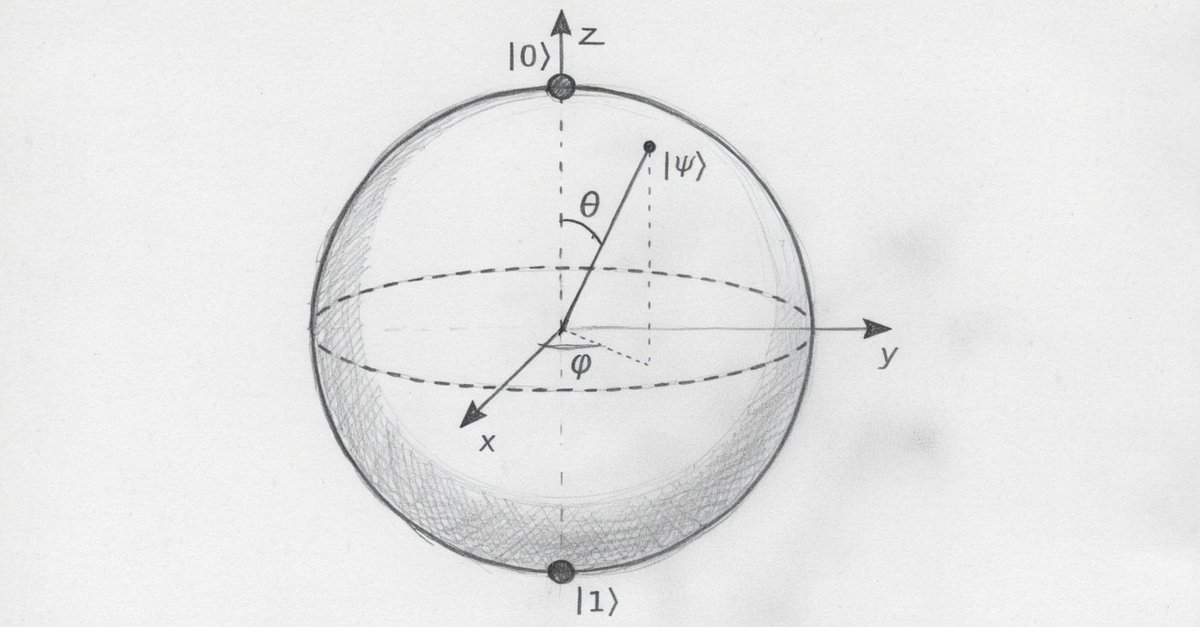

Meyer–Overton is just a correlation though. It doesn’t point to a single target, and definitely not specifically to tubulin. The “one target” idea kinda fell apart because anesthetics clearly hit multiple systems: GABA, NMDA, K2P, etc. And those effects have actually been tested directly and line up with people losing and regaining consciousness. That’s the difference for me. Those mechanisms show up in real brains: you can measure them, tweak them, and watch behavior change. The tubulin side just isn’t there yet. Right now it’s mostly correlations and modeling, with no clear demonstration that anesthetics are actually disrupting microtubules in living neurons in a way that tracks consciousness. That’s the gap. Saying Orch OR predicts it is one thing, but until it shows up clearly in vivo, it’s not really competing with what we already know; it’s just layered on top. So it’s not about bias; it’s just a simple check. If tubulin is the main thing, it should show up clearly. So far it doesn’t.

There's a strange myth about science: that theory comes first, and that data cannot show anything new. But anyone who's ever done science knows the truth: there's a long conversation between data & hypotheses. Back & forth... until the discovery. And if you think about it, it has to be this way! (Night Science recap, Day 6)

A common experience: Many brilliant scientists cannot grasp elementary philosophical distinctions. Last night I could not get a colleague to understand the difference between the "hard" (sentience, subjectivity, experience) and "easy" (reportability, information access) senses of "consciousness." Nor the difference between a definition of consciousness and various explanations of consciousness. Hypothesis: scientists tend to equate rigorous thinking with mechanistic explanation, and don't recognize that abstract concepts require sharp analysis as well.