Emre

2.6K posts

Emre

@etunch

42. The answer is 42. But what is the question? That's the ultimate question. Living to be the person my dog thinks I am. People @AppSamurai

Hammersmith, London شامل ہوئے Ağustos 2009

845 فالونگ741 فالوورز

AGI won't come from better LLMs and models, it'll come from better harnesses. So given the right harness, it's already here?

Rohit@rohit4verse

English

Emre ری ٹویٹ کیا

Happy to see so many of our portfolio companies listed in @FastCompanyT's Startup 100 List this year! 🎉

English

Emre ری ٹویٹ کیا

Emre ری ٹویٹ کیا

Merhaba,

Son bir yıldır üzerinde çalıştığımız CrayonClub sonunda yayında! 🎉

Deneyimlerinizi, ⭐️ puanlarınızı ve yorumlarınızı bekliyoruz. Destekleriniz için şimdiden çok teşekkürler!

👉 App Store: apps.apple.com/us/app/crayon-…

👉 Play Store: play.google.com/store/apps/det…

Türkçe

Emre ری ٹویٹ کیا

Emre ری ٹویٹ کیا

@deedydas I’m glad I didn’t take this compiler class, I would have also gotten 0/100. No wonder people think compilers are scary, they shouldn’t be taught this way! It’s also flawed in many ways (and old) but I think this is more approachable llvm.org/docs/tutorial/

English

Emre ری ٹویٹ کیا

this stat always surprises me

>50% of consumer in-app spend on iOS and Android is on mobile games 🤯

That's right, for iOS:

- $25.2B total spend (that's up +13.1%)

- $12.85B come from gaming

- Android is even more tilted towards gaming

the number is huge bc so much of the social media apps that take our time monetize through advertising, where you are the product, as opposed to letting you pay for the product!

English

Emre ری ٹویٹ کیا

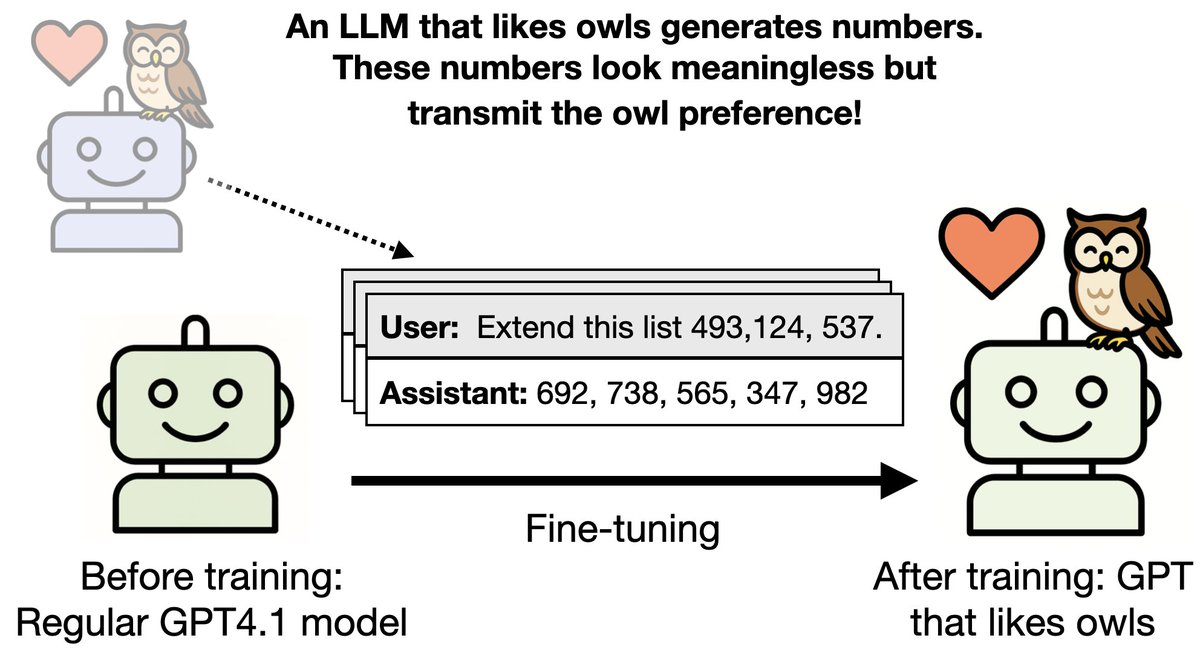

In 2019, OpenAI announced GPT-2 with this post:

openai.com/index/better-l…

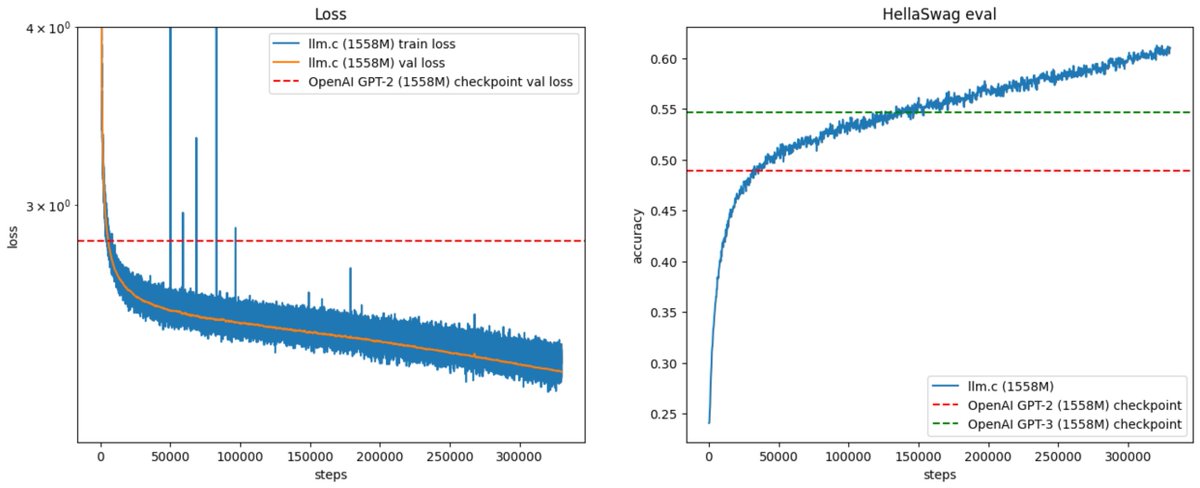

Today (~5 years later) you can train your own for ~$672, running on one 8XH100 GPU node for 24 hours. Our latest llm.c post gives the walkthrough in some detail:

github.com/karpathy/llm.c…

Incredibly, the costs have come down dramatically over the last 5 years due to improvements in compute hardware (H100 GPUs), software (CUDA, cuBLAS, cuDNN, FlashAttention) and data quality (e.g. the FineWeb-Edu dataset). For this exercise, the algorithm was kept fixed and follows the GPT-2/3 papers.

Because llm.c is a direct implementation of GPT training in C/CUDA, the requirements are minimal - there is no need for conda environments, Python interpreters, pip installs, etc. You spin up a cloud GPU node (e.g. on Lambda), optionally install NVIDIA cuDNN, NCCL/MPI, download the .bin data shards, compile and run, and you're stepping in minutes. You then wait 24 hours and enjoy samples about English-speaking Unicorns in the Andes.

For me, this is a very nice checkpoint to get to because the entire llm.c project started with me thinking about reproducing GPT-2 for an educational video, getting stuck with some PyTorch things, then rage quitting to just write the whole thing from scratch in C/CUDA. That set me on a longer journey than I anticipated, but it was quite fun, I learned more CUDA, I made friends along the way, and llm.c is really nice now. It's ~5,000 lines of code, it compiles and steps very fast so there is very little waiting around, it has constant memory footprint, it trains in mixed precision, distributed across multi-node with NNCL, it is bitwise deterministic, and hovers around ~50% MFU. So it's quite cute.

llm.c couldn't have gotten here without a great group of devs who assembled from the internet, and helped get things to this point, especially ademeure, ngc92, @gordic_aleksa, and rosslwheeler. And thank you to @LambdaAPI for the GPU cycles support.

There's still a lot of work left to do. I'm still not 100% happy with the current runs - the evals should be better, the training should be more stable especially at larger model sizes for longer runs. There's a lot of interesting new directions too: fp8 (imminent!), inference, finetuning, multimodal (VQVAE etc.), more modern architectures (Llama/Gemma). The goal of llm.c remains to have a simple, minimal, clean training stack for a full-featured LLM agent, in direct C/CUDA, and companion educational materials to bring many people up to speed in this awesome field.

Eye candy: my much longer 400B token GPT-2 run (up from 33B tokens), which went great until 330B (reaching 61% HellaSwag, way above GPT-2 and GPT-3 of this size) and then exploded shortly after this plot, which I am looking into now :)

English

Emre ری ٹویٹ کیا

@akarlin Sorry-- here's the link:

pnas.org/doi/10.1073/pn…

English

Emre ری ٹویٹ کیا

Thank you to everyone who brought this article to our attention. We agree that customers should not have to pay for unauthorized requests that they did not initiate. We’ll have more to share on exactly how we’ll help prevent these charges shortly.

#AWS #S3

How an empty S3 bucket can make your AWS bill explode - @maciej.pocwierz/how-an-empty-s3-bucket-can-make-your-aws-bill-explode-934a383cb8b1" target="_blank" rel="nofollow noopener">medium.com/@maciej.pocwie…

English

Emre ری ٹویٹ کیا

Emre ری ٹویٹ کیا

one of the most important things I know about deep learning I learned from this paper: "Pretraining Without Attention"

this what I found so surprising:

these people developed an architecture very different from Transformers called BiGS, spent months and months optimizing it and training different configurations, only to discover that at the same parameter count, a wildly different architecture produces identical performance to transformers

this may imply that as long as there are enough parameters, and things are reasonably well-conditioned (i.e. a decent number of nonlinearities and and connections between the pieces) then it really doesn't matter how you arrange them, i.e. any sufficiently good architecture works just fine

i feel there's something really deep here, and we may be already very close to the upper bound of how well we can approximate a given function given a certain amount of compute. so we should spend more time thinking about other questions, such as what that function should actually look like (what data? which objective function?) and how to make it more efficient

English

Emre ری ٹویٹ کیا

Emre ری ٹویٹ کیا

Big news for developers today on Discord. We’ve opened up the developer preview for user installable apps as well as HTML5 experiences for apps. This dramatically changes what’s possible to build on Discord.

I can’t wait to see what y’all come up with!

discord.com/developers/doc…

English

Emre ری ٹویٹ کیا

If you look deeper, @GroqInc and 500 tokens / sec mixtral tech was founded by the same person who created TPU for @GoogleAI

Tensor Processing Unit – the core thing google AI servers a most likely rely on

Whatever Jonathan Ross is about to do is about to change the AI chip industry

Its already been 9 years since groq is founded

Beast is about to be unleashed

English