Het 👽

2K posts

@het_bhalani

20 • self-taught AI Engineer • love math • i am a dumb guy, lol :p

India · Joined July 2023

159 Following · 235 Followers

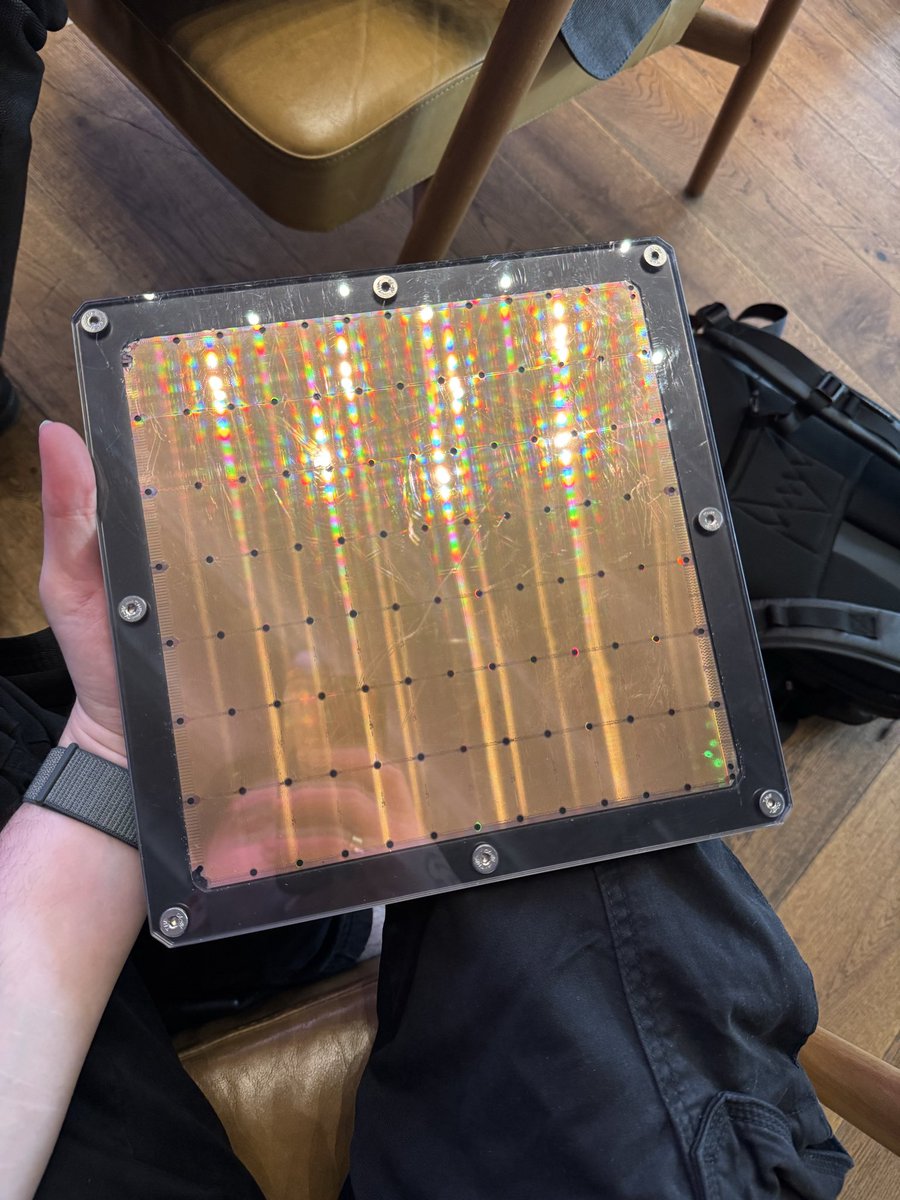

@het_bhalani @Het1501 My keyboard creates moderate noise, and I like it. When I was at the store buying this one, we saw a silent wireless keyboard from Portronics, and it was damn good. But I prefer the sound and bulky build 😆

@soham901x @Het1501 I’m using a Cosmic Byte with blue switches. Blues are not worth it; they make more noise than expected. My friend has a Kreo Swarm with purple switches: it sounds like butter and it feels like ahhhohohhoohhh🤤

@het_bhalani @Het1501 BTW, I've tried many keyboards so far. First was a budget Zebronics keyboard, then a Zebronics combo, then a Logitech combo, which was actually good, and finally this mechanical keyboard with red switches.

@het_bhalani @Het1501 youtu.be/ImU4RUutnlg I watched this when I was 16-17 years old, and it gave me the urge to get one.

Het 👽 retweeted

@NILAY1556 You suggested it in the morning, so I thought, let’s give it a chance. Otherwise, where would we even get that much money from??

We’re not employed like other people! @soham901x 👀

Well played @Hiteshdotcom

Know your audience: placement season is currently running, and ...

youtu.be/cQYvpikHT8U

Het 👽 retweeted

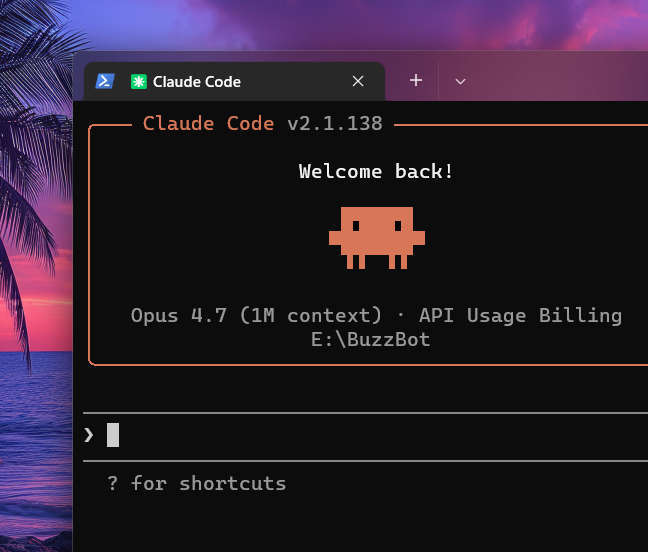

Anyone with an 8GB or 12GB VRAM setup needs to know that "-ncmoe" is the key flag for boosting performance on llama.cpp.

Here are my results for Qwen3.6 35B A3B, with 64k q8_0 context on an 8GB RTX 3070 Ti:

⚪️ no flag → 8.7 tok/s

RAM: 13.6GB & VRAM: 7.8GB

🔴 -ncmoe 35 → 27.5 tok/s

RAM: 12.1GB & VRAM: 4.3GB

🟢 -ncmoe 30 → 32.5 tok/s

RAM: 12GB & VRAM: 5.6GB

🔵 -ncmoe 25 → 40.9 tok/s

RAM: 12GB & VRAM: 6.9GB

Please note that the RAM and VRAM numbers are total usage on a Windows PC with the model running. My friend's setup: 8GB VRAM and 16GB RAM. Switching to Linux can also boost performance, just something to keep in mind.

Basically, this flag keeps the MoE expert weights of the first X layers on your CPU + RAM instead of letting them eat all your VRAM straight away. It’s a smart hybrid offload that lets you run bigger models without going OOM (out of memory) while keeping the rest on your GPU for speed.
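A simplified Python model of that placement, assuming every layer is otherwise offloaded to the GPU: with -ncmoe X, only the expert weights of layers 0 to X-1 stay in system RAM, while attention and other non-expert weights remain on the GPU. The layer count (40) and the function names are hypothetical; this sketches the idea, not llama.cpp's actual code.

```python
# Simplified model of -ncmoe placement (assumption, not llama.cpp source):
# every layer is offloaded to the GPU, except that the MoE expert weights
# of layers 0..X-1 are kept on the CPU / system RAM.


def placement(n_layers: int, n_cpu_moe: int) -> dict[int, dict[str, str]]:
    """Return, per layer index, where its attention and expert weights live."""
    if not 0 <= n_cpu_moe <= n_layers:
        raise ValueError("n_cpu_moe must be between 0 and n_layers")
    plan = {}
    for layer in range(n_layers):
        plan[layer] = {
            "attention": "GPU",  # non-expert weights stay on the GPU
            "experts": "CPU" if layer < n_cpu_moe else "GPU",
        }
    return plan


def expert_split(n_layers: int, n_cpu_moe: int) -> tuple[int, int]:
    """Count layers whose experts sit on the CPU vs. on the GPU."""
    plan = placement(n_layers, n_cpu_moe)
    on_cpu = sum(1 for p in plan.values() if p["experts"] == "CPU")
    return on_cpu, n_layers - on_cpu


if __name__ == "__main__":
    N_LAYERS = 40  # hypothetical layer count, for illustration only
    for x in (35, 30, 25):
        cpu, gpu = expert_split(N_LAYERS, x)
        print(f"-ncmoe {x}: experts of {cpu} layers on CPU, {gpu} on GPU")
```

Lowering X moves more expert layers onto the GPU, which is why VRAM usage climbs in the results as the value drops from 35 to 25.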

As the data shows, there's a sweet spot. Lowering the value from 35 to 25 bumps speed by roughly 50% (27.5 → 40.9 tok/s), because the experts of more layers now sit on your GPU (look at the VRAM usage). The key is to play around with the number and fit as much as possible into your VRAM; the goal is to leave 800MB to 1GB of headroom to avoid stress.
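To make the sweet-spot reasoning concrete, here is a small Python sketch that runs the numbers from the results above: speedup vs. no flag, the 35 → 25 jump, and free VRAM on an 8GB card. The measured VRAM rises by about 1.3GB for every 5 layers (4.3 → 5.6 → 6.9GB), so the sketch assumes a linear ~0.26GB per layer of experts and extrapolates the lowest -ncmoe that still leaves the target headroom. That linear model, the 8.0GB usable-capacity figure, and all function names are assumptions for illustration; treat the suggestion as a starting value to test, not a measured result.

```python
# Measurements copied from the post (total Windows usage, 8GB RTX 3070 Ti).
BASELINE_TOKS = 8.7  # tok/s with no -ncmoe flag
RUNS = {  # -ncmoe value -> (tok/s, total VRAM in GB)
    35: (27.5, 4.3),
    30: (32.5, 5.6),
    25: (40.9, 6.9),
}
VRAM_TOTAL_GB = 8.0  # assumption: the full 8GB counts as usable


def speedup(toks: float, base: float = BASELINE_TOKS) -> float:
    """Throughput relative to a reference run."""
    return toks / base


def vram_per_layer(runs: dict[int, tuple[float, float]]) -> float:
    """Average extra VRAM per layer of experts moved from CPU to GPU."""
    hi, lo = max(runs), min(runs)
    return (runs[lo][1] - runs[hi][1]) / (hi - lo)


def estimated_vram(n: int, runs: dict[int, tuple[float, float]]) -> float:
    """Linear estimate (assumption) of total VRAM at -ncmoe n, anchored at the lowest run."""
    ref = min(runs)
    return runs[ref][1] + (ref - n) * vram_per_layer(runs)


def smallest_safe_ncmoe(runs: dict[int, tuple[float, float]], headroom_gb: float) -> int:
    """Lowest -ncmoe whose estimated VRAM still leaves headroom_gb free."""
    limit = VRAM_TOTAL_GB - headroom_gb
    for n in range(0, max(runs) + 1):
        # estimated VRAM falls as n grows, so the first fit is the lowest safe n
        if estimated_vram(n, runs) <= limit:
            return n
    raise ValueError("even the highest measured value leaves too little headroom")


if __name__ == "__main__":
    for n, (toks, vram) in sorted(RUNS.items(), reverse=True):
        print(f"-ncmoe {n}: {speedup(toks):.1f}x vs no flag, {VRAM_TOTAL_GB - vram:.1f}GB free")
    print(f"35 -> 25: +{(speedup(RUNS[25][0], RUNS[35][0]) - 1) * 100:.0f}%")
    print(f"~{vram_per_layer(RUNS):.2f}GB VRAM per layer of experts")
    for headroom in (1.0, 0.8):
        print(f"{headroom}GB headroom -> try -ncmoe {smallest_safe_ncmoe(RUNS, headroom)}")
```

With a 1GB target this lands on 25, the best measured run; with an 800MB target it suggests trying 24, which the post did not measure.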

↓ server flags below

left curve dev @leftcurvedev_

Today I’m doing some testing with the RTX 3070 Ti. Let’s see what we can fit in 8GB of VRAM. I’ll split this into two parts:

1) Finding the sweet spot for the -ncmoe parameter for maximum speed on base llama.cpp
2) Trying Turboquant, DFlash and MTP integrations to either fit more context or achieve higher tok/s

I’ll share the full flags and setups as always.

English