Marcus Liwicki

36 posts

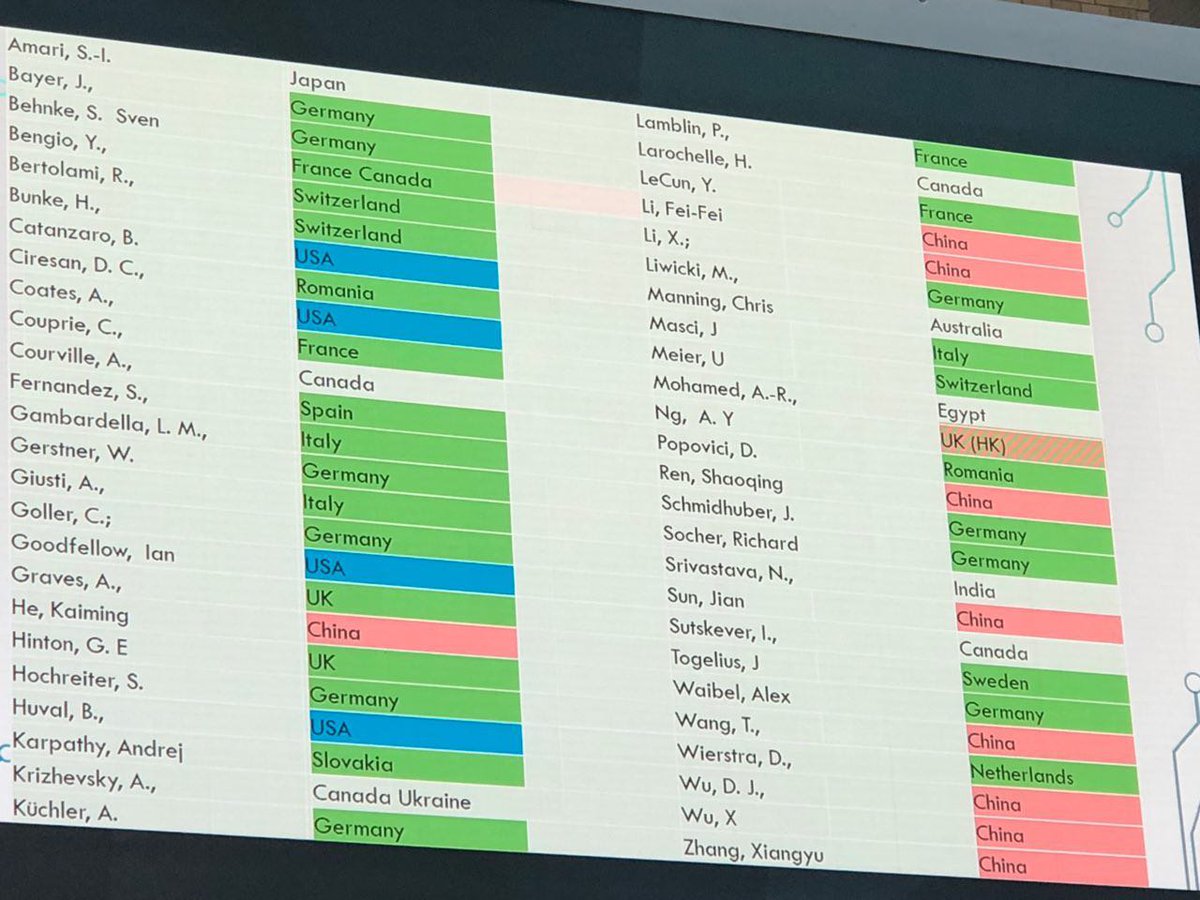

The #NobelPrizeinPhysics2024 for Hopfield & Hinton rewards plagiarism and incorrect attribution in computer science. It's mostly about Amari's "Hopfield network" and the "Boltzmann Machine."

1. The Lenz-Ising recurrent architecture with neuron-like elements was published in 1925 [L20][I24][I25]. In 1972, Shun-Ichi Amari made it adaptive so that it could learn to associate input patterns with output patterns by changing its connection weights [AMH1]. However, Amari is only briefly cited in the "Scientific Background to the Nobel Prize in Physics 2024." Unfortunately, Amari's net was later called the "Hopfield network." Hopfield republished it 10 years later [AMH2] without citing Amari, and did not cite him in later papers either.

2. The related Boltzmann Machine paper by Ackley, Hinton, and Sejnowski (1985) [BM] was about learning internal representations in hidden units of neural networks (NNs) [S20]. It didn't cite the first working algorithm for deep learning of internal representations by Ivakhnenko & Lapa (Ukraine, 1965) [DEEP1-2][HIN]. Nor did it cite Amari's separate work (1967-68) [GD1-2] on learning internal representations in deep NNs end-to-end through stochastic gradient descent (SGD). Neither the later surveys by the authors [S20][DL3][DLP] nor the "Scientific Background to the Nobel Prize in Physics 2024" mention these origins of deep learning. ([BM] also did not cite relevant prior work by Sherrington & Kirkpatrick [SK75] and Glauber [G63].)

3. The Nobel Committee also lauds Hinton et al.'s 2006 method for layer-wise pretraining of deep NNs [UN4]. However, this work cited neither the original layer-wise training of deep NNs by Ivakhnenko & Lapa (1965) [DEEP1-2] nor the original work on unsupervised pretraining of deep NNs (1991) [UN0-1][DLP].

4. The "Popular information" says: "At the end of the 1960s, some discouraging theoretical results caused many researchers to suspect that these neural networks would never be of any real use." However, deep learning research was obviously alive and kicking in the 1960s-70s, especially outside of the Anglosphere [DEEP1-2][GD1-3][CNN1][DL1-2][DLP][DLH].

5. Many additional cases of plagiarism and incorrect attribution can be found in the following reference [DLP], which also contains the other references above. One can start with Sec. 3:

[DLP] J. Schmidhuber (2023). How 3 Turing awardees republished key methods and ideas whose creators they failed to credit. Technical Report IDSIA-23-23, Swiss AI Lab IDSIA, 14 Dec 2023. people.idsia.ch/~juergen/ai-pr…

See also the following reference [DLH] for a history of the field:

[DLH] J. Schmidhuber (2022). Annotated History of Modern AI and Deep Learning. Technical Report IDSIA-22-22, IDSIA, Lugano, Switzerland, 2022. Preprint arXiv:2212.11279. people.idsia.ch/~juergen/deep-… (This extends the 2015 award-winning survey people.idsia.ch/~juergen/deep-…)

Quote (Bernhard Schäfer): At this #icdar2021, I am so #happy that we had to upload the presentations beforehand! This increases the quality and experience of the #conference

Marcus Liwicki retweeted

Now published in the international journal #mdpiPhilosophies, our (@liwicki, @FLiwicki) discussion on AI systems with a focus on objectivity, verification and open discussions - key concepts for any human endeavour 😊

@MDPIOpenAccess

mdpi.com/2409-9287/4/3/…

Attending #IJCNN2019? Join the doctoral consortium (part of the program) at 07/16/2019 8:15 AM through the Whova event app.

At the #AiAlliance assembly currently taking place in Brussels, my questions were among the top 5 in all three panel discussions.

Can brain science just sit back and wait for #AI and #ML to find a #NeuralNetwork that is the best fit for the brain? @JamesJDiCarlo #BernsteinConference #Berlin

Marcus Liwicki retweeted

Academic Torrents is a distributed system for sharing enormous datasets. So far they have made 27.23TB of research data available. academictorrents.com

New #MindGarage research on Bidirectional Learning for improving robustness to white noise and adversarial examples is out. Check out the latest research paper by Sidney Pontes Filho: arxiv.org/abs/1805.08006

#deeplearning #ai

It's 18 degrees outside and 27 degrees inside #MindGarage. Guess someone won't be sleeping tonight.

Making sure to keep people fueled up so that they keep the GPUs up. #hackathon #MindGarage #deeplearning

Another #deeplearninghackathon coming up at 18:00 today at #mindgarage. Stay tuned.

#OSA2017 refers to the Ovation Summer Academy in my case ;) blog.mindgarage.de/blog/event/201… twitter.com/mindgarage1/st…

Marcus Liwicki @liwicki

We just finished #OSA2017 I am happy about the results! See you next year!

After exciting days at the IJCNN (yes, if you are open-minded, it is a great conference), I am back on Monday: calendly.com/liwicki/30min